The electron

Understanding what the electron might actually be is probably key to understanding all of physics. You have been taught to think of electrons as having no components or substructure but that is nonsense. You should also separate the idea of the electron and its charge: the two concepts are related – very much so, of course – but not quite the same. The difference has to do with the two different radii of the electron. Let us start the discussion with that.

The Compton versus the Thomson radius of an electron

From photon scattering experiments, we know photons scatter either elastically or inelastically, and these two processes are associated with two different radii of the electron. When photons scatter off an electron elastically, the wavelength of the incoming and outgoing photon remains unchanged. In contrast, Compton scattering does involve a wavelength change and, therefore, a more complicated interaction between the photon and the electron. To be specific, we think of the photon as being briefly absorbed, before the electron emits another photon of lower energy. Let us elaborate on this.

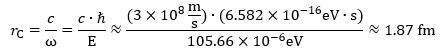

Elastic scattering is referred to as Thomson scattering, and the effective scattering radius is associated with the classical electron radius re = α·rC ≈ 2.818 femtometer (1 fm = 10⁻¹⁵ m). The classical electron radius – which is also referred to as the Thomson or Lorentz radius – is related to the larger radius (the Compton radius rC) by the fine-structure constant α ≈ 0.0073.

From the laws of energy and momentum conservation, one can easily show that inelastic scattering – Compton scattering, in other words – obeys the following formula (see, for example, Prof. Dr. Patrick R. LeClair’s rather instructive lecture on it):

Δλ/2π = (1 − cosθ)·rC = (1 − cosθ)·ħ/mc ≈ (1 − cosθ)·0.386×10⁻¹² m (0.386 picometer)

Here Δλ is the change in the wavelength of the photon, so Δλ/2π is the change in its reduced wavelength. We effectively interpret the Compton radius or – multiplied by a factor 2 or 2π – the Compton diameter, circumference or wavelength (λC = 2π·rC) as the scale of the electron in a particle-like sense.
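As a rough numerical check on the radii quoted above, the short Python sketch below computes the Compton radius, the classical electron radius and the Compton wavelength, and evaluates the scattering formula for a given angle. The CODATA 2018 constants and the function names are assumptions introduced here for illustration. The shift is computed for the reduced wavelength (Δλ/2π), which is the reading that gives a right-hand side in metres.

```python
import math

# CODATA 2018 values (assumed inputs, not quoted in the text)
HBAR = 1.054571817e-34    # reduced Planck constant, J*s
C = 299792458.0           # speed of light, m/s
M_E = 9.1093837015e-31    # electron mass, kg
ALPHA = 7.2973525693e-3   # fine-structure constant


def compton_radius(mass_kg):
    """Reduced Compton wavelength r_C = hbar / (m c), in metres."""
    return HBAR / (mass_kg * C)


def reduced_wavelength_shift(theta_rad, mass_kg=M_E):
    """Change in the photon's reduced wavelength: (1 - cos(theta)) * r_C."""
    return (1.0 - math.cos(theta_rad)) * compton_radius(mass_kg)


r_c = compton_radius(M_E)       # Compton radius, about 0.386 pm
r_e = ALPHA * r_c               # classical (Thomson) radius, about 2.818 fm
lambda_c = 2 * math.pi * r_c    # Compton wavelength, about 2.426 pm

print(f"Compton radius     r_C = {r_c:.4e} m")
print(f"Classical radius   r_e = {r_e:.4e} m")
print(f"Compton wavelength     = {lambda_c:.4e} m")
print(f"Shift at 90 degrees    = {reduced_wavelength_shift(math.pi / 2):.4e} m")
```

At θ = 90° the shift equals rC itself, and at θ = 180° (back-scattering) it reaches its maximum of 2·rC.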

In contrast, we think of the photon itself as being pointlike. Indeed, unlike an electron, a photon does not carry any charge, and the electric and magnetic field vectors that describe it (E and B) will be zero at each and every point in time and in space except if our photon happens to be at the (x, y, z) location at time t.

So what is the electron, then? We think of it as an oscillating charge. Erwin Schrödinger, who stumbled upon the idea while exploring solutions to Dirac’s wave equation for the electron, referred to this oscillatory motion as a Zitterbewegung: Zitter means trembling or shaking. Other authors refer to it as the toroidal ring model, and you can google many variants. I actually added to the confusion by inventing yet another term for it – the oscillator model – but I now refer to it as the ring current model. A ring current is an electric current trapped in a magnetic field and, yes, that’s how one might think of an electron—although we will make some remarks on that particular interpretation of the model.

Let us, by way of an introduction to the model, quote Dirac’s summary of Schrödinger’s discovery:

“The variables give rise to some rather unexpected phenomena concerning the motion of the electron. These have been fully worked out by Schrödinger. It is found that an electron which seems to us to be moving slowly, must actually have a very high frequency oscillatory motion of small amplitude superposed on the regular motion which appears to us. As a result of this oscillatory motion, the velocity of the electron at any time equals the velocity of light. This is a prediction which cannot be directly verified by experiment, since the frequency of the oscillatory motion is so high and its amplitude is so small. But one must believe in this consequence of the theory, since other consequences of the theory which are inseparably bound up with this one, such as the law of scattering of light by an electron, are confirmed by experiment.” (Paul A.M. Dirac, Theory of Electrons and Positrons, Nobel Lecture, December 12, 1933)

We think this quote summarizes both the nature of the model as well as the main experimental evidence for it rather well. Dirac effectively refers to the dual radius of an electron here. Indeed, a description of the electron in terms of a core charge zittering around some center does not only offer an elegant explanation of the electron’s wave-particle duality – thereby explaining interference without having to resort to mystical blah blah (the Copenhagen interpretation of quantum mechanics) – but, as we will show in the next section, it also offers an intuitive explanation of all of the intrinsic properties of an electron, including its dual radius.

The ring current model is actually older than Schrödinger’s discovery. Indeed, we do not know if Schrödinger and Dirac were aware of the electron model which was developed by the British chemist and physicist Alfred Lauck Parson in 1915. He referred to it as the magneton model, for magnetic electron. Unfortunately, Bohr subsequently used the term magneton to refer to something entirely different: we will come back to that when discussing the magnetic moment of an electron.

In any case, if you are interested in the history of these models, we refer you to the writings of Oliver Consa (April 2018), who developed his own version of it: the Helical Solenoid Model of the Electron (http://www.ptep-online.com/2018/PP-53-06.PDF). While we are on the subject, we also recommend Consa’s article on the development of mainstream quantum mechanics. Its title says enough: Something is rotten in the state of QED (https://vixra.org/pdf/2002.0011v1.pdf). We will, effectively, be presenting a non-mainstream explanation for the anomalous magnetic moment of an electron – not using quantum field theory and all of the hocus-pocus that is related to it – so we warmly recommend reading Consa’s article as background material.

[…]

We should now proceed to the model itself. However, before we do so, we should probably preempt likely criticism from the more informed reader, who will want to hear about scattering cross-sections rather than radii. That is fine. A cross-section is a surface area. In our physical interpretation of the classical electron radius, this surface area corresponds to the surface of a disk which – in this case – is equal to A = 2π·re² = 2π·(αħ/mc)² ≈ 49.9 fm². Now, quantum-mechanical calculations based on real-life experiments yield a Thomson scattering cross-section that is slightly larger. To be precise (we refer the less informed reader to the Wikipedia article on Thomson scattering for a brief overview of the matter), the Thomson scattering cross-section is equal to σ = (8π/3)·re² = (8π/3)·(αħ/mc)² ≈ 66.5 fm², so that’s 4/3 ≈ 1.333… times larger than our simple A = 2π·re² ≈ 49.9 fm² result.
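The two areas and their ratio are easy to reproduce. The sketch below is only a numerical check of the figures in the paragraph above; the CODATA 2018 value for the classical electron radius and the variable names are assumptions introduced here.

```python
import math

R_E = 2.8179403262e-15   # classical electron radius, m (CODATA 2018, assumed input)
FM2 = 1e-30              # one square femtometer, in m^2

disk_area = 2 * math.pi * R_E**2            # A = 2*pi*r_e^2, as used in the text
thomson_sigma = (8 * math.pi / 3) * R_E**2  # Thomson scattering cross-section

print(f"A     = {disk_area / FM2:.1f} fm^2")
print(f"sigma = {thomson_sigma / FM2:.1f} fm^2")
print(f"sigma / A = {thomson_sigma / disk_area:.4f}")
```

The ratio does not depend on the value of the radius: (8π/3)/(2π) is exactly 4/3.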

We don’t have any ready-made explanation for the 4/3 factor. As Feynman once famously wrote, when doing calculations like this, “we need not trust our answer to within factors like 2, π, etc.” We, therefore, think our answer is pretty good, and we trust the 4/3 factor can be explained very rationally. The ring current will generate an electromagnetic field, for example, and, hence, the effective radius of interaction between an electron and the photons used to probe it should, effectively, be somewhat larger than the radius of the ring current itself.

Let us now do what we wanted to do here, and that is to detail the electron model.

The ring current model of an electron

The illustration below captures the basics of the model.

We already mentioned we may imagine – as David Hestenes and other modern Zitterbewegung theorists do – the electron as a perpetual current in a superconducting ring. However, when everything is said and done, we need to answer Dr. Burinskii’s basic question: what keeps [the pointlike charge] in its circular orbit? Hence, we must, somehow, picture the underlying reality – or whatever you’d want to call it – as some centripetal force keeping the charge in some kind of orbit. We analyzed this force as a two-dimensional oscillation: we can, effectively, analyze the force in terms of two perpendicular or orthogonal components which, taken together, make up a two-dimensional oscillator. However, we must refer the reader to our paper(s) or our manuscript for more detail, because we do not want to be too long here.

Let us go over the basic equations. First, we should note that we do not need Maxwell’s equations to calculate the radius of this oscillation. We get it from (1) the Planck-Einstein relation (which we interpret as modeling a fundamental cycle in Nature), (2) the mass-energy equivalence relation (a relation which you should probably think of as summarizing relativity theory), and (3) the tangential velocity formula (which you should probably interpret as the idea of a circle):

E = ħ·ω, E = m·c² and c = a·ω, so that a = c/ω = c·ħ/(m·c²) = ħ/mc

The derivation is weird because it is so strangely straightforward. In fact, because it is so straightforward – no need to invoke Maxwell’s laws of electromagnetism here! – we think the oscillation (and, therefore, the force that drives it) is very fundamental. It is, in fact, the reason why we consider our model to be very different from that of Hestenes and other Zitterbewegung theorists. Let us repeat what we wrote to emphasize this point: we do not need the laws of electromagnetism to calculate the Compton radius of an electron. Our model, therefore, stands on its own.
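The three relations named above can be chained numerically. The sketch below assumes they take the form E = ħ·ω, E = m·c² and c = a·ω (the symbols a and ω, the function name and the CODATA 2018 constants are assumptions made here), and recovers a = ħ/mc for whatever mass is passed in.

```python
HBAR = 1.054571817e-34   # reduced Planck constant, J*s (CODATA 2018)
C = 299792458.0          # speed of light, m/s
M_E = 9.1093837015e-31   # electron mass, kg (CODATA 2018)


def ring_radius(mass_kg):
    """Radius a of the oscillation, obtained by chaining the three relations."""
    energy = mass_kg * C**2   # (2) mass-energy equivalence: E = m c^2
    omega = energy / HBAR     # (1) Planck-Einstein relation: E = hbar * omega
    return C / omega          # (3) tangential velocity: c = a * omega


a_electron = ring_radius(M_E)
print(f"a          = {a_electron:.6e} m")
print(f"hbar/(m c) = {HBAR / (M_E * C):.6e} m")
```

No electromagnetic constant (charge, permittivity or α) enters the calculation, which is the point the text makes. Doubling the mass halves the radius.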

We may even wonder: do we actually need the concept of mass in this derivation? The answer is: yes and no. We may think we don’t need the mass concept because the model is a wonderful application of Wheeler’s ‘mass without mass’ idea: all of the mass of the electron is nothing but the equivalent mass of the energy in the oscillation.

Having said that, we think the concept of mass is actually useful: how are you going to measure energy? It’s a derived concept: energy is force times a distance, and how do we measure the force? We need the concept of mass: mass is the inertia to a change in the state of motion – and, therefore, the energy state – of an object.

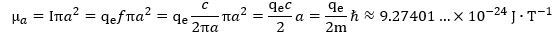

We also need the concept of mass in our derivation of the Compton radius because the formulas don’t tell us why an electron is what it is: the derivation above works for any mass m. Maxwell’s laws save us here, and then they don’t. They save us because a current ring of this size – with the charge moving at the speed of light – gives us the measured magnetic moment of an electron:

μ = I·πa² = q·f·πa² = q·(c/2πa)·πa² = q·c·a/2 = q·ħ/2m ≈ 9.274×10⁻²⁴ J·T⁻¹

So, yes, the electron is the electron because its mass is what it is, and that mass gives us the Compton radius, which happens to be the exact radius of a ring current that generates the electron’s magnetic moment.
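A minimal numerical sketch of this ring current follows. It assumes a pointlike charge q circling at radius a = ħ/mc with tangential velocity c, so that the current is I = q·f with f = c/(2πa). The CODATA 2018 constants and the variable names are assumptions introduced here.

```python
import math

Q = 1.602176634e-19          # elementary charge, C
HBAR = 1.054571817e-34       # reduced Planck constant, J*s
C = 299792458.0              # speed of light, m/s
M_E = 9.1093837015e-31       # electron mass, kg
MU_CODATA = 9.2847647043e-24 # measured electron magnetic moment (magnitude), J/T

a = HBAR / (M_E * C)             # Compton radius
f = C / (2 * math.pi * a)        # revolutions per second at tangential speed c
current = Q * f                  # I = q * f
mu_ring = current * math.pi * a**2   # mu = I * (area of the loop)

print(f"frequency f = {f:.4e} Hz")
print(f"current   I = {current:.2f} A")
print(f"mu (ring)   = {mu_ring:.10e} J/T")
print(f"q*hbar/(2m) = {Q * HBAR / (2 * M_E):.10e} J/T")
print(f"CODATA / ring value = {MU_CODATA / mu_ring:.8f}")
```

The ring value coincides with q·ħ/2m, and the measured value is larger by a factor of roughly 1.00116.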

At this point, we should talk about the so-called anomalous magnetic moment. The issue is this: the value above is very near but not quite equal to the CODATA value for the electron magnetic moment:

μr = μCODATA = 9.2847647043(28)×10⁻²⁴ J·T⁻¹

So, yes, there is a small anomaly in the magnetic moment, and so we need to explain it. Our explanation is easy: we don’t think of it as an anomaly. We think the Planck-Einstein relation is as absolute as relativity theory. However, our mathematical idealizations – the idea of a pointlike charge that has no spatial dimension whatsoever – are what they are: mathematical idealizations. Let us – before we move on to a discussion of the muon-electron – talk about that: how can we explain the anomaly? We have a very easy geometric explanation of it, which we will present in the next section.

Before we do so, however, we want to show you something weird: we can relate the Planck-Einstein relation to a relation which quantum physicists think is very important: the relation which defines the so-called gyromagnetic factor. Yes, we are talking about the rather (in)famous g-factor here. Let us insert a separate section on it.

Shapes, form factors and the gyromagnetic ratio

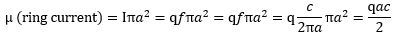

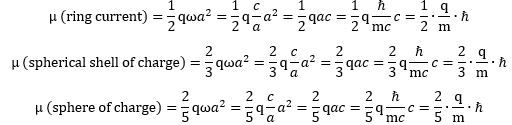

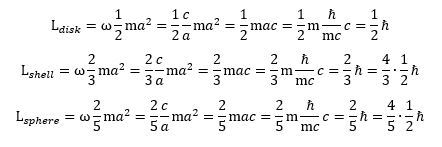

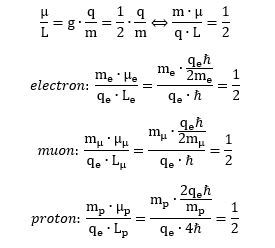

Let us look at that formula for the magnetic moment of our ring current once again:

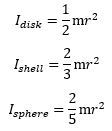

What if it is some other shape? We think the electron is a ring current, but let us try to think of some other shape. What formula do we get for the magnetic moment of a rotating sphere or shell of charge? We let you google these formulas. They look pretty much the same, except that we have to add what I refer to as a form or shape factor. Indeed, instead of a 1/2 factor in the formula, we should now get a 2/3 or 2/5 factor:

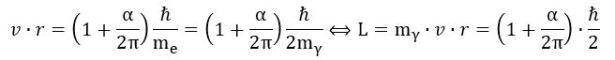

It’s the same form factor that you see in the formulas for the angular mass of an object – and please don’t confuse the symbol (I) with that for an electric current here (I):

You’ll say: why the 1/2 factor? Why the disk? The angular mass of a rotating point mass m is just I = mr², isn’t it? It is. The answer is complicated, and then not: the ring current model assumes half of the energy is kinetic – as reflected in what is referred to as the effective mass of the charge as it’s whizzing around at lightspeed. We denote it by mγ, and we write: mγ = me/2. Hence, we can re-write the Idisk = mr²/2 formula either as me·r²/2, or as mγ·r². Capito? Probably not. Let us, therefore, say a few more words on it. The concept of the effective mass is an entirely relativistic mass concept: we think the zbw charge has zero rest mass. In that sense, it makes one think of the concept of a photon but, while photons do carry electromagnetic energy, they don’t carry charge! In any case, you’ll wonder: where’s the other half then? That’s the energy in the force field: the centripetal force that keeps the zbw charge in place. By the way, this is also relativistic mass: it’s the equivalent mass of the energy in the oscillation.

In any case, we need to move on here, so we refer to our paper(s) on that. Let us do the next logical thing, and that’s to calculate angular momenta. These easy formulas may do the trick:

As you can see, angular momentum can be expressed in half or in full units of Planck’s (reduced) constant (ħ). I like to think that the Planck-Einstein relation tells us angular momentum should probably come in full units of ħ: the 1/2 factor is there because the energy equipartition theorem tells us to distinguish between the effective mass of the zbw charge and the equivalent mass of the force field that keeps it in place. However, as you have probably been brainwashed into believing the rather vague idea of an electron as a spin-1/2 particle, you may prefer the half-units. The half-units are a bit misleading though: the 4/5 factor for a sphere of charge suggests it has more angular momentum than a disk or a shell (for the same mass, the same charge and the same radius, that is) but that’s not the case: it has less (2/5 = 0.4). It’s the shell of charge that yields more angular momentum for a given mass, charge and radius.
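The factors mentioned above can be tabulated with exact fractions. The sketch assumes L = I·ω with I = k·m·a², ω = c/a and a = ħ/mc, so that m·a·c = ħ and L = k·ħ. The form factors k are the standard moment-of-inertia results for a disk, a thin shell and a solid sphere; the dictionary and function names are illustrative only.

```python
from fractions import Fraction

# Form factors k in I = k * m * r^2 (standard results from classical mechanics)
FORM_FACTORS = {
    "disk": Fraction(1, 2),
    "shell": Fraction(2, 3),
    "sphere": Fraction(2, 5),
}


def angular_momentum_units(shape):
    """Return L as (multiple of hbar, multiple of hbar/2) for the given shape."""
    k = FORM_FACTORS[shape]
    return k, 2 * k


for shape in FORM_FACTORS:
    full, half = angular_momentum_units(shape)
    print(f"{shape:6s}  L = {full} hbar = {half} x (hbar/2)")
```

In half-units the sphere comes out at 4/5, the disk at 1 and the shell at 4/3, which is the ordering described in the text.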

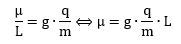

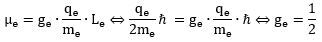

These ratios naturally lead to the idea of what physicists refer to as the gyromagnetic ratio. It relates the magnetic moment to the (assumed) angular momentum of an elementary particle. Assumed? Yes. You should always remember we cannot directly observe the shape of an elementary particle, nor can we measure its angular momentum: the only thing we can measure is its magnetic moment (and its mass, charge and effective scattering radius, of course). So let us jot down the formula:

The informed reader will immediately cry foul: the q/m factor misses a 1/2 factor. We should get Bohr’s magneton on the right-hand side of the equation, and Bohr’s magneton is equal to qħ/2m. You are right, and then you are not. The true answer is: it doesn’t matter because we get the 1/2 factor from measuring angular momentum in half-units of ħ. I prefer the measurement in full units, and so my g-factor is, effectively, half of the g-factor you’ll see elsewhere. Think about it for yourself: the q/m factor is much easier to understand – intuitively, that is – than a q/2m factor. Why? It’s just more natural: q/m is the charge per mass unit. A simple concept, right? It is some kind of natural unit, I would think. In contrast, what’s q/2m? I think of it as a confusing concept: it just adds to the mystery of quantum mechanics by leading us astray.

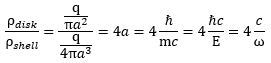

Now that we are thinking in terms of charge distributions and all that – the charge per unit mass gives us some kind of idea of the charge distribution, doesn’t it? – we may, perhaps, add some more geometric ideas here. Think about surface areas. The surface area of a current loop is given by the A = πr² formula, and the surface of a sphere is given by the S = 4πr² formula. The A/S ratio is, therefore, equal to 1/4. So if we think of a charge distribution over a surface, we get the following ratio:

Is this formula useful? Probably not. We just inserted it here because we will encounter a similar 1/4 or 4 factor when discussing the radius of the proton, and it may or may not be related to the form factor above. What does the formula tell us? Not all that much. It tells us that, for the same mass, the same charge and the same radius, we will get a different charge distribution for a disk versus a shell of charge. These distributions will differ by a factor of four: the charge per unit area of the disk (q/A) is four times that of the shell (q/S).

Let us not dwell on this. We need to move on. Let us discuss that rather infamous anomaly now.

The non-anomalous anomaly

As mentioned, we should not think of the anomaly as an anomaly. We think the zbw charge has a very small but non-zero spatial dimension: it is pointlike but in a physical sense only, not in a mathematical sense. We must, therefore, distinguish between (1) our mathematical idealizations (the theoretical a = ħ/mc radius and the theoretical velocity c) and (2) what we shall refer to as the effective radius r and the effective velocity v.

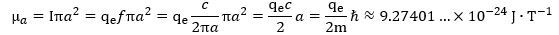

The effective radius r must be very near but not quite equal to the theoretical Compton radius a = ħ/mc, and the same must be true for the effective velocity: it will be very near but not equal to c. In our initial calculations, we thought of r and v being smaller than a = ħ/mc and c respectively. However, when we were reviewing our calculations while building this website, we realized we had made a small but significant mistake. The measured magnetic moment – that is its CODATA value, for all practical purposes – is actually larger than the theoretical magnetic moment we’d calculate from the current I = q·f = q·E/h and the radius a = ħ/mc. To be precise, this theoretical value, which we’ll denote by μa, is equal to:

μa = I·πa² = q·ħ/2m ≈ 9.2740100783×10⁻²⁴ J·T⁻¹

In contrast, the CODATA or measured value, which we’ll denote as μr, is equal to what we already wrote above:

μr = μCODATA = 9.2847647043(28)×10⁻²⁴ J·T⁻¹

As per the convention, you should actually add a minus sign, because the elementary charge is negative here. However, when calculating the anomaly, these minus signs cancel out: all that counts are the magnitudes here.

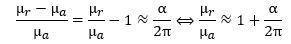

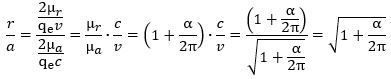

In any case, you should note that the μr value is actually larger – not smaller – than μa. The μr = I·πr² = q·f·πr² = q·(v/2πr)·πr² = q·v·r/2 formula implies, therefore, that the r·v product must be larger than the a·c product. Let us re-calculate the anomaly to make sure we know what we are doing. We know it should be equal to this:

How do we know that? Because it has been measured like that. The μr/μa ratio effectively gives us this:

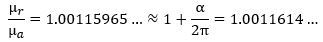

In fact, you may have heard that Schwinger’s factor explains about 99.85% of the anomaly. You can check from the formula above that it’s actually much better than that: the μr/μa ratio is about 99.99982445% of 1 + α/2π. 🙂 That is very good, isn’t it? 🙂

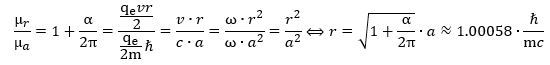

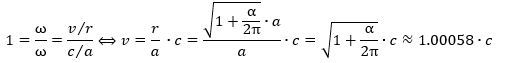

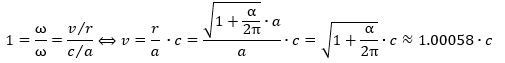
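The two percentages quoted above can be reproduced in a few lines. The sketch takes the theoretical value μa to be q·ħ/2m (numerically the Bohr magneton) and uses CODATA 2018 inputs; both choices, and the variable names, are assumptions made here for the check.

```python
import math

MU_R = 9.2847647043e-24   # measured electron magnetic moment (magnitude), J/T
MU_A = 9.2740100783e-24   # theoretical q*hbar/(2m), J/T
ALPHA = 7.2973525693e-3   # fine-structure constant

ratio = MU_R / MU_A                      # measured over theoretical
schwinger = 1 + ALPHA / (2 * math.pi)    # first-order (Schwinger) factor

# Share of the anomaly itself (ratio - 1) that alpha/(2*pi) accounts for
share_of_anomaly = (ratio - 1) / (schwinger - 1)
# The full ratio expressed as a fraction of 1 + alpha/(2*pi)
share_of_ratio = ratio / schwinger

print(f"mu_r / mu_a        = {ratio:.11f}")
print(f"1 + alpha/(2 pi)   = {schwinger:.11f}")
print(f"share of anomaly   = {100 * share_of_anomaly:.2f} %")
print(f"share of the ratio = {100 * share_of_ratio:.8f} %")
```

Note that the two percentages measure different things: the first compares the small deviations from 1, the second compares the ratios themselves, which is why the second looks so much closer to 100%.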

Let us get on with the calculations. 🙂 Re-writing the ratio between the theoretical and actual magnetic moment and equating it with our 99.99982445% explanation (i.e. the 1 + α/2π approximation), we get this:

We can now also calculate the effective velocity. Indeed, we know the v/c and r/a ratios must be the same. Why? Because of the Planck-Einstein relation: the frequency of our electron is the frequency of our electron. We can, therefore, calculate the effective velocity v as:

It is a wonderful result, and you can check its consistency by taking the v/r ratio which – when inserting the formulas above – effectively yields the Planck-Einstein frequency again (v/r = ω = E/ħ).
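The effective radius and velocity follow from the two conditions stated in the text: (v·r)/(c·a) = 1 + α/2π (from the ratio of the magnetic moments) and v/c = r/a (same frequency). Together they imply r/a = v/c = √(1 + α/2π). The sketch below works this out with CODATA 2018 constants; the closed form and the variable names are a reconstruction made here, not a quotation of the original formulas.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J*s
C = 299792458.0          # speed of light, m/s
M_E = 9.1093837015e-31   # electron mass, kg
ALPHA = 7.2973525693e-3  # fine-structure constant

factor = 1 + ALPHA / (2 * math.pi)   # (v*r)/(c*a), from the magnetic moments
scale = math.sqrt(factor)            # r/a = v/c, because the frequency is fixed

a = HBAR / (M_E * C)   # theoretical (Compton) radius
r = a * scale          # effective radius
v = C * scale          # effective velocity (a mathematical quantity, see text)

print(f"r/a = v/c = {scale:.9f}")
print(f"r = {r:.6e} m")
print(f"v = {v:.3f} m/s")
print(f"v/r = {v / r:.6e} rad/s,  c/a = {C / a:.6e} rad/s")
```

The last line is the consistency check mentioned above: v/r equals c/a, which is the Planck-Einstein angular frequency E/ħ.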

Of course, your first reaction will be this: this is an impossible result! Velocities cannot exceed the speed of light, can they? They cannot: the velocity that is being calculated here is a mathematical quantity: the (tangential) velocity of the charge – as a particle (or, as we think of it here, as some component of it) – does not exceed the speed of light. It is a bit like the difference between the group and phase velocity when studying a wave packet: the phase velocity can be larger than the speed of light but – as it does not carry energy or any signal (it is just a mathematical quantity) – we do not think of that as a violation of relativity theory. In contrast, the group velocity cannot exceed c.

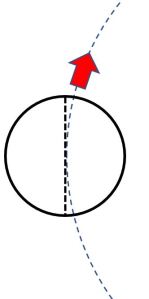

The discussion above relates to this other question that you should have: why do we have a slightly larger effective radius? The illustration below should answer that question: if the zbw charge is effectively whizzing around at the speed of light, and we think of it as a charged sphere, shell or some other shape, then its effective center of charge will not coincide with its center. Why not? Because the ratio between (1) the charge that is outside of the disk formed by the radius of its orbital motion (the large circle, of which we only show an arc) and (2) the charge inside – note the triangular areas between the diameter line of the smaller circle (which you should think of as modeling the zbw charge) and the larger circle (the arc which represents its orbital) – is slightly larger than 1/2.

It all looks astonishingly simple, doesn’t it? Too simple, perhaps? We don’t think so, but we will let you – the reader – judge, of course!

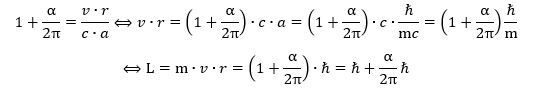

To conclude this section, we should add one more formula. It is an interesting one because it brings a very important nuance to the quantum-mechanical rule that angular momentum should come in units of ħ. Indeed, our calculation shows the actual angular momentum of an electron is slightly larger than ħ:

Unsurprisingly, the difference is, once again, given by Schwinger’s α/2π factor.

The attentive reader may note something else here: the electron is a spin-1/2 particle, isn’t it? It is. So why do we have an angular momentum that’s equal to ħ (or almost equal to ħ, taking into account the anomaly)? That’s an easy question, but the answer is, unfortunately, somewhat less straightforward. The relevant mass for the L = m·v·r formula is the effective (relativistic) mass of the zbw charge as it whizzes around in its oscillatory or orbital motion. An easy geometric argument (we refer to our paper(s) here) shows this effective mass – which we denote as mγ – is half of the electron mass. We, therefore, do get this spin-1/2 property when re-writing the formula above as follows:

In any case, we do not think the distinction between spin-1/2 and spin-1 particles is particularly enlightening. On the contrary: we think it is one of the unproductive myths of mainstream quantum mechanics—a useless generalization that confuses rather than clarifies things.
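For readers who want to see the numbers behind the angular momentum claims in this section, here is a small sketch. It assumes L = m·v·r with the effective radius and velocity scaled by √(1 + α/2π), once with the full electron mass and once with the effective mass mγ = me/2. The constants are CODATA 2018 values and the variable names are illustrative.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J*s
C = 299792458.0          # speed of light, m/s
M_E = 9.1093837015e-31   # electron mass, kg
ALPHA = 7.2973525693e-3  # fine-structure constant

scale = math.sqrt(1 + ALPHA / (2 * math.pi))
r = scale * HBAR / (M_E * C)   # effective radius
v = scale * C                  # effective velocity

L_full = M_E * v * r           # full electron mass
L_half = (M_E / 2) * v * r     # effective mass m_gamma = m_e / 2

print(f"L (full mass) = {L_full / HBAR:.9f} hbar")
print(f"L (half mass) = {L_half / HBAR:.9f} hbar")
```

Both values exceed ħ and ħ/2 respectively by the same factor, 1 + α/2π.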

Let us now move to the next question: can we apply the ring current model to the heavier version of the electron, i.e. the muon-electron? The answer is: yes. It works perfectly well. Let us show you.

The muon-electron

The electron has not one but two heavier versions. Both of them are unstable, but the muon-electron has some longevity, which may explain why its Compton radius seems to make sense too! Let us first remind you of the basics:

- The muon energy is about 105.66 MeV, so that’s about 207 times the electron energy. Its lifetime is much shorter than that of a free neutron but longer than that of other unstable particles: about 2.2 microseconds (1 μs = 10⁻⁶ s). At the same time, we should not exaggerate this presumed longevity of the muon: the mean lifetime of (charged) pions, for example, is about 26 nanoseconds (1 ns = 10⁻⁹ s), so that’s only about 85 times less.

- In contrast, the lifetime of the tau-electron is extremely short: 2.9×10⁻¹³ s only. Its energy is about 1776 MeV, so that’s almost 3,500 times the electron energy. Because of its short lifetime, we think of it as some resonance or a transient oscillation. Indeed, I like to reserve the term ‘particle’ for stable particles. We don’t think the Planck-Einstein relation applies to resonances or other temporary configurations of stabler constituents. In other words, we think the tau-electron quickly disintegrates because its cycle is way off, and we will, therefore, not pay any attention to it.
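The ratios quoted in the two bullets above are easy to reproduce. The sketch below uses the rounded figures from the text; the electron rest energy of 0.511 MeV is an assumed rounded value that the text does not state explicitly.

```python
# Values quoted in the text, except the electron rest energy,
# which is an assumed rounded value (0.511 MeV) added for the comparison.
ELECTRON_ENERGY_MEV = 0.511
MUON_ENERGY_MEV = 105.66
TAU_ENERGY_MEV = 1776.0
MUON_LIFETIME_S = 2.2e-6
PION_LIFETIME_S = 26e-9

# Energy (or mass) ratios relative to the electron.
muon_energy_ratio = MUON_ENERGY_MEV / ELECTRON_ENERGY_MEV
tau_energy_ratio = TAU_ENERGY_MEV / ELECTRON_ENERGY_MEV

# How much longer the muon lives than a charged pion.
lifetime_ratio = MUON_LIFETIME_S / PION_LIFETIME_S

print(f"muon / electron energy: {muon_energy_ratio:.1f}")
print(f"tau / electron energy:  {tau_energy_ratio:.0f}")
print(f"muon / pion lifetime:   {lifetime_ratio:.1f}")
```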

Let us see what we get when calculating the Compton radius for the muon-electron:

aμ = ħ/(mμ·c) ≈ 1.87 fm

Now, if you divide the CODATA value for the Compton wavelength of the muon by 2π, you will get a value that is about the same—and, with about the same, I mean a value within the same confidence interval, so that’s very precise! Hence, the ring current model works for the muon as well! However, it triggers at least two or three obvious questions:

1. Why is the muon unstable? We think it is because the oscillation is almost on, but not quite. Hence, the Planck-Einstein relation applies only approximately.

2. Why is the centripetal force so much stronger for a muon than it is for an electron? That’s a good question, and we will talk about that in a moment. We think some strong(er) force must be involved here.

3. Why is the anomaly of the magnetic moment of the muon almost – but not quite – the same as that of the electron? Let us list the CODATA-based values for the anomaly of the magnetic moment of an electron and a muon (written here as ratios, i.e. 1 plus the anomaly), and Schwinger’s factor, respectively:

ae = 1.00115965218128(18)

aμ = 1.00116592089(63)

1 + α/2π = 1.00116140973…
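As a quick check on the three numbers above, the sketch below recomputes Schwinger’s factor and the gap between it and each measured ratio. The value of α is the CODATA 2018 recommended value, hard-coded here as an assumption; the two measured ratios are copied from the list above.

```python
import math

ALPHA = 7.2973525693e-3               # fine-structure constant (CODATA 2018, assumed)
RATIO_ELECTRON = 1.00115965218128     # from the list above
RATIO_MUON = 1.00116592089            # from the list above

# Schwinger's first-order factor: 1 + alpha / (2 * pi)
schwinger = 1 + ALPHA / (2 * math.pi)

print(f"1 + alpha/2pi        = {schwinger:.11f}")
print(f"electron - Schwinger = {RATIO_ELECTRON - schwinger:+.3e}")
print(f"muon - Schwinger     = {RATIO_MUON - schwinger:+.3e}")
```

The electron ratio comes out slightly below Schwinger’s factor and the muon ratio slightly above it, which is the "almost but not quite" the question refers to.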

Let us start with the last question: the anomaly. The attentive reader will have noticed we refrained from fast conclusions on the radius or diameter of the zbw charge while analyzing the difference between the effective and theoretical (Compton) radius of an electron. If the zbw charge inside a muon and an electron would be the same hard core charge with the same radius, then the anomaly of the magnetic moment of a muon-electron would not have the same order of magnitude. We may, indeed, remind ourselves that the anomaly in the magnetic moment must equal the (square root of the) anomaly of the radius:

[The square or the square root in these formulas is always difficult to appreciate but think of it like this: the (angular) frequency is the ratio of the (tangential) velocity and the radius, so we have two variables (rather than just one) – velocity and radius – that must be proportional to the same factor (frequency). The point is intuitive and, at the same time, not at all. In any case, the reader will be able to verify the formula above respects the (r·v)/(a·c) = μr/μa = 1 + α/2π equation, so that’s at least something. 🙂]

The point is this: we have the same anomaly – more or less – at least, and that anomaly is expressed in relative terms: we don’t see anything like the classical electron radius here. In fact, the classical electron radius is larger than the muon itself, so we can’t think of something of such scale zittering around in a muon!

So what’s the matter here? It is a rather intuitive thought, but we suspect there is no real hard core charge: not inside the electron, and not inside the muon. Perhaps there is some kind of fractal structure, finite or infinite. [Fractal structures are usually thought of as infinite structures: ratios within ratios, but we see no a priori reason to think of an infinite structure here: Nature seems to be discrete – so the ‘order within the order’ may involve some absolute scaling constant as well. Because we have no idea about the dimensions of that constant, we should probably think of it as some kind of absolute minimum value on any scale. If this sounds absurd, we may usefully note that the fine-structure constant has no physical dimension whatsoever. As such, we think α (no physical dimension) and ħ (a product of force, distance and time) make for a good couple. :-)]

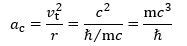

Let us revert to the second question. This question is quite deep. Let us analyze this in terms of the centripetal acceleration vector here, which we will denote by ac, and which is equal to:

ac = vt²/r = r·ω²

Is this formula relativistically correct? Where does it come from? The position or radius vector r (which describes the position of the zbw charge) has a horizontal and a vertical component: x = r·cos(ωt) and y = r·sin(ωt). We can now calculate the two components of the (tangential) velocity vector v = dr/dt as vx = –r·ω·sin(ωt) and vy = r·ω·cos(ωt) and, in the next step, the components of the (centripetal) acceleration vector ac: ax = –r·ω²·cos(ωt) and ay = –r·ω²·sin(ωt). The magnitude of this vector is then calculated as follows:

ac² = ax² + ay² = r²·ω⁴·cos²(ωt) + r²·ω⁴·sin²(ωt) = r²·ω⁴ ⇔ ac = r·ω² = vt²/r
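The derivation can be checked numerically. The sketch below is only an illustration: the radius and angular frequency are arbitrary values, and the function names are made up for this example. It compares the analytic velocity with a finite-difference estimate and confirms that the magnitude of the acceleration equals both r·ω² and v²/r.

```python
import math

def position(r, omega, t):
    """Position (x, y) on a circle of radius r at angular frequency omega."""
    return r * math.cos(omega * t), r * math.sin(omega * t)

def velocity(r, omega, t):
    """Analytic time derivative of position()."""
    return -r * omega * math.sin(omega * t), r * omega * math.cos(omega * t)

def acceleration(r, omega, t):
    """Analytic time derivative of velocity(); points toward the center."""
    return -r * omega**2 * math.cos(omega * t), -r * omega**2 * math.sin(omega * t)

def numeric_velocity(r, omega, t, step=1e-6):
    """Central-difference estimate of the velocity, as an independent check."""
    x1, y1 = position(r, omega, t - step)
    x2, y2 = position(r, omega, t + step)
    return (x2 - x1) / (2 * step), (y2 - y1) / (2 * step)

R, OMEGA = 2.0, 3.0  # arbitrary illustrative values, not physical constants

for t in (0.0, 0.4, 1.7):
    vx, vy = velocity(R, OMEGA, t)
    nvx, nvy = numeric_velocity(R, OMEGA, t)
    derivative_error = max(abs(vx - nvx), abs(vy - nvy))
    a_mag = math.hypot(*acceleration(R, OMEGA, t))
    v_mag = math.hypot(vx, vy)
    print(f"t={t}: |a|={a_mag:.6f}  r*w^2={R * OMEGA**2:.6f}  "
          f"v^2/r={v_mag**2 / R:.6f}  derivative error={derivative_error:.1e}")
```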

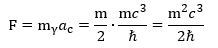

Now, the tangential velocity is assumed to be equal to c, and the radius r is equal to r = ħ/mc. The centripetal acceleration should, therefore, be equal to:

ac = c²/r = c²·(mc/ħ) = mc³/ħ

Now, Newton’s force law tells us that the magnitude of the centripetal force ǀFǀ = F will be equal to the product of this acceleration and the mass, but what mass should we use here? We need to use the effective mass of the zbw charge as it zitters around the center of its motion at (nearly) the speed of light. We will denote the effective mass as mγ, and we used a geometric argument to prove it is half the electron mass—or, in this case, the muon mass. [The reader may not be familiar with the concept of the effective mass of an electron but it pops up quite naturally in quantum physics. Richard Feynman, for example, gets the equation out of a quantum-mechanical analysis of how an electron could move along a line of atoms in a crystal lattice.]

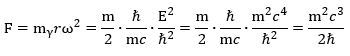

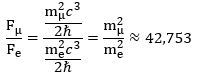

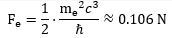

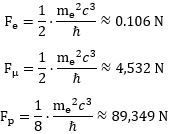

To make a long story short, we get this for the force inside the muon, and for the electron if we replace m by the electron mass!

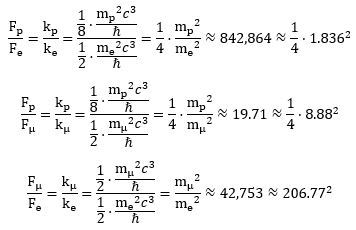

We can double-check this formula by using the other formula for ac:

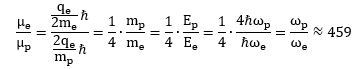

What’s the point of these calculations? It is this: if we denote the force inside the muon by Fμ and the force inside the electron by Fe, then we can use the CODATA value for the mμ/me mass ratio to calculate both the absolute as well as the relative strength of both forces. Indeed, their ratio (Fμ/Fe) can be calculated as:

We can, of course, calculate an actual value for the force inside the electron. We will use yet another formula, just for fun (you can check it amounts to the same):

That’s a huge force at the sub-atomic scale: it is equivalent to a force that gives a mass of about 106 gram (1 g = 10⁻³ kg) an acceleration of 1 m/s per second! However, our force ratio shows the force inside the muon-electron is about 42,753 times stronger! Hence, we should probably refer to it as the strong(er) force, especially because we know muon decay also involves the emission of neutrinos, which we think of as carriers of the strong force!
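These force values can be reproduced with a few lines of code. The sketch below is a reconstruction under the assumptions stated in the text: tangential velocity c, radius r = ħ/mc, and an effective mass equal to half the particle mass, which gives F = mγ·c²/r = m²c³/2ħ. The constants are CODATA 2018 recommended values, hard-coded as an assumption, and the function names are illustrative.

```python
C = 299_792_458.0             # speed of light, m/s
HBAR = 1.054571817e-34        # reduced Planck constant, J*s
M_ELECTRON = 9.1093837015e-31 # electron mass, kg
M_MUON = 1.883531627e-28      # muon mass, kg

def compton_radius(mass):
    """Ring-current radius r = hbar / (m * c)."""
    return HBAR / (mass * C)

def centripetal_force(mass):
    """Force on the zbw charge: (m / 2) * c**2 / r, with r the Compton radius."""
    effective_mass = mass / 2
    acceleration = C**2 / compton_radius(mass)
    return effective_mass * acceleration

force_electron = centripetal_force(M_ELECTRON)
force_muon = centripetal_force(M_MUON)
force_ratio = force_muon / force_electron

print(f"force inside the electron: {force_electron:.4f} N")
print(f"force inside the muon:     {force_muon:.0f} N")
print(f"ratio (mass ratio squared): {force_ratio:.0f}")
```

Because the force scales with the square of the mass in this model, the ratio is simply the squared muon-electron mass ratio.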

To be precise, you can do the calculation using CODATA values for the energy or mass of the muon, and you will find the centripetal force inside a muon should be equal to about 4,532 N. Enormous, but not as enormous as the force which holds a proton together. Indeed, a proton is even smaller (about 0.83 to 0.84 fm) and even more massive: the proton-muon mass ratio is about 8.88. So what happens there? We’ll turn to that discussion in the next section. Before we do so, we will just quickly jot down the decay reaction of a muon, to show those neutrinos! Indeed, a muon decays into an electron while emitting two neutrinos: one with low energy – which is referred to as an electron neutrino – and another with very high energy – which is referred to as a muon neutrino. So it looks like this:

μ− → e− + νe + νμ

The muon’s anti-matter counterpart decays into the positron, of course:

μ+ → e+ + νe + νμ

Should we distinguish anti-neutrinos here? We don’t think so. Neutrinos may have opposite spin, but the idea of anti-matter involves a charge, and neutrinos – just like photons – do not carry charge. We mention this because we think of the question of the anti-neutrino as one of those mysteries that mainstream physicists religiously worship.

Let us now say a few things about the proton. Before we do so, however, we should make one or two final remarks:

1. We started by noting the anomalies are almost but not quite equal to Schwinger’s factor. We can imagine some fractal structure explaining the second-, third- and other higher-order factors, but what explains the slight difference between the magnetic moment of a muon and that of an electron in that case? We are not quite sure, but we are not worried about it, especially not because of all the hanky-panky around both the theory as well as the measurements: we do not exclude some future experiment may suddenly provide a reasonable explanation to bring the two measured values closer together! Such is, sadly, the kind of distrust in regard to the dismal science of modern physics one needs to nurture nowadays!

2. The a = ħ/mc formula for the Compton radius implies the radius is inversely proportional to the mass. This is somewhat counter-intuitive: in daily life, we think of larger masses as bigger things. Here we get smaller but more massive objects with higher energies. Think of the muon versus the electron radius here: 1.87 femtometer versus 0.386 picometer. The muon is, effectively, about 207 times more massive but also 207 times smaller than an electron. Why is this so? The answer is in the Planck-Einstein relation and the tangential velocity formula: higher energy implies higher frequency. However, because the tangential velocity is equal to c, both for the zbw charge in the electron as well as the one in the muon, the radius of oscillation must be smaller. The inverse proportionality between the radius (a) and the frequency (ω) is, effectively, reflected in the c = a·ω = a·E/ħ relation.

3. However, a smaller radius does result in a much smaller magnetic moment. Because the magnetic moment is equal to the current times the surface area of the loop, there are two things to consider here. One is the current, which increases with the frequency: I = q·f = q·c/2πa. Hence, smaller loops give larger currents. However, this effect is offset because the surface area (A) is proportional to the squared radius: A = πa². Hence, the net result is that the magnetic moment is linearly proportional to the radius: μ = q·c·a/2.
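The scaling in remarks 2 and 3 can be made concrete with a small calculation. The sketch below applies the formulas quoted above (a = ħ/mc, I = q·c/2πa, A = πa², μ = I·A) to the electron and the muon. The constants are CODATA 2018 recommended values, hard-coded as an assumption, and the function name is illustrative.

```python
import math

C = 299_792_458.0              # speed of light, m/s
HBAR = 1.054571817e-34         # reduced Planck constant, J*s
Q = 1.602176634e-19            # elementary charge, C
M_ELECTRON = 9.1093837015e-31  # electron mass, kg
M_MUON = 1.883531627e-28       # muon mass, kg

def ring_current_properties(mass):
    """Return (radius, current, magnetic moment) for the ring current model."""
    radius = HBAR / (mass * C)               # a = hbar / (m * c)
    current = Q * C / (2 * math.pi * radius) # I = q * f with f = c / (2 * pi * a)
    area = math.pi * radius**2               # A = pi * a**2
    moment = current * area                  # mu = I * A = q * c * a / 2
    return radius, current, moment

radius_e, current_e, moment_e = ring_current_properties(M_ELECTRON)
radius_mu, current_mu, moment_mu = ring_current_properties(M_MUON)

print(f"electron: a = {radius_e:.4e} m, I = {current_e:.1f} A, mu = {moment_e:.4e} J/T")
print(f"muon:     a = {radius_mu:.4e} m, I = {current_mu:.0f} A, mu = {moment_mu:.4e} J/T")
print(f"radius ratio (electron/muon): {radius_e / radius_mu:.2f}")
print(f"moment ratio (electron/muon): {moment_e / moment_mu:.2f}")
```

The radius and the magnetic moment shrink by the same factor (the mass ratio), while the current grows by that factor.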

These remarks will, hopefully, help the reader to understand things somewhat more intuitively. Let us now proceed to our discussion of the proton.

The proton

The confusion around the exact radius of a proton now seems to have been solved. A 2019 electron-proton scattering experiment by the PRad (proton radius) team at Jefferson Lab effectively measured the root mean square (rms) charge radius of the proton as rp = 0.831 ± 0.007 (stat) ± 0.012 (syst) fm, and that’s close enough to the results of Pohl (2010) and Antognini (2013), which were not based on any direct scattering measurement but on muonic-hydrogen spectroscopy, and which yielded point estimates of 0.84184(67) and 0.84087(39) fm respectively. Indeed, we wrote Prof. Dr. Pohl at the Max Planck Institute and he wrote us this:

“There is no difference between the values. You have to take uncertainties seriously (sometimes we spend much more time on determining the uncertainty than we do for the central value), and these numbers are in perfect agreement with each other.”

The question is: is there any theoretical value for the proton radius? We contacted the PRad Lab with our paper on the proton radius, suggesting the ap = 4ħ/mc = 0.84123564… fm value might be a good candidate for the theoretical radius of a proton, and also shared the material above. The spokesman for the group, Ashot Gasparian, replied as follows:

“I found your thoughts on [the] proton radius very interesting. […] For the electron: for sure, Nature cannot have a point-like object. That is coming from Newton. For the proton, there is no theoretical prediction in QCD. Lattice [theory] is trying to come up [with something] but that will take another decade before we get any reasonable number from that.” (my italics)

So how did we get that ap = 4ħ/mc = 0.84123564… fm value? Why do we have that factor of 4 here? The honest answer is: we do not know. However, we do have a bit of a heuristic or make-do explanation for it. Let us present the basics of it.

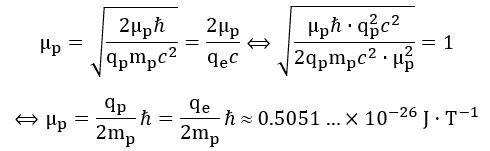

The radius and magnetic moment of a proton

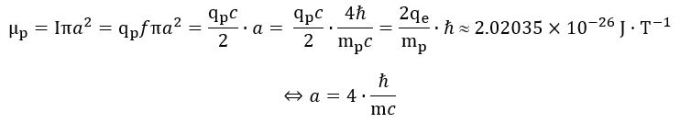

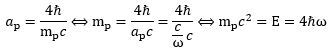

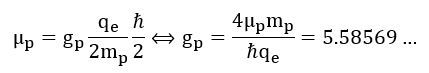

1. We may want to start by calculating a theoretical magnetic moment using the ring current formula (μ = I·π·a² = q·f·π·a²), combining it with the two different formulas for the frequency (f = E/h and f = c/2πa), and then equating both expressions for what must be the same magnetic moment (note that we often make abstraction of the sign by equating qe and qp):

μ = q·c·a/2 = q·ħ/2m ≈ 5.05×10⁻²⁷ J·T⁻¹

2. This result differs from the CODATA value, which is equal to:

μCODATA = 1.41060679736(60)×10⁻²⁶ J·T⁻¹

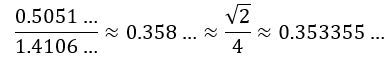

The order of magnitude is the same, however, and we, therefore, wanted to see if there is some easy ratio between the two. There is. We immediately saw a √2/4 factor:

The ratio is not exact, of course. In fact, the difference is about 1.25% of the larger value. However, we thought this might be explained by a larger anomaly and so we just soldiered on. So we have a √2 and a 1/4 factor here. What is our explanation of them?

3. A magnetic dipole will precess when placed in a magnetic field, which is what is being done when measuring the magnetic moment of a proton. Its effective radius will, therefore, be smaller. Hence, as part of our realist interpretation of quantum physics, we imagine there is some real magnetic moment here, which we denote as μL, and which must be larger than the CODATA value. To be precise, it must be equal to:

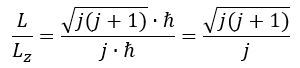

μL = √2·(1.41060679736…×10⁻²⁶ J·T⁻¹) ≈ 1.995…×10⁻²⁶ J·T⁻¹

The subscript L in the μL notation stands for (orbital) angular momentum. We refer to Feynman’s Lectures (Vol. II, Chapter 34, Section 7) for an easy and very meaningful explanation of the relation between the magnitude of the actual angular momentum of a precessing quantum-mechanical magneton – which we’ll denote as L – and the measured (quantum-mechanical) value – which we’ll denote as Lz:

L/Lz = √(j·(j+1))/j

For j = 1/2, we get a √3 factor. We think of the quantum-mechanical rule that fermions – matter-particles – are spin-1/2 particles as one of those quantum-mechanical myths: our interpretation of the Planck-Einstein relation tells us angular momentum does come in full rather than half units of ħ and so, yes, for j = 1, we get the required L/Lz = √2 factor.

Of course, we’ve asked ourselves the obvious question: why no √2 factor for the magnetic moment of an electron or a muon? Prof. Dr. Randolf Pohl is, since October 2019, also a member of the CODATA Task Group for Fundamental Constants and, because of his keen interest in the matter (he and his team established the 0.841 fm measurement back in 2010!), we hope he will look into this and confirm or deny.

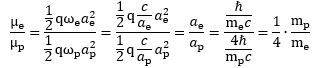

Let us move on. We are now left with the 1/4 factor. Indeed, if μL ≈ 1.995…×10⁻²⁶ J·T⁻¹ is a sensible value for the actual magnetic moment, then we may also calculate a sensible theoretical value:

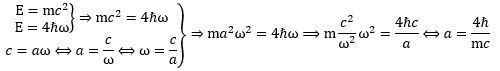

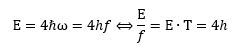

4. At first, this looks like a weird result: the only radius that is compatible with the magnetic moment of a proton – in- or excluding some tiny anomaly – is a radius that is four times the radius we would get from applying the formula we used to calculate the Compton radius for an electron or a muon. What explanation do we have here? Should we sacrifice our interpretation of the Planck-Einstein relation and insert that 1/4 factor by writing something like E/4 = ħ·ω? It seems to work alright:

Let us see if we don’t get any contradictions. Combining the E = 4ħω and c = a·ω equations, and re-inserting the radius formula, we get: E = 4ħω = 4ħc/a = 4ħmc²/4ħ = mc² = E. In fact, we can see it also works the other way. If a is equal to 4ħ/mc, then we can use the a = c/ω formula to calculate backwards and obtain the E = 4ħω formula:

Hence, it seems to work, but it doesn’t answer the obvious question: how can we explain or interpret the E = 4ħω formula?
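The chain of numbers in steps 1 to 4 can be retraced as follows. This is a sketch under stated assumptions rather than the original calculation: it takes the ring-current moment for a radius a = ħ/mc to be q·ħ/2m, applies the √2 precession factor to the CODATA moment, and inverts μ = q·c·a/2 to obtain a radius. The constants are CODATA 2018 recommended values, hard-coded as an assumption.

```python
import math

C = 299_792_458.0                  # speed of light, m/s
HBAR = 1.054571817e-34             # reduced Planck constant, J*s
Q = 1.602176634e-19                # elementary charge, C
M_PROTON = 1.67262192369e-27       # proton mass, kg
MU_PROTON_CODATA = 1.41060679736e-26  # proton magnetic moment, J/T

# Step 1: ring-current moment when the radius is hbar / (m * c).
mu_ring = Q * HBAR / (2 * M_PROTON)

# Step 2: compare with the CODATA value; the text spots a sqrt(2)/4 factor.
ratio = mu_ring / MU_PROTON_CODATA
relative_gap = (ratio - math.sqrt(2) / 4) / ratio

# Step 3: moment corrected for precession by a factor sqrt(2).
mu_L = math.sqrt(2) * MU_PROTON_CODATA

# Step 4: radius implied by mu = q * c * a / 2, versus 4 * hbar / (m * c).
radius_from_moment = 2 * mu_L / (Q * C)
radius_4hbar = 4 * HBAR / (M_PROTON * C)

# Consistency check of E = 4 * hbar * omega with omega = c / a.
omega = C / radius_4hbar
energy_from_omega = 4 * HBAR * omega
rest_energy = M_PROTON * C**2

print(f"mu_ring / mu_CODATA = {ratio:.5f}  (sqrt(2)/4 = {math.sqrt(2) / 4:.5f})")
print(f"relative gap        = {relative_gap:.2%}")
print(f"mu_L                = {mu_L:.4e} J/T")
print(f"a from mu_L         = {radius_from_moment * 1e15:.4f} fm")
print(f"4*hbar/(m*c)        = {radius_4hbar * 1e15:.4f} fm")
print(f"4*hbar*omega / mc^2 = {energy_from_omega / rest_energy:.6f}")
```

The radius implied by μL and the 4ħ/mc value differ by a little over one percent, which is the same gap that shows up in the √2/4 ratio.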

The puzzle of the proton mass

There are two ways to go about it. There may be more, of course, but we readily see two. The first way is the one we first used to get the a = 4ħ/mc radius: we talk about it in our paper on the proton radius – the one we sent to Prof. Dr. Pohl and the PRad team – and it involves some rather ad hoc assumptions involving the energy equipartition theorem. To be precise, we basically assume only 1/4 of the energy of the proton is in the Zitterbewegung of the elementary charge inside. It works, but it doesn’t feel good as an explanation: where are the other three quarters of the energy then?

The second way to think about it is in terms of a different form factor. The ring current model for the electron and the muon assumed a pointlike charge in orbit. Combining this idea of an orbital with the electromagnetic field, you may think of the electron (and the muon) as a disk-like structure. The 1/4 factor suggests we should, perhaps, not think of the proton as a disk-like structure based on the idea of a ring current, as we did for the electron and the muon. There are other possibilities, indeed. Perhaps it is a disk-like structure, but then it might be a whole disk of electric charge in some Zitterbewegung-like oscillatory motion or – a more intriguing possibility – perhaps it is a sphere or a shell of charge!

Let us explore these ideas by developing some thoughts on the g-factor for a proton.

The g-factor for a proton

We have already noted that the concept of a g-factor may be more confusing than enlightening. Why? Because we cannot directly measure the angular momentum. All we can measure is the magnetic moment. Hence, the calculation of a g-factor always involves an assumption regarding the angular mass of the particle that we are looking at. We, therefore, think that the concept of a g-factor is not very scientific: what is the use of calculating some g-factor if one cannot directly observe the shape of an electron, or a muon, or a proton—or any sub-atomic particle, really?

Having said that, we do think the g = 2 value for an electron (or our g = 1 value when using the simpler q/m unit) makes sense. In fact, perhaps, we should say the g-factor for an electron is 1/2. Why? It’s a much simpler equation for the magnetic moment, isn’t it? No mysterious 1/2 or 2 factors:

Again, the reader may exclaim: what about the spin-1/2 property? Think of it like this: the electron is spin-1/2 because its real g-factor is 1/2. 🙂 Seriously, we really don’t want to ridicule mainstream scientists, but the equations are what they are, and so we are free to group and/or un-group any numeric factor in them like we want. The idea of a gyromagnetic ratio that we cannot directly measure should not deter us here.

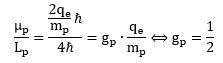

Let us now try to think some more. We have a CODATA value for the g-factor for a proton: it’s equal to 5.5857, more or less. This value is calculated using the theoretical (or mythical, I’d say) ħ/2 value for the angular momentum. It also uses the CODATA value for the magnetic moment, as opposed to our μL value, which is the CODATA value corrected for precession. Hence, the CODATA calculation of the g-factor is this:

That is a weird result. Indeed, if the geometry is that of an easy mathematical shape – like a hoop, a disk or sphere – then we should get a g-factor that is some integer or some fraction of integers that is related to the difference between two shapes. Think, for example, of the ratio of the 1/2 and 2/5 factors in the formulas for the angular mass of a disk and a sphere: (1/2)/(2/5) = 5/4.

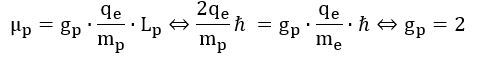

So why don’t we get such a number here? The answer is: we actually do get a simple integer number if we incorporate the √2 factor which – as mentioned – may or may not be there because of the precession of an atomic or sub-atomic magnet in a magnetic field. To be precise, using the q/m variant of the nuclear magneton (rather than the usual q/2m definition of it), we get a g-factor that is equal to 2:

Wow! We get a g-ratio that is four times that of an electron! What does it mean? We are not sure, but we can check a few relations that may or may not help to interpret this result. Let us, for example, calculate the ratio of the magnetic moment of the electron and the proton:

That’s a very interesting result, especially because you can actually calculate it and you will see it works! So, yes, perhaps we are onto something here. Now that we are calculating ratios of magnetic moments, we should, perhaps, use our formulas for the magnetic moment of a ring, a shell and a sphere of charge. Let us first try the ratio using the formula for a ring current, for the electron as well as for the proton. We get this:

We get the same result—not approximately, but exactly! We don’t need the formula for the magnetic moment of a sphere or a shell of charge!
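The g-factor values discussed in this section can be reproduced numerically. The sketch below is one plausible reading of the calculations, not a definitive reconstruction: it computes the conventional g-factor as the moment in nuclear magnetons divided by a spin of 1/2, the alternative value from μL and the q/m variant of the nuclear magneton, and the electron-proton moment ratio from the ring-current radii a = ħ/mc (electron) and a = 4ħ/mc (proton). The constants are CODATA 2018 recommended values, hard-coded as an assumption.

```python
import math

HBAR = 1.054571817e-34                # reduced Planck constant, J*s
Q = 1.602176634e-19                   # elementary charge, C
M_ELECTRON = 9.1093837015e-31         # electron mass, kg
M_PROTON = 1.67262192369e-27          # proton mass, kg
MU_PROTON_CODATA = 1.41060679736e-26  # proton magnetic moment, J/T

# Conventional nuclear magneton (q * hbar / 2m) and its q/m variant.
nuclear_magneton = Q * HBAR / (2 * M_PROTON)
nuclear_magneton_qm = Q * HBAR / M_PROTON

# Conventional g-factor: moment in nuclear magnetons divided by spin 1/2.
g_conventional = (MU_PROTON_CODATA / nuclear_magneton) / 0.5

# Alternative: precession-corrected moment in units of the q/m variant.
mu_L = math.sqrt(2) * MU_PROTON_CODATA
g_alternative = mu_L / nuclear_magneton_qm

# Ring-current ratio mu_e / mu_p = a_e / a_p = m_p / (4 * m_e).
ring_ratio = M_PROTON / (4 * M_ELECTRON)

print(f"conventional g-factor: {g_conventional:.4f}")
print(f"alternative g-factor:  {g_alternative:.4f}")
print(f"ring-current mu_e/mu_p: {ring_ratio:.2f}")
```

The alternative value comes out close to 2, short by the same gap of a little over one percent that the text attributes to a larger anomaly.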

What is going on? Our E = 4ħω formula works, but we still haven’t explained it.

The proton as a spin-1/2 particle

We are a bit at a loss here. We’ve explored the idea of a different form factor for the proton, but it doesn’t work. You can check: just re-calculate the μe/μp ratio using the ring current formula for μe and, say, the formula for a shell or a sphere of charge for μp. We get a 3/4 or a 5/4 factor we can’t get rid of. What is the solution?

The answer is simple but mysterious: we must accept this modified Planck-Einstein relation for a proton: E = 4ħω. What does it mean? We can re-write this in terms of energy (E) and cycle time (T):

E·T = 4h

What does it mean? It means that physical action does not always come in units of h. In the case of a proton, it comes in units of four times h! Dividing by 2π, that means its angular momentum must also be equal to four units of ħ! It means our proton is – after all – a spin-1/2 particle. Indeed, we can write this:

Something inside of me says all these Mystery Wallahs had a secret version of the correct quantum theory somewhere in a drawer. So what’s the meaning then of this mysterious spin-1/2 property? It simply is this: the ratio of (1) the product of the mass and the magnetic moment and (2) the product of the charge and the angular momentum is equal to 1/2—for an electron, for a muon, and for a proton. Indeed, you can easily verify this now:
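The verification can be written out explicitly. The lines below are a sketch that assumes the ring-current moment μ = q·c·a/2, a radius a = ħ/mc with L = ħ for the electron and the muon, and a radius a = 4ħ/mc with L = 4ħ for the proton, as used earlier in the text.

```latex
% Assumptions taken from the text: ring-current moment \mu = q c a / 2,
% a = \hbar/(m c) and L = \hbar for the electron and the muon,
% a = 4\hbar/(m c) and L = 4\hbar for the proton.
\begin{align*}
\text{electron, muon:}\quad
  \frac{m\,\mu}{q\,L}
  &= \frac{m}{q\,\hbar}\cdot\frac{q\,c}{2}\cdot\frac{\hbar}{m\,c}
   = \frac{1}{2} \\
\text{proton:}\quad
  \frac{m\,\mu}{q\,L}
  &= \frac{m}{4\,q\,\hbar}\cdot\frac{q\,c}{2}\cdot\frac{4\,\hbar}{m\,c}
   = \frac{1}{2}
\end{align*}
```

In both cases the factor of 4 in the proton radius cancels against the factor of 4 in its angular momentum, so the ratio is the same for all three particles.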

We may, therefore, say that the only meaningful g-factor that can be defined is really this: (1/2)·q/m. It is, effectively, the ratio between the magnetic moment and the angular momentum for all of the matter-particles we looked at there, which are the electron, the muon and the proton. Rather ironically, this newly defined g-factor is, effectively, Bohr’s magneton. Why did he want to confuse us with the definition of some new one? Some pure but rather meaningless number, such as 1/2 or 2? We don’t know: the Mystery Wallahs must have had their own reasons.

Let us conclude by doing some final calculations—we are interested in the magnitude of that force inside the proton, aren’t we? We sure are!

The strong(er) force inside of a proton

Using the formulas we derived from our geometric analysis of the centripetal force and acceleration, we can calculate the force inside an electron, a muon-electron and a proton as follows:

We can also calculate their ratios. Indeed, in our two-dimensional oscillator model, we effectively think of the centripetal force as some restoring force. This force depends linearly on the displacement from the center and the (linear) proportionality constant is usually written as k. Hence, we can write Fe, Fμ, and Fp as Fe = −ke·x, Fμ = −kμ·x and Fp = −kp·x respectively. Taking the ratio of each of these forces gives an idea of their respective strength:

You will recognize the numbers on the right-hand side as mass ratios. There are no surprises there: the proton is about 8.88 times more massive than the muon, which, in turn, is almost 207 times more massive than an electron. The proton is, therefore, 1,836 times more massive than an electron. For the rest, it is difficult to make sense of these ratios. We should probably understand these oscillations, frequencies and forces as higher modes of some fundamental frequency but such rather vague statements should be detailed, of course—and we are not (yet) in a position to do so.
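The mass ratios quoted above are easy to check. The sketch below assumes CODATA 2018 masses. The dictionary and function names are illustrative only. It covers the mass ratios and nothing else: it does not reproduce the force formulas themselves.

```python
# Particle masses in kilogram (CODATA 2018 recommended values, assumed inputs).
MASS_KG = {
    "electron": 9.1093837015e-31,
    "muon": 1.883531627e-28,
    "proton": 1.67262192369e-27,
}


def mass_ratio(heavier, lighter):
    """Return the dimensionless ratio of two particle masses."""
    return MASS_KG[heavier] / MASS_KG[lighter]


muon_to_electron = mass_ratio("muon", "electron")
proton_to_muon = mass_ratio("proton", "muon")
proton_to_electron = mass_ratio("proton", "electron")

print(f"muon / electron:   {muon_to_electron:.2f}")
print(f"proton / muon:     {proton_to_muon:.2f}")
print(f"proton / electron: {proton_to_electron:.1f}")
```

The third ratio is, of course, just the product of the first two.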

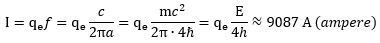

We will leave it to the reader to calculate the electric currents inside these elementary particles. For the electron, you should find a current of about 19.8 A (ampere). That’s a household-level current, inside something we measure at the picometer scale. If you think that’s outrageous, please calculate the current inside a proton. The current is inversely proportional to the radius. Hence, the proton is much smaller, but we calculate the current inside as being much larger:

The associated electromagnetic field strengths are equally enormous. Lest the reader become too skeptical here, we remind him of this: something has to explain the enormous mass density of a proton (and a muon), as compared to the electron, and because our model is basically a ‘mass without mass’ model, the energies have to be humongous, indeed!
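For readers who want to verify the electron current of about 19.8 A, here is a minimal sketch. It assumes the current is simply the elementary charge times the frequency of the oscillation, I = q·f, with f = E/h = mc²/h taken from the Planck-Einstein relation. The constants are CODATA 2018 values, and the function name is hypothetical.

```python
# CODATA 2018 values in SI units (assumed inputs for this sketch).
ELEMENTARY_CHARGE = 1.602176634e-19  # coulomb
PLANCK_CONSTANT = 6.62607015e-34     # joule-second
SPEED_OF_LIGHT = 299792458.0         # meter per second
ELECTRON_MASS = 9.1093837015e-31     # kilogram


def ring_current(mass, charge=ELEMENTARY_CHARGE):
    """Current I = q*f, assuming the frequency f = m*c^2/h."""
    frequency = mass * SPEED_OF_LIGHT**2 / PLANCK_CONSTANT  # hertz
    return charge * frequency                               # ampere


electron_current = ring_current(ELECTRON_MASS)
print(f"Electron ring current: {electron_current:.2f} A")
```

Under this assumption the current scales with the mass, which is another way of saying that it is inversely proportional to a radius of the form ħ/mc. A different relation between the radius and the frequency would change the result accordingly.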

We hope that comes across as credible enough! Having said that, we do invite you to think everything through and, importantly, to also check our calculations so as to make sure we’re OK! We hope you enjoyed this!

21 March 2020
