Pre-scriptum (dated 26 June 2020): This post did not suffer much – if at all – from the attack by the dark force—which is good because I still like it. Enjoy !

Original post:

This post will probably be of little or no interest to you. I wrote it to get somewhat more acquainted with logarithms myself. Indeed, I struggle with them. I think they come across as difficult because we don’t learn about the logarithmic function when we learn about the exponential function: we only learn logarithms later – much later. And we don’t use them a lot: exponential functions pop up everywhere, but logarithms not so much. Therefore, we are not as familiar with them as we should be.

The second issue is notation: $x = \log_a(y)$ looks more terrifying than $y = a^x$ because… Well… Too many letters. It would be more logical to apply the same economy of symbols. We could just write $x = {}_a y$ instead of $\log_a(y)$, for example, using a subscript in front of the variable, as opposed to a superscript behind the variable, as we do for the exponential function. Or, else, we could be equally verbose for the exponential function and write $y = \exp_a(x)$ instead of $y = a^x$. In fact, you’ll find such more explicit expressions in spreadsheets and other software, because these don’t take subscripts or superscripts. And then, of course, we also have the use of the Euler number $e$ in $e^x$ and $\ln(x)$. While it’s just a real number, $e$ is not as familiar to us as π, and that’s again because we learned trigonometry before we learned advanced calculus.

Historically, however, the exponential and logarithmic functions were ‘invented’, so to say, around the same time and by the same people: they are associated with John Napier, a Scot (1550–1617), and Henry Briggs, an Englishman (1561–1630). Briggs is best known for the so-called common (i.e. base 10) logarithm tables, which he published in 1624 as the Arithmetica Logarithmica. It is logical that the mathematical formalism needed to deal with both was invented around the same time, because they are each other’s inverse: if $y = a^x$, then $x = \log_a(y)$.

These Briggs tables were used, in their original format more or less, until computers took over. Indeed, it’s funny to read what Feynman writes about these tables in 1965: “We are all familiar with the way to multiply numbers if we have a table of logarithms.” (Feynman’s Lectures, p. 22-4). Well… Not any more. And those slide rules, or slipsticks as they were called in the US, have disappeared as well, although you can still find circular slide rules on some expensive watches, like the one below.

It’s a watch for aviators, and it allows them to rapidly multiply numbers indeed: the time multiplied by the speed will give a pilot the distance covered. Of course, there’s all kinds of intricacies here because we’ll measure time in minutes (or even seconds), and speed in knots or miles per hour, and so that explains all the other fancy markings on it. 🙂 In case you have one, now you know what you’re paying for! A real aviator watch! 🙂

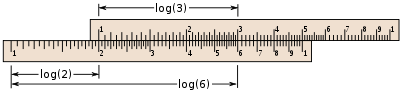

How does it work? Well… These slide rules can be used for a number of things but their most basic function is to multiply numbers indeed, and that function is based on the rule $\log_b(ac) = \log_b(a) + \log_b(c)$. In fact, this works for any base so we can just write $\log(ac) = \log(a) + \log(c)$. So the numbers on the slide rule below are the $a$, the $c$ and their product $ac$. Note that the slides start with 1 because we’re working with positive numbers only and $\log(1) = 0$, so that corresponds with the zero point indeed. The example below is simple (2 times 3 is six, obviously): it would have been better to demonstrate 1.55×2.35 or something. But you see how it goes: we add $\log(2)$ and $\log(3)$ to get $\log(6) = \log(2×3)$. For 1.55×2.35, the slider would show a position between 3.6 and 3.7. The calculator on my $30 Nokia phone gives me 3.6425. So, yes, it’s not far off. However, it’s hard to imagine that engineers and scientists actually used these slide rules over the past 300 years or so, if not longer.
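To make the principle concrete, here is a minimal Python sketch of what the slide rule does mechanically: it adds two lengths that are proportional to logarithms and reads off the number at the combined length. The function name `slide_rule_multiply` is just an illustrative choice, not anything standard.

```python
import math

def slide_rule_multiply(a, c):
    """Multiply two positive numbers by adding their base-10 logarithms."""
    # Each factor sits at a distance along the scale proportional to its logarithm.
    total_distance = math.log10(a) + math.log10(c)
    # Reading the scale at the combined distance converts back to a number.
    return 10 ** total_distance

product = slide_rule_multiply(1.55, 2.35)
print(round(product, 4))  # close to 1.55 * 2.35, up to floating-point rounding
```

A real slide rule only lets you read two or three significant digits, of course, which is why the slider only tells you the answer lies between 3.6 and 3.7.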

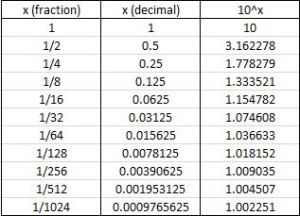

Of course, Briggs’ tables are more accurate. It’s quite amazing really: he calculated the logarithms of 30,000 (natural) numbers to fourteen decimal places. It’s quite instructive to check how he did that: all he did, basically, was to calculate successive square roots of 10.

Huh?

Yes. The secret behind it is the basic rule of exponentiation: exponentiation is repeated multiplication, and so we can write $a^{m+n} = a^m a^n$ and, more importantly, $a^{m-n} = a^m a^{-n} = a^m/a^n$. Because Briggs used the common base 10, we should write $10^{m-n} = 10^m/10^n$. Now Briggs had a table with the successive square roots of 10, like the one below (it’s only six significant digits behind the decimal point, not fourteen, but I just want to demonstrate the principle here), and so that’s basically what he used to calculate the logarithm (to base 10) of 30,000 numbers! Talk about patience! Can you imagine him doing that, day after day, week after week, month after month, year after year? Wow!
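If you want to regenerate such a table of successive square roots yourself, a few lines of Python will do. This is only a sketch: the function name and the choice of ten rows are my own assumptions, and Briggs obviously worked by hand and to far more digits.

```python
import math

def successive_square_roots(base=10.0, steps=10):
    """Return (exponent, value) pairs for base**(1/2), base**(1/4), and so on."""
    table = []
    value = base
    exponent = 1.0
    for _ in range(steps):
        value = math.sqrt(value)  # take the square root of the previous entry
        exponent /= 2             # which halves the exponent
        table.append((exponent, value))
    return table

table = successive_square_roots()
for exponent, value in table:
    print(f"10^(1/{int(1 / exponent)}) = {value:.6f}")
```

The rows for 1/2, 1/4, 1/32, 1/64 and 1/256 are the ones used in the worked example for log(2).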

So how did he do it? Well… Let’s do it for $x = \log_{10}(2) = \log(2)$. So we need to find some $x$ for which $10^x = 2$. From the table above, it’s obvious that $\log(2)$ cannot be 1/2 (= 0.5), because $10^{1/2} = 3.162278$, so that’s too big (bigger than 2). Hence, $x = \log(2)$ must be smaller than 0.5 = 1/2. On the other hand, we can see that $x$ will be bigger than 1/4 = 0.25 because $10^{1/4} = 1.778279$, and so that’s less than 2.

In short, $x = \log(2)$ will be between 0.25 (= 1/4) and 0.5. What Briggs did then, is to take that $10^{1/4}$ factor out using the $10^{m-n} = 10^m/10^n$ formula indeed:

$$10^{x-0.25} = 10^x/10^{0.25} = 2/1.778279 = 1.124683$$

If you’re panicking already, relax. Just sit back. What we’re doing here, in this first step, is to write 2 as

$$2 = 10^x = 10^{[0.25 + (x - 0.25)]} = 10^{1/4} \cdot 10^{x-0.25} = (1.778279)(1.124683)$$

[If you’re in doubt, just check using your calculator.] We now need $\log(10^{x-0.25}) = \log(1.124683)$. Now, 1.124683 is between 1.154782 and 1.074608 in the table. So we’ll use the lower value ($10^{1/32}$) to take another factor out. Hence, we do another division: 1.124683/1.074608 = 1.046598. So now we have $2 = 10^x = 10^{[1/4 + 1/32 + (x - 1/4 - 1/32)]} = (1.778279)(1.074608)(1.046598)$.

We now need $\log(10^{x - 1/4 - 1/32}) = \log(1.046598)$. We check the table once again, and see that 1.046598 is bigger than the value for $10^{1/64}$, so now we can take that $10^{1/64}$ value out by doing another division: $10^{x - 1/4 - 1/32}/10^{1/64} = 1.046598/1.036633 = 1.009613$. Wow, this is getting small! However, we can still take an additional factor out because it’s larger than the 1.009035 value in the table (that’s $10^{1/256}$). So we can do another division: 1.009613/1.009035 = 1.000573. So now we have

$$2 = 10^x = 10^{[1/4 + 1/32 + 1/64 + 1/256 + (x - 1/4 - 1/32 - 1/64 - 1/256)]} = 10^{1/4} \cdot 10^{1/32} \cdot 10^{1/64} \cdot 10^{1/256} \cdot 10^{x - 1/4 - 1/32 - 1/64 - 1/256} = (1.778279)(1.074608)(1.036633)(1.009035)(1.000573)$$

Now, the last factor is outside of the range of our table: it’s too small to find a fraction. However, we have a linear approximation based on the gradient for very small exponents $r$: $10^r \approx 1 + 2.302585 \cdot r$. So, in this case, we have $1.000573 = 1 + 2.302585 \cdot r$ and, hence, we can calculate $r$ as roughly 0.000248. [I can show where this approximation comes from: just check my previous posts if you want to know. It’s not difficult.] So, now, we can finally write the result of our iterations:

$$2 = 10^x \approx 10^{(1/4 + 1/32 + 1/64 + 1/256 + 0.000248)}$$

So $\log(2)$ is approximated by 0.25 + 0.03125 + 0.015625 + 0.00390625 + 0.000248 ≈ 0.30103. Now, you can check this easily: it’s essentially correct, to an accuracy of about six digits that is!

Hmm… But how did Briggs calculate these square roots of 10? Well… That was done ‘by cut and try’ apparently! Pf-ff ! Talk of patience indeed ! I think it’s amazing ! And I am sure he must have kept this table with the square roots of 10 in a very safe place ! 🙂

So, why did I show this? Well… I don’t know. Just to pay homage to those 17th century mathematicians, I guess. 🙂 But there’s another point as well. While the argument above basically demonstrated the $a^{m+n} = a^m a^n$ formula or, to be more precise, the $a^{m-n} = a^m/a^n$ formula, it also shows the so-called product rule for logarithms:

$$\log_b(ac) = \log_b(a) + \log_b(c)$$

Indeed, we wrote 2 as a product of individual factors $10^r$ and then we could see that the exponents $r$ in all of these individual factors add up to $\log(2)$. However, the more formal proof is interesting, and much shorter too: 🙂

- Let $m = \log_a(x)$ and $n = \log_a(y)$

- Write in exponent form: $x = a^m$ and $y = a^n$

- Multiply $x$ and $y$: $xy = a^m a^n = a^{m+n}$

- Now take $\log_a$ of both sides: $\log_a(xy) = \log_a(a^{m+n}) = (m+n)\log_a(a) = m + n = \log_a(x) + \log_a(y)$

You’ll notice that we used another rule in this proof, and that’s the so-called power rule for logarithms:

$$\log_a(x^n) = n \log_a(x)$$

This power rule is proved as follows:

- Let $m = \log_a(x)$

- Write in exponent form: $x = a^m$

- Raise both sides to the power of $n$: $x^n = (a^m)^n = a^{mn}$

- Convert back to a logarithmic equation: $\log_a(x^n) = mn$

- Substitute for $m = \log_a(x)$: $\log_a(x^n) = n \log_a(x)$

Are there any other rules?

Yes. Of course, we have the quotient rule:

$$\log_a(x/y) = \log_a(x) - \log_a(y)$$

The proof of this follows the proof of the product rule, and so I’ll let you work on that.

Finally, we have the ‘change-of-base’ rule, which shows us how we can easily switch from one base to another indeed:

$$\log_a b = \frac{\log_c b}{\log_c a}$$

The proof is as follows:

- Let $x = \log_a b$

- Write in exponent form: $a^x = b$

- Take $\log_c$ of both sides and evaluate:

$$\log_c a^x = \log_c b$$

$$x \log_c a = \log_c b$$

$$x = \log_a b = \frac{\log_c b}{\log_c a}$$
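A numerical spot check is no substitute for the proofs, but it is a quick way to convince yourself that the four rules hang together. The sketch below uses arbitrary values for $a$, $x$, $y$ and $n$ (my own picks, nothing special about them) and Python’s standard `math.log(value, base)`.

```python
import math

# Arbitrary illustrative values: base a, arguments x and y, power n.
a, x, y, n = 3.0, 7.5, 2.4, 4

def log_base(value, base):
    """Logarithm of value to the given base, using the standard library."""
    return math.log(value, base)

# Product rule: log_a(xy) = log_a(x) + log_a(y)
product_ok = math.isclose(log_base(x * y, a), log_base(x, a) + log_base(y, a))
# Power rule: log_a(x^n) = n·log_a(x)
power_ok = math.isclose(log_base(x ** n, a), n * log_base(x, a))
# Quotient rule: log_a(x/y) = log_a(x) - log_a(y)
quotient_ok = math.isclose(log_base(x / y, a), log_base(x, a) - log_base(y, a))
# Change of base, going through base 10: log_a(x) = log_10(x) / log_10(a)
change_ok = math.isclose(log_base(x, a), math.log10(x) / math.log10(a))

print(product_ok, power_ok, quotient_ok, change_ok)
```

The comparisons use `math.isclose` rather than `==` because floating-point logarithms only agree up to rounding.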

[I copied these rules and proofs from onlinemathlearning.com, so let me acknowledge that here. :-)]

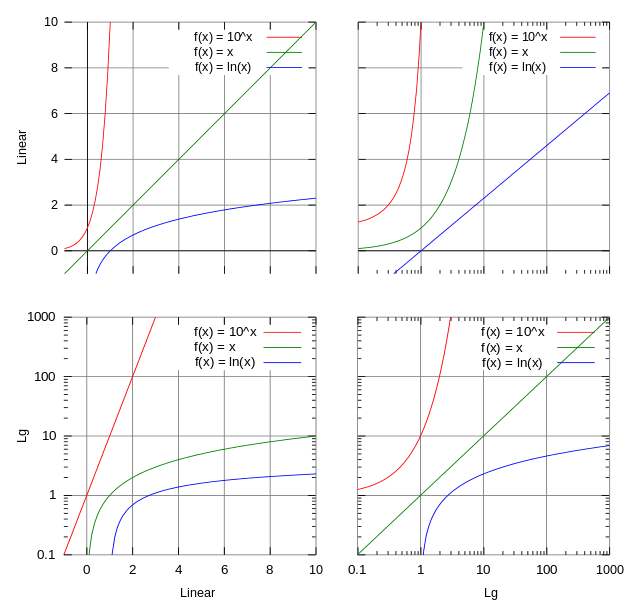

Is that it? Well… Yes. Or no. Let me add a few more lines on these logarithmic scales that you often encounter in various graphs. It’s the same kind of scale as the logarithmic scales on the slide rule that we showed above, but it covers several orders of magnitude, all equally spaced: 1, 10, 100, 1000, etcetera, instead of 0, 1, 2, 3, etcetera. So each unit increase on the scale corresponds to a unit increase of the exponent for a given base (base 10 in this case): $10^1$, $10^2$, $10^3$, etcetera. The illustration (which I took from Wikipedia) compares logarithmic scales to linear ones, for one or both axes.

So, on a logarithmic scale, the distance from 1 to 100 is the same as the distance from 10 to 1000, or the distance from 0.1 to 10, or the distance between any point that’s 100 (= $10^2$) times another point. This is easily explained by the product rule, or the quotient rule rather:

$$\log(10) - \log(0.1) = \log(10^1/10^{-1}) = \log(10^2) = 2$$

$$= \log(1000) - \log(10) = \log(10^3/10^1) = \log(10^2) = 2$$

$$= \log(100) - \log(1) = \log(10^2/10^0) = \log(10^2) = 2$$

And, of course, we could say the same for the distance between 1 and 1000, and 0.1 and 100. The distance on the scale is 3 units here, because one point is 1000 (= $10^3$) times the other point.
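The same check takes only a few lines of Python. This is just a sketch, with `scale_distance` as a made-up helper name: it measures how far apart two values sit on a base-10 logarithmic axis.

```python
import math

def scale_distance(low, high):
    """Distance between two positive values on a base-10 logarithmic scale."""
    return math.log10(high) - math.log10(low)

# Pairs whose ratio is 100: they should all be 2 units apart.
two_unit_pairs = [(0.1, 10), (10, 1000), (1, 100)]
# Pairs whose ratio is 1000: they should all be 3 units apart.
three_unit_pairs = [(1, 1000), (0.1, 100)]

for low, high in two_unit_pairs + three_unit_pairs:
    print(low, high, round(scale_distance(low, high), 6))
```

Only the ratio between the two values matters, not where they sit on the axis, which is exactly what the quotient rule says.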

Why would we use logarithmic scales? Well… Large quantities are often better expressed like that. For example, the Richter scale used to measure the magnitude of an earthquake is just a base-10 logarithmic scale. By magnitude, we mean the amplitude of the seismic waves here. So an earthquake that registers 5.0 units on the Richter scale has a ‘shaking amplitude’ that is 10 times greater than that of an earthquake that registers 4.0. Both are fairly light earthquakes, however: magnitude 7, 8 or 9 are the big killers. Note that, theoretically, we could have earthquakes of a magnitude higher than 10 on the Richter scale: scientists think that the asteroid that created the Chicxulub crater created a cataclysm that would have measured 13 on Richter’s scale, and they associate it with the extinction of the dinosaurs.

The decibel, measuring the level of sound, is another logarithmic unit, so the power associated with 40 decibels is not two times but one hundred times that of 20 decibels!
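Both statements boil down to undoing a logarithm. Here is a small sketch, assuming the usual definition of the decibel as ten times the base-10 logarithm of a power ratio, and treating the Richter magnitude simply as the base-10 logarithm of the shaking amplitude, as in the text above. The function names are my own.

```python
def power_ratio(db_high, db_low):
    """Ratio of sound powers for two levels in decibels (10 dB = a factor of 10 in power)."""
    return 10 ** ((db_high - db_low) / 10)

def amplitude_ratio(magnitude_high, magnitude_low):
    """Ratio of shaking amplitudes for two Richter magnitudes (1 unit = a factor of 10)."""
    return 10 ** (magnitude_high - magnitude_low)

sound = power_ratio(40, 20)          # 40 dB versus 20 dB
quake = amplitude_ratio(5.0, 4.0)    # magnitude 5.0 versus 4.0
print(sound, quake)
```

Note the extra factor of ten in the decibel: a difference of 20 decibels is two factors of ten in power, hence the factor of one hundred.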

Now that we’re talking sound, it seems that logarithmic scales are more ‘natural’ when it comes to human perception in general, but I’ll let you have fun googling some more stuff on that! 🙂