[Note: The text below is a bit of a placeholder for the time being. We intend to do a lot more research in this area over the coming year(s).]

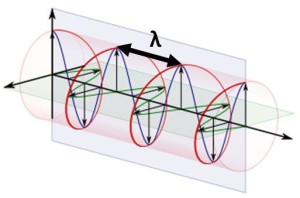

So far, we have been mainly focused on making sure our electron and proton models are consistent. Why? Because we believe the hybrid description of the ring current model should provide a concise and consistent description of diffraction and interference patterns when forcing particles through single or double slits, even if they are going through them one by one. Why? Because we can now interpret the wavefunction as the oscillatory motion of the charge itself (or, in the case of the photon, as an electromagnetic point oscillation), and we can, therefore, analyze it as some physical wave going through the slit(s).

However, none of our papers have actually given such a concise and consistent description: we were just too focused on getting the basics of the model right. Hence, it is about time we try to use our models to explain what needs to be explained: diffraction and interference. In our manuscript[1], we presented this rather simplistic Archimedes’ screw illustration of how the zbw charge might actually move through space when the electron as a whole acquires some (non-relativistic) velocity v.

So is this a moving electron, really? Probably not: we see no reason why the plane of the oscillation – the plane of rotation of the pointlike charge, that is – should be perpendicular to the direction of propagation of the electron as a whole. On the contrary, we think there is every reason to believe the plane of oscillation moves about itself – in some kind of random motion – unless an external electromagnetic field snaps it into place, which may be either up or down, depending on where the magnetic moment was pointing when the electron – a magnetic dipole because of the current inside – entered the external field.

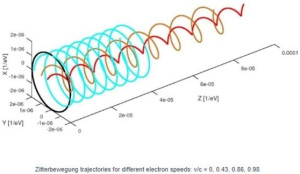

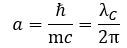

The illustration is useful to help us understand what may or may not be happening to the radius of the oscillation (a = ħ/mc) and to the λ wavelength in the illustration above, which is like the distance between two crests or two troughs of the wave.[2] We should first briefly present a rather particular geometric property of the Zitterbewegung (zbw) motion: the a = ħ/mc radius – the Compton radius of our electron – must decrease as the (classical) velocity of our electron increases. The idea is visualized in the illustration below (for which credit goes to an Italian group of zbw theorists[3]):

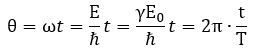

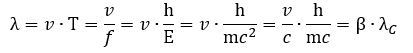

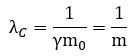

What happens here is quite easy to understand. If the tangential velocity remains equal to c, and the pointlike charge has to cover some horizontal distance as well, then the circumference of its rotational motion must decrease so it can cover the extra distance. But let us analyze it the way we should analyze it, and that’s by using our formulas. Let us first think about our formula for the zbw radius a:
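For reference, here is the radius formula we have in mind, written so that the Compton wavelength λC = h/mc shows up as the circumference 2π·a of the circular motion:

```latex
a = \frac{\hbar}{mc} = \frac{h}{2\pi\,mc} = \frac{\lambda_C}{2\pi},
\qquad
\lambda_C = \frac{h}{mc} = 2\pi a
```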

The λC is the Compton wavelength, so that’s the circumference of the circular motion.[4] How can it decrease? If the electron moves, it will have some kinetic energy, which we must add to the rest energy. Hence, the mass m in the denominator (mc) increases and, because ħ and c are physical constants, a must decrease.[5] How does that work with the frequency? The frequency is proportional to the energy (E = ħ·ω = h·f = h/T) so the frequency – in whatever way you want to measure it – must also increase. The cycle time T, therefore, must decrease. We write:
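Spelled out, and assuming, as the paragraph does, that m is the total mass γ·m0 once the kinetic energy is added to the rest energy, the frequency, cycle-time and radius relations read as follows. Here γ is the Lorentz factor, while m0 (rest mass) and a0 = ħ/m0c (radius at rest) are our own labels:

```latex
E = \gamma m_0 c^2 = \hbar\,\omega = h f = \frac{h}{T}
\quad\Longrightarrow\quad
\omega = \frac{\gamma m_0 c^2}{\hbar}, \qquad
T = \frac{h}{\gamma m_0 c^2}, \qquad
a = \frac{\hbar}{\gamma m_0 c} = \frac{a_0}{\gamma}
```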

So our Archimedes’ screw gets stretched, so to speak. Let us think about what happens here. We get the following formula for our new λ wavelength:
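One way to arrive at the new λ wavelength, assuming it is simply the horizontal distance covered at velocity v during one cycle time T = h/E = h/mc²:

```latex
\lambda = v\,T = v\,\frac{h}{mc^2} = \frac{v}{c}\cdot\frac{h}{mc} = \beta\,\lambda_C
```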

Can the velocity go to c? In the limit, yes. Why? Because the rest mass of the zbw charge is zero. This is very interesting, because we can see that the circumference which describes the two-dimensional oscillation of the zbw charge seems to transform into some wavelength in the process! This relates the geometry of our zbw electron to the geometry of our photon model. However, we should not pay too much attention to this now.
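To get a feel for the numbers, the short Python sketch below evaluates the radius, cycle-time and wavelength formulas for an electron at a few relative velocities β = v/c. It assumes m = γ·m0 and uses CODATA 2018 values for h, c and the electron rest mass; the function name zbw_geometry is ours. As β grows, a and T shrink, and the ratio λ/λC (which equals β) goes to 1.

```python
import math

# CODATA 2018 values (SI units)
H = 6.62607015e-34         # Planck constant h, J·s (exact)
HBAR = H / (2 * math.pi)   # reduced Planck constant ħ, J·s
C = 299_792_458.0          # speed of light c, m/s (exact)
M0 = 9.1093837015e-31      # electron rest mass m0, kg

def zbw_geometry(beta: float) -> dict[str, float]:
    """Radius, Compton wavelength, cycle time and λ for β = v/c.

    Assumes m = γ·m0, i.e. kinetic energy is added to the rest energy.
    """
    if not 0.0 <= beta < 1.0:
        raise ValueError("beta must satisfy 0 <= beta < 1")
    gamma = 1.0 / math.sqrt(1.0 - beta**2)   # Lorentz factor
    m = gamma * M0                            # total mass
    energy = m * C**2                         # E = m·c²
    period = H / energy                       # T = h/E
    return {
        "a": HBAR / (m * C),                  # zbw radius a = ħ/mc
        "lambda_c": H / (m * C),              # λC = h/mc = 2π·a
        "T": period,
        "lambda": beta * C * period,          # λ = v·T
    }

for beta in (0.0, 0.5, 0.9, 0.99, 0.999):
    g = zbw_geometry(beta)
    print(f"beta={beta:<6} a={g['a']:.4e} m  T={g['T']:.4e} s  "
          f"lambda/lambda_C={g['lambda'] / g['lambda_c']:.4f}")
```

At β = 0 the radius comes out at about 0.386×10⁻¹² m, the value quoted in footnote [3].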

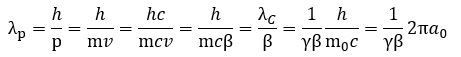

We have a classical velocity (v), so we should now relate the equally classical linear momentum of our particle to this geometry. Hence, we should now talk about the de Broglie wavelength, which we’ll denote by using a separate subscript: λp = h/p.

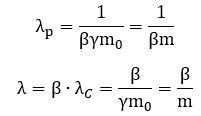

Is it different from the λ wavelength we had already introduced? It is. We have three wavelengths now: the Compton wavelength λC (which is a circumference, actually), that weird horizontal distance λ, and the de Broglie wavelength λp. Can we make sense of that? We can. Let us first re-write the de Broglie wavelength in terms of the Compton wavelength (λC = h/mc), its (relative) velocity β = v/c, and the Lorentz factor γ:

It is a curious function, but it helps us to see what happens to the de Broglie wavelength as m and v both increase as our electron picks up some momentum p = m·v. Its wavelength must actually decrease as its (linear) momentum goes from zero to some much larger value – possibly infinity as v goes to c – and the 1/γβ factor tells us exactly how. The graph below shows how the 1/γβ factor comes down from infinity (+∞) to zero as v goes from 0 to c or, what amounts to the same, as the relative velocity β = v/c goes from 0 to 1. The 1/γ factor (that’s the inverse Lorentz factor) is just a simple circular arc, while the 1/β function is just a regular inverse function (y = 1/x) over the domain β = v/c, which goes from 0 to 1 as v goes from 0 to c. Their product gives us the green curve which, as mentioned, comes down from +∞ to 0.
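The three curves can be tabulated with a few lines of Python. This is a minimal sketch: the function names are ours, and it reads λC in λp = λC/(γβ) as the rest-mass Compton wavelength h/(m0·c), which is what makes the 1/γβ factor appear when the momentum is p = γ·m0·v.

```python
import math

# CODATA 2018 values (SI units)
H = 6.62607015e-34        # Planck constant, J·s
C = 299_792_458.0         # speed of light, m/s
M0 = 9.1093837015e-31     # electron rest mass, kg
LAMBDA_C0 = H / (M0 * C)  # rest-mass Compton wavelength h/(m0·c), about 2.43e-12 m

def inv_gamma(beta: float) -> float:
    """1/γ = sqrt(1 − β²): a quarter of the unit circle for 0 ≤ β ≤ 1."""
    return math.sqrt(1.0 - beta**2)

def inv_beta(beta: float) -> float:
    """1/β: the plain inverse function y = 1/x (undefined at β = 0)."""
    return 1.0 / beta

def inv_gamma_beta(beta: float) -> float:
    """The product 1/(γβ), which falls from +∞ (β → 0) to 0 (β = 1)."""
    return inv_gamma(beta) * inv_beta(beta)

def de_broglie(beta: float) -> float:
    """λp = h/p with relativistic momentum p = γ·m0·v, for 0 < β < 1."""
    gamma = 1.0 / inv_gamma(beta)
    return H / (gamma * M0 * beta * C)

for beta in (0.01, 0.1, 0.5, 0.9, 0.99):
    print(f"beta={beta:<5} 1/gamma={inv_gamma(beta):.4f} "
          f"1/beta={inv_beta(beta):8.3f} 1/(gamma*beta)={inv_gamma_beta(beta):8.3f} "
          f"lambda_p/lambda_C0={de_broglie(beta) / LAMBDA_C0:8.3f}")
```

The last two columns should agree, since λp/λC0 is exactly the 1/γβ factor.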

1/γ, 1/β and 1/γβ graphs

We may add two other results here:

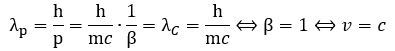

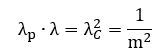

1. The de Broglie wavelength will be equal to λC = h/mc for v = c:

2. We can now relate both the Compton and the de Broglie wavelengths to our new λ = β·λC wavelength, which is that length between the crests or troughs of the wave.[6] We get the following two rather remarkable results:

The product of the λ = β·λC wavelength and the de Broglie wavelength is the square of the Compton wavelength – always! – and their ratio is the square of the relative velocity β = v/c – always! These two results are quite remarkable. Is there an easy geometric interpretation?
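Before looking for that interpretation, here is a quick numerical check of both results. It is a sketch under the same assumptions as the formulas above: m = γ·m0, λC = h/mc, λ = β·λC and λp = h/(m·v) = λC/β. Lengths are measured in units of the rest-mass Compton wavelength h/(m0·c), and the helper name three_wavelengths is hypothetical.

```python
import math

def three_wavelengths(beta: float, lambda_c0: float = 1.0) -> tuple[float, float, float]:
    """Return (λ, λC, λp) for a relative velocity 0 < β < 1.

    lambda_c0 is the rest-mass Compton wavelength h/(m0·c); the default 1.0
    simply measures all lengths in units of it. λC = h/(m·c) uses m = γ·m0.
    """
    gamma = 1.0 / math.sqrt(1.0 - beta**2)
    lambda_c = lambda_c0 / gamma     # λC = h/(γ·m0·c)
    lam = beta * lambda_c            # λ = β·λC
    lambda_p = lambda_c / beta       # λp = h/(m·v) = λC/β
    return lam, lambda_c, lambda_p

for beta in (0.1, 0.5, 0.9):
    lam, lc, lp = three_wavelengths(beta)
    print(f"beta={beta}: lam*lp/lc^2 = {lam * lp / lc**2:.12f}, "
          f"(lam/lp)/beta^2 = {(lam / lp) / beta**2:.12f}")
```

Both printed ratios should come out as 1 up to floating-point rounding, whatever β we pick.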

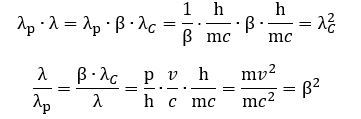

There is if we use natural units. Equating c to 1 gives us natural distance and time units, and equating h to 1 then gives us a natural force unit and, because of Newton’s law, a natural mass unit as well. Why? Because Newton’s F = m·a equation is relativistically correct: a force is what gives some mass an acceleration. Conversely, mass can be defined as the inertia to a change in its state of motion, because any change in motion involves a force and some acceleration. We write: m = F/a. If we re-define our distance, time and force units by equating c and h to 1, then the Compton wavelength (remember: it’s a circumference, really) and the mass of our electron will have a simple inversely proportional relation:

We get equally simple formulas for the de Broglie wavelength and our λ wavelength:
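Written out with h = c = 1 (so that v = β), and assuming m = γ·m0 throughout as in the earlier formulas, the three lengths and their relations become:

```latex
\lambda_C = \frac{1}{m}, \qquad
\lambda_p = \frac{1}{m\beta}, \qquad
\lambda = \frac{\beta}{m}
\qquad\Longrightarrow\qquad
\lambda \cdot \lambda_p = \frac{1}{m^2} = \lambda_C^2,
\qquad
\frac{\lambda}{\lambda_p} = \beta^2
```

Because γ is itself a function of β only, all three lengths depend on m0 and β alone.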

This is quite deep: we have three lengths here – defining all of the geometry of the model – and they all depend on the rest mass of our object and its relative velocity only. They are related through that equation we found above:

This is nothing but the latus rectum formula for an ellipse. What formula? Relax. We didn’t know it either. Just look at the illustration below.[7] The chord through a focus and perpendicular to the major axis of an ellipse is referred to as the latus rectum. One half of that length, the semi-latus rectum p, is the radius of curvature of the osculating circles at the endpoints of the major axis.[8] We then have the usual semi-axes along the major and minor axis (a and b). Now, one can show that the following formula has to be true:

a·p = b²
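To see the correspondence concretely, the sketch below maps our three wavelengths onto an ellipse with semi-major axis a = λp and semi-minor axis b = λC, so that the claim becomes: the semi-latus rectum p equals λ. This particular mapping is our reading of the analogy. Lengths are in units of λC, so λ = β and λp = 1/β, and the function names are hypothetical. The code computes p in two independent ways: as half the latus rectum chord, and as the radius of curvature at a vertex.

```python
import math

def ellipse_from_wavelengths(beta: float) -> tuple[float, float]:
    """Semi-axes (a, b) = (λp, λC) in units of λC, for 0 < β ≤ 1."""
    if not 0.0 < beta <= 1.0:
        raise ValueError("beta must satisfy 0 < beta <= 1")
    return 1.0 / beta, 1.0   # a <-> de Broglie wavelength, b <-> Compton wavelength

def semi_latus_rectum(a: float, b: float) -> float:
    """Half the chord through a focus, perpendicular to the major axis."""
    focus_x = math.sqrt(a**2 - b**2)   # distance from the centre to a focus
    # y-coordinate of the ellipse x²/a² + y²/b² = 1 at x = focus_x
    return b * math.sqrt(1.0 - (focus_x / a) ** 2)

def vertex_curvature_radius(a: float, b: float) -> float:
    """Radius of the osculating circle at (a, 0) for (x, y) = (a·cos t, b·sin t).

    At t = 0: x' = 0, y' = b, x'' = -a, y'' = 0, and the curvature is
    kappa = |x'·y'' - y'·x''| / (x'² + y'²)^(3/2) = a/b².
    """
    dx, dy, ddx, ddy = 0.0, b, -a, 0.0
    kappa = abs(dx * ddy - dy * ddx) / (dx**2 + dy**2) ** 1.5
    return 1.0 / kappa

beta = 0.6
a, b = ellipse_from_wavelengths(beta)
p = semi_latus_rectum(a, b)
print(p, vertex_curvature_radius(a, b), beta)   # all three should agree
```

The mapping only makes sense for β ≤ 1, where λp ≥ λC; at β = 1 the ellipse degenerates into a circle and all three lengths coincide.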

Hence, our three wavelengths obey the same formula. You may think this is interesting (or not), but you should ask: what’s the relation with what we want to talk about here?

You are right: the objective of the discussion above was just to give you a feel for the geometry of our electron as it starts moving about. As you can see, things aren’t all that easy. Talking about some wave going through one slit (diffraction) or two slits (interference) sounds easy, but the nitty-gritty of it is very complicated.

We basically assume the electron – as an electromagnetic oscillation with some pointlike charge whirling around in it – does effectively go through the two slits at the same time, but how exactly it then interacts with the electrons of the material in which these slits were cut is a very complicated matter. We should not exclude, for example, that it may actually not be the same electron that goes in and comes out. And that’s just for starters!

So, yes, we will likely be busy for years to come, and so this page is, effectively, a bit of a placeholder only for the time being! 😊

[1] See: The Emperor Has No Clothes: A Realist Interpretation of Quantum Mechanics (https://vixra.org/abs/1901.0105).

[2] Because it is a wave in two dimensions, we cannot really say there are crests or troughs, but the terminology might help you with the interpretation of the geometry here.

[3] Vassallo, G., Di Tommaso, A. O., and Celani, F., The Zitterbewegung interpretation of quantum mechanics as theoretical framework for ultra-dense deuterium and low energy nuclear reactions, in: Journal of Condensed Matter Nuclear Science, 2017, Vol. 24, pp. 32-41. Don’t worry about the rather weird distance scale (1×10⁻⁶ eV⁻¹). Time and distance can be expressed in inverse energy units when using so-called natural units (c = ħ = 1). We are not very fond of this because we think it does not necessarily clarify or simplify relations. Just note that 1×10⁻⁹ eV⁻¹ = 1 GeV⁻¹ ≈ 0.1973×10⁻¹⁵ m. As you can see, the zbw radius is of the order of 2×10⁻⁶ eV⁻¹ in the diagram, so that’s about 0.4×10⁻¹² m, which is what we calculated: a ≈ 0.386×10⁻¹² m.

[4] Hence, the C subscript stands for the C of Compton, not for the speed of light (c).

[5] We advise the reader to always think about proportional (y = kx) and inversely proportional (y = k/x) relations in our exposé, because they are not always intuitive.

[6] We should emphasize, once again, that our two-dimensional wave has no real crests or troughs: λ is just the distance between two points whose argument is the same, except for a phase difference equal to n·2π (n = 1, 2,…).

[7] Source: Wikimedia Commons (By Ag2gaeh – Own work, CC BY-SA 4.0, https://commons.wikimedia.org/w/index.php?curid=57428275).

[8] The endpoints are also known as the vertices of the ellipse. As for the concept of an osculating circle, that’s the circle which, among all tangent circles at the given point, approaches the curve most tightly. It was named circulus osculans – which is Latin for ‘kissing circle’ – by Gottfried Wilhelm Leibniz. You know him, right? Apart from being a polymath and a philosopher, he was also a great mathematician. In fact, he invented differential and integral calculus, independently of Isaac Newton.
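The unit conversion in footnote [3] can be checked with a few lines of Python. This sketch assumes the CODATA 2018 values ħc ≈ 197.3269804 MeV·fm and me·c² ≈ 0.51099895 MeV; the constant and function names are ours.

```python
# ħc ≈ 197.3269804 MeV·fm (CODATA 2018), expressed in eV·m
HBAR_C_EV_M = 197.3269804e6 * 1e-15
ELECTRON_REST_ENERGY_EV = 0.51099895e6   # me·c² in eV

def inverse_ev_to_m(x_inv_ev: float) -> float:
    """Convert a length given in eV⁻¹ (natural units, c = ħ = 1) to metres."""
    return x_inv_ev * HBAR_C_EV_M

gev_inv = inverse_ev_to_m(1e-9)                 # 1 GeV⁻¹ = 1×10⁻⁹ eV⁻¹
a_natural = 1.0 / ELECTRON_REST_ENERGY_EV       # a = ħ/mc = 1/m when c = ħ = 1
print(f"1 GeV^-1 = {gev_inv:.4e} m")
print(f"a = {a_natural:.4e} eV^-1 = {inverse_ev_to_m(a_natural):.4e} m")
```

The zbw radius comes out at roughly 1.96×10⁻⁶ eV⁻¹, consistent with the order of magnitude read off the diagram.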