Pre-script (dated 26 June 2020): This post has become less relevant (even irrelevant, perhaps) because my views on all things quantum-mechanical have evolved significantly as a result of my progression towards a more complete realist (classical) interpretation of quantum physics. In addition, some of the material was removed by a dark force (that also created problems with the layout, I see now). In any case, we recommend you read our recent papers. I keep blog posts like these mainly because I want to keep track of where I came from. I might review them one day, but I currently don’t have the time or energy for it. 🙂

Original post:

We’ve covered a lot of ground in the previous post, but we’re not quite there yet. We need to look at a few more things in order to gain some kind of ‘physical’ understanding of Maxwell’s equations, as opposed to a merely ‘mathematical’ understanding. That will probably disappoint you. In fact, you probably wonder why one needs to know about Gauss’ and Stokes’ Theorems if the only objective is to ‘understand’ Maxwell’s equations.

To some extent, your skepticism is justified. It’s already quite something to get some feel for those two new operators we’ve introduced in the previous post, i.e. the divergence (div) and curl operators, denoted by ∇• and ∇× respectively. By now, you understand that these two operators act on a vector field, such as the electric field vector E, or the magnetic field vector B, or, in the example we used, the heat flow h, so we should write ∇•(a vector) and ∇×(a vector). And, as for that del operator – i.e. ∇ without the dot (•) or the cross (×) – if there’s one diagram you should be able to draw off the top of your head, it’s the one below, which shows:

- The heat flow vector h, whose magnitude is the thermal energy that passes, per unit time and per unit area, through an infinitesimally small isothermal surface, so we write: h = |h| = ΔJ/ΔA.

- The gradient vector ∇T, whose direction is opposite to that of h, and whose magnitude is proportional to h, so we can write the so-called differential equation of heat flow: h = –κ∇T.

- The components of the vector dot product ΔT = ∇T•ΔR = |∇T|·ΔR·cosθ.

You should also remember that we can re-write that ΔT = ∇T•ΔR = |∇T|·ΔR·cosθ equation – which we can also write as ΔT/ΔR = |∇T|·cosθ – in a more general form:

Δψ/ΔR = |∇ψ|·cosθ

That equation says that the component of the gradient vector ∇ψ along a small displacement ΔR is equal to the rate of change of ψ in the direction of ΔR. And then we had three important theorems, but I can imagine you don’t want to hear about them anymore. So what can we do without them? Let’s have a look at Maxwell’s equations again and explore some linkages.
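If you want to convince yourself of that Δψ/ΔR = |∇ψ|·cosθ relation, a quick numerical check may help. What follows is only a minimal sketch: the scalar field `psi`, the point and the direction are all made up for illustration, and the derivatives are estimated with central differences rather than computed exactly.

```python
import math

def psi(x, y, z):
    # Hypothetical scalar field, chosen for illustration only.
    return x * x + 3.0 * y + math.sin(z)

def gradient(f, p, h=1e-6):
    """Central-difference estimate of the gradient of f at point p."""
    x, y, z = p
    return (
        (f(x + h, y, z) - f(x - h, y, z)) / (2 * h),
        (f(x, y + h, z) - f(x, y - h, z)) / (2 * h),
        (f(x, y, z + h) - f(x, y, z - h)) / (2 * h),
    )

def rate_of_change(f, p, direction, step=1e-6):
    """Δψ/ΔR for a small displacement of length `step` along `direction`."""
    norm = math.sqrt(sum(d * d for d in direction))
    unit = [d / norm for d in direction]
    ahead = [pi + step * ui for pi, ui in zip(p, unit)]
    behind = [pi - step * ui for pi, ui in zip(p, unit)]
    return (f(*ahead) - f(*behind)) / (2 * step)

def gradient_component(f, p, direction):
    """|∇ψ|·cosθ, computed as the dot product of ∇ψ with the unit vector."""
    norm = math.sqrt(sum(d * d for d in direction))
    g = gradient(f, p)
    return sum(gi * di / norm for gi, di in zip(g, direction))

point = (1.0, 2.0, 0.5)       # arbitrary point
direction = (1.0, 1.0, 1.0)   # arbitrary direction of ΔR

lhs = rate_of_change(psi, point, direction)      # Δψ/ΔR
rhs = gradient_component(psi, point, direction)  # |∇ψ|·cosθ
print(lhs, rhs)
```

The two printed numbers should agree up to the small error of the finite-difference estimate, whatever direction you pick.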

Curl-free and divergence-free fields

From what I wrote in my previous post, you should remember that:

- The curl of a vector field C (i.e. ∇×C) represents its circulation, i.e. its (infinitesimal) rotation.

- Its divergence (i.e. ∇•C) represents the outward flux out of an (infinitesimal) volume around the point we’re considering.

Back to Maxwell’s equations:

(1) ∇•E = ρ/ε0

(2) ∇×E = –∂B/∂t

(3) ∇•B = 0

(4) c²∇×B = ∂E/∂t + j/ε0

Let’s start at the bottom, i.e. with equation (4). It says that a changing electric field (i.e. ∂E/∂t ≠ 0) and/or a (steady) electric current (j/ε0) will cause some circulation of B, i.e. the magnetic field. It’s important to note that (a) the electric field has to change and/or (b) that electric charges (positive or negative) have to move in order to cause some circulation of B: a steady electric field will not result in any magnetic effects.

This brings us to the first and easiest of all the circumstances we can analyze: the static case. In that case, the time derivatives ∂E/∂t and ∂B/∂t are zero, and Maxwell’s equations reduce to:

- ∇•E = ρ/ε0. In this equation, we have ρ, which represents the so-called charge density, which describes the distribution of electric charges in space: ρ = ρ(x, y, z). To put it simply: ρ is the ‘amount of charge’ (which we’ll denote by Δq) per unit volume at a given point. Hence, if we consider a small volume (ΔV) located at point (x, y, z) in space – an infinitesimally small volume, in fact (as usual) – then we can write: Δq = ρ(x, y, z)ΔV. [As for ε0, you already know this is a constant which ensures all units are ‘compatible’.] This equation basically says we have some flux of E, the exact amount of which is determined by the charge density ρ or, more generally, by the charge distribution in space.

- ∇×E = 0. That means that the curl of E is zero: everywhere, and always. So there’s no circulation of E. We call this a curl-free field.

- ∇•B = 0. That means that the divergence of B is zero: everywhere, and always. So there’s no flux of B. None. We call this a divergence-free field.

- c²∇×B = j/ε0. So here we have steady current(s) causing some circulation of B, the exact amount of which is determined by the (total) current j. [What about that c² factor? Well… We talked about that before: magnetism is, basically, a relativistic effect, and so that’s where that factor comes from. I’ll just refer you to what Feynman writes about this in his Lectures, and warmly recommend reading it, because it’s really quite interesting: it gave me at least a much deeper understanding of what it’s all about, and so I hope it will help you as much.]

Now you’ll say: why bother with all these difficult mathematical constructs if we’re going to consider curl-free and divergence-free fields only. Well… B is not curl-free, and E is not divergence-free. To be precise:

- E is a field with zero curl and a given divergence, and

- B is a field with zero divergence and a given curl.

Yeah, but why can’t we analyze fields that have both curl and divergence? The answer is: we can, and we will, but we have to start somewhere, and so we start with an easier analysis first.
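To get some feel for what ‘zero curl and a given divergence’ and ‘zero divergence and a given curl’ look like, here is a small numerical sketch. The two fields are hypothetical toy examples of my own choosing (they are not solutions for any particular charge or current distribution), and the derivatives are central-difference estimates.

```python
def partial(F, component, axis, p, h=1e-5):
    """Central-difference estimate of ∂F[component]/∂(axis) at point p."""
    hi = list(p)
    lo = list(p)
    hi[axis] += h
    lo[axis] -= h
    return (F(*hi)[component] - F(*lo)[component]) / (2 * h)

def divergence(F, p):
    """∇•F = ∂Fx/∂x + ∂Fy/∂y + ∂Fz/∂z."""
    return sum(partial(F, i, i, p) for i in range(3))

def curl(F, p):
    """∇×F, component by component."""
    return (
        partial(F, 2, 1, p) - partial(F, 1, 2, p),
        partial(F, 0, 2, p) - partial(F, 2, 0, p),
        partial(F, 1, 0, p) - partial(F, 0, 1, p),
    )

def radial(x, y, z):
    # Toy 'E-like' field: points away from the origin, no rotation.
    return (x, y, z)

def swirl(x, y, z):
    # Toy 'B-like' field: circulates around the z-axis, no net outflow.
    return (-y, x, 0.0)

point = (0.4, -1.3, 2.0)  # arbitrary point
div_radial, curl_radial = divergence(radial, point), curl(radial, point)
div_swirl, curl_swirl = divergence(swirl, point), curl(swirl, point)

print("radial:", div_radial, curl_radial)  # divergence 3, curl zero
print("swirl: ", div_swirl, curl_swirl)    # divergence 0, curl along z
```

The radial field has divergence but no curl, and the swirling field has curl but no divergence, which is the split we have between E and B in the static case.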

Electrostatics and magnetostatics

The first thing you should note is that, in the static case (i.e. when charges and currents are static), there is no interdependence between E and B. The two fields are not interconnected, so to say. Therefore, we can neatly separate them into two pairs:

- Electrostatics: (1) ∇•E = ρ/ε0 and (2) ∇×E = 0.

- Magnetostatics: (1) ∇×B = j/(c²ε0) and (2) ∇•B = 0.

Now, I won’t go through all of the particularities involved. In fact, I’ll refer you to a real physics textbook on that (like Feynman’s Lectures indeed). My aim here is to use these equations to introduce some more math and to gain a better understanding of vector calculus – an understanding that goes, in fact, beyond the math (i.e. a ‘physical’ understanding, as Feynman terms it).

At this point, I have to introduce two additional theorems. They are nice and easy to understand (although not so easy to prove, and so I won’t):

Theorem 1: If we have a vector field – let’s denote it by C – and we find that its curl is zero everywhere, then C must be the gradient of something. In other words, there must be some scalar field ψ (psi) such that C is equal to the gradient of ψ. It’s easier to write this down as follows:

If ∇×C = 0, there is a ψ such that C = ∇ψ.

Theorem 2: If we have a vector field – let’s denote it by D, just to introduce yet another letter – and we find that its divergence is zero everywhere, then D must be the curl of some vector field A. So we can write:

If ∇•D = 0, there is an A such that D = ∇×A.

We can apply this to the situation at hand:

- For E, there is some scalar potential Φ such that E = –∇Φ. [Note that we could integrate the minus sign in Φ, but we leave it there as a reminder that the situation is similar to that of heat flow. It’s a matter of convention really: E ‘flows’ from higher to lower potential.]

- For B, there is a so-called vector potential A such that B = ∇×A.

The whole game is then to compute Φ and A everywhere. We can then take the gradient of Φ, and the curl of A, to find the electric and magnetic field respectively, at every single point in space. In fact, most of the second Volume of Feynman’s Lectures is devoted to that, so I’ll refer you to that if you’re interested. As said, my goal here is just to introduce the basics of vector calculus, so you gain a better understanding of physics, i.e. an understanding which goes beyond the math.
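To make the ‘take the gradient of Φ, and the curl of A’ recipe a bit more tangible, here is a minimal sketch. It assumes the textbook potential of a point charge at the origin, in units where q/(4πε0) = 1, and a hypothetical vector potential that I picked only because its curl is a uniform field. Neither choice comes from a specific problem in this post.

```python
import math

def phi(x, y, z):
    # Potential of a point charge at the origin, in units where q/(4πε0) = 1.
    return 1.0 / math.sqrt(x * x + y * y + z * z)

def vector_potential(x, y, z):
    # Hypothetical A, chosen so that its curl is the uniform field (0, 0, 1).
    return (-0.5 * y, 0.5 * x, 0.0)

def shifted(p, axis, delta):
    """Copy of point p with coordinate `axis` shifted by `delta`."""
    q = list(p)
    q[axis] += delta
    return q

def minus_gradient(f, p, h=1e-5):
    """E = –∇Φ, estimated with central differences."""
    return tuple(
        -(f(*shifted(p, axis, h)) - f(*shifted(p, axis, -h))) / (2 * h)
        for axis in range(3)
    )

def curl(F, p, h=1e-5):
    """B = ∇×A, estimated with central differences."""
    def d(component, axis):
        hi = F(*shifted(p, axis, h))[component]
        lo = F(*shifted(p, axis, -h))[component]
        return (hi - lo) / (2 * h)
    return (d(2, 1) - d(1, 2), d(0, 2) - d(2, 0), d(1, 0) - d(0, 1))

point = (1.0, 2.0, 2.0)  # distance r = 3 from the origin
E = minus_gradient(phi, point)
B = curl(vector_potential, point)

# For Φ = 1/r, the field should be the position vector divided by r³ = 27.
expected_E = tuple(c / 27.0 for c in point)
print(E, expected_E)
print(B)
```

The computed E points radially outward and falls off as 1/r², as it should for a point charge, while the curl of the chosen A gives a uniform field along the z-axis.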

Electrodynamics

We’re almost done. Electrodynamics is, of course, much more complicated than the static case, but I don’t have the intention to go too much in detail here. The important thing is to see the linkages in Maxwell’s equations. I’ve highlighted them below:

I know this looks messy, but it’s actually not so complicated. The interactions between the electric and magnetic fields are governed by equations (2) and (4), so equations (1) and (3) are just ‘statics’. Something needs to trigger it all, of course. I assume it’s an electric current (that’s the arrow marked by [0]).

Indeed, equation (4), i.e. c²∇×B = ∂E/∂t + j/ε0, implies that a changing electric current – an accelerating electric charge, for instance – will cause the circulation of B to change. More specifically, we can write: ∂[c²∇×B]/∂t = ∂[j/ε0]/∂t. However, as the circulation of B changes, the magnetic field B itself must be changing. Hence, we have a non-zero time derivative of B (∂B/∂t ≠ 0). But, then, according to equation (2), i.e. ∇×E = –∂B/∂t, we’ll have some circulation of E. That’s the dynamics marked by the red arrows [1].

Now, assuming that ∂B/∂t is not constant (because that electric charge accelerates and decelerates, for example), the time derivative ∂E/∂t will be non-zero too (∂E/∂t ≠ 0). But so that feeds back into equation (4), according to which a changing electric field will cause the circulation of B to change. That’s the dynamics marked by the yellow arrows [2].

The ‘feedback loop’ is closed now: I’ve just explained how an electromagnetic field (or radiation) actually propagates through space. Below you can see one of the fancier animations you can find on the Web. The blue oscillation is supposed to represent the oscillating magnetic vector, while the red oscillation is supposed to represent the electric field vector. Note how the effect travels through space.

This is, of course, an extremely simplified view. To be precise, it assumes that the light wave (that’s what an electromagnetic wave actually is) is linearly (aka plane) polarized, as the electric (and magnetic) field oscillates along a straight line. If we choose the direction of propagation as the z-axis of our reference frame, the electric field vector will oscillate in the xy-plane. In other words, the electric field will have an x- and a y-component, which we’ll denote as Ex and Ey respectively, as shown in the diagrams below, which give various examples of linear polarization.

Light is, of course, not necessarily plane-polarized. The animation below shows circular polarization, which is a special case of the more general elliptical polarization condition.

The relativity of magnetic and electric fields

Allow me to make a small digression here, which has more to do with physics than with vector analysis. You’ll have noticed that we didn’t talk about the magnetic field vector anymore when discussing the polarization of light. Indeed, when discussing electromagnetic radiation, most – if not all – textbooks start by noting we have E and B vectors, but then proceed to discuss the E vector only. Where’s the magnetic field? We need to note two things here.

1. First, I need to remind you that the force on any electrically charged particle (and note we only have electric charge: there’s no such thing as a magnetic charge according to Maxwell’s third equation) consists of two components. Indeed, the total electromagnetic force (aka Lorentz force) on a charge q is:

F = q(E + v×B) = qE + q(v×B) = FE + FM

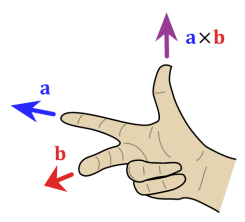

The velocity vector v is the velocity of the charge: if the charge is not moving, then there’s no magnetic force. The illustration below shows you the components of the vector cross product that, by now, you’re fully familiar with. Indeed, in my previous post, I gave you the expressions for the x, y and z coordinate of a cross product, but there’s a geometrical definition as well:

v×B = |v||B|sin(θ)n

The magnetic force FM is q(v×B) = qv×B = q|v||B|sin(θ)n. The unit vector n determines the direction of the force, which is determined by that right-hand rule that, by now, you also are fully familiar with: it’s perpendicular to both v and B (cf. the two 90° angles in the illustration). Just to make sure, I’ve also added the right-hand rule illustration above: check it out, as it does involve a bit of arm-twisting in this case. 🙂

In any case, the point to note here is that there’s only one electromagnetic force on the particle. While we distinguish between an E and a B vector, the E and B vectors depend on our reference frame. Huh? Yes. The velocity v is relative: we specify the magnetic field in a so-called inertial frame of reference here. If we were moving with the charge, the magnetic force would, quite simply, disappear, because we’d have a v equal to zero, so we’d have v×B = 0×B = 0. Of course, all other charges (i.e. all ‘stationary’ and ‘moving’ charges that were causing the field in the first place) would have different velocities as well and, hence, our E and B vectors would look very different too: they would come in a ‘different mixture’, as Feynman puts it. [If you’d want to know in what mixture exactly, I’ll refer you to Feynman: it’s a rather lengthy analysis (five rather dense pages, in fact), but I can warmly recommend it: in fact, you should go through it if only to test your knowledge at this point, I think.]
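The F = q(E + v×B) formula is easy to play with. Below is a minimal sketch using made-up field and velocity values (all numbers are hypothetical, and units are left out on purpose). It shows that the magnetic part is perpendicular to v and that it vanishes when v is zero. It does not attempt the transformation of E and B between reference frames.

```python
def cross(a, b):
    """Cross product a×b of two 3-component vectors."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def lorentz_force(q, E, v, B):
    """Return (F_E, F_M, F) with F_E = qE, F_M = q(v×B) and F = F_E + F_M."""
    F_E = tuple(q * e for e in E)
    F_M = tuple(q * c for c in cross(v, B))
    F = tuple(fe + fm for fe, fm in zip(F_E, F_M))
    return F_E, F_M, F

# Hypothetical values, for illustration only.
q = 1.0
E = (0.0, 0.5, 0.0)
B = (0.0, 1.0, 0.0)
v = (1.0, 0.0, 0.0)

F_E, F_M, F = lorentz_force(q, E, v, B)
print(F_M)          # (0.0, 0.0, 1.0): perpendicular to both v and B
print(dot(F_M, v))  # 0.0: the magnetic force does no work on the charge

# Same fields, but with the charge at rest: only the electric part remains.
_, F_M_rest, F_rest = lorentz_force(q, E, (0.0, 0.0, 0.0), B)
print(F_M_rest, F_rest)
```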

You’ll say: So what? That doesn’t answer the question above. Why do physicists leave out the magnetic field vector in all those illustrations?

You’re right. I haven’t answered the question. This first remark is more like a warning. Let me quote Feynman on it:

“Since electric and magnetic fields appear in different mixtures if we change our frame of reference, we must be careful about how we look at the fields E and B. […] The fields are our way of describing what goes on at a point in space. In particular, E and B tell us about the forces that will act on a moving particle. The question “What is the force on a charge from a moving magnetic field?” doesn’t mean anything precise. The force is given by the values of E and B at the charge, and the F = q(E + v×B) formula is not to be altered if the source of E or B is moving: it is the values of E and B that will be altered by the motion. Our mathematical description deals only with the fields as a function of x, y, z, and t with respect to some inertial frame.”

If you allow me, I’ll take this opportunity to insert another warning, one that’s quite specific to how we should interpret this concept of an electromagnetic wave. When we say that an electromagnetic wave ‘travels’ through space, we often tend to think of a wave traveling on a string: we’re smart enough to understand that what is traveling is not the string itself (or some part of the string) but the amplitude of the oscillation: it’s the vertical displacement (i.e. the movement that’s perpendicular to the direction of ‘travel’) that appears first at one place and then at the next and so on and so on. It’s in that sense, and in that sense only, that the wave ‘travels’. However, the problem with this comparison to a wave traveling on a string is that we tend to think that an electromagnetic wave also occupies some space in the directions that are perpendicular to the direction of travel (i.e. the x and y directions in those illustrations on polarization). Now that’s a huge misconception! The electromagnetic field is something physical, for sure, but the E and B vectors do not occupy any physical space in the x and y direction as they ‘travel’ along the z direction!

Let me conclude this digression with Feynman’s conclusion on all of this:

“If we choose another coordinate system, we find another mixture of E and B fields. However, electric and magnetic forces are part of one physical phenomenon—the electromagnetic interactions of particles. While the separation of this interaction into electric and magnetic parts depends very much on the reference frame chosen for the description, the complete electromagnetic description is invariant: electricity and magnetism taken together are consistent with Einstein’s relativity.”

2. You’ll say: I don’t give a damn about other reference frames. Answer the question. Why are magnetic fields left out of the analysis when discussing electromagnetic radiation?

The answer to that question is very mundane. When we know E (in one or the other reference frame), we also know B, and, while B is as ‘essential’ as E when analyzing how an electromagnetic wave propagates through space, the truth is that the magnitude of B is only a very tiny fraction of that of E.

Huh? Yes. That animation with these oscillating blue and red vectors is very misleading in this regard. Let me be precise here and give you the formulas:

E = –(q/4πε0c²)·d²eR′/dt²

B = –eR′×E/c

I’ve analyzed these formulas in one of my other posts (see, for example, my first post on light and radiation), and so I won’t repeat myself too much here. However, let me recall the basics of it all. The eR′ vector is a unit vector pointing in the apparent direction of the charge. When I say ‘apparent’, I mean that this unit vector is not pointing towards the present position of the charge, but at where it was a little while ago, because this ‘signal’ can only travel from the charge to where we are now at the speed of the wave, i.e. at the speed of light c. That’s why we prime the (radial) vector R also (so we write R′ instead of R). So that unit vector wiggles up and down and, as the formula makes clear, it’s the second-order derivative of that movement which determines the electric field. That second-order derivative is the acceleration vector, and it can be substituted for the vertical component of the acceleration of the charge that caused the radiation in the first place but, again, I’ll refer you to my post on that, as it’s not the topic we want to cover here.

What we do want to look at here, is that formula for B: it’s the cross product of that eR′ vector (the minus sign just reverses the direction of the whole thing) and E divided by c. We also know that the E and eR′ vectors are at right angles to each other, so the sine factor (sinθ) is 1 (or –1) too. In other words, the magnitude of B is |E|/c = E/c, which is a very tiny fraction of E indeed (remember: c ≈ 3×10⁸ m/s).
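To put some numbers on that B = E/c relation, here is a small sketch in SI units. The field amplitude and the speed of the charge are hypothetical values I picked for illustration. The magnetic force is computed from the Lorentz formula for a charge moving at right angles to B, so its magnitude is q·v·B.

```python
C = 299_792_458.0  # speed of light (m/s)

def wave_forces(q, E_amplitude, v):
    """Return (B, F_E, F_M) for a charge q moving at speed v, with v ⟂ B."""
    B_amplitude = E_amplitude / C    # |B| = |E|/c
    F_electric = q * E_amplitude     # |F_E| = qE
    F_magnetic = q * v * B_amplitude # |F_M| = qvB = qvE/c
    return B_amplitude, F_electric, F_magnetic

q = 1.602176634e-19  # magnitude of the electron charge (coulomb)
E_amp = 1000.0       # hypothetical field amplitude (V/m)
v = 1.0e4            # hypothetical speed of the charge (m/s)

B_amp, F_E, F_M = wave_forces(q, E_amp, v)
ratio = F_M / F_E    # equals v/c

print(B_amp)  # a few microtesla for a field of 1000 V/m
print(ratio)  # v/c, a tiny number for any non-relativistic charge
```

Note that the comparison between the two forces is the meaningful one here: the ratio of the magnetic to the electric force is v/c, which does not depend on the units we use.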

So… Yes, for all practical purposes, B doesn’t matter all that much when analyzing electromagnetic radiation, and so that’s why physicists will note it but then proceed and look at E only when discussing radiation. Poor B! That being said, the magnetic force may be tiny, but it’s quite interesting. Just look at its direction! Huh? Why? What’s so interesting about it? I am not talking about the direction of B here: I am talking about the direction of the force. Oh… OK… Hmm… Well…

Let me spell it out. Take the force formula: F = q(E + v×B) = qE + q(v×B). When our electromagnetic wave hits something real (I mean anything real, like a wall, or some molecule of gas), it is likely to hit some electron, i.e. an actual electric charge. Hence, the electric and magnetic field should have some impact on it. Now, as we pointed out here, the magnitude of the electric force will be the most important one – by far – and, hence, it’s the electric field that will ‘drive’ that charge and, in the process, give it some velocity v, as shown below. In what direction? Don’t ask stupid questions: look at the equation. FE = qE, so the electric force will have the same direction as E.

But we’ve got a moving charge now and, therefore, the magnetic force comes into play as well! That force is FM = q(v×B) and its direction is given by the right-hand rule: it’s the F above in the direction of the light beam itself. Admittedly, it’s a tiny force, as its magnitude is only F = qvE/c, but it’s there, and it’s what causes the so-called radiation pressure (or light pressure tout court). So, yes, you can start dreaming of fancy solar sailing ships (the illustration below shows one out of Star Trek) but… Well… Good luck with it! The force is very tiny indeed and, of course, don’t forget there’s light coming from all directions in space!

Jokes aside, it’s a real and interesting effect indeed, but I won’t say much more about it. Just note that we are really talking about the momentum of light here, and it’s as ‘real’ as any momentum. In an interesting analysis, Feynman calculates this momentum and, rather unsurprisingly (but please do check out how he calculates these things, as it’s quite interesting), the same 1/c factor comes into play once more: the momentum (p) that’s being delivered when light hits something real is equal to 1/c of the energy that’s being absorbed. So, if we denote the energy by W (in order to not create confusion with the E symbol we’ve used already), we can write: p = W/c.

Now I can’t resist one more digression. We’re, obviously, fully in classical physics here and, hence, we shouldn’t mention anything quantum-mechanical here. That being said, you already know that, in quantum physics, we’ll look at light as a stream of photons, i.e. ‘light particles’ that also have energy and momentum. The formula for the energy of a photon is given by the Planck relation: E = hf. The h factor is Planck’s constant here – also quite tiny, as you know – and f is the light frequency of course. Oh – and I am switching back to the symbol E to denote energy, as it’s clear from the context I am no longer talking about the electric field here.

Now, you may or may not remember that relativity theory yields the following relations between momentum and energy:

E² – p²c² = m0²c⁴ and/or pc = Ev/c

In these equations, m0 stands, obviously, for the rest mass of the particle, i.e. its mass at v = 0. Now, photons have zero rest mass, but their speed is c. Hence, both equations reduce to p = E/c, so that’s the same as what Feynman found out above: p = W/c.
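If you’d like to check these relations numerically, the sketch below does so for a massive particle and then takes the photon case. It assumes the standard relativistic expressions E = γ·m0·c² and p = γ·m0·v, which are not spelled out in this post, and the speed and the light frequency are hypothetical values.

```python
import math

C = 299_792_458.0          # speed of light (m/s)
PLANCK_H = 6.62607015e-34  # Planck's constant (J·s)

def energy_and_momentum(m0, v):
    """Relativistic energy and momentum of a particle with rest mass m0."""
    gamma = 1.0 / math.sqrt(1.0 - (v / C) ** 2)
    return gamma * m0 * C ** 2, gamma * m0 * v

m0 = 9.1093837e-31  # electron rest mass (kg), approximate value
v = 0.6 * C         # hypothetical speed

E, p = energy_and_momentum(m0, v)
invariant_lhs = E ** 2 - (p * C) ** 2  # E² – p²c²
invariant_rhs = (m0 * C ** 2) ** 2     # m0²c⁴
print(invariant_lhs, invariant_rhs)
print(p * C, E * v / C)                # pc = Ev/c

# Photon: zero rest mass, speed c, energy from the Planck relation E = hf.
f = 5.0e14                  # hypothetical frequency (Hz), visible light
photon_E = PLANCK_H * f
photon_p = photon_E / C     # both relations reduce to p = E/c
print(photon_E, photon_p)
```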

Of course, you’ll say: that’s obvious. Well… No, it’s not obvious at all. We do find the same formula for the momentum of light (p) – which is great, of course – but so we find the same thing coming from very different necks of the woods. The formula for the (relativistic) momentum and energy of particles comes from a very classical analysis of particles – ‘real-life’ objects with mass, a very definite position in space and whatever other properties you’d associate with billiard balls – while that other p = W/c formula comes out of a very long and tedious analysis of light as an electromagnetic wave. The two analytical frameworks couldn’t differ much more, could they? Yet, we come to the same conclusion indeed.

Physics is wonderful. 🙂

So what’s left?

Lots, of course! For starters, it would be nice to show how these formulas for E and B with eR′ in them can be derived from Maxwell’s equations. There’s no obvious relation, is there? You’re right. Yet, they do come out of the very same equations. However, for the details, I have to refer you to Feynman’s Lectures once again – to the second Volume to be precise. Indeed, besides calculating scalar and vector potentials in various situations, a lot of what he writes there is about how to calculate these wave equations from Maxwell’s equations. But so that’s not the topic of this post really. It’s, quite simply, impossible to ‘summarize’ all those arguments and derivations in a single post. The objective here was to give you some idea of what vector analysis really is in physics, and I hope you got the gist of it, because that’s what’s needed to proceed. 🙂

The other thing I left out is much more relevant to vector calculus. It’s about that del operator (∇) again: you should note that it can be used in many more combinations. More in particular, it can be used in combinations involving second-order derivatives. Indeed, till now, we’ve limited ourselves to first-order derivatives only. I’ll spare you the details and just copy a table with some key results:

- (1) ∇•(∇T) = div(grad T) = ∇•∇T = (∇•∇)T = ∇²T = ∂²T/∂x² + ∂²T/∂y² + ∂²T/∂z² = a scalar field

- (2) (∇•∇)h = ∇²h = a vector field

- (3) ∇(∇•h) = grad(div h) = a vector field

- (4) ∇×(∇×h) = curl(curl h) = ∇(∇•h) – ∇²h

- (5) ∇•(∇×h) = div(curl h) = 0 (always)

- (6) ∇×(∇T) = curl(grad T) = 0 (always)

So we have yet another set of operators here: not less than six, to be precise. You may think that we can have some more, like (∇×∇), for example. But… No. A (∇×∇) operator doesn’t make sense. Just write it out and think about it. Perhaps you’ll see why. You can try to invent some more but, if you manage, you’ll see they won’t make sense either. The combinations that do make sense are listed above, all of them.
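The two ‘always zero’ results in that list, div(curl h) = 0 and curl(grad T) = 0, are easy to check numerically. The sketch below does that with central differences. The scalar field `T` and the vector field `h_field` are arbitrary functions I made up for the test, so this illustrates the identities and is in no way a proof.

```python
import math

STEP = 1e-3  # finite-difference step

def partial(f, axis, h=STEP):
    """Return a function that estimates ∂f/∂(axis) with central differences."""
    def df(x, y, z):
        hi = [x, y, z]
        lo = [x, y, z]
        hi[axis] += h
        lo[axis] -= h
        return (f(*hi) - f(*lo)) / (2 * h)
    return df

def grad(T):
    """∇T as a list of three scalar functions."""
    return [partial(T, 0), partial(T, 1), partial(T, 2)]

def div(F):
    """∇•F for a field F given as a list of three scalar functions."""
    dx, dy, dz = partial(F[0], 0), partial(F[1], 1), partial(F[2], 2)
    return lambda x, y, z: dx(x, y, z) + dy(x, y, z) + dz(x, y, z)

def curl(F):
    """∇×F as a list of three scalar functions."""
    Fx, Fy, Fz = F
    return [
        lambda x, y, z: partial(Fz, 1)(x, y, z) - partial(Fy, 2)(x, y, z),
        lambda x, y, z: partial(Fx, 2)(x, y, z) - partial(Fz, 0)(x, y, z),
        lambda x, y, z: partial(Fy, 0)(x, y, z) - partial(Fx, 1)(x, y, z),
    ]

# Arbitrary test fields, for illustration only.
def T(x, y, z):
    return x * x * y + math.sin(z) * y

h_field = [
    lambda x, y, z: x * y * z,
    lambda x, y, z: math.cos(x) + z * z,
    lambda x, y, z: x * y * y,
]

point = (0.3, -0.7, 1.2)
div_curl = div(curl(h_field))(*point)
curl_grad = [component(*point) for component in curl(grad(T))]

print(div_curl)   # zero, up to rounding error
print(curl_grad)  # three zeros, up to rounding error
```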

Now, while all of these combinations make (some) sense, it’s obvious that some of these combinations are more useful than others. More in particular, the first operator, ∇², appears very often in physics and, hence, has a special name: it’s the Laplacian. As you can see, it’s the divergence of the gradient of a function.

Note that the Laplace operator (∇²) can be applied to both scalar as well as vector functions. If we operate with it on a vector, we’ll apply it to each component of the vector function. The Wikipedia article on the Laplace operator shows how and where it’s used in physics, and so I’ll refer to that if you’d want to know more. Below, I’ll just write out the operator itself, as well as how we apply it to a vector:

∇² = ∂²/∂x² + ∂²/∂y² + ∂²/∂z²

∇²h = (∇²hx, ∇²hy, ∇²hz)
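For those who prefer to see the Laplacian at work, here is a minimal numerical sketch. It estimates the three second-order derivatives with the usual second-difference formula, and applies the operator to a scalar field and, component by component, to a vector field. Both fields are hypothetical examples chosen so the exact answer is easy to work out by hand.

```python
def laplacian(f, p, h=1e-3):
    """Second-difference estimate of ∂²f/∂x² + ∂²f/∂y² + ∂²f/∂z² at point p."""
    total = 0.0
    for axis in range(3):
        hi = list(p)
        lo = list(p)
        hi[axis] += h
        lo[axis] -= h
        total += (f(*hi) - 2.0 * f(*p) + f(*lo)) / (h * h)
    return total

def vector_laplacian(components, p):
    """Apply the Laplacian to each component of a vector field."""
    return tuple(laplacian(c, p) for c in components)

# Hypothetical scalar field: T = x² + y² + z², so that ∇²T = 6 everywhere.
def T(x, y, z):
    return x * x + y * y + z * z

# Hypothetical vector field h = (x²y, y³, zx), so that ∇²h = (2y, 6y, 0).
h_field = (
    lambda x, y, z: x * x * y,
    lambda x, y, z: y ** 3,
    lambda x, y, z: z * x,
)

point = (1.0, 2.0, 3.0)
lap_T = laplacian(T, point)
lap_h = vector_laplacian(h_field, point)

print(lap_T)  # close to 6
print(lap_h)  # close to (4, 12, 0)
```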

So that covers (1) and (2) above. What about the other ‘operators’?

Let me start at the bottom. Equations (5) and (6) are just what they are: two results that you can use in some mathematical argument or derivation. Equation (4) is… Well… Similar: it’s an identity that may or may not help one when doing some derivation.

What about (3), i.e. the gradient of the divergence of some vector function? Nothing special. As Feynman puts it: “It is a possible vector field, but there is nothing special to say about it. It’s just some vector field which may occasionally come up.”

So… That should conclude my little introduction to vector analysis, and so I’ll call it a day now. 🙂 I hope you enjoyed it.

Some content on this page was disabled on June 17, 2020 as a result of a DMCA takedown notice from Michael A. Gottlieb, Rudolf Pfeiffer, and The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/

Some content on this page was disabled on June 20, 2020 as a result of a DMCA takedown notice from Michael A. Gottlieb, Rudolf Pfeiffer, and The California Institute of Technology. You can learn more about the DMCA here: