Pre-scriptum (dated 26 June 2020): My views on the true nature of light, matter and the force or forces that act on them have evolved significantly as part of my explorations of a more realist (classical) explanation of quantum mechanics. If you are reading this, then you are probably looking for not-too-difficult reading. In that case, I would suggest you read my re-write of Feynman’s introductory lecture to QM. If you want something shorter, you can also read my paper on what I believe to be the true Principles of Physics. Having said that, I still think there are a few good quotes and thoughts in this post too. 🙂

Original post:

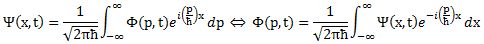

It looks like I am getting ready for my next plunge into Roger Penrose’s Road to Reality. I still need to learn more about those Hamiltonian operators and all that, but I can sort of ‘see’ what they are supposed to do now. However, before I venture off on another series of posts on math instead of physics, I thought I’d briefly present what Feynman identified as ‘loose ends’ in his 1985 Lectures on Quantum Electrodynamics – a few years before his untimely death – and then see if any of those ‘loose ends’ appears less loose today, i.e. some thirty years later.

The three-forces model and coupling constants

All three forces in the Standard Model (the electromagnetic force, the weak force and the strong force) are mediated by force carrying particles: bosons. [Let me talk about the Higgs field later and – of course – I leave out the gravitational force, for which we do not have a quantum field theory.]

Indeed, the electromagnetic force is mediated by the photon; the strong force is mediated by gluons; and the weak force is mediated by W and/or Z bosons. The mechanism is more or less the same for all. There is a so-called coupling (or a junction) between a matter particle (i.e. a fermion) and a force-carrying particle (i.e. the boson), and the amplitude for this coupling to happen is given by a number that is related to a so-called coupling constant.

Let’s give an example straight away – and let’s do it for the electromagnetic force, which is the only force we have been talking about so far. The illustration below shows three possible ways for two electrons moving in spacetime to exchange a photon. This involves two couplings: one emission, and one absorption. The amplitude for an emission or an absorption is the same: it’s –j. So the amplitude here will be (–j)(–j) = j². Note that the two electrons repel each other as they exchange a photon, which reflects the electromagnetic force between them from a quantum-mechanical point of view !

We will have a number like this for all three forces. Feynman writes the coupling constant for the electromagnetic force as j and the coupling constant for the strong force (i.e. the amplitude for a gluon to be emitted or absorbed by a quark) as g. [As for the weak force, he is rather short on that and actually doesn’t bother to introduce a symbol for it. I’ll come back to that later.]

The coupling constant is a dimensionless number and one can interpret it as the unit of ‘charge’ for the electromagnetic and strong force respectively. So the ‘charge’ q of a particle should be read as q times the coupling constant. Of course, we can argue about that unit. The elementary charge for electromagnetism was or is – historically – the charge of the proton (q = +1), but now the proton is no longer elementary: it consists of quarks with charge –1/3 and +2/3 (for the d and u quark respectively). A proton consists of two u quarks and one d quark, so you can write it as uud. So what’s j then? Feynman doesn’t give its precise value but uses an approximate value of –0.1. It is an amplitude so it should be interpreted as a complex number to be added or multiplied with other complex numbers representing amplitudes – so –0.1 is “a shrink to about one-tenth, and half a turn.” [In these 1985 Lectures on QED, which he wrote for a lay audience, he calls amplitudes ‘arrows’, to be combined with other ‘arrows.’ In complex notation, –0.1 = 0.1·e^(iπ) = 0.1·(cos π + i·sin π).]
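To make the ‘shrink and turn’ language concrete, here is a minimal Python sketch. It takes Feynman’s rough value j ≈ –0.1 at face value (an illustrative approximation, not a measured constant) and uses the standard-library cmath module to read off the magnitude and phase of the ‘arrow’. The variable names are mine.

```python
import cmath

# Feynman's rough value for the coupling amplitude j (illustrative only).
j = complex(-0.1, 0.0)

# Polar form: the magnitude is the 'shrink', the phase is the 'turn'.
shrink, turn = cmath.polar(j)
print(f"shrink = {shrink:.2f}, turn = {turn / cmath.pi:.2f} * pi")

# The same arrow written as 0.1 * e^(i*pi).
arrow = 0.1 * cmath.exp(1j * cmath.pi)

# Two couplings (one emission, one absorption) multiply: (-j)(-j) = j^2.
two_couplings = (-j) * (-j)
print(f"amplitude for two couplings = {two_couplings.real:.2f}")
```

Multiplying arrows multiplies the shrinks and adds the turns, which is why two half-turns bring the product back to a positive real number.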

Let me give a precise number. The coupling constant for the electromagnetic force is the so-called fine-structure constant, and it’s usually denoted by the alpha symbol (α). There is a remarkably easy formula for α, which becomes even easier if we fiddle with units to simplify the matter even more. Let me paraphrase Wikipedia on α here, because I have no better way of summarizing it (the summary is also nice as it shows how changing units – replacing the SI units by so-called natural units – can simplify equations):

1. There are three equivalent definitions of α in terms of other fundamental physical constants:

α = e²/(4π·ε0·ħ·c) = µ0·e²·c/(4π·ħ) = ke·e²/(ħ·c)

- where e is the elementary charge (so that’s the electric charge of the proton); ħ = h/2π is the reduced Planck constant; c is the speed of light (in vacuum); ε0 is the electric constant (i.e. the so-called permittivity of free space); µ0 is the magnetic constant (i.e. the so-called permeability of free space); and ke is the Coulomb constant.

2. In the old centimeter-gram-second variant of the metric system (cgs), the unit of electric charge is chosen such that the Coulomb constant (or the permittivity factor) equals 1. Then the expression of the fine-structure constant just becomes: α = e²/(ħ·c).

3. When using so-called natural units, we equate ε0, c and ħ to 1. [That does not mean they are the same, but they just become the unit of measurement for whatever is measured in them. :-)] The value of the fine-structure constant then becomes: α = e²/(4π).

Of course, then it just becomes a matter of choosing a value for e. Indeed, we still haven’t answered the question as to what we should choose as ‘elementary’: 1 or 1/3? If we take 1, then α is just a bit smaller than 0.08 (around 0.0795775 to be somewhat more precise). If we take 1/3 (the value for a quark), then we get a much smaller value: about 0.008842 (I won’t bother too much about the rest of the decimals here). Feynman’s (very) rough approximation of –0.1 obviously uses the historic proton charge, so e = +1.
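The two numbers quoted above are easy to check. The sketch below assumes the natural-unit expression α = e²/(4π), with ε0, c and ħ all set to 1, and simply plugs in e = 1 and e = 1/3. The function name is mine.

```python
import math

def alpha_natural(e):
    """Fine-structure constant in natural units (eps0 = c = hbar = 1)."""
    return e**2 / (4 * math.pi)

alpha_unit = alpha_natural(1.0)         # 'elementary' charge taken as 1
alpha_third = alpha_natural(1.0 / 3.0)  # 'elementary' charge taken as 1/3

print(f"e = 1   -> alpha = {alpha_unit:.7f}")
print(f"e = 1/3 -> alpha = {alpha_third:.6f}")
```

Because α goes with the square of the charge, dividing e by three divides α by nine.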

The coupling constant for the strong force is much bigger. Indeed, if we use the SI units (i.e. one of the three formulas for α under point 1 above), then we get an α equal to some 7.297×10⁻³. Its value is usually quoted through its inverse, 1/α ≈ 137, so α is (roughly) 1/137. In this scheme of things, the coupling constant for the strong force is 1, so that’s 137 times bigger.
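For those who want to see the SI number come out, here is a small sketch that evaluates the three standard SI expressions for α, namely e²/(4π·ε0·ħ·c), µ0·e²·c/(4π·ħ) and ke·e²/(ħ·c). The constants are CODATA 2018 values typed in by hand, and µ0 and ke are derived from ε0 here rather than looked up, which is an assumption of this sketch.

```python
import math

# CODATA 2018 values in SI units (entered by hand for this sketch).
e = 1.602176634e-19      # elementary charge, C
hbar = 1.054571817e-34   # reduced Planck constant, J*s
c = 299792458.0          # speed of light in vacuum, m/s
eps0 = 8.8541878128e-12  # electric constant, F/m

# Derived here from eps0 (assumption of this sketch).
mu0 = 1.0 / (eps0 * c**2)          # magnetic constant
k_e = 1.0 / (4 * math.pi * eps0)   # Coulomb constant

alpha_eps = e**2 / (4 * math.pi * eps0 * hbar * c)
alpha_mu = mu0 * e**2 * c / (4 * math.pi * hbar)
alpha_ke = k_e * e**2 / (hbar * c)

print(f"alpha   = {alpha_eps:.6e}")
print(f"1/alpha = {1 / alpha_eps:.3f}")
```

All three expressions give the same dimensionless number, which is the whole point of calling them equivalent definitions.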

Coupling constants, interactions, and Feynman diagrams

So how does it work? The Wikipedia article on coupling constants makes an extremely useful distinction between the kinetic part and the proper interaction part of an ‘interaction’. Indeed, before we just blindly associate qubits with particles, it’s probably useful to not only look at how photon absorption and/or emission works, but also at how a process as common as photon scattering works (so we’re talking Compton scattering here – discovered in 1923, and it earned Compton a Nobel Prize !).

The illustration below separates the kinetic and interaction part properly: the photon and the electron are both deflected (i.e. the magnitude and/or direction of their momentum (p) changes) – that’s the kinetic part – but, in addition, the frequency of the photon (and, hence, its energy – cf. E = hν) is also affected – so that’s the interaction part I’d say.
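To put a number on that change in frequency, one can use the standard textbook Compton formula, Δλ = (h/(mₑ·c))·(1 – cos θ). The formula is not derived in this post, so take the sketch below as an illustration under that assumption. The incoming frequency is a made-up X-ray value and the function names are mine.

```python
import math

H = 6.62607015e-34      # Planck constant, J*s
M_E = 9.1093837015e-31  # electron mass, kg
C = 299792458.0         # speed of light, m/s

def compton_shift(theta):
    """Increase in photon wavelength (m) for scattering angle theta (rad)."""
    return H / (M_E * C) * (1.0 - math.cos(theta))

def scattered_frequency(nu_in, theta):
    """Photon frequency (Hz) after scattering off an electron at rest."""
    wavelength_in = C / nu_in
    return C / (wavelength_in + compton_shift(theta))

nu_in = 3.0e19  # hypothetical X-ray photon, Hz
nu_out = scattered_frequency(nu_in, math.pi / 2)
print(f"shift at 90 degrees: {compton_shift(math.pi / 2):.4e} m")
print(f"frequency: {nu_in:.3e} Hz -> {nu_out:.3e} Hz")
```

The scattered photon always comes out with a lower (or equal) frequency: the energy difference, cf. E = hν, goes to the electron.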

With an absorption or an emission, the situation is different, but it also involves frequencies (and, hence, energy levels), as shown below: an electron absorbing a higher-energy photon will jump two or more levels as it absorbs the energy by moving to a higher energy level (i.e. a so-called excited state), and when it re-emits the energy, the emitted photon will have higher energy and, hence, higher frequency.

This business of frequencies and energy levels may not be so obvious when looking at those Feynman diagrams, but I should add that these Feynman diagrams are not just sketchy drawings: the time and space axes are precisely defined (time and distance are measured in equivalent units) and so the lines drawn for the particles (photons, electrons, or whatever particle is depicted) do reflect their direction of travel and, hence, convey precious information about both the direction as well as the magnitude of the momentum of those particles. That being said, a Feynman diagram does not care about a photon’s frequency and, hence, its energy (its velocity will always be c, and it has no mass, so we can’t get any information from its trajectory).

Let’s look at these Feynman diagrams now, and the underlying force model, which I refer to as the boson exchange model.

The boson exchange model

The quantum field model – for all forces – is a boson exchange model. In this model, electrons, for example, are kept in orbit through the continuous exchange of (virtual) photons between the proton and the electron, as shown below.

Now, I should say a few words about these ‘virtual’ photons. The most important thing is that you should look at them as being ‘real’. They may be derided as being only temporary disturbances of the electromagnetic field but they are very real force carriers in the quantum field theory of electromagnetism. They may carry very low energy as compared to ‘real’ photons, but they do conserve energy and momentum – in quite a strange way obviously: while it is easy to imagine a photon pushing an electron away, it is a bit more difficult to imagine it pulling it closer, which is what it does here. Nevertheless, that’s how forces are being mediated by virtual particles in quantum mechanics: we have matter particles carrying charge but neutral bosons taking care of the exchange between those charges.

In fact, note how Feynman actually cares about the possibility of one of those ‘virtual’ photons briefly disintegrating into an electron-positron pair, which underscores the ‘reality’ of photons mediating the electromagnetic force between a proton and an electron, thereby keeping them close together. There is probably no better illustration to explain the difference between quantum field theory and the classical view of forces, such as the classical view on gravity: there are no gravitons doing for gravity what photons are doing for electromagnetic attraction (or repulsion).

Pandora’s Box

I cannot resist a small digression here. The ‘Box of Pandora’ to which Feynman refers in the caption of the illustration above is the problem of calculating the coupling constants. Indeed, j is the coupling constant for an ‘ideal’ electron to couple with some kind of ‘ideal’ photon, but how do we calculate that when we actually know that all possible paths in spacetime have to be considered and that we have all of this ‘virtual’ mess going on? Indeed, in experiments, we can only observe probabilities for real electrons to couple with real photons.

In the ‘Chapter 4’ to which the caption makes a reference, he briefly explains the mathematical procedure, which he invented and for which he got a Nobel Prize. He calls it a ‘shell game’. It’s basically an application of ‘perturbation theory’, which I haven’t studied yet. However, he does so with skepticism about its mathematical consistency – skepticism which I mentioned and explored somewhat in previous posts, so I won’t repeat that here. Here, I’ll just note that the issue of ‘mathematical consistency’ is much more of an issue for the strong force, because the coupling constant is so big.

Indeed, terms with j², j³, j⁴ etcetera (i.e. the terms involved in adding amplitudes for all possible paths and all possible ways in which an event can happen) quickly become very small as the exponent increases, but terms with g², g³, g⁴ etcetera do not become negligibly small. In fact, they don’t become irrelevant at all. Indeed, if we wrote α for the electromagnetic force as 7.297×10⁻³, then the α for the strong force is one, and so none of these terms becomes vanishingly small. I won’t dwell on this, but just quote Wikipedia’s very succinct appraisal of the situation: “If α is much less than 1 [in a quantum field theory with a dimensionless coupling constant α], then the theory is said to be weakly coupled. In this case it is well described by an expansion in powers of α called perturbation theory. [However] If the coupling constant is of order one or larger, the theory is said to be strongly coupled. An example of the latter [the only example as far as I am aware: we don’t have like a dozen different forces out there !] is the hadronic theory of strong interactions, which is why it is called strong in the first place. [Hadrons is just a difficult word for particles composed of quarks – so don’t worry about it: you understand what is being said here.] In such a case non-perturbative methods have to be used to investigate the theory.”
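The difference between a weakly and a strongly coupled theory is easy to see numerically. The sketch below is only a caricature: it compares successive powers of the two coupling constants quoted above (7.297×10⁻³ and 1) and ignores the numerical factors and the number of diagrams that a real perturbation series would carry at each order.

```python
alpha_em = 7.297e-3   # electromagnetic coupling, as quoted above
alpha_strong = 1.0    # strong coupling in the same scheme of things

# Size of the n-th power of each coupling, for n = 1..4.
em_terms = [alpha_em**n for n in range(1, 5)]
strong_terms = [alpha_strong**n for n in range(1, 5)]

for n, (em, strong) in enumerate(zip(em_terms, strong_terms), start=1):
    print(f"order {n}: electromagnetic ~ {em:.3e}, strong ~ {strong:.1f}")
```

Each extra order costs the electromagnetic series a factor of about 137, while the powers of the strong coupling never shrink, so cutting that series off after a few terms is not justified.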

Hmm… If Feynman thought his technique for calculating weak coupling constants was fishy, then his skepticism about whether or not physicists actually know what they are doing when calculating stuff using the strong coupling constant is probably justified. But let’s come back to that later. With all that we know here, we’re ready to present a picture of the ‘first-generation world’.

The first-generation world

The first-generation is our world, excluding all that goes in those particle accelerators, where they discovered so-called second- and third-generation matter – but I’ll come back to that. Our world consists of only four matter particles, collectively referred to as (first-generation) fermions: two quarks (a u and a d type), the electron, and the neutrino. This is what is shown below.

Indeed, u and d quarks make up protons and neutrons (a proton consists of two u quarks and one d quark, and a neutron must be neutral, so it’s two d quarks and one u quark), and then there’s electrons circling around them and so that’s our atoms. And from atoms, we make molecules and then you know the rest of the story. Genesis !

Oh… But why do we need the neutrino? [Damn – you’re smart ! You see everything, don’t you? :-)] Well… There’s something referred to as beta decay: this allows a neutron to become a proton (and vice versa). Beta decay explains why carbon-14 will spontaneously decay into nitrogen-14. Indeed, carbon-12 is the (very) stable isotope, while carbon-14 has a half-life of 5,730 ± 40 years ‘only’ and, hence, measuring how much carbon-14 is left in some organic substance allows us to date it (that’s what (radio)carbon-dating is about). Now, a beta particle can refer to an electron or a positron, so we can have β– decay (e.g. the above-mentioned carbon-14 decay) or β+ decay (e.g. magnesium-23 into sodium-23). If we have β– decay, then some electron will be flying out in order to make sure the atom as a whole stays electrically neutral. If it’s β+ decay, then emitting a positron will do the job (I forgot to mention that each of the particles above also has an anti-matter counterpart – but don’t think I tried to hide anything else: the fermion picture above is pretty complete). That being said, Wolfgang Pauli, one of those geniuses who invented quantum theory, noted, in 1930 already, that some momentum and energy was missing, and so he predicted the emission of these mysterious neutrinos as well. Guess what? These things are very spooky (relatively high-energy neutrinos produced by stars (our Sun in the first place) are going through your and my body, right now and right here, at a rate of some hundred trillion per second) but, because they are so hard to detect, the first actual trace of their existence was found in 1956 only. [Neutrino detection is fairly standard business now, however.] But back to quarks now.
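As an aside, the carbon-dating arithmetic is simple enough to sketch. The code below assumes plain exponential decay with the 5,730-year figure taken as the half-life of carbon-14, and it ignores the calibration corrections that real radiocarbon labs apply. The function names are mine.

```python
import math

HALF_LIFE_C14 = 5730.0  # years

def remaining_fraction(age_years):
    """Fraction of the original carbon-14 left after age_years."""
    return 0.5 ** (age_years / HALF_LIFE_C14)

def age_from_fraction(fraction):
    """Age in years of a sample that retains the given carbon-14 fraction."""
    return HALF_LIFE_C14 * math.log2(1.0 / fraction)

print(f"left after 10,000 years: {remaining_fraction(10000.0):.3f}")
print(f"age at 25% remaining: {age_from_fraction(0.25):.0f} years")
```

A sample that has a quarter of its carbon-14 left has gone through two half-lives.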

Quarks are held together by gluons – as you probably know. Quarks come in flavors (u and d), but gluons come in ‘colors’. It’s a bit of a stupid name but the analogy works great. Quarks exchange gluons all of the time and so that’s what ‘glues’ them so strongly together. Indeed, the so-called ‘mass’ that gets converted into energy when a nuclear bomb explodes is not the mass of quarks (their mass is only 2.4 and 4.8 MeV/c²). Nuclear power is binding energy between quarks that gets converted into heat and radiation and kinetic energy and whatever else a nuclear explosion unleashes. That binding energy is reflected in the difference between the mass of a proton (or a neutron) – around 938 MeV/c² – and the mass figure you get when you add two u’s and one d, which is then 9.6 MeV/c² only. This ratio – a factor of one hundred – illustrates once again the strength of the strong force: 99% of the ‘mass’ of a proton or a neutron is due to the strong force.
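The arithmetic behind that ‘factor of one hundred’ goes as follows. The sketch uses the rounded figures from the text (2.4 and 4.8 MeV/c² for the u and d quark, 938 MeV/c² for the proton); these are approximate values, not precise measurements.

```python
M_U = 2.4         # u quark mass, MeV/c^2 (rounded, as in the text)
M_D = 4.8         # d quark mass, MeV/c^2 (rounded, as in the text)
M_PROTON = 938.0  # proton mass, MeV/c^2 (approximate)

# A proton is uud: two u quarks and one d quark.
quark_sum = 2 * M_U + M_D
ratio = M_PROTON / quark_sum
binding_fraction = 1.0 - quark_sum / M_PROTON

print(f"sum of quark masses: {quark_sum:.1f} MeV/c^2")
print(f"proton mass / quark masses: {ratio:.0f}")
print(f"share of proton mass not carried by quark masses: {binding_fraction:.1%}")
```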

But I am digressing too much, and I haven’t even started to talk about the bosons associated with the weak force. Well… I won’t just now. I’ll just move on to the second- and third-generation world.

Second- and third-generation matter

When physicists started to look for those quarks in their particle accelerators, Nature had already confused them by producing lots of other particles in these accelerators: in the 1960s, there were more than four hundred of them. Yes. Too much. But they couldn’t get them back in the box. 🙂

Now, all these ‘other particles’ are unstable but they survive long enough – a muon, for example, disintegrates after 2.2 millionths of a second (on average) – to deserve the ‘particle’ title, as opposed to a ‘resonance’, whose lifetime can be as short as a billionth of a trillionth of a second. And so, yes, the physicists had to explain them too. So the guys who devised the quark-gluon model (the model is usually associated with Murray Gell-Mann but – as usual with great ideas – some others worked hard on it as well) had already included heavier versions of their quarks to explain (some of) these other particles. And so we do not only have heavier quarks, but also a heavier version of the electron (that’s the muon I mentioned) as well as a heavier version of the neutrino (the so-called muon neutrino). The two new ‘flavors’ of quarks were called s and c. [Feynman hates these names but let me give them: u stands for up, d for down, s for strange and c for charm. Why? Well… According to Feynman: “For no reason whatsoever.”]

Traces of the second-generation s and c quarks were found in experiments in 1968 and 1974 respectively (it took six years to boost the particle accelerators sufficiently), and the third-generation b quark (for beauty or bottom – whatever) popped up in Fermilab’s particle accelerator in 1977. To be fully complete, it then took 18 years to detect the super-heavy t quark – which stands for truth. [Of all the quarks, this name is probably the nicest: “If beauty, then truth” – as Lederman and Schramm write in their 1989 history of all of this.]

What’s next? Will there be a fourth or even fifth generation? Back in 1985, Feynman didn’t exclude it (and actually seemed to expect it), but current assessments are more prosaic. Indeed, Wikipedia writes that, “According to the results of the statistical analysis by researchers from CERN and the Humboldt University of Berlin, the existence of further fermions can be excluded with a probability of 99.99999% (5.3 sigma).” If you want to know why… Well… Read the rest of the Wikipedia article. It’s got to do with the Higgs particle.

So the complete model of reality is the one I already inserted in a previous post and, if you find it complicated, remember that the first generation of matter is the one that matters and, among the bosons, it’s the photons and gluons. If you focus on these only, it’s not complicated at all – and surely a huge improvement over those 400+ particles no one understood in the 1960s.

As for the interactions, quarks stick together – and rather firmly so – by interchanging gluons. They thereby ‘change color’ (which is the same as saying there is some exchange of ‘charge’). I copy Feynman’s original illustration hereunder, not because there’s no better illustration (the stuff you can find on Wikipedia has actual colors !) but just because it reflects the other illustrations above (and, perhaps, because I also want to make sure – with this black-and-white thing – that you don’t think there’s something like ‘real’ color inside of a nucleus).

So what are the loose ends then? The problem of ‘mathematical consistency’ associated with the techniques used to calculate (or estimate) these coupling constants – which Feynman identifies as a key defect in 1985 – is a form of skepticism about the Standard Model that is not shared by others. It’s more about the other forces. So let’s now talk about these.

The weak force as the weird force: about symmetry breaking

I included the weak force in the title of one of the sub-sections above (“The three-forces model”) and then talked about the other two forces only. The W+ , W– and Z bosons – usually referred to, as a group, as the W bosons, or the ‘intermediate vector bosons’ – are an odd bunch. First, note that they are the only ones that do not only have a (rest) mass (and not just a little bit: they’re almost 100 times heavier than the proton or neutron – or a hydrogen atom !) but, on top of that, they also have electric charge (except for the Z boson). They are really the odd ones out. Feynman does not doubt their existence (a CERN team produced them in 1983, and they got a Nobel Prize for it, so little room for doubts here !), but it is obvious he finds the weak force interaction model rather weird.

He’s not the only one: in a wonderful publication designed to make a case for more powerful particle accelerators (probably successful, because the Large Hadron Collider came through – and discovered credible traces of the Higgs field, which is involved in the story that is about to follow), Leon Lederman and David Schramm look at the asymmetry involved in having massive W bosons and massless photons and gluons, as just one of the many asymmetries associated with the weak force. Let me develop this point.

We like symmetries. They are aesthetic. But I am talking about something else here: in classical physics, characterized by strict causality and determinism, we can – in theory – reverse the arrow of time. In practice, we can’t – because of entropy – but, in theory, so-called reversible machines are not a problem. However, in quantum mechanics we cannot reverse time for reasons that have nothing to do with thermodynamics. In fact, there are several types of symmetries in physics:

- Parity (P) symmetry revolves around the notion that Nature should not distinguish between right- and left-handedness, so everything that works in our world, should also work in the mirror world. Now, the weak force does not respect P symmetry. That was shown by experiments on the decay of pions, muons and radioactive cobalt-60 in 1956 and 1957 already.

- Charge conjugation or charge (C) symmetry revolves around the notion that a world in which we reverse all (electric) charge signs (so protons would have minus one as charge, and electrons have plus one) would also just work the same. The same 1957 experiments showed that the weak force does also not respect C symmetry.

- Initially, smart theorists noted that the combined operation of CP was respected by these 1957 experiments (hence, the principle of P and C symmetry could be substituted by a combined CP symmetry principle) but, then, in 1964, Val Fitch and James Cronin proved that the spontaneous decay of neutral kaons (don’t worry if you don’t know what particle this is: you can look it up) into pairs of pions did not respect CP symmetry. In other words, it was – again – the weak force not respecting symmetry. [Fitch and Cronin got a Nobel Prize for this, so you can imagine it did mean something !]

- We mentioned time reversal (T) symmetry: how is that being broken? In theory, we can imagine a film being made of those events not respecting P, C or CP symmetry and then just pressing the ‘reverse’ button, can’t we? Well… I must admit I do not master the details of what I am going to write now, but let me just quote Lederman (another Nobel Prize physicist) and Schramm (an astrophysicist): “Years before this, [Wolfgang] Pauli [Remember him from his neutrino prediction?] had pointed out that a sequence of operations like CPT could be imagined and studied; that is, in sequence, change all particles to antiparticles, reflect the system in a mirror, and change the sign of time. Pauli’s theorem was that all nature respected the CPT operation and, in fact, that this was closely connected to the relativistic invariance of Einstein’s equations. There is a consensus that CPT invariance cannot be broken – at least not at energy scales below 10¹⁹ GeV [i.e. the Planck scale]. However, if CPT is a valid symmetry, then, when Fitch and Cronin showed that CP is a broken symmetry, they also showed that T symmetry must be similarly broken.” (Lederman and Schramm, 1989, From Quarks to the Cosmos, p. 122-123)

So the weak force doesn’t care about symmetries. Not at all. That being said, there is an obvious difference between the asymmetries mentioned above, and the asymmetry involved in W bosons having mass and other bosons not having mass. That’s true. Especially because now we have that Higgs field to explain why W bosons have mass – and not only W bosons but also the matter particles (i.e. the three generations of leptons and quarks discussed above). The diagram shows what interacts with what.

But so the Higgs field does not interact with photons and gluons. Why? Well… I am not sure. Let me copy the Wikipedia explanation: “The Higgs field consists of four components, two neutral ones and two charged component fields. Both of the charged components and one of the neutral fields are Goldstone bosons, which act as the longitudinal third-polarization components of the massive W+, W– and Z bosons. The quantum of the remaining neutral component corresponds to (and is theoretically realized as) the massive Higgs boson.”

Huh? […] This ‘answer’ probably doesn’t answer your question. What I understand from the explanation above, is that the Higgs field only interacts with W bosons because its (theoretical) structure is such that it only interacts with W bosons. Now, you’ll remember Feynman’s oft-quoted criticism of string theory: “I don’t like that for anything that disagrees with an experiment, they cook up an explanation–a fix-up to say.” Is the Higgs theory such a cooked-up explanation? No. That kind of criticism would not apply here, in light of the fact that – some 50 years after the theory – there is (some) experimental confirmation at least !

But you’ll admit it does all look ‘somewhat ugly.’ However, while that’s a ‘loose end’ of the Standard Model, it’s not a fundamental defect or so. The argument is more about aesthetics, but then different people have different views on aesthetics – especially when it comes to mathematical attractiveness or unattractiveness.

So… No real loose end here I’d say.

Gravity

The other ‘loose end’ that Feynman mentions in his 1985 summary is obviously still very relevant today (much more than his worries about the weak force I’d say). It is the lack of a quantum theory of gravity. There is none. Of course, the obvious question is: why would we need one? We’ve got Einstein’s theory, don’t we? What’s wrong with it?

The short answer to the last question is: nothing’s wrong with it – on the contrary ! It’s just that it is – well… – classical physics. No uncertainty. As such, the formalism of quantum field theory cannot be applied to gravity. That’s it. What’s Feynman’s take on this? [Sorry I refer to him all the time, but I made it clear in the introduction of this post that I would be discussing ‘his’ loose ends indeed.] Well… He makes two points – a practical one and a theoretical one:

1. “Because the gravitation force is so much weaker than any of the other interactions, it is impossible at the present time to make any experiment that is sufficiently delicate to measure any effect that requires the precision of a quantum theory to explain it.”

Feynman is surely right about gravity being ‘so much weaker’. Indeed, you should note that, at a scale of 10⁻¹³ cm (that’s the femtometer scale – so that’s the relevant scale indeed at the sub-atomic level), the coupling constants compare as follows: if the coupling constant of the strong force is 1, the coupling constant of the electromagnetic force is approximately 1/137, so that’s a factor of 10⁻² approximately. The strength of the weak force as measured by the coupling constant would be smaller by a factor 10⁻¹³ (so that’s 1/10000000000000). Incredibly small, but so we do have a quantum field theory for the weak force ! However, the coupling constant for the gravitational force involves a factor 10⁻³⁸. Let’s face it: this is unimaginably small.
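To get a feel for these orders of magnitude, the sketch below simply puts the four figures quoted above side by side and expresses each as a power of ten. The numbers are the rough ones from the text (strong force normalized to 1), not precise values, and the dictionary is just my way of organizing them.

```python
import math

# Rough relative coupling strengths, strong force normalized to 1.
strengths = {
    "strong": 1.0,
    "electromagnetic": 1.0 / 137.0,
    "weak": 1e-13,
    "gravity": 1e-38,
}

# Order of magnitude of each coupling relative to the strong force.
exponents = {name: math.log10(value) for name, value in strengths.items()}

for name, exponent in exponents.items():
    print(f"{name:16s} ~ 10^{exponent:.1f}")

# How many orders of magnitude gravity sits below electromagnetism.
gap = exponents["electromagnetic"] - exponents["gravity"]
print(f"gravity is about {gap:.0f} orders of magnitude weaker than electromagnetism")
```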

However, Feynman wrote this in 1985 (i.e. thirty years ago) and scientists wouldn’t be scientists if they would not at least try to set up some kind of experiment. So there it is: LIGO. Let me quote Wikipedia on it:

“LIGO, which stands for the Laser Interferometer Gravitational-Wave Observatory, is a large-scale physics experiment aiming to directly detect gravitational waves. […] At the cost of $365 million (in 2002 USD), it is the largest and most ambitious project ever funded by the NSF. Observations at LIGO began in 2002 and ended in 2010; no unambiguous detections of gravitational waves have been reported. The original detectors were disassembled and are currently being replaced by improved versions known as ‘Advanced LIGO’.”

So, let’s see what comes out of that. I won’t put my money on it just yet. 🙂 Let’s go to the theoretical problem now.

2. “Even though there is no way to test them, there are, nevertheless, quantum theories of gravity that involve ‘gravitons’ (which would appear under a new category of polarizations, called spin “2”) and other fundamental particles (some with spin 3/2). The best of these theories is not able to include the particles that we do find, and invents a lot of particles that we don’t find. [In addition] The quantum theories of gravity also have infinities in the terms with couplings [Feynman does not refer to a coupling constant but to a factor n appearing in the so-called propagator for an electron – don’t worry about it: just note it’s a problem with one of those constants actually being larger than one !], but the “dippy process” that is successful in getting rid of the infinities in quantum electrodynamics doesn’t get rid of them in gravitation. So not only have we no experiments with which to check a quantum theory of gravitation, we also have no reasonable theory.”

Phew ! After reading that, you wouldn’t apply for a job at that LIGO facility, would you? That being said, the fact that there is a LIGO experiment would seem to undermine Feynman’s practical argument. But then is his theoretical criticism still relevant today? I am not an expert, but it would seem to be the case according to Wikipedia’s update on it:

“Although a quantum theory of gravity is needed in order to reconcile general relativity with the principles of quantum mechanics, difficulties arise when one attempts to apply the usual prescriptions of quantum field theory. From a technical point of view, the problem is that the theory one gets in this way is not renormalizable and therefore cannot be used to make meaningful physical predictions. As a result, theorists have taken up more radical approaches to the problem of quantum gravity, the most popular approaches being string theory and loop quantum gravity.”

Hmm… String theory and loop quantum gravity? That’s the stuff that Penrose is exploring. However, I’d suspect that for these (string theory and loop quantum gravity), Feynman’s criticism probably still rings true – to some extent at least:

“I don’t like that they’re not calculating anything. I don’t like that they don’t check their ideas. I don’t like that for anything that disagrees with an experiment, they cook up an explanation–a fix-up to say, “Well, it might be true.” For example, the theory requires ten dimensions. Well, maybe there’s a way of wrapping up six of the dimensions. Yes, that’s all possible mathematically, but why not seven? When they write their equation, the equation should decide how many of these things get wrapped up, not the desire to agree with experiment. In other words, there’s no reason whatsoever in superstring theory that it isn’t eight out of the ten dimensions that get wrapped up and that the result is only two dimensions, which would be completely in disagreement with experience. So the fact that it might disagree with experience is very tenuous, it doesn’t produce anything; it has to be excused most of the time. It doesn’t look right.”

What to say by way of conclusion? Not sure. I think my personal “research agenda” is reasonably simple: I just want to try to understand all of the above somewhat better and then, perhaps, I might be able to understand some of what Roger Penrose is writing. 🙂