Note: I have published a paper that is very coherent and fully explains what’s going on. There is nothing magical about these things. Check it out: The Meaning of the Fine-Structure Constant. No ambiguity. No hocus-pocus.

Jean Louis Van Belle, 23 December 2018

Original post:

This post is a continuation of the previous one: it is just going to elaborate the questions I raised in the post scriptum of that post. Let’s first review the basics once more.

The geometry of the elementary wavefunction

In the reference frame of the particle itself, the geometry of the wavefunction simplifies to what is illustrated below: an oscillation in two dimensions which, viewed together, form a plane that would be perpendicular to the direction of motion—but then our particle doesn’t move in its own reference frame, obviously. Hence, we could be looking at our particle from any direction and we should, presumably, see a similar two-dimensional oscillation. That is interesting because… Well… If we rotate this circle around its center (in whatever direction we’d choose), we get a sphere, right? It’s only when it starts moving, that it loses its symmetry. Now, that is very intriguing, but let’s think about that later.

Let’s assume we’re looking at it from some specific direction. Then we presumably have some charge (the green dot) moving about some center, and its movement can be analyzed as the sum of two oscillations (the sine and cosine) which represent the real and imaginary component of the wavefunction respectively—as we observe it, so to speak. [Of course, you’ve been told you can’t observe wavefunctions so… Well… You should probably stop reading this. :-)] We write:

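$$\psi = a\cdot e^{-i\cdot\theta} = a\cdot e^{-i\cdot E\cdot t/\hbar} = a\cdot\cos(-E\cdot t/\hbar) + i\cdot a\cdot\sin(-E\cdot t/\hbar) = a\cdot\cos(E\cdot t/\hbar) - i\cdot a\cdot\sin(E\cdot t/\hbar)$$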
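As a quick sanity check of the formula above, here is a minimal Python sketch that evaluates the complex exponential and compares it with the cosine and sine components. The function name `psi` and the sample values are illustrative assumptions only, and ħ is set to 1 for convenience.

```python
import cmath
import math

def psi(a, E, t, hbar=1.0):
    """Elementary wavefunction a*exp(-i*E*t/hbar) in the particle's own frame."""
    return a * cmath.exp(-1j * E * t / hbar)

# Arbitrary illustrative values (natural units, hbar = 1)
a, E, t = 2.0, 3.0, 0.4

value = psi(a, E, t)
real_part = a * math.cos(E * t)    # cosine component
imag_part = -a * math.sin(E * t)   # minus the sine component

print(value, real_part, imag_part)
```

The real and imaginary parts of `value` should agree with the cosine and (minus) sine terms, and its modulus should equal the amplitude a.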

So that’s the wavefunction in the reference frame of the particle itself. When we think of it as moving in some direction (so relativity kicks in), we need to add the p·x term to the argument (θ = E·t − p∙x). It is easy to show this term doesn’t change the value of the argument (θ), because we also get a different value for the energy in the new reference frame: Eᵥ = γ·E₀ and so… Well… I’ll refer you to my post on this, in which I show the argument of the wavefunction is invariant under a Lorentz transformation: the way Eᵥ and pᵥ and, importantly, the coordinates x and t relativistically transform ensures the invariance.
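The invariance claim can be checked numerically. The sketch below is only an illustration under stated assumptions: natural units (c = 1, ħ = 1), motion along one axis, and hypothetical function names. It computes E·t − p·x in the frame where the particle moves with velocity v, and compares it with E₀·t′ in the particle's own frame, where t′ follows from the Lorentz transformation.

```python
import math

def phase_moving_frame(m0, v, x, t, c=1.0):
    """Phase E*t - p*x in the frame where the particle moves with velocity v."""
    gamma = 1.0 / math.sqrt(1.0 - (v / c) ** 2)
    energy = gamma * m0 * c**2      # E_v = gamma * E_0
    momentum = gamma * m0 * v       # p_v = gamma * m0 * v
    return energy * t - momentum * x

def phase_rest_frame(m0, v, x, t, c=1.0):
    """Phase E_0*t' in the particle's own frame, with t' from the Lorentz transformation."""
    gamma = 1.0 / math.sqrt(1.0 - (v / c) ** 2)
    t_prime = gamma * (t - v * x / c**2)
    return m0 * c**2 * t_prime

# Arbitrary illustrative event (x, t) and velocity
theta_moving = phase_moving_frame(m0=1.0, v=0.6, x=2.5, t=4.0)
theta_rest = phase_rest_frame(m0=1.0, v=0.6, x=2.5, t=4.0)
print(theta_moving, theta_rest)
```

Both numbers should coincide for any event and any |v| < c, which is just the statement that the argument of the wavefunction is a Lorentz scalar.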

In fact, I’ve always wanted to read de Broglie‘s original thesis because I strongly suspect he saw that immediately. If you click this link, you’ll find an author who suggests the same. Having said that, I should immediately add this does not imply there is no need for a relativistic wave equation: the wavefunction is a solution for the wave equation and, yes, I am the first to note the Schrödinger equation has some obvious issues, which I briefly touch upon in one of my other posts—and which is why Schrödinger himself and other contemporaries came up with a relativistic wave equation (Oskar Klein and Walter Gordon got the credit but others (including Louis de Broglie) also suggested a relativistic wave equation when Schrödinger published his). In my humble opinion, the key issue is not that Schrödinger’s equation is non-relativistic. It’s that 1/2 factor again but… Well… I won’t dwell on that here. We need to move on. So let’s leave the wave equation for what it is and go back to our wavefunction.

You’ll note the argument (or phase) of our wavefunction moves clockwise, or counterclockwise, depending on whether you’re standing in front of or behind the clock. Of course, Nature doesn’t care about where we stand or, to put it differently, whether we measure time clockwise, counterclockwise, in the positive, the negative or whatever direction. Hence, I’ve argued we can have both left- as well as right-handed wavefunctions, as illustrated below (for p ≠ 0). Our hypothesis is that these two physical possibilities correspond to the angular momentum of our electron being either positive or negative: Jz = +ħ/2 or, else, Jz = −ħ/2. [If you’ve read a thing or two about neutrinos, then… Well… They’re kinda special in this regard: they have no charge and neutrinos and antineutrinos are actually defined by their helicity. But… Well… Let’s stick to trying to describe electrons for a while.]

The line of reasoning that we followed allowed us to calculate the amplitude a. We got a result that tentatively confirms we’re on the right track with our interpretation: we found that a = ħ/(mₑ·c), so that’s the Compton scattering radius of our electron. All good! But we were still a bit stuck (or ambiguous, I should say) on what the components of our wavefunction actually are. Are we really imagining the tip of that rotating arrow is a pointlike electric charge spinning around the center? [Pointlike or… Well… Perhaps we should think of the Thomson radius of the electron here, i.e. the so-called classical electron radius, which is equal to the Compton radius times the fine-structure constant: r(Thomson) = α·r(Compton) ≈ (3.86×10⁻¹³ m)/137 ≈ 2.82×10⁻¹⁵ m.]
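For readers who want to see the numbers, here is a small sketch that evaluates both radii. The constants are CODATA 2018 values typed in by hand (an assumption on my part, they are not quoted in this post), and the variable names are purely illustrative.

```python
# CODATA 2018 values in SI units (assumed here, not taken from the post)
HBAR = 1.054571817e-34      # reduced Planck constant, J*s
M_E = 9.1093837015e-31      # electron mass, kg
C = 299792458.0             # speed of light, m/s
ALPHA = 7.2973525693e-3     # fine-structure constant (about 1/137)

r_compton = HBAR / (M_E * C)        # a = hbar/(m_e*c), about 3.86e-13 m
r_thomson = ALPHA * r_compton       # classical electron radius, about 2.82e-15 m

print(f"Compton radius: {r_compton:.4e} m")
print(f"Thomson radius: {r_thomson:.4e} m")
```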

So that would be the flywheel model.

In contrast, we may also think the whole arrow is some rotating field vector—something like the electric field vector, with the same or some other physical dimension, like newton per charge unit, or newton per mass unit? So that’s the field model. Now, these interpretations may or may not be compatible—or complementary, I should say. I sure hope they are but… Well… What can we reasonably say about it?

Let us first note that the flywheel interpretation has a very obvious advantage, because it allows us to explain the interaction between a photon and an electron, as I demonstrated in my previous post: the electromagnetic energy of the photon will drive the circulatory motion of our electron… So… Well… That’s a nice physical explanation for the transfer of energy. However, when we think about interference or diffraction, we’re stuck: flywheels don’t interfere or diffract. Only waves do. So… Well… What to say?

I am not sure, but here I want to think some more by pushing the flywheel metaphor to its logical limits. Let me remind you of what triggered it all: it was the mathematical equivalence of the energy equation for an oscillator (E = m·a²·ω²) and Einstein’s formula (E = m·c²), which tells us energy and mass are equivalent but… Well… They’re not the same. So what are they then? What is energy, and what is mass, in the context of these matter-waves that we’re looking at? To be precise, the E = m·a²·ω² formula gives us the energy of two oscillators, so we need a two-spring model which (because I love motorbikes) I referred to as my V-twin engine model, but it’s not an engine, really: it’s two frictionless pistons (or springs) whose directions of motion are perpendicular to each other, so they are at a 90° angle and, therefore, their motion is, effectively, independent. In other words: they will not interfere with each other. It’s probably worth showing the illustration just one more time. And… Well… Yes. I’ll also briefly review the math one more time.

If the magnitude of the oscillation is equal to a, then the motion of these pistons (or the mass on a spring) will be described by x = a·cos(ω·t + Δ). Needless to say, Δ is just a phase factor which defines our t = 0 point, and ω is the natural angular frequency of our oscillator. Because of the 90° angle between the two cylinders, Δ would be 0 for one oscillator, and −π/2 for the other. Hence, the motion of one piston is given by x = a·cos(ω·t), while the motion of the other is given by x = a·cos(ω·t − π/2) = a·sin(ω·t). The kinetic and potential energy of one oscillator (think of one piston or one spring only) can then be calculated as:

- K.E. = T = m·v²/2 = (1/2)·m·ω²·a²·sin²(ω·t + Δ)

- P.E. = U = k·x²/2 = (1/2)·k·a²·cos²(ω·t + Δ)

The coefficient k in the potential energy formula characterizes the restoring force: F = −k·x. From the dynamics involved, it is obvious that k must be equal to m·ω². Hence, the total energy (for one piston, or one spring) is equal to:

E = T + U = (1/2)·m·ω²·a²·[sin²(ω·t + Δ) + cos²(ω·t + Δ)] = m·a²·ω²/2

Hence, adding the energy of the two oscillators, we have a perpetuum mobile storing an energy that is equal to twice this amount: E = m·a²·ω². It is a great metaphor. Somehow, in this beautiful interplay between linear and circular motion, energy is borrowed from one place and then returns to the other, cycle after cycle. However, we still have to prove this engine is, effectively, a perpetuum mobile: we need to prove the energy that is being borrowed or returned by one piston is the energy that is being returned or borrowed by the other. That is easy to do, but I won’t bother you with that proof here: you can double-check it in the referenced post or, more formally, in an article I posted on viXra.org.
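The energy bookkeeping for the two pistons can be illustrated numerically. What follows is a minimal sketch, not the formal proof referred to above: it assumes ideal frictionless oscillators with k = m·ω², and the function name and sample values are hypothetical. It shows that the kinetic energy given up by one piston is picked up by the other, and that the total stays at m·a²·ω².

```python
import math

def twin_piston_energies(m, a, omega, t):
    """Kinetic and potential energies of two perpendicular pistons at time t."""
    k = m * omega**2                                   # stiffness, from omega^2 = k/m
    x1, v1 = a * math.cos(omega * t), -a * omega * math.sin(omega * t)
    x2, v2 = a * math.sin(omega * t), a * omega * math.cos(omega * t)
    kinetic = (0.5 * m * v1**2, 0.5 * m * v2**2)
    potential = (0.5 * k * x1**2, 0.5 * k * x2**2)
    return kinetic, potential

m, a, omega = 2.0, 0.5, 3.0                # arbitrary illustrative values
expected_total = m * a**2 * omega**2       # E = m*a^2*omega^2

for t in (0.0, 0.1, 0.7, 1.3):
    (T1, T2), (U1, U2) = twin_piston_energies(m, a, omega, t)
    total = T1 + T2 + U1 + U2
    print(f"t={t:.1f}  T1={T1:.4f}  T2={T2:.4f}  T1+T2={T1 + T2:.4f}  total={total:.4f}")
```

The individual kinetic energies T1 and T2 vary in time, but their sum stays at m·a²·ω²/2, and the grand total matches `expected_total`.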

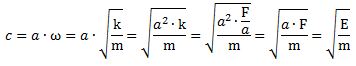

It is all beautiful, and the key question is obvious: if we want to relate the E = m·a²·ω² and E = m·c² formulas, we need to explain why we could, potentially, write c as c = a·ω = a·√(k/m). We’ve done that already, to some extent at least. The tangential velocity of a pointlike particle spinning around some axis is given by v = r·ω. Now, the radius r is given by a = ħ/(m·c), and ω = E/ħ = m·c²/ħ, so v is equal to v = [ħ/(m·c)]·[m·c²/ħ] = c. Another beautiful result, but what does it mean? We need to think about the meaning of the ω = √(k/m) formula here. In the mentioned article, we boldly wrote that the speed of light is to be interpreted as the resonant frequency of spacetime, but so… Well… What do we really mean by that? Think of the following.
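The v = a·ω = c result is easy to reproduce with actual numbers. The sketch below assumes CODATA 2018 constants for the electron (typed in by hand, not taken from this post) and uses illustrative variable names; it also evaluates the equivalent a·√(k/m) form with k = m·ω².

```python
import math

# CODATA 2018 values in SI units (assumed here, not taken from the post)
HBAR = 1.054571817e-34      # reduced Planck constant, J*s
M_E = 9.1093837015e-31      # electron mass, kg
C = 299792458.0             # speed of light, m/s

a = HBAR / (M_E * C)              # radius a = hbar/(m*c)
omega = M_E * C**2 / HBAR         # angular frequency omega = E/hbar
v_tangential = a * omega          # v = r*omega with r = a

k = M_E * omega**2                # stiffness implied by omega^2 = k/m
v_from_k = a * math.sqrt(k / M_E) # the same velocity written as a*sqrt(k/m)

print(f"omega = {omega:.4e} rad/s")
print(f"a*omega = {v_tangential:.6e} m/s, a*sqrt(k/m) = {v_from_k:.6e} m/s")
```

Both expressions return the speed of light up to floating-point rounding, which is all the algebra says: the ħ and m factors cancel.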

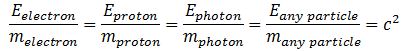

Einstein’s E = m·c² equation implies the ratio between the energy and the mass of any particle is always the same: c² = E/m.

This effectively reminds us of the ω² = C⁻¹/L or ω² = k/m formula for harmonic oscillators. The key difference is that the ω² = C⁻¹/L and ω² = k/m formulas introduce two (or more) degrees of freedom. In contrast, c² = E/m for any particle, always. However, that is exactly the point: we can modulate the resistance, inductance and capacitance of electric circuits, and the stiffness of springs and the masses we put on them, but we live in one physical space only: our spacetime. Hence, the speed of light (c) emerges here as the defining property of spacetime: the resonant frequency, so to speak. We have no further degrees of freedom here.

Let’s think about k. [I am not trying to avoid the ω² = 1/LC formula here. It’s basically the same concept: the ω² = 1/LC formula gives us the natural or resonant frequency for an electric circuit consisting of a resistor, an inductor, and a capacitor. Writing the formula as ω² = C⁻¹/L introduces the concept of elastance, which is the equivalent of the mechanical stiffness (k) of a spring, so… Well… You get it, right? The ω² = C⁻¹/L and ω² = k/m formulas sort of describe the same thing: harmonic oscillation. It’s just… Well… Unlike the ω² = C⁻¹/L formula, the ω² = k/m formula is directly compatible with our V-twin engine metaphor, because it also involves physical distances, as I’ll show you here.] The k in the ω² = k/m formula is, effectively, the stiffness of the spring. It is defined by Hooke’s Law, which states that the force that is needed to extend or compress a spring by some distance x is linearly proportional to that distance, so we write: F = k·x.

Now that is interesting, isn’t it? We’re talking exactly the same thing here: spacetime is, presumably, isotropic, so it should oscillate the same in any direction—I am talking those sine and cosine oscillations now, but in physical space—so there is nothing imaginary here: all is real or… Well… As real as we can imagine it to be. 🙂

We can elaborate the point as follows. The F = k·x equation implies k is a force per unit distance: k = F/x. Hence, its physical dimension is newton per meter (N/m). Now, the x in this equation may be equated to the maximum extension of our spring, or the amplitude of the oscillation, so that’s the radius r = a in the metaphor we’re analyzing here. Now look at how we can re-write the c = a·ω = a·√(k/m) equation:

$$c = a\cdot\omega = a\cdot\sqrt{\frac{k}{m}} = a\cdot\sqrt{\frac{F/a}{m}} = \sqrt{\frac{F\cdot a}{m}} = \sqrt{\frac{E}{m}} \iff E = m\cdot c^2$$

In case you wonder about the E = F·a substitution: just remember that energy is force times distance. [Just do a dimensional analysis: you’ll see it works out.] So we have a spectacular result here, for several reasons. The first, and perhaps most obvious reason, is that we can actually derive Einstein’s E = m·c² formula from our flywheel model. Now, that is truly glorious, I think. However, even more importantly, this equation suggests we do not necessarily need to think of some actual mass oscillating up and down and sideways at the same time: the energy in the oscillation can be thought of as a force acting over some distance, regardless of whether or not it is actually acting on a particle. Now, that energy will have an equivalent mass which is, or should be, I’d say… Well… The mass of our electron or, generalizing, the mass of the particle we’re looking at.

Huh? Yes. In case you wonder what I am trying to get at, I am trying to convey the idea that the two interpretations—the field versus the flywheel model—are actually fully equivalent, or compatible, if you prefer that term. In Asia, they would say: they are the “same-same but different” 🙂 but, using the language that’s used when discussing the Copenhagen interpretation of quantum physics, we should actually say the two models are complementary.

You may shrug your shoulders but… Well… It is a very deep philosophical point, really. 🙂 As far as I am concerned, I’ve never seen a better illustration of the (in)famous Complementarity Principle in quantum physics because… Well… It goes much beyond complementarity. This is about equivalence. 🙂 So it’s just like Einstein’s equation. 🙂

Post scriptum: If you read my posts carefully, you’ll remember I struggle with those 1/2 factors here and there. Textbooks don’t care about them. For example, when deriving the size of an atom, or the Rydberg energy, even Feynman casually writes that “we need not trust our answer [to questions like this] within factors like 2, π, etcetera.” Frankly, that’s disappointing. Factors like 2, 1/2, π or 2π are pretty fundamental numbers, and so they need an explanation. So… Well… I do lose sleep over them. Let me advance some possible explanation here.

As for Feynman’s model, and the derivation of electron orbitals in general, I think it’s got to do with the fact that electrons do want to pair up when thermal motion does not come into play: think of the Cooper pairs we use to explain superconductivity (so that’s the BCS theory). The 1/2 factor in Schrödinger’s equation also has weird consequences (when you plug in the elementary wavefunction and do the derivatives, you get a weird energy concept: E = m·v², to be precise). This problem may also be solved when assuming we’re actually calculating orbitals for a pair of electrons, rather than orbitals for just one electron. [We’d get twice the mass (and, presumably, twice the charge), so… Well… It might work, but I haven’t done it yet. It’s on my agenda, as so many other things, but I’ll get there… One day. :-)]

So… Well… Let’s get back to the lesson here. In this particular context (i.e. in the context of trying to find some reasonable physical interpretation of the wavefunction), you may or may not remember (if not, check my post on it) that I had to use the I = m·r²/2 formula for the moment of inertia in the angular momentum calculation, as opposed to the I = m·r² formula. I = m·r²/2 (with the 1/2 factor) gives us the moment of inertia of a disk with radius r, as opposed to a point mass going around some circle with radius r. I noted that “the addition of this 1/2 factor may seem arbitrary” (and it totally is, of course) but so it gave us the result we wanted: the exact (Compton scattering) radius of our electron.

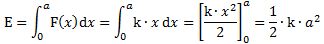

Now, the arbitrary 1/2 factor may or may not be explained as follows. In the field model of our electron, the force is linearly proportional to the extension or compression. Hence, to calculate the energy involved in stretching it from x = 0 to x = a, we need to calculate it as the following integral:

$$E = \int_0^a k\cdot x\,dx = \frac{1}{2}\cdot k\cdot a^2$$
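The work done against a linear force k·x over the interval from 0 to a evaluates to k·a²/2, and that is where a 1/2 factor naturally comes from. Here is a small numerical illustration using a midpoint Riemann sum; the function name and the sample values are hypothetical, and the midpoint rule is just one convenient choice.

```python
def work_to_stretch(k, a, steps=1000):
    """Approximate the integral of k*x from 0 to a with a midpoint Riemann sum."""
    dx = a / steps
    # Force k*x evaluated at the midpoint of each slice, times the slice width
    return sum(k * (i + 0.5) * dx * dx for i in range(steps))

k, a = 3.0, 2.0                      # arbitrary illustrative stiffness and amplitude
numeric = work_to_stretch(k, a)
analytic = 0.5 * k * a**2            # k*a^2/2

print(numeric, analytic)
# Doubling the amplitude quadruples the energy
print(work_to_stretch(k, 2 * a) / numeric)
```

The last line illustrates the other point made here: the energy scales with the square of the amplitude.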

So… Well… That will give you some food for thought, I’d guess. 🙂 If it racks your brain too much—or if you’re too exhausted by this point (which is OK, because it racks my brain too!)—just note we’ve also shown that the energy is proportional to the square of the amplitude here, so that’s a nice result as well… 🙂

Talking food for thought, let me make one final point here. The c² = a²·k/m relation implies a value for k which is equal to k = m·c²/a² = E/a². What does this tell us? In one of our previous posts, we wrote that the radius of our electron appeared as a natural distance unit. We wrote that because of another reason: the remark was triggered by the fact that we can write the c/ω ratio as c/ω = a·ω/ω = a. This implies the tangential and angular velocity in our flywheel model of an electron would be the same if we’d measure distance in units of a. Now, the E = a²·k = a²·F/x relation (just re-writing…) implies that the force is proportional to the energy per unit distance, F = (x/a)·(E/a), and the proportionality coefficient is… Well… x/a. So that’s the distance measured in units of a. So… Well… Isn’t that great? The radius of our electron appearing as a natural distance unit does fit in nicely with our geometric interpretation of the wavefunction, doesn’t it? I mean… Do I need to say more?

I hope not because… Well… I can’t explain it any better for the time being. I hope I sort of managed to convey the message. Just to make sure, in case you wonder what I was trying to do here, it’s the following: I told you c appears as a resonant frequency of spacetime and, in this post, I tried to explain what that really means. I’d appreciate it if you could let me know if you got it. If not, I’ll try again. 🙂 When everything is said and done, one only truly understands stuff when one is able to explain it to someone else, right? 🙂 Please do think of more innovative or creative ways if you can! 🙂

OK. That’s it but… Well… I should, perhaps, talk about one other thing here. It’s what I mentioned in the beginning of this post: this analysis assumes we’re looking at our particle from some specific direction. It could be any direction but… Well… It’s some direction. We have no depth in our line of sight, so to speak. That’s really interesting, and I should do some more thinking about it. Because the direction could be any direction, our analysis is valid for any direction. Hence, if our interpretation would happen to be somewhat true (and that’s a big if, of course) then our particle has to be spherical, right? Why? Well… Because we see this circular thing from any direction, so it has to be a sphere, right?

Well… Yes. But then… Well… While that logic seems to be incontournable, as they say in French, I am somewhat reluctant to accept it at face value. Why? I am not sure. Something inside of me says I should look at the symmetries involved… I mean the transformation formulas for the wavefunction when doing rotations and stuff. So… Well… I’ll be busy with that for a while, I guess. 😦

Post scriptum 2: You may wonder whether this line of reasoning would also work for a proton. Well… Let’s try it. Because its mass is so much larger than that of an electron (about 1836 times), the a = ħ/(m·c) formula gives a much smaller radius: 1836 times smaller, to be precise, so that’s around 2.1×10⁻¹⁶ m, which is about 1/4 of the so-called charge radius of a proton, as measured by scattering experiments. So… Well… We’re not that far off, but… Well… We clearly need some more theory here. Having said that, a proton is not an elementary particle, so its mass incorporates other factors than what we’re considering here (two-dimensional oscillations).
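The proton numbers can be reproduced with a few lines of Python. The constants below are CODATA 2018 values entered by hand, including the recommended proton charge radius of about 0.84 fm; all of them are assumptions that do not appear in this post, and the variable names are illustrative.

```python
# CODATA 2018 values in SI units (assumed here, not taken from the post)
HBAR = 1.054571817e-34          # reduced Planck constant, J*s
C = 299792458.0                 # speed of light, m/s
M_E = 9.1093837015e-31          # electron mass, kg
M_P = 1.67262192369e-27         # proton mass, kg
R_CHARGE_PROTON = 0.8414e-15    # proton charge radius, m

mass_ratio = M_P / M_E                      # about 1836
r_proton = HBAR / (M_P * C)                 # a = hbar/(m*c) applied to the proton
fraction = r_proton / R_CHARGE_PROTON       # about one quarter

print(f"mass ratio        = {mass_ratio:.2f}")
print(f"hbar/(m_p*c)      = {r_proton:.4e} m")
print(f"fraction of r_ch  = {fraction:.3f}")
```

With these inputs the calculated radius comes out close to one quarter of the measured charge radius, consistent with the rough estimate above.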