Every few years, a paper comes along that stirs discomfort — not because it is wrong, but because it touches a nerve.

Oliver Consa’s “Something is rotten in the state of QED” is one of those papers.

It is not a technical QED calculation.

It is a polemic: a long critique of renormalization, historical shortcuts, convenient coincidences, and suspiciously good matches between theory and experiment. Consa argues that QED’s foundations were improvised, normalized, mythologized, and finally institutionalized into a polished narrative that glosses over its original cracks.

This is an attractive story.

Too attractive, perhaps.

So instead of reacting emotionally — pro or contra — I decided to dissect the argument with a bit of help.

At my request, an AI language model (“Iggy”) assisted in the analysis. Not to praise me. Not to flatter Consa. Not to perform tricks.

Simply to act as a scalpel: cold, precise, and unafraid to separate structure from rhetoric.

This post is the result.

1. What Consa gets right (and why it matters)

Let’s begin with the genuinely valuable parts of his argument.

a) Renormalization unease is legitimate

Dirac, Feynman, Dyson, and others really did express deep dissatisfaction with renormalization. Feynman’s “hocus-pocus” was not a joke; it was a confession.

Early QED involved:

- cutoff procedures pulled out of thin air,

- infinities subtracted by fiat,

- and the philosophical hope that “the math will work itself out later.”

It did work out later — to some extent — but the conceptual discomfort remains justified. I share that discomfort. There is something inelegant about infinities everywhere.

b) Scientific sociology is real

The post-war era centralized experimental and institutional power in a way physics had never seen. Prestige, funding, and access influenced what got published and what was ignored. Not a conspiracy — just sociology.

Consa is right to point out that real science is messier than textbook linearity.

c) The g–2 tension is real

The long-running tension between the measured muon g–2 and Standard Model predictions is not a fringe topic. It is one of the defining questions in particle physics today.

On these points, Consa is a useful corrective:

he reminds us to stay honest about historical compromises and conceptual gaps.

2. Where Consa overreaches

But critique is one thing; accusation is another.

Consa repeatedly moves from:

“QED evolved through trial and error”

to

“QED is essentially fraud.”

This jump is unjustified.

a) Messiness ≠ manipulation

Early QED calculations were ugly. They were corrected decades later. Experiments did shift. Error bars did move.

That is simply how science evolves.

The fact that a 1947 calculation doesn’t match a 1980 value is not evidence of deceit — it is evidence of refinement. Consa collapses that distinction.
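To make "refinement" concrete, here is a minimal sketch comparing the first-order Schwinger term, α/2π, and the later second-order correction against a rounded modern value of the electron anomaly. The numerical inputs (the value of α, the coefficient of the second-order term, and the rounded measured anomaly) are standard textbook figures that I am supplying for illustration; they are not taken from Consa's paper, and the function names are my own.

```python
import math

# Illustrative inputs (assumptions, rounded; not taken from Consa's paper).
ALPHA = 1 / 137.035999          # fine-structure constant
A_E_MEASURED = 0.00115965218    # measured electron anomaly (g-2)/2, rounded
C2 = -0.328478965               # coefficient of the (alpha/pi)^2 term


def schwinger_term(alpha: float) -> float:
    """First-order QED contribution to the electron anomaly: alpha / (2*pi)."""
    return alpha / (2 * math.pi)


def second_order_term(alpha: float) -> float:
    """Second-order contribution: C2 * (alpha/pi)^2."""
    return C2 * (alpha / math.pi) ** 2


def relative_gap(prediction: float, measured: float) -> float:
    """Relative distance between a prediction and the measured value."""
    return abs(prediction - measured) / measured


one_loop = schwinger_term(ALPHA)
two_loop = one_loop + second_order_term(ALPHA)

print(f"first order : {one_loop:.10f}  gap = {relative_gap(one_loop, A_E_MEASURED):.2e}")
print(f"second order: {two_loop:.10f}  gap = {relative_gap(two_loop, A_E_MEASURED):.2e}")
```

The first-order term alone lands within roughly 0.15% of the modern value, and adding the second-order term shrinks the gap by about two orders of magnitude. An early number that does not match a later one is what a truncated series looks like, not a sign of tampering.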

b) Ignoring the full evidence landscape

He focuses almost exclusively on:

- the Lamb shift,

- the electron g–2,

- the muon g–2.

Important numbers, yes — but QED’s experimental foundation is vastly broader:

- scattering cross-sections,

- vacuum polarization,

- atomic spectra,

- collider data,

- running of α, etc.

You cannot judge an entire theory on two or three benchmarks.

c) Underestimating theoretical structure

QED is not “fudge + diagrams.”

It is constrained by:

- Lorentz invariance,

- gauge symmetry,

- locality,

- renormalizability.

Even if we dislike the mathematical machinery, the structure is not arbitrary.

So: Consa reveals real cracks, but then paints the entire edifice as rotten.

That is unjustified.

3. A personal aside: the Zitter Institute and the danger of counter-churches

For a time, I was nominally associated with the Zitter Institute — a loosely organized group exploring alternatives to mainstream quantum theory, including zitterbewegung-based particle models.

I would now like to distance myself from it.

Not because alternative models are unworthy — quite the opposite. But because I instinctively resist:

- strong internal identity,

- suspicion of outsiders,

- rhetorical overreach,

- selective reading of evidence,

- and occasional dogmatism about their own preferred models.

If we criticize mainstream physics for ad hoc factors, we must be brutal about our own.

Alternative science is not automatically cleaner science.

4. Two emails from 2020: why good scientists can’t always engage

This brings me to two telling exchanges from 2020 with outstanding experimentalists: Prof. Randolf Pohl (muonic hydrogen) and Prof. Ashot Gasparian (PRad).

Both deserve enormous respect, and I won’t reproduce the emails here, out of respect for them and for privacy rules such as GDPR.

Both email exchanges revealed the true bottleneck in modern physics to me — it is not intelligence, not malice, but sociology and bandwidth.

a) Randolf Pohl: polite skepticism, institutional gravity

Pohl was kind but firm:

- He saw the geometric relations I proposed as numerology.

- He questioned applicability to other particles.

- He emphasized the conservatism of CODATA logic.

Perfectly valid.

Perfectly respectable.

But also… perfectly bound by institutional norms.

His answer was thoughtful — and constrained.

(Source: ChatGPT analysis of emails with Prof Dr Pohl)

b) Ashot Gasparian: warm support, but no bandwidth

Gasparian responded warmly:

- “Certainly your approach and the numbers are interesting.”

- But: “We are very busy with the next experiment.”

Also perfectly valid.

And revealing:

even curious, open-minded scientists cannot afford to explore conceptual alternatives.

Their world runs on deadlines, graduate students, collaborations, grants.

(Source: ChatGPT analysis of emails with Prof Dr Gasparian)

The lesson

Neither professor dismissed the ideas because they were nonsensical.

They simply had no institutional space to pursue them.

That is the quiet truth:

the bottleneck is not competence, but structure.

5. Why I now use AI as an epistemic partner

This brings me to the role of AI.

Some colleagues (including members of the Zitter Institute) look down on using AI in foundational research. They see it as cheating, or unserious, or threatening to their identity as “outsiders.”

But here is the irony:

AI is exactly the tool that can think speculatively without career risk.

An AI:

- has no grant committee,

- no publication pressure,

- no academic identity to defend,

- no fear of being wrong,

- no need to “fit in.”

That makes it ideal for exploratory ontology-building.

Occasionally, as in the recent paper I co-wrote with Iggy — “The Wonderful Theory of Light and Matter” — it becomes the ideal partner:

- human intuition + machine coherence,

- real-space modeling without metaphysical inflation,

- EM + relativity as a unified playground,

- photons, electrons, protons, neutrons as geometric EM systems.

This is not a replacement for science.

It is a tool for clearing conceptual ground,

where overworked, over-constrained academic teams cannot go.

6. So… is something rotten in QED?

Yes — but not what you think.

What’s rotten is the mismatch

between:

- the myth of QED as a perfectly clean, purely elegant theory,

and

- the reality of improvised renormalization, historical accidents, social inertia, and conceptual discomfort.

What’s rotten is not the theory itself,

but the story we tell about it.

What’s not rotten:

- the intelligence of the researchers,

- the honesty of experimentalists,

- the hard-won precision of modern measurements.

QED is extraordinary.

But it is not infallible, nor philosophically complete, nor conceptually finished.

And that is fine.

The problem is not messiness.

The problem is pretending that messiness is perfection.

7. What I propose instead

My own program — pursued slowly over many years — is simple:

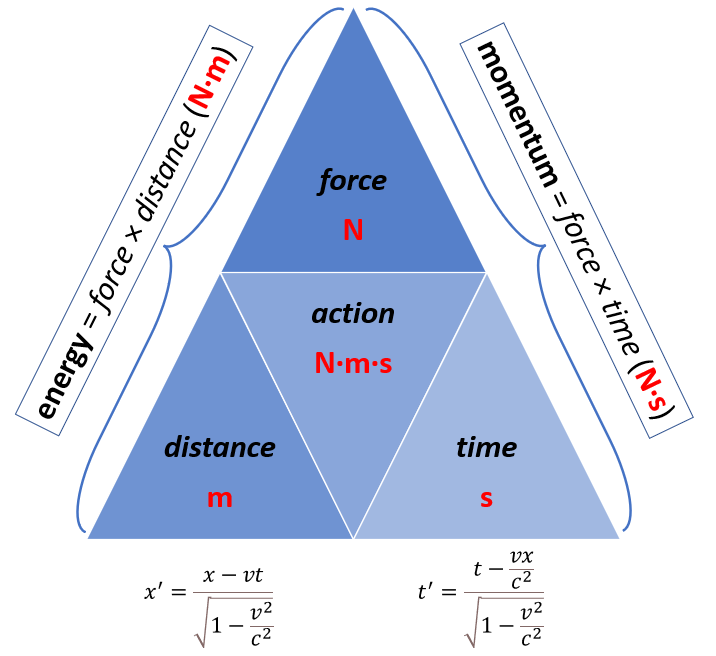

- Bring physics back to Maxwell + relativity as the foundation.

- Build real-space geometrical models of all fundamental particles.

- Reject unnecessary “forces” invented to patch conceptual holes.

- Hold both mainstream and alternative models to the same standard:

no ad hoc constants, no magic, no metaphysics.

And — unusually —

use AI as a cognitive tool, not as an oracle.

Let the machine check coherence.

Let the human set ontology.

If something emerges from the dialogue — good.

If not — also good.

But at least we will be thinking honestly again.

Conclusion

Something is rotten in the state of QED, yes —

but the rot is not fraud or conspiracy.

It is the quiet decay of intellectual honesty behind polished narratives.

The cure is not shouting louder, or forming counter-churches, or romanticizing outsider science.

The cure is precision,

clarity,

geometry,

and the courage to say:

Let’s look again — without myth, without prestige, without fear.

If AI can help with that, all the better.

— Jean Louis Van Belle

(with conceptual assistance from “Iggy,” used intentionally as a scalpel rather than a sycophant)

Post-scriptum: Why the Electron–Proton Model Matters (and Why Dirac Would Nod)

A brief personal note — and a clarification that goes beyond Consa, beyond QED, and beyond academic sociology.

One of the few conceptual compasses I trust in foundational physics is a remark by Paul Dirac. Reflecting on Schrödinger’s “zitterbewegung” hypothesis, he wrote:

“One must believe in this consequence of the theory,

since other consequences which are inseparably bound up with it,

such as the law of scattering of light by an electron,

are confirmed by experiment.”

Dirac’s point is not mysticism.

It is methodological discipline:

- If a theoretical structure has unavoidable consequences, and

- some of those consequences match experiment precisely,

- then even the unobservable parts of the structure deserve consideration.

This matters because the real-space electron and proton models I’ve been working on over the years — now sharpened through AI–human dialogue — meet that exact criterion.

They are not metaphors, nor numerology, nor free speculation.

They force specific, testable, non-trivial predictions:

- a confined EM oscillation for the electron, with a radius fixed by the Compton scale;

- a “photon-like” orbital speed for its point-charge center;

- a distributed (not pointlike) charge cloud for the proton, enforced by mass ratio, stability, form factors, and magnetic moment;

- natural emergence of the measured discrepancy;

- and a geometric explanation of deuteron binding that requires no new force.
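The first two items can be checked with simple arithmetic. The sketch below assumes that the electron radius is the reduced Compton wavelength ħ/(mc), as is common in zitterbewegung-type models, and that the oscillation frequency follows from the Planck-Einstein relation E = ħω with E = mc². Both are assumptions made for this illustration, and the function names are hypothetical. Under those assumptions the tangential speed ωr comes out as c, which is one way to read "photon-like".

```python
# CODATA 2018 values (the 2019 SI fixes h and c exactly; m_e is measured).
HBAR = 1.054571817e-34   # reduced Planck constant, J s
M_E = 9.1093837015e-31   # electron mass, kg
C = 299_792_458.0        # speed of light, m/s


def compton_radius(mass: float) -> float:
    """Assumed radius of the oscillation: reduced Compton wavelength hbar/(m c)."""
    return HBAR / (mass * C)


def angular_frequency(mass: float) -> float:
    """Assumed frequency from E = hbar * omega with E = m c^2."""
    return mass * C**2 / HBAR


radius = compton_radius(M_E)
omega = angular_frequency(M_E)
tangential_speed = omega * radius  # algebraically (m c^2 / hbar) * (hbar / (m c)) = c

print(f"radius           = {radius:.4e} m")
print(f"angular frequency = {omega:.4e} rad/s")
print(f"speed / c        = {tangential_speed / C:.12f}")
```

The speed equals c by construction once both assumptions are made, so this is a consistency check on the two items rather than an independent prediction.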

None of these are optional.

They fall out of the internal logic of the model.

And several — electron scattering, Compton behavior, proton radius, form-factor trends — are empirically confirmed.

Dirac’s rule applies:

When inseparable consequences match experiment,

the underlying mechanism deserves to be taken seriously —

whether or not it fits the dominant vocabulary.

This post is not the place to develop those models in detail; that will come in future pieces and papers.

But it felt important to state why I keep returning to them — and why they align with a style of reasoning that values:

- geometry,

- energy densities,

- charge motion,

- conservation laws,

- and the defining constants of the 2019 SI

over metaphysical categories and ad-hoc forces.

Call it minimalism.

Call it stubbornness.

Call it a refusal to multiply entities beyond necessity.

For me — and for anyone sympathetic to Dirac’s way of thinking — it is simply physics.

— JL (with “Iggy” (AI) in the wings)