[Pre-scriptum (May 2026): This blog post grew out of a broader reflection that has since taken a more structured form. Prompted in part by the critical perspective of Sabine Hossenfelder, I have developed these ideas further in a short paper—Physics Beyond Prediction: On Beauty, Meaning and the Interpretation of Theory—which revisits the distinction between theory, calculation, and explanation, and asks what may still be missing from our current understanding of “good physics.”]

I finally got around to reading Sabine Hossenfelder’s ‘Lost in Math‘ (2018).

It fully deserves its praise. The book is, as the reviewers write, accessible, well-informed, and engaging—at times even genuinely funny. The structure, built around interviews with leading theorists, gives it both breadth and credibility. It is, without doubt, one of the better popular accounts of modern theoretical physics.

It also felt familiar.

Hossenfelder and I belong to roughly the same generation. As teenagers in the 1980s, we were fascinated by the same questions: What is the Standard Model really about? Where did it come from? What problems did it solve that even Albert Einstein or Max Planck could not? And what new questions did it open?

And then, of course, the next layer: why do we need theories beyond it—string theory, supersymmetry—if the Standard Model already works so well? What are these theories trying to explain that the Standard Model cannot?

And what should we make of the experimental side of things? From the discovery of the Higgs boson to the evidence for dark matter, dark energy, and gravitational waves—what do these findings actually mean?

Hossenfelder chose to pursue these questions within academic physics. I did not. I studied economics, but continued to explore physics as a personal project—especially after 2012, when the Higgs boson was announced. By then, I had grown dissatisfied with popular science accounts and felt the need to understand the mathematics itself.

And yet, after working through the math, I found myself asking a different kind of question: not whether the equations work, but what they mean.

It is here that Hossenfelder’s book, for me, remains incomplete.

Beauty, Truth—and Something Missing

The central argument of Lost in Math is well known: modern theoretical physics has been led astray by an overreliance on aesthetic criteria—symmetry, elegance, mathematical beauty—at the expense of empirical grounding.

That critique is compelling, and I largely agree with it.

But it seems to stop halfway.

While Hossenfelder questions the role of beauty, she does not fundamentally question the underlying framework itself. The Standard Model and its extensions remain, in her account, the unquestioned language in which physical truth must ultimately be expressed.

What is largely absent is a deeper discussion of physical interpretation.

The Question of Meaning

Let me be more concrete.

The book does not attempt to explain why the strong force could not be understood in more classical terms, for example as some form of electromagnetic interaction arising from internal charge dynamics.

It does not address why abstract quantum numbers—color charge, flavour, isospin—should be regarded as physically compelling, rather than as mathematical constructs that work but lack intuitive grounding.

Likewise, the weak force appears mainly as part of a formal structure, without much discussion of what it might represent in more tangible terms—such as the distinction between stable and unstable particles.

And perhaps most strikingly, the book does not engage in any depth with the meaning of the most fundamental relations in physics: the quantization expressed in the Planck relation, or the significance of mass-energy equivalence. These are presented as known facts, not as conceptual puzzles.

None of this is a flaw in the usual sense. It is simply not the book Hossenfelder set out to write.

But it is the book I was hoping to read.

Old Physics, Reconsidered

So where does that leave us?

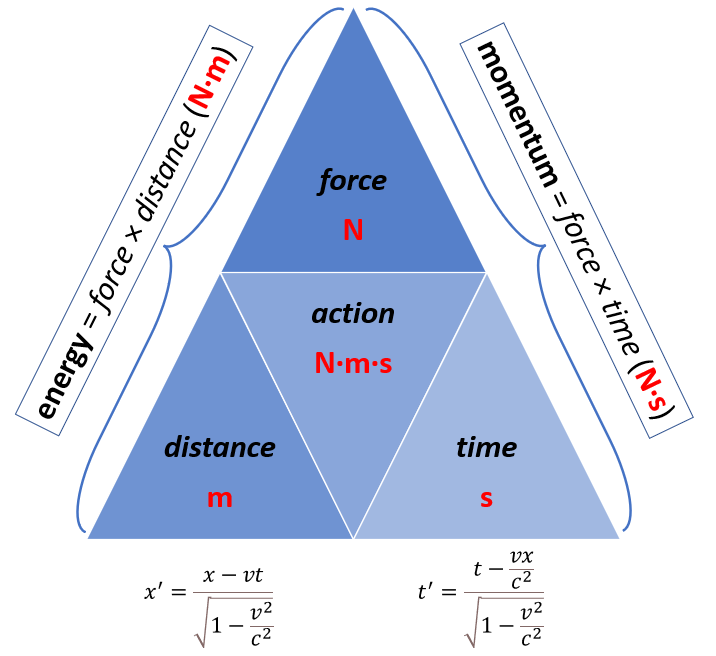

In my own work, I often find myself returning to what many would call “old physics”: Maxwell’s equations, together with relations like Planck–Einstein relation and mass–energy equivalence.

This may seem old-fashioned. Perhaps it is.

But I am increasingly convinced that the real challenge is not to extend the mathematical formalism, but to understand what the existing formalism is telling us about physical reality.

From that perspective, the problem is not only that modern physics may have followed beauty too far. It is also that it may have drifted too far from meaning.

A Different Kind of Dissatisfaction

Hossenfelder ends her book on a note of optimism. Physics, she argues, will continue to make breakthroughs, and those breakthroughs will—once again—be beautiful.

I hope she is right.

But closing the book, I was left with a different thought. Not frustration, but a kind of clarity.

I realized that I am quite content continuing to explore these questions from a more classical, more intuitive starting point—even if that places me outside the mainstream.

Because, in the end, the question that still matters most to me is a simple one:

Not whether the mathematics works, but whether we truly understand what it is saying.

Post scriptum on the 2019 revision of SI units

Sabine Hossenfelder finished and published her book in 2018—just before the 2019 revision of the SI units.

I find myself wondering whether that revision is, in its own quiet way, more meaningful than many of the theoretical developments discussed in her book. Perhaps I am over-interpreting, but this is how it looks to me.

The revised SI system fixes exact numerical values for a small number of fundamental constants, such as the Planck constant, the elementary charge, and the speed of light. In doing so, it anchors our system of measurement in quantities that are directly tied to observation and experiment.

What is striking, however, is what it does not include.

There is no place in the SI framework for the various additional “charges” or quantum numbers that appear in the Standard Model—no color charge, no flavour, no isospin. These concepts may be essential within the mathematical structure of modern particle physics, but they do not enter the system that defines how we measure physical reality.

This is not a flaw in the SI system—quite the contrary. It is designed to remain independent of theoretical interpretation, and to rely only on quantities that can be operationally defined and reproducibly measured.

But that, in itself, is revealing.

It suggests a distinction between what we can measure directly and what we introduce as part of a theoretical framework. And it raises a question—at least for me—about how closely our most advanced theories are tied to physically meaningful quantities.

None of this diminishes the achievements recognized by a Nobel Prize in Physics or other honours—or the remarkable success of modern theoretical physics more generally. But it does serve as a quiet reminder that predictive success is not the same as final understanding.

If anything, the SI revision reinforces my own inclination to look for interpretations of physics that remain as close as possible to what can be directly measured and understood.

Post-Post-Scriptum on what I would like to write

Since writing this, I’ve taken a small but meaningful step: I uploaded a somewhat older manuscript and a newly written Chapter 2 to ResearchGate, as companion documents to my Radial Genesis paper (thoughts on cosmology).

It is not as a finished book — far from it — but as a snapshot of where my thinking currently stands. If I were to write a full-blown book about this, it would not be a technical monograph, nor a speculative manifesto. It would be something in between: a guided journey. I would try to connect three layers:

- the physical intuition (what kind of universe are we actually living in?),

- the mathematical structure (how symmetry, geometry, and scaling laws shape that intuition),

- and the cosmological narrative (how a finite universe with emergent spacetime could naturally arise).

Most importantly, I would try to bridge particle physics and cosmology — not as separate domains, but as different perspectives on the same underlying structure.

The current documents are fragments of that attempt. For now, I will leave them as they are. Sometimes it is better to pause, let ideas settle, and return later with fresh eyes.

Post-post-post-scriptum

I couldn’t help thinking about this question: if the math in academic physics has become “ugly” or “lost,” then what would a beautiful alternative look like? Of course, ‘beauty’ (for me, at least) is a combination of simplicity and realism, and so that is my ‘RealQM’ world view. So I did a quick paper on ResearchGate on what Sabine Hossenfelder still thinks of as very ‘mysterious’ but which, to me, is easily explained in my ‘RealQM’ framework’:

- The “Ghost” Sector (Dark Matter): Two types of electromagnetism (defined by the fundamental asymmetry in Maxwell’s equations modern mainstream physicists completely ignore) share the same spacetime but do not interact otherwise. Because they share the same spacetime, they do interact ‘gravitationally’. Full stop: no further explanation needed.

- The Proton Radius: My two-line theoretical calculation gives a proton radius of 0.841 fm. Recent measurements clocked the proton at 0.8406(15) fm. What more confirmation is needed to urge physicists to think of particles as dynamical structures rather than abstract entities with lots of abstract or non-measurable properties?

- Needless to say: challenges are still out there, and AI baptizes one of them now officially as The Geometry Challenge or Proton Yarnball Puzzle.

Read this last (?) working paper on ResearchGate here.