In my previous post, I copied a simple animation from Wikipedia to show how one can move from Cartesian to polar coordinates. It’s really neat. Just watch it a few times to appreciate what’s going on here.

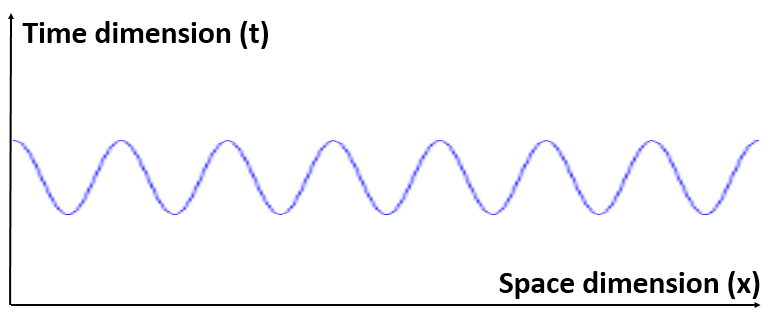

First, the function is being inverted, so we go from y = f(x) to x = g(y) with g = f⁻¹. In this case, we know that if y = sin(6x) + 2 (that’s the function above), then x = (1/6)·arcsin(y – 2), at least on the branch where 6x lies between −π/2 and +π/2, because the arcsine only returns values in that interval. [Note the troublesome convention to denote the inverse function by the −1 superscript: it’s troublesome because that superscript is also used for a reciprocal, and f⁻¹ has, obviously, nothing to do with 1/f. In any case, let’s move on.] So we swap the x-axis for the y-axis, and vice versa. To be precise, we reflect them about the diagonal. In fact, we’re reflecting the whole space here, including the graph of the function. Note that, in three-dimensional space, this reflection can also be looked at as a rotation – again, of all space, including the graph and the axes – by 180 degrees. The axis of rotation is, obviously, the same diagonal. [I like how the animation visualizes this. Neat! It made me think!]

Of course, if we swap the axes, then the domain and the range of the function get swapped too. Let’s see how that works here: x goes from −π to +π, so that’s one full turn of 2π (but one that starts from −π and goes to +π, rather than from 0 to 2π), during which sin(6x) runs through six cycles, and, hence, y ranges between 1 and 3. [Whatever its argument, the sine function always yields a value between −1 and +1, but we add 2 to every value it takes, so we get the [1, 3] interval now.] After swapping the x- and y-axis, the angle, i.e. the interval between −π and +π, is now on the vertical axis. That’s clear enough. So far so good. 🙂 The operation that follows, however, is a much more complicated transformation of space and, therefore, much more interesting.
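Here is a quick numerical check of the inversion and of the domain and range, as a minimal sketch. The names `f`, `g`, `xs` and `ys` are mine, and `g` only inverts `f` on the principal branch of the arcsine, so the round trip fails as soon as x leaves the [−π/12, +π/12] interval.

```python
import math

def f(x):
    """The curve from the animation: y = sin(6x) + 2."""
    return math.sin(6 * x) + 2

def g(y):
    """Inverse of f on the principal branch only, i.e. for x in [-pi/12, +pi/12]."""
    return math.asin(y - 2) / 6

# Sample the domain [-pi, +pi] and look at the range of y.
xs = [-math.pi + 2 * math.pi * k / 10000 for k in range(10001)]
ys = [f(x) for x in xs]
print(min(ys), max(ys))  # very close to 1 and 3

# The round trip x -> y -> x works inside the principal branch...
print(g(f(0.2)))
# ...but not outside it: many different x values share the same y.
print(g(f(1.0)))
```

So the swap of domain and range is fine for the graph as a whole, but the formula with the arcsine only recovers one piece of it.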

The transformation bends the graph around the origin so its head and tail meet. That’s easy to see. What’s a bit more difficult to understand is how the coordinate axes transform. I had to look at the animation several times – so please do the same. Note how this transformation wraps all of the vertical lines around a circle, and how the radius of those circles depends on the distance of those lines from the origin (as measured along the horizontal axis). What about the vertical axis? The animation is somewhat misleading here, as it gives the impression we’re first making another circle out of it, which we then sort of shrink—all the way down to a circle with zero radius! So the vertical axis becomes the origin of our new space. However, there’s no shrinking really. What happens is that we also wrap it around a circle—but one with zero radius indeed!

It’s a very weird operation because we’re dealing with a non-linear transformation here (unlike rotation or reflection) and, therefore, we’re not familiar with it. Even weirder is what happens to the horizontal axis: somehow, this axis becomes an infinite disc, so the distance out is now measured from the center outwards. I should figure out the math here, but that’s for later. The point is: the r = sin(6θ) + 2 function in the final graph (i.e. the curve that looks like a petaled flower) is the same as that y = sin(6x) + 2 curve, so y = r and x = θ, and so we can write what’s written above: r(θ) = sin(6·θ) + 2.
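The wrapping itself is just the usual polar-to-Cartesian map, so here is a small sketch of it. The helper names `wrap` and `r_of` are my own. It shows that every line of constant r lands on a circle of radius r, that the r = 0 line collapses onto the origin, and that the head and tail of the graph meet.

```python
import math

def wrap(theta, r):
    """Send a point (theta, r) of the flat picture to (X, Y) in the wrapped picture."""
    return (r * math.cos(theta), r * math.sin(theta))

def r_of(theta):
    """The curve itself: r = sin(6*theta) + 2."""
    return math.sin(6 * theta) + 2

# A line of constant r becomes a circle of radius r; r = 0 becomes a single point.
for r in (0.0, 1.0, 3.0):
    points = [wrap(-math.pi + 2 * math.pi * k / 8, r) for k in range(9)]
    radii = {round(math.hypot(X, Y), 12) for X, Y in points}
    print(r, radii)

# Head (theta = -pi) and tail (theta = +pi) of the graph end up at the same point.
head = wrap(-math.pi, r_of(-math.pi))
tail = wrap(math.pi, r_of(math.pi))
print(head, tail)
```

The map is not linear: doubling theta does not double X or Y, which is why it feels so different from a rotation or a reflection.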

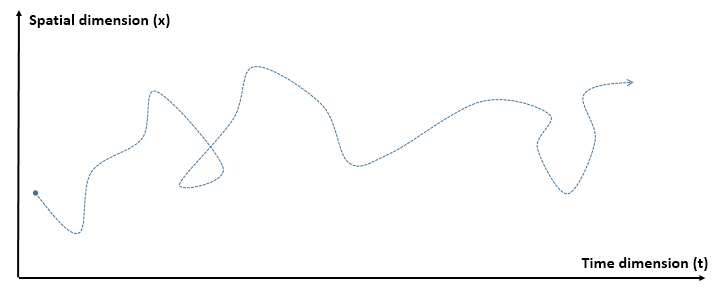

You’ll say: nice, but so what? Well… When I saw this animation, my first reaction was: what if the x and y would be time and space respectively? You’ll say: what space? Well… Just space: three-dimensional space. So think of one of the axes as packing three dimensions really, or three directions—like what’s depicted below. Now think of some point-like object traveling through spacetime, as shown below. It doesn’t need to be point-like, of course—just small enough so we can represent its trajectory by a line. You can also think of the movement of its center-of-mass if you don’t like point-like assumptions. 🙂

Of course, you’ll immediately say the trajectory above is not kosher, as our object travels back in time in three sections of this ‘itinerary’.

You’re right. Let’s correct that. It’s easy to see how we should correct it. We just need to ensure the itinerary is a well-defined function, which isn’t the case with the function above: for one value of t, we have only one value of x everywhere—except where we allow our particle to travel back in time. So… Well… We shouldn’t allow that. The concept of a well-defined function implies we need to choose one direction in time. 🙂 That’s neat, because this gives us an explanation for the unique direction of time without having to invoke entropy or other macro-concepts. So let’s replace that thing above by something more kosher traveling in spacetime, like the thing below.
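To make the "well-defined function" requirement concrete, here is a tiny sketch. I am assuming the itinerary is given as a list of (t, x) samples in the order in which the object visits them; the function name and the two sample itineraries are made up for illustration.

```python
def moves_forward_in_time(worldline):
    """True if t strictly increases along the itinerary, so x is a function of t."""
    times = [t for t, _ in worldline]
    return all(t0 < t1 for t0, t1 in zip(times, times[1:]))

# Hypothetical samples: x may go back and forth, but t may not.
kosher = [(0, 0.0), (1, 2.0), (2, 1.0), (3, 3.0)]
not_kosher = [(0, 0.0), (2, 1.0), (1, 2.0), (3, 3.0)]  # goes back from t = 2 to t = 1

print(moves_forward_in_time(kosher))      # True
print(moves_forward_in_time(not_kosher))  # False
```

Note that the check says nothing about x: the object is free to reverse direction in space as often as it likes.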

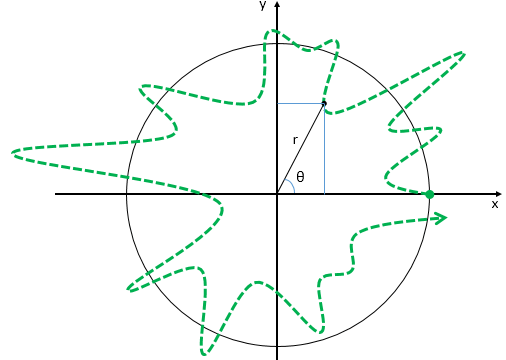

Now think of wrapping that around some circle. We’d get something like below. [Don’t worry about the precise shape of the graph, as I made up a new one. Note the remark on the need to have a well-behaved function applies here too!]

Neat, you’ll say, but so what? All we’ve done so far is show that we can represent some itinerary in spacetime in two different ways. In the first representation, we measure time along some linear axis, while, in the second representation, time becomes some angle: an angle that increases, counter-clockwise. To put it differently: the steady ticking of the clock becomes an angular velocity.

Likewise, the spatial dimension was a linear feature in the first representation, while in the second we think of it as some distance measured from some zero point. Well… In fact… No. That’s not correct. The r above has got nothing to do with the distance traveled: the distance traveled would need to be measured along the curve.
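The difference between r and the length measured along the curve is easy to check numerically. The sketch below uses the standard arc-length formula for a polar curve, L = ∫ √(r² + (dr/dθ)²) dθ, with a simple midpoint rule. The function names are mine, and I use the r = sin(6θ) + 2 curve from the animation as the example.

```python
import math

def polar_arc_length(r, dr, a, b, n=100_000):
    """Midpoint-rule estimate of the length of the curve r(theta), theta in [a, b]."""
    h = (b - a) / n
    total = 0.0
    for k in range(n):
        theta = a + (k + 0.5) * h
        total += math.hypot(r(theta), dr(theta)) * h
    return total

def r(theta):
    return math.sin(6 * theta) + 2

def dr(theta):
    return 6 * math.cos(6 * theta)  # derivative of r with respect to theta

length = polar_arc_length(r, dr, -math.pi, math.pi)
print(length)       # length measured along the flower-shaped curve
print(4 * math.pi)  # circumference of the r = 2 circle, for comparison
```

The curve is longer than the r = 2 circle it wiggles around, and its length is much larger than any value r itself takes (r never exceeds 3), which is the point: r is not the distance traveled.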

Hmm… What’s the deal here?

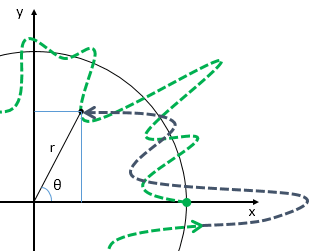

Frankly, I am not sure. Now that I look at it once more, I note that the exercise with our graph above involved one cycle of a periodic function only—so it’s really not like some object traveling in spacetime, because that’s not a periodic thing. But… Well… Does that matter all that much? It’s easy to imagine how our new representation would just involve some thing that keeps going around and around, as illustrated below.

So, in this representation, any movement in spacetime – regular or irregular – does become something periodic. But what is periodic here? My first answer is the simplest and, hence, probably the correct one: it’s just time. Time is the periodic thing here.

Having said that, I immediately thought of something else that’s periodic: the wavefunction that’s associated with this object (any object traveling in spacetime, really) is periodic too. So my gut instinct tells me there’s something here that we might want to explore further. 🙂 Could we replace the function for the trajectory with the wavefunction?

Huh? Yes. The wavefunction also associates a value with each x and t, although the association is a bit more complex – literally, because we’ll associate each x and t with two periodic functions: the real part and the imaginary part of the (complex-valued) wavefunction. But for the rest, no problem, I’d say. Remember our wavefunction, when squared, represents the probability of our object being there. [I should say “absolute-squared” rather than squared, but that sounds so weird.]

But… Yes? Well… Don’t we get in trouble here because the same complex number (i.e. r·e^(iθ) = x + i·y) may be related to two points in spacetime, as shown in the example above? My answer is the same: I don’t think so. It’s the same thing: our new representation implies stuff keeps going around and around in it. In fact, that just captures the periodicity of the wavefunction. So… Well… It’s fine. 🙂
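The "two points, one complex number" issue is just the 2π-periodicity of r·e^(iθ), and it takes only a few lines to see it. This is a minimal sketch with made-up values for r and θ. The helper `split_angle` is my own, and simply keeps track of the number of full turns, which is the information the complex number itself forgets.

```python
import cmath
import math

r, theta = 2.0, 0.75  # arbitrary illustrative values

z_first = cmath.rect(r, theta)                 # r * e^(i*theta)
z_second = cmath.rect(r, theta + 2 * math.pi)  # same point, one full turn later
print(cmath.isclose(z_first, z_second, rel_tol=1e-12))

def split_angle(total_angle):
    """Split an accumulated angle into (number of full turns, remaining angle)."""
    turns, remainder = divmod(total_angle, 2 * math.pi)
    return int(turns), remainder

print(split_angle(theta))                # zero full turns
print(split_angle(theta + 2 * math.pi))  # one full turn, same remaining angle
```

So the complex number alone cannot tell the two moments apart, but the pair (number of turns, remaining angle) can.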

The more important question is: what can we do with this new representation? Here I do not have any good answer. Nothing much for the moment. I just wanted to jot it down, because it triggers some deep thoughts—things I don’t quite understand myself, as yet.

First, I like the connection between a regular trajectory in spacetime – as represented by a well-defined function – and the unique direction in time it implies. It’s a simple thing: we know something can travel in any direction in space – forward, backwards, sideways, whatever – but time has one direction only. At least we can see why now: both in Cartesian as well as polar coordinates, we’d want to see a well-behaved function. 🙂 Otherwise we couldn’t work with it.

Another thought is the following. We associate the momentum of a particle with a linear trajectory in spacetime. But what’s linear in curved spacetime? Remember how we struggle to represent – or imagine, I would say – curved spacetime, as evidenced by the fact that most illustrations of curved spacetime represent a two-dimensional space in three-dimensional Cartesian space? Think of the typical illustration, like that rubber surface with the ball deforming it.

That’s why this transformation of a Cartesian coordinate space into a polar coordinate space is such an interesting exercise. We now measure distance along the circle. [Note that we suddenly need to keep track of the number of rotations, which we can do by keeping track of time, as time units become some angle, and linear speed becomes angular speed.] The whole thing underscores, in my view, that it’s only our mind that separates time and space: the reality of the object is just its movement or velocity – and that’s one movement.

My gut instinct tells me that this is what the periodicity of the wavefunction (or its component waves, I should say) captures, somehow. If the movement is linear, it’s linear both in space as well as in time, so to speak:

- As a mental construct, time is always linear – it goes in one direction (and we think of the clock being regular, i.e. not slowing down or speeding up) – and, hence, the mathematical qualities of the time variable in the wavefunction are the same as those of the position variable: it’s a factor in one of its two terms. To be precise, it appears as the t in the E·t term in the argument θ = E·t – p·x (written here in units in which ħ = 1). [Note the minus sign appears because we measure angles counter-clockwise when using polar coordinates or complex numbers.]

- The trajectory in space is also linear – whether or not space is curved because of the presence of other masses.

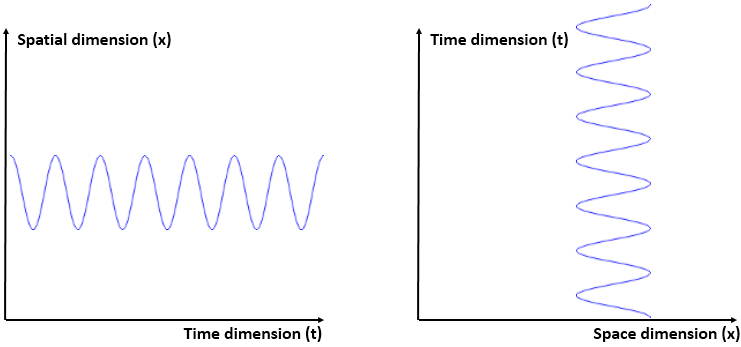

OK. I should conclude here, but I want to take this conversation one step further. Think of the two graphs below as representing some oscillation in space. Some object that goes back and forth in space: it accelerates and decelerates, and reverses direction. Imagine the g-forces on it as it does so: if you’d be traveling with that object, you would sure feel it’s going back and forth in space! The graph on the left-hand side is our usual perspective on stuff like this: we measure time using some steadily ticking clock, and so the seconds, minutes, hours, days, etcetera just go by.

The graph on the right-hand side applies our inversion technique. But, frankly, it’s the same thing: it doesn’t give us any new information. It doesn’t look like a well-behaved function but it actually is. It’s just a matter of mathematical convention: if we’d be used to looking at the y-axis as the independent variable (rather than the dependent variable), the function would be acceptable.

This leads me to the idea I started to explore in my previous post, and that’s to try to think of wavefunctions as oscillations of spacetime, rather than oscillations in spacetime. I inserted the following graph in that post—but it doesn’t say all that much, as it suggests we’re doing the same thing here: we’re just swapping axes. The difference is that the θ in the first graph now combines both time and space. We might say it represents spacetime itself. So the wavefunction projects it into some other ‘space’, i.e. the complex space. And then in the second graph, we reflect the whole thing.

So the idea is the following: our functions sort of project one ‘space’ into another ‘space’. In this case: the wavefunction sort of transforms spacetime – i.e. what we like to think of as the ‘physical’ space – into a complex space – which is purely mathematical.

Hmm… This post is becoming way too long, so I need to wrap it up. Look at the graph below, and note the dimension of the axes. We’re looking at an oscillation once more, but an oscillation of time this time around.

Huh? Yes. Imagine that, for some reason, you don’t feel those g-forces while going up and down in space: it’s the rest of the world that’s moving. You think you’re stationary or, what amounts to the same according to the relativity principle, moving in a straight line at constant velocity. The only way you could explain the rest of the world moving back and forth, accelerating and decelerating, is that time itself is oscillating: objects reverse their direction for no apparent reason (so that’s time reversal) and they do so at varying speeds, so we’ve got a clock going wild!

You’ll nod your head in agreement now and say: that’s Einstein’s intuition in regard to the gravitational force. There’s no force really: mass just bends spacetime in such a way that a planet in orbit follows a straight line, in a curved spacetime continuum. What I am saying here is that there must be ways to think of the electromagnetic force in exactly the same way. If the accelerations and decelerations of an electron moving in some orbital would really be due to an electromagnetic force in the classical picture of a force (i.e. something pulling or pushing), then it would radiate energy away. We know it doesn’t do that, because otherwise it would spiral down into the nucleus itself. So I’ve been thinking it must be traveling in its own curved spacetime, but then it’s curved because of the electromagnetic force, obviously, as that’s the force that’s much more relevant at this scale.

The underlying thought is simple enough: if gravity curves spacetime, why can’t we look at the other forces as doing the same? Why can’t we think of any force coming ‘with its own space’, so to say? The difference between the various forces is the curvature – which will, obviously, be much more complex (literally) for the electromagnetic force. Just think of the other forces as curving space in more than one dimension. 🙂

I am sure you’ll think I’ve just gone crazy. Perhaps. In any case, I don’t care too much. As mentioned, because the electromagnetic force is different—we don’t have negative masses attracting positive masses when discussing gravity—it’s going to be a much weirder type of curvature, but… Well… That’s probably why we need those ‘two-dimensional’ complex numbers when discussing quantum mechanics! 🙂 So we’ve got some more mathematical dimensions, but the physical principle behind all forces should be the same, no? All forces are measured using Newton’s Law, so we relate them to the motion of some mass. The principle is simple: if force is related to the change in motion of a mass, then the trajectory in the space that’s related to that force will be linear if the force is not acting.

So… Well… Hmm… What?

All of what I write above is a bit of a play with words, isn’t it? An oscillation of spacetime, but then spacetime must oscillate in something else, doesn’t it? So in what then is it oscillating?

Great question. You’re right. It must be oscillating in something else or, to be precise, we need some other reference space so as to define what we mean by an oscillation of spacetime. That space is going to be some complex mathematical space—and I use complex both in its mathematical as well as in its everyday meaning here (complicated). Think of, for example, that x-axis representing three-dimensional space. We’d have something similar here: dimensions within dimensions.

There are some great videos on YouTube that illustrate how one can turn a sphere inside out without punching a hole in it. That’s basically what we’re talking about here: it’s more than just switching the range for the domain of a function, which we can do by that reflection – or mirroring – using the 45° line. Conceptually, it’s really like turning a sphere inside out. Think of the surface of the curve connecting the two spaces.

Huh? Yes. But… Well… You’re right. Stuff like this is for the graduate level, I guess. So I’ll let you think about it—and do watch the videos that follow it. 🙂

In any case, I have to stop my wandering about here. Rather than wrapping up, however, I thought of something else yesterday—and so I’ll quickly jot that down as well, so I can re-visit it some other time. 🙂

Some other thinking on the Uncertainty Principle

I wanted to jot down something else too here. Something about the Uncertainty Principle once more. In my previous post, I noted we should think of Planck’s constant as expressing itself in time or in space, as we have two ways of looking at the dimension of Planck’s constant:

- [Planck’s constant] = [ħ] = N∙m∙s = (N∙m)∙s = [energy]∙[time]

- [Planck’s constant] = [ħ] = N∙m∙s = (N∙s)∙m = [momentum]∙[distance]

The bracket symbols [ and ] mean: ‘the dimension of what’s between the brackets’. Now, this may look like kids stuff, but the idea is quite fundamental: we’re thinking here of some amount of action (ħ, i.e. the quantum of action) expressing itself in time or, alternatively, expressing itself in space, indeed. In the former case, some amount of energy is expended during some time. In the latter case, some momentum is expended over some distance. We also know ħ can be written in terms of fundamental units, which are referred to as Planck units:

ħ = F_P∙l_P∙t_P = Planck force unit × Planck distance unit × Planck time unit
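This product is easy to verify numerically. The sketch below assumes the standard definitions of the Planck units, which are not spelled out in this post: l_P = √(ħG/c³), t_P = √(ħG/c⁵) and F_P = c⁴/G. The constants are the CODATA values for ħ and G, plus the exact value of c. It also groups the product in the two ways discussed above.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, in J*s
C = 299792458.0         # speed of light, in m/s (exact by definition)
G = 6.67430e-11         # Newtonian constant of gravitation, in m^3 kg^-1 s^-2

# Standard definitions of the Planck units (assumed, not taken from the post).
l_P = math.sqrt(HBAR * G / C**3)  # Planck length, in m
t_P = math.sqrt(HBAR * G / C**5)  # Planck time, in s
F_P = C**4 / G                    # Planck force, in N

energy_times_time = (F_P * l_P) * t_P        # (N*m)*s: action expressed in time
momentum_times_distance = (F_P * t_P) * l_P  # (N*s)*m: action expressed in space

print(l_P, t_P, F_P)
print(energy_times_time, momentum_times_distance, HBAR)
```

Both groupings give back ħ, up to floating-point rounding, which is all the two dimensional formulas above are saying.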

Finally, we thought of the Planck distance unit and the Planck time unit as the smallest units of time and distance possible. As such, they become countable variables, so we’re talking of a trajectory in terms of discrete steps in space and time here, or discrete states of our particle. As such, the E·t and p·x in the argument (θ) of the wavefunction—remember: θ = (E/ħ)·t − (p/ħ)·x—should be some multiple of ħ as well. We may write:

E·t = m·ħ and p·x = n·ħ, with m and n both positive integers

Of course, there’s uncertainty: Δp·Δx ≥ ħ/2 and ΔE·Δt ≥ ħ/2. Now, if Δx and Δt also become countable variables, so Δx and Δt can only take on values like ±1, ±2, ±3, ±4, etcetera, then we can think of trying to model some kind of random walk through spacetime, combining various values for n and m, as well as various values for Δx and Δt. The relation between E and p, and the related difference between m and n, should determine in what direction our particle should be moving even if it can go along different trajectories. In fact, Feynman’s path integral formulation of quantum mechanics tells us it’s likely to move along different trajectories at the same time, with each trajectory having its own amplitude. Feynman’s formulation uses continuum theory, of course, but a discrete analysis – using a random walk approach – should yield the same result because, when everything is said and done, the fact that physics tells us time and space must become countable at some scale (the Planck scale), suggests that continuum theory may not represent reality, but just be an approximation: a limiting situation, in other words.
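Just to fix ideas, here is what the most naive version of such a random walk could look like. This is a toy sketch under my own assumptions, not a derivation. Time and position are integer multiples of the Planck units, time always advances by one step, and position moves by +1 or −1. The parameter `p_forward` is a hypothetical stand-in for whatever the relation between E and p would dictate. It uses classical probabilities, not the complex amplitudes of Feynman’s path integral.

```python
import random

def random_walk(steps, p_forward=0.5, seed=0):
    """Return a list of (t, x) pairs, with t and x counted in Planck units."""
    rng = random.Random(seed)  # seeded, so the walk is reproducible
    t, x = 0, 0
    path = [(t, x)]
    for _ in range(steps):
        t += 1                                       # time only goes one way
        x += 1 if rng.random() < p_forward else -1   # one discrete step in space
        path.append((t, x))
    return path

unbiased = random_walk(1000)
biased = random_walk(1000, p_forward=0.8)
print(unbiased[-1])  # final position of the unbiased walk
print(biased[-1])    # the biased walk drifts in the positive x direction
```

A more serious attempt would attach an amplitude to each path rather than a probability to each step, but that is for later.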

Hmmm… Interesting… I’ll need to do something more with this. Unfortunately, I have little time over the coming weeks. Again, I am just writing it down to re-visit it later—probably much later. 😦