Last year’s (2022) Nobel Prize in Physics went to Alain Aspect, John Clauser, and Anton Zeilinger “for experiments with entangled photons, establishing the violation of Bell inequalities and pioneering quantum information science.”

I did not think much of that award last year. Proving that Bell’s No-Go Theorem cannot be right? Great. Finally! I think many scientists – including Bell himself – already knew this theorem was a typical GIGO argument: garbage in, garbage out. As the young Louis de Broglie famously wrote in the introduction of his thesis: hypotheses are worth only as much as the consequences that can be deduced from them, and the consequences of Bell’s Theorem did not make much sense. As I wrote in my post on it, Bell himself did not think much of his own theorem until, of course, he got nominated for a Nobel Prize: it is a bit hard to say you got nominated for a Nobel Prize for a theory you do not believe in yourself, isn’t it? In any case, Bell’s Theorem has now been experimentally disproved. That is – without any doubt – a rather good thing. 🙂 To save the face of the Nobel committee here (why give an award for something that disproves something else to which you would have given an award a few decades ago?): Bell would have gotten a Nobel Prize, but he died of a brain hemorrhage in 1990, and Nobel Prizes are awarded to the living only.

As for entanglement, I repeat what I have written many times already: the concept of entanglement – for which these scientists got a Nobel Prize last year – is just a fancy word for the simultaneous conservation of energy, linear and angular momentum (and – if we are talking about matter-particles – charge). There is no ‘spooky action at a distance’, as Einstein would derogatorily describe it when the idea was first mentioned to him. So, I do not see why a Nobel Prize should be awarded for rephrasing a rather logical outcome of photon experiments in metamathematical terms.

Finally, the Nobel Prize committee writes that this work has made a significant contribution to quantum information science. I wrote a paper on the quantum computing hype, in which I basically ask the following question. Qubits may or may not be better devices than MOSFETs for storing data (they are not, and they probably never will be), but that is not the point. The point is: how does quantum information change the two-, three- or n-valued or other rule-based logic that is inherent to the processing of information? I wish the Nobel Prize committee could be somewhat more explicit on that because, when everything is said and done, one of the objectives of the Prize is to educate the general public about the advances of science, isn’t it?

However, all this ranting of mine is, of course, unimportant. We know that it took the distinguished Royal Swedish Academy of Sciences more than 15 years to even recognize the genius of an Einstein, so it was already clear back then that their selection criteria were not necessarily rational. [Einstein finally got a well-deserved Nobel Prize, not for relativity theory (strangely enough: if there is one thing all physicists agree on, it is that relativity theory is the bedrock of all of physics, isn’t it?), but for a much less-noted paper on the photoelectric effect – in 1922: 17 years after his annus mirabilis papers had caused a sensation not only in academic circles but also in the headlines of major newspapers, and 12 years after fellow scientists had first nominated him for it (1910).]

Then again, Mahatma Gandhi never got a Nobel Peace Prize (so Einstein should consider himself lucky to have gotten a Nobel Prize at all, right?), while Ursula von der Leyen might be getting one for supporting the war with Russia, so I must remind myself that we do live in a funny world and that, perhaps, we should not try to make sense of these rather weird historical things. 🙂

Let me turn to the main reason why I am writing this indignant post. It is this: I am utterly shocked by what Dr. John Clauser has done with his newly gained scientific prestige: he joined the CO2 coalition! For those who have never heard of it, it is a coalition of climate change deniers. A bunch of people who:

(1) vehemently deny the one and only consensus amongst all climate scientists, namely that the average temperature on Earth has risen by about two degrees Celsius since the Industrial Revolution, and

(2) say that, if climate change were real (God forbid!), we could reverse the trend through easy geo-engineering. We just need to use directed energy or whatever to create more white clouds. If that doesn’t work, then… Well… CO2 makes trees and plants grow, so it will all sort itself out.

[…]

Yes. That is, basically, what Dr. Clauser and all the other scientific advisors of this lobby group – none of whom has any credentials in the field they are criticizing (climate science) – are saying, and they say it loud and clear. That is weird enough already. What is even weirder is that – to my surprise – a lot of people are actually buying such nonsense.

Frankly, I have not felt angry for a while, but this thing triggered an outburst of mine on YouTube, in which I state clearly what I think of Dr. Clauser and other eminent scientists who abuse their saint-like Nobel Prize status in society to deceive the general public. Watch my video rant, and think about it for yourself. Now, I am not interested in heated discussions on it: I know the basic facts. If you don’t, I listed them here. Please look at the basic graphs and measurements before you argue with me on this! To be clear: I will not entertain violent or emotional reactions to this post or my video. In fact, I will delete them, here on WordPress as well as on my YouTube channel. Yes. For the first time in 10 years or so, I will exercise my right as the moderator of my channels. 🙂

[…]

I will now calm down and write something about the mainstream interpretation of quantum physics again. 🙂 In fact, this morning I woke up with a joke in my head. You will probably think the joke is not very good, but then I am not a comedian: it is what it is, and you can judge for yourself. The idea is that you’d learn something from it. Perhaps. 🙂 So, here we go.

Imagine a shooting practice somewhere. A soldier fires at a target with a fine gun, and then everyone looks at the spread of the hits around the bullseye. The quantum physicist says: “See: this is the Uncertainty Principle at work! What is the linear momentum of these bullets, and what is the distance to the target? Let us calculate the standard error.” The soldier looks astonished and says: “No. This gun is no good. One of the engineers should check it.” Then the drill sergeant says: “The gun is fine. From this distance, all bullets should have hit the bullseye. You are a miserable shooter and you should really practice a lot more.” He then turns to the academic and says: “How did you get in here? I do not understand a word of what you just said and, if I did, it would be of no use whatsoever. Please bugger off asap!”

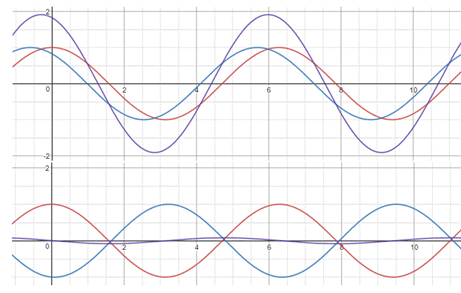

This is a stupid joke, perhaps, but there is a fine philosophical point to it: uncertainty is not inherent to Nature, and it serves no purpose whatsoever in engineering or in science in general. All in Nature is deterministic. Statistically deterministic, but deterministic nevertheless. We may not know the initial conditions of the system, and that translates into seemingly random behavior, but if there is a pattern in that behavior (a diffraction pattern, in the case of electron or photon diffraction), then the conclusion should be that there is no such thing as metaphysical ‘uncertainty’. In fact, if you abandon the principle of determinism, then there is no point in trying to discover the laws of the Universe, is there? Because if Nature is uncertain, then there are no laws, right? 🙂

To underscore this point, I will, once again, remind you of what Heisenberg originally wrote about uncertainty. He wrote in German and distinguished three very different ideas of uncertainty:

(1) The precision of our measurements may be limited: Heisenberg originally referred to this as an Ungenauigkeit (imprecision).

(2) Our measurement might disturb the position and thereby cause information to be lost, which introduces an uncertainty in our knowledge, but not in reality. Heisenberg originally referred to such uncertainty as an Unbestimmtheit (indeterminacy).

(3) One may also think the uncertainty is inherent to Nature: that is what Heisenberg referred to as Ungewissheit (uncertainty). There is nothing in Nature – and also nothing in Heisenberg’s writings, really – that warrants elevating this Ungewissheit to a dogma of modern physics. Why? Because it is the equivalent of a religious conviction, like the belief that God exists or that He does not (both are theses we cannot prove: Ryle labeled such hypotheses ‘category mistakes’).

Indeed, when one reads the proceedings of the Solvay Conferences of the late 1920s, the 1930s and immediately after WW II (see my summary of them in https://www.researchgate.net/publication/341177799_A_brief_history_of_quantum-mechanical_ideas), it is pretty clear that none of the first-generation quantum physicists believed in such a dogma or, if they did, that they also thought what I am writing here: that it should not be part of science but part of one’s personal religious beliefs.

So, once again, I repeat that this concept of entanglement – for which John Clauser got a Nobel Prize last year – is in the same category: it is just a fancy word for the simultaneous conservation of energy, linear and angular momentum, and charge. There is ‘no spooky action at a distance’, as Einstein would derogatorily describe it when the idea was first mentioned to him.

Let me end by noting the dishonor of Nobel Prize winner John Clauser once again. Climate change is real: we are right in the middle of it, and it is going to get a lot worse before it gets any better – if it is ever going to get better (which, in my opinion, is a rather big ‘if’…). So, no matter how many Nobel Prize winners deny it, they cannot change the fact that the average temperature on Earth has risen by about 2 degrees Celsius since 1850 already. The question is not: is climate change happening? No. The question now is: how do we adapt to it – and that is an urgent question – and, then, the question is: can we, perhaps, slow down the trend, and how? In short, if these scientists from physics or the medical field or whatever other field they excel in are true and honest scientists, then they would do a great favor to mankind not by advocating geo-engineering schemes to reverse a trend they actually deny is there, but by helping to devise and promote practical measures that allow communities affected by natural disasters to recover from them better.

So, I’ll conclude this rant by repeating what I think of all of this, loud and clear: John Clauser and the other scientific advisors of the CO2 coalition are a disgrace to what goes by the name of ‘science’, and this umpteenth ‘incident’ in the history of science or logical thinking makes me think that it is about time the Royal Swedish Academy of Sciences did some serious soul-searching when, amongst the many nominations, it selects its candidates for a prestigious award like this. Alfred Nobel – one of those geniuses who regretted that his great contribution to science and technology was (also) (ab)used to increase the horrors of war – must have turned in his grave too many times by now…