Pre-script (dated 26 June 2020): Our ideas have evolved into a full-blown realistic (or classical) interpretation of all things quantum-mechanical. In addition, I note the dark force has amused himself by removing some material. So no use to read this. Read my recent papers instead. 🙂

Original post:

We didn’t get very far in our first post on Feynman’s Seminar on Superconductivity, and then I shifted my attention to other subjects over the past few months. So… Well… Let me re-visit the topic here.

One of the difficulties one encounters when trying to read this so-called seminar—which, according to Feynman, is ‘for entertainment only’ and, therefore, not really part of the Lectures themselves—is that Feynman throws in a lot of stuff that is not all that relevant to the topic itself but… Well… He apparently didn’t manage to throw all that he wanted to throw into his (other) Lectures on Quantum Mechanics and so he inserted a lot of stuff which he could, perhaps, have discussed elsewhere.  So let us try to re-construct the main lines of reasoning here.

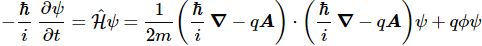

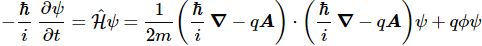

The first equation is Schrödinger’s equation for some particle with charge q that is moving in an electromagnetic field that is characterized not only by the (scalar) potential Φ but also by a vector potential A:

This closely resembles Schrödinger’s equation for an electron that is moving in an electric field only, which we used to find the energy states of electrons in a hydrogen atom: i·ħ·∂ψ/∂t = −(1/2)·(ħ²/m)∇²ψ + V·ψ. We just need to note the following:

- On the left-hand side, we can, obviously, replace −1/i by i.

- On the right-hand side, we can replace V by q·Φ, because the potential of a charge in an electric field is the product of the charge (q) and the (electric) potential (Φ).

- As for the other term on the right-hand side (so that’s the −(1/2)·(ħ²/m)∇²ψ term), we can re-write −ħ²·∇²ψ as [(ħ/i)·∇]·[(ħ/i)·∇]ψ because (1/i)·(1/i) = 1/i² = 1/(−1) = −1. 🙂

- So all that’s left now, is that additional −q·A term in the (ħ/i)∇ − q·A expression. In our post, we showed that’s easily explained because we’re talking magnetodynamics: we’ve got to allow for the possibility of changing magnetic fields, and so that’s what the −q·A term captures.

Now, the latter point is not so easy to grasp but… Well… I’ll refer you to that first post of mine, in which I show that some charge in a changing magnetic field will effectively gather some extra momentum, whose magnitude will be equal to p = m·v = −q·A. So that’s why we need to introduce another momentum operator here, which is the (ħ/i)·∇ − q·A expression we just discussed.
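Putting the four remarks in the list above together, the equation can be reconstructed as follows. This is a sketch assembled only from the pieces named in the text (the −1/i factor on the left-hand side, the q·Φ term, and the (ħ/i)∇ − q·A expression applied twice), so take it as a reconstruction rather than a copy of Feynman's typesetting:

```latex
-\frac{\hbar}{i}\,\frac{\partial\psi}{\partial t}
= \frac{1}{2m}
  \left(\frac{\hbar}{i}\nabla - q\mathbf{A}\right)\cdot
  \left(\frac{\hbar}{i}\nabla - q\mathbf{A}\right)\psi
  + q\Phi\,\psi
```

Setting A = 0 and writing V for q·Φ gives back i·ħ·∂ψ/∂t = −(1/2)·(ħ²/m)∇²ψ + V·ψ, which is the consistency check the list is walking through.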

OK. Next. But… Then… Well… All of what follows are either digressions—like the section on the local conservation of probabilities—or, else, quite intuitive arguments. Indeed, Feynman does not give us the nitty-gritty of the Bardeen-Cooper-Schrieffer theory, nor is the rest of the argument nearly as rigorous as the derivation of the electron orbitals from Schrödinger’s equation in an electrostatic field. So let us closely stick to what he does write, and try our best to follow the arguments.

Cooper pairs

The key assumption is that there is some attraction between electrons which, at low enough temperatures, can overcome the Coulomb repulsion. Where does this attraction come from? Feynman does not give us any clues here. He just makes a reference to the BCS theory but notes this theory is “not the subject of this seminar”, and that we should just “accept the idea that the electrons do, in some manner or other, work in pairs”, and that “we can think of those pairs as behaving more or less like particles”, and that “we can, therefore, talk about the wavefunction for a pair.”

So we have a new particle, so to speak, which consists of two electrons that move through the conductor as one. To be precise, the electron pair behaves as a boson. Now, bosons have integer spin. According to the spin addition rule, we have four possibilities here but only three possible values: +1/2 + 1/2 = +1; −1/2 + 1/2 = 0; +1/2 − 1/2 = 0; −1/2 − 1/2 = −1. Of course, it is tempting to think these Cooper pairs are just like the electron pairs in the atomic orbitals, whose spin is always opposite because of the Pauli exclusion principle. Feynman doesn’t say anything about this, but the Wikipedia article on the BCS theory notes that the two electrons in a Cooper pair are, effectively, correlated because of their opposite spin. Hence, we must assume the Cooper pairs effectively behave like spin-zero particles.
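The counting above (four combinations, three distinct values) is easy to check by brute force. The snippet below is only an illustrative sketch: it adds the spin projections of two spin-1/2 particles, and the function name is made up for this example.

```python
from fractions import Fraction
from itertools import product


def pair_spin_projections():
    """Return the summed spin projections for all (+1/2, -1/2) combinations."""
    half = Fraction(1, 2)
    # Each electron contributes +1/2 or -1/2; product() enumerates the 4 cases.
    return [first + second for first, second in product((half, -half), repeat=2)]


sums = pair_spin_projections()
print("combinations:", [str(s) for s in sums])
print("distinct values:", sorted({str(s) for s in sums}))
```

Using `Fraction` keeps the halves exact, so the two zero sums compare equal without any floating-point rounding.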

Now, unlike fermions, bosons can collectively share the same energy state. In fact, they tend to pile into the same state, forming what is referred to as a Bose-Einstein condensate. As Feynman puts it: “Since electron pairs are bosons, when there are a lot of them in a given state there is an especially large amplitude for other pairs to go to the same state. So nearly all of the pairs will be locked down at the lowest energy in exactly the same state—it won’t be easy to get one of them into another state. There’s more amplitude to go into the same state than into an unoccupied state by the famous factor √n, where n−1 is the occupancy of the lowest state. So we would expect all the pairs to be moving in the same state.”

Of course, this only happens at very low temperatures, because even if the thermal energy is very low, it will give the electrons sufficient energy to ensure the attractive force is overcome and all pairs are broken up. It is only at very low temperature that they will pair up and go into a Bose-Einstein condensate. Now, Feynman derives this √n factor in a rather abstruse introductory Lecture in the third volume, and I’d advise you to google other material on Bose-Einstein statistics because… Well… The mentioned Lecture is not among Feynman’s finest. OK. Next step.
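To get a feel for that √n factor, here is a small sketch. It assumes the usual convention from the quote above: if n − 1 bosons already sit in a state, the amplitude for one more to join is larger by √n, and the probability (the absolute square) is therefore larger by n. The function name is hypothetical.

```python
import math


def bose_enhancement(occupancy):
    """Return (amplitude_factor, probability_factor) for one more boson
    joining a state that already holds `occupancy` bosons."""
    if occupancy < 0:
        raise ValueError("occupancy must be non-negative")
    n = occupancy + 1  # the quote's n, with n - 1 the current occupancy
    return math.sqrt(n), float(n)


for occupancy in (0, 1, 99, 10**6 - 1):
    amplitude, probability = bose_enhancement(occupancy)
    print(f"occupancy {occupancy}: amplitude x{amplitude:g}, probability x{probability:g}")
```

For an empty state both factors are 1, so there is no enhancement; it only becomes dramatic when the occupancy is macroscopic, which is the situation in the condensate.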

Cooper pairs and wavefunctions

We know the probability of finding a Cooper pair is equal to the absolute square of its wavefunction. Now, it is very reasonable to assume that this probability will be proportional to the charge density (ρ), so we can write:

|ψ|² = ψψ* ∼ ρ(r)

The argument here (r) is just the position vector. The next step, then, is to write ψ as the square root of ρ(r) times a phase factor with phase θ. Abstracting away from time, this phase will also depend on r, of course. So this is what Feynman writes:

ψ = ρ(r)^(1/2)·e^(i·θ(r))

As Feynman notes, we can write any complex function of r like this but… Well… The charge density is, obviously, something real. Something we can measure, so we’re not writing the obvious here. The next step is even less obvious.

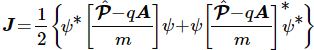

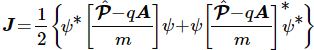

In our first post, we spent quite some time on Feynman’s digression on the local conservation of probability and… Well… I wrote above I didn’t think this digression was very useful. It now turns out it’s a central piece in the puzzle that Feynman is trying to solve for us here. The key formula here is the one for the so-called probability current, which—as Feynman shows—we write as:

This current J can also be written as:

Now, Feynman skips all of the math here (he notes “it’s just a change of variables” and doesn’t want to go through all of the algebra), and so I’ll just believe him when he says that, when substituting our wavefunction ψ = ρ(r)^(1/2)·e^(i·θ(r)) for ψ, we can express this ‘current’ (J) in terms of ρ and θ. To be precise, he writes J as:

J = (ħ/m)·[∇θ − (q/ħ)·A]·ρ
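The change of variables that Feynman skips can be sketched in a few lines. The sketch assumes the probability current has the standard symmetrized form in the first line, with the (ħ/i)∇ − q·A operator from earlier in this post written as a single symbol; the intermediate steps are a reconstruction, not Feynman's text:

```latex
\begin{aligned}
\mathbf{J} &= \frac{1}{2m}\left[\psi^{*}\,\hat{\mathcal{P}}\psi
              + \psi\,\bigl(\hat{\mathcal{P}}\psi\bigr)^{*}\right]
            = \frac{1}{m}\,\mathrm{Re}\!\left[\psi^{*}\,\hat{\mathcal{P}}\psi\right],
\qquad \hat{\mathcal{P}} = \frac{\hbar}{i}\nabla - q\mathbf{A} \\[4pt]
\nabla\psi &= \left(\frac{\nabla\rho}{2\sqrt{\rho}}
              + i\sqrt{\rho}\,\nabla\theta\right)e^{i\theta}
\qquad\text{for}\qquad \psi = \sqrt{\rho}\,e^{i\theta} \\[4pt]
\psi^{*}\,\hat{\mathcal{P}}\psi &= \hbar\rho\,\nabla\theta
              - q\rho\,\mathbf{A} - \frac{i\hbar}{2}\,\nabla\rho \\[4pt]
\mathbf{J} &= \frac{\hbar}{m}\left(\nabla\theta
              - \frac{q}{\hbar}\mathbf{A}\right)\rho
\end{aligned}
```

The ∇ρ term is purely imaginary, so it drops out when we take the real part. That is why only ρ itself and the gradient of the phase survive in the current.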

So what? Well… It’s really fascinating to see what happens next. While J was some rather abstract concept so far (what’s a probability current, really?), Feynman now suggests we may want to think of it as a very classical electric current: the charge density times the velocity of the fluid of electrons. Hence, we equate J to J = ρ·v. Now, if the equation above holds true, but J is also equal to J = ρ·v, then the equation above is equivalent to:

m·v = ħ∇θ − q·A

Now, that gives us a formula for ħ∇θ. We write:

ħ∇θ = m·v + q·A
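If you don't want to take the algebra on faith, you can check the relation numerically in one dimension. The sketch below is an illustration only: it assumes the symmetrized form of the current, J = (1/m)·Re[ψ*·((ħ/i)·d/dx − q·A)ψ], sets ħ = m = q = 1, and uses arbitrary made-up profiles for ρ(x), θ(x) and A(x).

```python
import cmath
import math

HBAR, M, Q = 1.0, 1.0, 1.0  # illustrative units, not physical values


def rho(x):
    return 1.0 + 0.3 * math.sin(x)      # arbitrary positive density


def theta(x):
    return 0.5 * x + 0.2 * math.cos(x)  # arbitrary phase profile


def vec_a(x):
    return 0.1 * x                      # arbitrary vector potential component


def psi(x):
    return math.sqrt(rho(x)) * cmath.exp(1j * theta(x))


def derivative(f, x, h=1e-5):
    """Central finite difference; works for real and complex functions."""
    return (f(x + h) - f(x - h)) / (2 * h)


def current_from_psi(x):
    # J = (1/m) Re[psi* ((hbar/i) d/dx - q A) psi]
    p_psi = (HBAR / 1j) * derivative(psi, x) - Q * vec_a(x) * psi(x)
    return (psi(x).conjugate() * p_psi).real / M


def current_from_phase(x):
    # J = (hbar/m) [dtheta/dx - (q/hbar) A] rho
    return (HBAR / M) * (derivative(theta, x) - (Q / HBAR) * vec_a(x)) * rho(x)


for x in (-1.0, 0.3, 2.0):
    v = current_from_psi(x) / rho(x)    # velocity from J = rho * v
    print(x, M * v + Q * vec_a(x), HBAR * derivative(theta, x))
```

The last two columns should agree to within the finite-difference error, which is the ħ∇θ = m·v + q·A statement for this toy profile.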

Now, in my previous post on this Seminar, I noted that Feynman attaches a lot of importance to this m·v + q·A quantity because… Well… It’s actually an invariant quantity. The argument can be, very briefly, summarized as follows. During the build-up of (or a change in) a magnetic flux, a charge will pick up some (classical) momentum that is equal to p = m·v = −q·A. Hence, the m·v + q·A sum is zero, and so… Well… That’s it, really: it’s some quantity that… Well… It has a significance in quantum mechanics. What significance? Well… Think of what we’ve been writing here. The v and the A have a physical significance, obviously. Therefore, that phase factor θ(r) must also have a physical significance.

But the question remains: what physical significance, exactly? Well… Let me quote Feynman here:

“The phase is just as observable as the charge density ρ. It is a piece of the current density J. The absolute phase (θ) is not observable, but if the gradient of the phase (∇θ) is known everywhere, then the phase is known except for a constant. You can define the phase at one point, and then the phase everywhere is determined.”

That makes sense, doesn’t it? But it still doesn’t quite answer the question: what is the physical significance of θ(r). What is it, really? We may be able to answer that question after exploring the equations above a bit more, so let’s do that now.

Superconductivity

The phenomenon of superconductivity itself is easily explained by the mentioned condensation of the Cooper pairs: they all go into the same energy state. They form, effectively, a superconducting fluid. Feynman’s description of this is as follows:

“There is no electrical resistance. There’s no resistance because all the electrons are collectively in the same state. In the ordinary flow of current you knock one electron or the other out of the regular flow, gradually deteriorating the general momentum. But here to get one electron away from what all the others are doing is very hard because of the tendency of all Bose particles to go in the same state. A current once started, just keeps on going forever.”

Frankly, I’ve re-read this a couple of times, but I don’t think it’s the best description of what we think is going on here. I’d rather compare the situation to… Well… Electrons moving around in an electron orbital. That doesn’t involve any radiation or energy transfer either. There’s just movement. Flow. The kind of flow we have in the wavefunction itself. Here I think the video on Bose-Einstein condensates on the French Tout est quantique site is quite instructive: all of the Cooper pairs join to become one giant wavefunction, one superconducting fluid, really. 🙂

OK… Next.

The Meissner effect

Feynman describes the Meissner effect as follows:

“If you have a piece of metal in the superconducting state and turn on a magnetic field which isn’t too strong (we won’t go into the details of how strong), the magnetic field can’t penetrate the metal. If, as you build up the magnetic field, any of it were to build up inside the metal, there would be a rate of change of flux which would produce an electric field, and an electric field would immediately generate a current which, by Lenz’s law, would oppose the flux. Since all the electrons will move together, an infinitesimal electric field will generate enough current to oppose completely any applied magnetic field. So if you turn the field on after you’ve cooled a metal to the superconducting state, it will be excluded.

Even more interesting is a related phenomenon discovered experimentally by Meissner. If you have a piece of the metal at a high temperature (so that it is a normal conductor) and establish a magnetic field through it, and then you lower the temperature below the critical temperature (where the metal becomes a superconductor), the field is expelled. In other words, it starts up its own current—and in just the right amount to push the field out.”

The math here is interesting. Feynman first notes that, in any lump of superconducting metal, the divergence of the current must be zero, so we write: ∇·J = 0. At any point? Yes. The current that goes in must go out. No point is a sink or a source. Now the divergence operator (∇·) is a linear operator. Hence, when applying it to the J = (ħ/m)·[∇θ − (q/ħ)·A]·ρ equation, we’ll need to figure out what ∇·∇θ = ∇²θ and ∇·A are. Now, as explained in my post on gauges, we can choose to make ∇·A equal to zero so… Well… We’ll make that choice and, hence, the term with ∇·A in it vanishes. So… Well… If ∇·J equals zero, then the term with ∇²θ has to be zero as well, so ∇²θ has to be zero. That, in turn, implies ∇θ has to be some constant (vector).

Now, there is a pretty big error in Feynman’s Lecture here, as it notes: “Now the only way that ∇²θ can be zero everywhere inside the lump of metal is for θ to be a constant.” It should read: ∇²θ can only be zero everywhere if ∇θ is a constant (vector). So now we need to remind ourselves of the reality of θ, as described by Feynman (quoted above): “The absolute phase (θ) is not observable, but if the gradient of the phase (∇θ) is known everywhere, then the phase is known except for a constant. You can define the phase at one point, and then the phase everywhere is determined.” So we can define, or choose, our constant (vector) ∇θ to be 0.

Hmm… We re-set not one but two gauges here: A and ∇θ. Tricky business, but let’s go along with it. [If we want to understand Feynman’s argument, then we actually have no choice but to go along with his argument, right?] The point is: the (ħ/m)·∇θ term in the J = (ħ/m)·[∇θ − (q/ħ)·A]·ρ equation vanishes, so the equation we’re left with tells us the current (so that’s an actual as well as a probability current!) is proportional to the vector potential:

J = −(q/m)·ρ·A

Now, we’ve neglected any possible variation in the charge density ρ so far because… Well… The charge density in a superconducting fluid must be uniform, right? Why? When the metal is superconducting, an accumulation of electrons in one region would be immediately neutralized by a current, right? [Note that Feynman’s language is more careful here. He writes: the charge density is almost perfectly uniform.]

So what’s next? Well… We have a more general equation from the equations of electromagnetism:

[In case you’d want to know how we get this equation out of Maxwell’s equations, you can look it up online in one of the many standard textbooks on electromagnetism.] You recognize this as a Poisson equation… Well… Three Poisson equations: one for each component of A and J. We can now combine the two equations above by substituting J in that Poisson equation, so we get the following differential equation, which we need to solve for A:

The λ² in this equation is, of course, a shorthand for the following constant:
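Since the equation images are missing here, the chain of substitutions can be reconstructed as follows. This is a sketch that assumes the ε₀ and c² form of the magnetostatic equation, the gauge choice ∇·A = 0 made above, and steady currents; the symbols are the ones used in the text:

```latex
\begin{aligned}
\nabla^{2}\mathbf{A} &= -\frac{1}{\varepsilon_{0}c^{2}}\,\mathbf{J}
  &&\text{(one Poisson equation per component)} \\[4pt]
\mathbf{J} &= -\rho\,\frac{q}{m}\,\mathbf{A}
  &&\text{(the current, with } \nabla\theta = 0\text{)} \\[4pt]
\nabla^{2}\mathbf{A} &= \lambda^{2}\,\mathbf{A},
  \qquad \lambda^{2} = \frac{\rho\,q}{\varepsilon_{0}\,m\,c^{2}}
\end{aligned}
```

With ρ a charge density, λ² comes out in units of 1/m², so 1/λ is a length, as it should be for a penetration depth.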

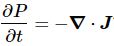

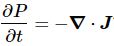

Now, it’s very easy to see that both e^(−λr) as well as e^(+λr) are solutions for that differential equation. But what do they mean? In one dimension, r becomes the one-dimensional position variable x. You can check the shapes of these solutions with a graphing tool.

Note that only one half of each graph counts: the vector potential must decrease when we go from the surface into the material, and there is a cut-off at the surface of the material itself, of course. So all depends on the size of λ, as compared to the size of our piece of superconducting metal (or whatever other substance our piece is made of). In fact, if we look at e^(−λx) as an exponential decay function, then τ = 1/λ is the so-called scaling constant (it’s the inverse of the decay constant, which is λ itself). [You can work this out yourself. Note that for x = τ = 1/λ, the value of our function e^(−λx) will be equal to e^(−λ·(1/λ)) = e^(−1) ≈ 0.368, so it means the value of our function is reduced to about 36.8% of its initial value.] For all practical purposes, we may say, as Feynman notes, that the field will, effectively, only penetrate to a thin layer at the surface: a layer of about 1/λ in thickness. He illustrates this as follows:

Moreover, he calculates the 1/λ distance for lead. Let me copy him here:

Well… That says it all, right? We’re talking two millionths of a centimeter here… 🙂
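You can redo the order-of-magnitude estimate yourself. The sketch below is not Feynman's calculation: it assumes λ² = ρ·q/(ε₀·m·c²) with ρ the charge density of the carriers, one conduction electron per lead atom, and roughly 3.3×10²² atoms per cm³ (which follows from lead's density of about 11.3 g/cm³ and atomic mass of about 207). The constants are rounded values and the function names are made up for this example.

```python
import math

EPSILON_0 = 8.854e-12         # vacuum permittivity, F/m (rounded)
ELECTRON_CHARGE = 1.602e-19   # C (rounded)
ELECTRON_MASS = 9.109e-31     # kg (rounded)
LIGHT_SPEED = 2.998e8         # m/s (rounded)

# Assumption: one conduction electron per atom, ~3.3e22 atoms per cm^3.
LEAD_ELECTRON_DENSITY = 3.3e28  # carriers per m^3


def penetration_depth(number_density, q=ELECTRON_CHARGE, m=ELECTRON_MASS):
    """Return 1/lambda in metres for lambda^2 = rho*q / (eps0*m*c^2)."""
    charge_density = number_density * q
    lambda_squared = charge_density * q / (EPSILON_0 * m * LIGHT_SPEED ** 2)
    return 1.0 / math.sqrt(lambda_squared)


def remaining_fraction(depth, scale):
    """Fraction of the surface value left at `depth` for decay length `scale`."""
    return math.exp(-depth / scale)


scale = penetration_depth(LEAD_ELECTRON_DENSITY)
print(f"1/lambda ~ {scale * 100:.1e} cm")
print(f"fraction left at x = 1/lambda: {remaining_fraction(scale, scale):.3f}")
```

With these inputs, the result lands at a few millionths of a centimeter, the same order of magnitude as the figure quoted above. Note that counting pairs instead of single electrons (charge 2q, mass 2m, half the number density) leaves both the charge density and the q/m ratio unchanged, so this particular formula gives the same 1/λ either way.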

So what’s left? A lot, like flux quantization, or the equations of motion for the superconducting electron fluid. But we’ll leave that for the next posts. 🙂

Some content on this page was disabled on June 16, 2020 as a result of a DMCA takedown notice from The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/


Now, the line integral of a gradient from one point to another (say from point 1 to point 2) is the difference of the values of the function at the two points, so we can write:
