Post scriptum note added on 11 July 2016: This is one of the more speculative posts which led to my e-publication analyzing the wavefunction as an energy propagation. With the benefit of hindsight, I would recommend that you immediately read the more recent exposé on the matter presented here, which you can find by clicking on the provided link. In fact, I actually made some (small) mistakes when writing the post below.

Original post:

I hope you find the title intriguing. A zero-mass particle? So I am talking about a photon, right? Well… Yes and no. Just read this post and, more importantly, think about this story for yourself. 🙂

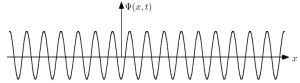

One of my acquaintances is a retired nuclear physicist. We mail every now and then, but he has little or no time for my questions: he usually just tells me to keep studying. I once asked him why there is never any mention of the wavefunction of a photon in physics textbooks. He bluntly told me photons don’t have a wavefunction, not in the sense I was talking about at least. Photons are associated with a traveling electric and a magnetic field vector. That’s it. Full stop. Photons do not have a ψ or φ function. [I am using ψ and φ to refer to the position or momentum wavefunction. You know both are related: if we have one, we have the other.] But then I never give up, of course. I just can’t let go of the idea of a photon wavefunction. The structural similarity in the propagation mechanism of the electric and magnetic field vectors E and B just looks too much like the quantum-mechanical wavefunction. So I kept trying and, while I don’t think I fully solved the riddle, I feel I understand it much better now. Let me show you the why and how.

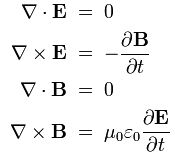

I. An electromagnetic wave in free space is fully described by the following two equations:

- ∂B/∂t = –∇×E

- ∂E/∂t = c²∇×B

We’re abstracting away from stationary charges here, and we also do not consider any currents, so no moving charges either. So I am omitting the ∇·E = ρ/ε₀ equation (i.e. the first of the set of four equations), and I am also omitting the j/ε₀ term in the second equation. So, for all practical purposes (i.e. for the purpose of this discussion), you should think of a space with no charges: ρ = 0 and j = 0. It’s just a traveling electromagnetic wave. To make things even simpler, we’ll assume our time and distance units are chosen such that c = 1, so the equations above reduce to:

- ∂B/∂t = –∇×E

- ∂E/∂t = ∇×B

Perfectly symmetrical! But note the minus sign in the first equation. As for the interpretation, I should refer you to previous posts but, briefly, the ∇× operator is the curl operator. It’s a vector operator: it describes the (infinitesimal) rotation of a (three-dimensional) vector field. We discussed heat flow a couple of times, or the flow of a moving liquid. So… Well… If the vector field represents the flow velocity of a moving fluid, then the curl is the circulation density of the fluid. The direction of the curl vector is the axis of rotation as determined by the ubiquitous right-hand rule, and the magnitude of the curl is the magnitude of rotation. OK. Next step.
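Before moving on, here is a minimal numerical sketch of those two equations with c = 1. The plane wave used here (E along y, B along z, both equal to cos(x − t), traveling in the x-direction) is my own illustrative choice, not something taken from the equations above, and the derivatives are plain central finite differences.

```python
import math

def E(x, y, z, t):
    """Electric field of an illustrative plane wave moving in the +x direction (c = 1)."""
    return (0.0, math.cos(x - t), 0.0)

def B(x, y, z, t):
    """Matching magnetic field, orthogonal to E and to the direction of travel."""
    return (0.0, 0.0, math.cos(x - t))

H = 1e-5  # step size for the central finite differences

def partial(field, axis, point):
    """Derivative of a vector field along one coordinate (0 = x, 1 = y, 2 = z, 3 = t)."""
    up = list(point)
    down = list(point)
    up[axis] += H
    down[axis] -= H
    return tuple((a - b) / (2 * H) for a, b in zip(field(*up), field(*down)))

def curl(field, point):
    """Numerical curl of a vector field at the point (x, y, z, t)."""
    dx, dy, dz = (partial(field, axis, point) for axis in range(3))
    return (dy[2] - dz[1], dz[0] - dx[2], dx[1] - dy[0])

def max_residuals(point):
    """Largest component of dB/dt + curl E and of dE/dt - curl B at one point."""
    first = max(abs(a + b) for a, b in zip(partial(B, 3, point), curl(E, point)))
    second = max(abs(a - b) for a, b in zip(partial(E, 3, point), curl(B, point)))
    return first, second

residuals = max_residuals((0.3, -1.2, 0.7, 0.5))
print(residuals)  # both numbers should be tiny (finite-difference noise only)
```

Both residuals vanish up to rounding, so this particular wave does satisfy ∂B/∂t = –∇×E and ∂E/∂t = ∇×B. Flip the sign of B and the check fails, which is one way to see that the minus sign matters.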

II. For the wavefunction, we have Schrödinger’s equation, ∂ψ/∂t = i·(ħ/2m)·∇²ψ, which relates two complex-valued functions (∂ψ/∂t and ∇²ψ). Complex-valued functions consist of a real and an imaginary part, and you should be able to verify this equation is equivalent to the following set of two equations:

- Re(∂ψ/∂t) = −(ħ/2m)·Im(∇²ψ)

- Im(∂ψ/∂t) = (ħ/2m)·Re(∇²ψ)
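If you would rather check that pair of equations numerically than by hand, the following is a rough sketch. It assumes ħ = 1, an arbitrary mass and wavenumber, and a plane wave with ω = ħk²/2m (the frequency the equation itself demands); all of these values are illustrative assumptions.

```python
import cmath

HBAR = 1.0                      # natural units, assumed for this sketch
M = 0.7                         # arbitrary illustrative mass
K = 1.3                         # arbitrary illustrative wavenumber
OMEGA = HBAR * K**2 / (2 * M)   # frequency required by the equation

def psi(x, t):
    """Plane wave e^(i(kx - wt)) in one spatial dimension."""
    return cmath.exp(1j * (K * x - OMEGA * t))

def d_dt(x, t, h=1e-5):
    """Central-difference estimate of the time derivative of psi."""
    return (psi(x, t + h) - psi(x, t - h)) / (2 * h)

def laplacian(x, t, h=1e-4):
    """Central-difference estimate of the second spatial derivative of psi."""
    return (psi(x + h, t) - 2 * psi(x, t) + psi(x - h, t)) / h**2

def split_residuals(x, t):
    """How far the real and imaginary equations are from holding at (x, t)."""
    dpsi = d_dt(x, t)
    lap = laplacian(x, t)
    coeff = HBAR / (2 * M)
    real_residual = dpsi.real + coeff * lap.imag   # Re(dpsi/dt) = -(hbar/2m) Im(lap)
    imag_residual = dpsi.imag - coeff * lap.real   # Im(dpsi/dt) = +(hbar/2m) Re(lap)
    return abs(real_residual), abs(imag_residual)

print(split_residuals(0.4, 1.1))  # both close to zero
```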

[Two complex numbers a + ib and c + id are equal if, and only if, their real and imaginary parts are the same. However, note the i factor on the right-hand side of the equation, so we get: a + ib = i·(c + id) = −d + ic.] The Schrödinger equation above also assumes free space (i.e. zero potential energy: V = 0) but, in addition – see my previous post – it also assumes a zero rest mass of the elementary particle (E₀ = 0). So just assume E₀ = V = 0 in de Broglie’s elementary ψ(θ) = ψ(x, t) = a·e^(−iθ) = a·e^(−i[(E₀ + p²/(2m) + V)·t − p∙x]/ħ) wavefunction. So, in essence, we’re looking at the wavefunction of a massless particle here. Sounds like nonsense, doesn’t it? But… Well… That should be the wavefunction of a photon in free space then, right? 🙂

Maybe. Maybe not. Let’s go as far as we can.

The energy of a zero-mass particle

What m would we use for a photon? Its rest mass is zero, but it’s got energy and, hence, an equivalent mass. That mass is given by the m = E/c² mass-energy equivalence. We also know a photon has momentum, and it’s equal to its energy divided by c: p = m·c = E/c. [I know the notation is somewhat confusing: E is, obviously, not the magnitude of E here: it’s energy!] Both yield the same result. We get: m·c = E/c ⇔ m = E/c² ⇔ E = m·c².
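To put some numbers on this, here is a small sketch for a photon of green light. The 500 nm wavelength is just an example I picked; h and c are the exact SI values.

```python
H_PLANCK = 6.62607015e-34   # Planck constant in J·s (exact SI value)
C = 299_792_458.0           # speed of light in m/s (exact SI value)

def photon_properties(wavelength_m):
    """Energy, equivalent mass and momentum of a photon of the given wavelength."""
    frequency = C / wavelength_m
    energy = H_PLANCK * frequency     # Planck-Einstein relation E = h·f
    equivalent_mass = energy / C**2   # m = E/c²
    momentum = energy / C             # p = E/c
    return energy, equivalent_mass, momentum

energy, mass, momentum = photon_properties(500e-9)  # 500 nm is an illustrative choice
print(f"E = {energy:.4e} J, m = {mass:.4e} kg, p = {momentum:.4e} N·s")
print(f"m·c / p = {mass * C / momentum:.12f}")  # p = m·c, so this ratio is 1
```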

OK. Next step. Well… I’ve always been intrigued by the fact that the kinetic energy of a photon, using the E = m·v²/2 = m·c²/2 formula, is only half of its total energy E = m·c². Half: 1/2. That 1/2 factor is intriguing. Where’s the rest of the energy? It’s really a contradiction: our photon has no rest mass, and there’s no potential here, but its total energy is still twice its kinetic energy. Quid?

There’s only one conclusion: just because of its sheer existence, it must have some hidden energy, and that hidden energy is also equal to E = m·c²/2, and so the kinetic and hidden energy add up to E = m·c².

Huh? Hidden energy? I must be joking, right?

Well… No. No joke. I am tempted to call it the imaginary energy, because it’s linked to the imaginary part of the wavefunction—but then it’s everything but imaginary: it’s as real as the imaginary part of the wavefunction. [I know that sounds a bit nonsensical, but… Well… Think about it: it does make sense.]

Back to that factor 1/2. You may or may not remember it popped up when we were calculating the group and the phase velocity of the wavefunction respectively, again assuming zero rest mass, and zero potential. [Note that the rest mass term is mathematically equivalent to the potential term in both the wavefunction as well as in Schrödinger’s equation: E₀·t + V·t = (E₀ + V)·t, and V·ψ + E₀·ψ = (V + E₀)·ψ, obviously!]

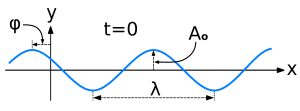

In fact, let me quickly show you that calculation again: the de Broglie relations tell us that the k and the ω in the e^(i(kx − ωt)) = cos(kx−ωt) + i∙sin(kx−ωt) wavefunction (i.e. the spatial and temporal frequency respectively) are equal to k = p/ħ, and ω = E/ħ. If we would now use the kinetic energy formula E = m·v²/2 – which we can also write as E = m·v·v/2 = p·v/2 = p·p/2m = p²/2m, with v = p/m the classical velocity of the elementary particle that Louis de Broglie was thinking of – then we can calculate the group velocity of our e^(i(kx − ωt)) = cos(kx−ωt) + i∙sin(kx−ωt) as:

v_g = ∂ω/∂k = ∂[E/ħ]/∂[p/ħ] = ∂E/∂p = ∂[p²/2m]/∂p = 2p/2m = p/m = v

[Don’t tell me I can’t treat m as a constant when calculating ∂ω/∂k: I can. Think about it.] Now the phase velocity. The phase velocity of our e^(i(kx − ωt)) is only half of that. Again, we get that 1/2 factor:

v_p = ω/k = (E/ħ)/(p/ħ) = E/p = (p²/2m)/p = p/2m = v/2

Strange, isn’t it? Why would we get a different value for the phase velocity here? It’s not like we have two different frequencies here, do we? You may also note that the phase velocity turns out to be smaller than the group velocity, which is quite exceptional as well! So what’s the matter?

Well… The answer is: we do seem to have two frequencies here while, at the same time, it’s just one wave. There is only one k and ω here but, as I mentioned a couple of times already, that e^(i(kx − ωt)) wavefunction seems to give you two functions for the price of one, one real and one imaginary: e^(i(kx − ωt)) = cos(kx−ωt) + i∙sin(kx−ωt). So are we adding waves, or are we not? It’s a deep question. In my previous post, I said we were adding separate waves, but now I am thinking: no. We’re not. That sine and cosine are part of one and the same whole. Indeed, the apparent contradiction (i.e. the different group and phase velocity) gets solved if we use the E = m∙v² formula rather than the kinetic energy E = m∙v²/2. Indeed, assuming that E = m∙v² formula also applies to our zero-mass particle (I mean zero rest mass, of course), and measuring time and distance in natural units (so c = 1), we have:

E = m∙c² = m and p = m∙c = m, so we get: E = m = p

Waw! What a weird combination, isn’t it? But… Well… It’s OK. [You tell me why it wouldn’t be OK. It’s true we’re glossing over the dimensions here, but natural units are natural units, and so c = c² = 1. So… Well… No worries!] The point is: that E = m = p equality yields extremely simple but also very sensible results. For the group velocity of our e^(i(kx − ωt)) wavefunction, we get:

v_g = ∂ω/∂k = ∂[E/ħ]/∂[p/ħ] = ∂E/∂p = ∂p/∂p = 1

So that’s the velocity of our zero-mass particle (c, i.e. the speed of light) expressed in natural units once more, just like what we found before. For the phase velocity, we get:

v_p = ω/k = (E/ħ)/(p/ħ) = E/p = p/p = 1

Same result! No factor 1/2 here! Isn’t that great? My ‘hidden energy theory’ makes a lot of sense. 🙂 In fact, I had mentioned a couple of times already that the E = m∙v² relation comes out of the de Broglie relations if we just multiply the two and use the v = f·λ relation:

- f·λ = (E/h)·(h/p) = E/p

- v = f·λ ⇒ f·λ = v = E/p ⇔ E = v·p = v·(m·v) ⇒ E = m·v²

But so I had no good explanation for this. I have one now: the E = m·v² is the correct energy formula for our zero-mass particle. 🙂
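The two velocity calculations above are easy to replay numerically. The sketch below assumes ħ = 1 (so ω = E and k = p) and uses illustrative values for m and p. It takes the group velocity as a finite-difference estimate of ∂E/∂p and the phase velocity as E/p, once for E = p²/2m and once for E = p.

```python
def velocities(energy, p, dp=1e-6):
    """Return (group, phase) velocity for a dispersion relation E(p), with hbar = 1."""
    group = (energy(p + dp) - energy(p - dp)) / (2 * dp)   # d(omega)/dk = dE/dp
    phase = energy(p) / p                                   # omega/k = E/p
    return group, phase

M = 2.0   # illustrative mass
P = 3.0   # illustrative momentum

kinetic = velocities(lambda p: p**2 / (2 * M), P)   # E = p²/2m
linear = velocities(lambda p: p, P)                  # E = p, natural units

print("E = p²/2m: group %.3f, phase %.3f" % kinetic)  # group = p/m, phase = p/2m
print("E = p:     group %.3f, phase %.3f" % linear)   # both equal to 1
```

With E = p²/2m the phase velocity comes out at half the group velocity. With E = p both are 1, which is the whole point of the argument.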

The quantization of energy and the zero-mass particle

Let’s now think about the quantization of energy. What’s the smallest value for E that we could possibly think of? That’s h, isn’t it? That’s the energy of one cycle of an oscillation according to the Planck-Einstein relation (E = h·f). Well… Perhaps it’s ħ? Because… Well… We saw energy levels were separated by ħ, rather than h, when studying the blackbody radiation problem. So is it ħ = h/2π? Is the natural unit a radian (i.e. a unit distance), rather than a cycle?

Neither is natural, I’d say. We also have the Uncertainty Principle, which suggests the smallest possible energy value is ħ/2, because ΔxΔp = ΔtΔE = ħ/2.

Huh? What’s the logic here?

Well… I am not quite sure but my intuition tells me the quantum of energy must be related to the quantum of time, and the quantum of distance.

Huh? The quantum of time? The quantum of distance? What’s that? The Planck scale?

No. Or… Well… Let me correct that: not necessarily. I am just thinking in terms of logical concepts here. Logically, as we think of the smallest of smallest, then our time and distance variables must become count variables, so they can only take on some integer value n = 0, 1, 2 etcetera. So then we’re literally counting in time and/or distance units. So Δx and Δt are then equal to 1. Hence, Δp and ΔE are then equal to Δp = ΔE = ħ/2. Just think of the radian (i.e. the unit in which we measure θ) as measuring both time as well as distance. Makes sense, no?

No? Well… Sorry. I need to move on. So the smallest possible value for m = E = p would be ħ/2. Let’s substitute that in Schrödinger’s equation, or in that set of equations Re(∂ψ/∂t) = −(ħ/2m)·Im(∇²ψ) and Im(∂ψ/∂t) = (ħ/2m)·Re(∇²ψ). We get:

- Re(∂ψ/∂t) = −(ħ/2m)·Im(∇²ψ) = −(2ħ/2ħ)·Im(∇²ψ) = −Im(∇²ψ)

- Im(∂ψ/∂t) = (ħ/2m)·Re(∇²ψ) = (2ħ/2ħ)·Re(∇²ψ) = Re(∇²ψ)

Bingo! The Re(∂ψ/∂t) = −Im(∇²ψ) and Im(∂ψ/∂t) = Re(∇²ψ) equations were what I was looking for. Indeed, I wanted to find something that was structurally similar to the ∂B/∂t = –∇×E and ∂E/∂t = ∇×B equations, and something that was exactly similar: no coefficients in front or anything. 🙂

What about our wavefunction? Using the de Broglie relations once more (k = p/ħ, and ω = E/ħ), our e^(i(kx − ωt)) = cos(kx−ωt) + i∙sin(kx−ωt) now becomes:

e^(i(kx − ωt)) = e^(i(ħ·x/2 − ħ·t/2)/ħ) = e^(i(x/2 − t/2)) = cos[(x−t)/2] + i∙sin[(x−t)/2]

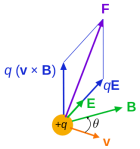

Hmm… Interesting! So we’ve got that 1/2 factor now in the argument of our wavefunction! I really feel I am close to squaring the circle here. 🙂 Indeed, it must be possible to relate the ∂B/∂t = –∇×E and ∂E/∂t = c²∇×B to the Re(∂ψ/∂t) = −Im(∇²ψ) and Im(∂ψ/∂t) = Re(∇²ψ) equations. I am sure it’s a complicated exercise. It’s likely to involve the formula for the Lorentz force, which says that the force on a unit charge is equal to E + v×B, with v the velocity of the charge. Why? Note the vector cross-product. Also note that ∂B/∂t and ∂E/∂t are vector-valued functions, not scalar-valued functions. Hence, in that sense, ∂B/∂t and ∂E/∂t are not like the Re(∂ψ/∂t) and/or Im(∂ψ/∂t) function. But… Well… For the rest, think of it: E and B are orthogonal vectors, and that’s how we usually interpret the real and imaginary part of a complex number as well: the real and imaginary axis are orthogonal too!

So I am almost there. Who can help me prove what I want to prove here? The two propagation mechanisms are “same-same but different”, as they say in Asia. The difference between the two propagation mechanisms must also be related to that fundamental dichotomy in Nature: the distinction between bosons and fermions. Indeed, when combining two directional quantities (i.e. two vectors), we like to think there are four different ways of doing that, as shown below. However, when we’re only interested in the magnitude of the result (and not in its direction), then the first and third result below are really the same, as are the second and fourth combination. Now, we’ve got pretty much the same in quantum math: we can, in theory, combine complex-valued amplitudes in four different ways but, in practice, we only have two (rather than four) types of behavior: fermions versus bosons.

Is our zero-mass particle just the electric field vector?

Let’s analyze that e^(i(x/2 − t/2)) = cos[(x−t)/2] + i∙sin[(x−t)/2] wavefunction some more. It’s easy to represent it graphically. The following animation does the trick:

I am sure you’ve seen this animation before: it represents a circularly polarized electromagnetic wave… Well… Let me be precise: it represents the electric field vector (E) of such a wave only. The B vector is not shown here, but you know where and what it is: orthogonal to the E vector, as shown below for a linearly polarized wave.

Let’s think some more. What is that e^(i(x/2 − t/2)) function? It’s subject to conceiving time and distance as countable variables, right? I am tempted to say: as discrete variables, but I won’t go that far (not now) because the countability may be related to a particular interpretation of quantum physics. So I need to think about that. In any case… The point is that x can only take on values like 0, 1, 2, etcetera. And the same goes for t. To make things easy, we’ll not consider negative values for x right now (and, obviously, not for t either). So we’ve got an infinite set of points like:

- e^(i(0/2 − 0/2)) = cos(0) + i∙sin(0)

- e^(i(1/2 − 0/2)) = cos(1/2) + i∙sin(1/2)

- e^(i(0/2 − 1/2)) = cos(−1/2) + i∙sin(−1/2)

- e^(i(1/2 − 1/2)) = cos(0) + i∙sin(0)

- …

Now, I quickly opened Excel and calculated those cosine and sine values for x and t going from 0 to 14 below. It’s really easy. Just five minutes of work. You should do it yourself as an exercise. The result is shown below. Both graphs connect 14×14 = 196 data points, but you can see what’s going on: this does, effectively, represent the elementary wavefunction of a particle traveling in spacetime. In fact, you can see its speed is equal to 1, i.e. it effectively travels at the speed of light, as it should: the wave velocity is v = f·λ = (ω/2π)·(2π/k) = ω/k = (1/2)/(1/2) = 1. The amplitude of our wave doesn’t change along the x = t diagonal. As the Last Samurai puts it, just before he moves to the Other World: “Perfect! They are all perfect!” 🙂
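If you don’t have Excel at hand, the same exercise takes a few lines of Python. This is only a sketch of the calculation described above: I let x and t each run over the integers 0 to 14 (my reading of the range), and the names are mine.

```python
import cmath

def psi(x, t):
    """Elementary wavefunction e^(i(x/2 - t/2)) at integer spacetime points."""
    return cmath.exp(1j * (x - t) / 2)

N = 15  # x and t each run over 0, 1, ..., 14
grid = [[psi(x, t) for x in range(N)] for t in range(N)]  # grid[t][x]

# Print the cosine (real) part for the first few rows and columns.
for t in range(4):
    print(" ".join(f"{grid[t][x].real:+.3f}" for x in range(6)))

# Along the x = t diagonal the value never changes: the wave moves at speed 1.
diagonal = [grid[n][n] for n in range(N)]
print(all(abs(value - 1) < 1e-12 for value in diagonal))
```

Swap `.real` for `.imag` in the print loop to get the sine table.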

In fact, in case you wonder what the quantum vacuum could possibly look like, you should probably think of these discrete spacetime points, and some complex-valued wave that travels as it does in the illustration above.

Of course, that elementary wavefunction above does not localize our particle. For that, we’d have to add a potentially infinite number of such elementary wavefunctions, so we’d write the wavefunction as a sum ∑ a_j·e^(−iθ_j) of such functions. [I use the j symbol here for the subscript, rather than the more conventional i symbol for a subscript, so as to avoid confusion with the symbol used for the imaginary unit.] The a_j coefficients are the contribution that each of these elementary wavefunctions would make to the composite wave. What could they possibly be? Not sure. Let’s first look at the argument of our elementary component wavefunctions. We’d inject uncertainty in it. So we’d say that m = E = p is equal to

m = E = p = ħ/2 + j·ħ/2 with j = 0, 1, 2,…

That amounts to writing: m = E = p = ħ/2, ħ, 3ħ/2, 2ħ, 5ħ/2, etcetera. Waw! That’s nice, isn’t it? My intuition tells me that our a_j coefficients will be smaller for higher j, so a_j would be some decreasing function of j. What shape? Not sure. Let’s first sum up our thoughts so far:

- The elementary wavefunction of a zero-mass particle (again, I mean zero rest mass) in free space is associated with an energy that’s equal to ħ/2.

- The zero-mass particle travels at the speed of light, obviously (because it has zero rest mass), and its kinetic energy is equal to E = m·v²/2 = m·c²/2.

- However, its total energy is equal to E = m·v² = m·c²: it has some hidden energy. Why? Just because it exists.

- We may associate its kinetic energy with the real part of its wavefunction, and the hidden energy with its imaginary part. However, you should remember that the imaginary part of the wavefunction is as essential as its real part, so the hidden energy is equally real. 🙂

So… Well… Isn’t this just nice?

I think it is. Another obvious advantage of this way of looking at the elementary wavefunction is that – at first glance at least – it provides an intuitive understanding of why we need to take the (absolute) square of the wavefunction to find the probability of our particle being at some point in space and time. The energy of a wave is proportional to the square of its amplitude. Now, it is reasonable to assume the probability of finding our (point) particle would be proportional to the energy and, hence, to the square of the amplitude of the wavefunction, which is given by those a_j coefficients.

Huh?

OK. You’re right. I am a bit too fast here. It’s a bit more complicated than that, of course. The argument of probability being proportional to energy being proportional to the square of the amplitude of the wavefunction only works for a single wave a·e^(−iθ). The argument does not hold water for a sum of functions ∑ a_j·e^(−iθ_j). Let’s write it all out. Taking our m = E = p = ħ/2 + j·ħ/2 = ħ/2, ħ, 3ħ/2, 2ħ, 5ħ/2,… formula into account, this sum would look like:

a₁e^(i(x − t)(1/2)) + a₂e^(i(x − t)(2/2)) + a₃e^(i(x − t)(3/2)) + a₄e^(i(x − t)(4/2)) + …

But… Hey! We can write this as some power series, can’t we? We just need to add a₀e^(i(x − t)(0/2)) = a₀, and then… Well… It’s not so easy, actually. Who can help me? I am trying to find something like this:

Or… Well… Perhaps something like this:

Whatever power series it is, we should be able to relate it to this one—I’d hope:

Hmm… […] It looks like I’ll need to re-visit this, but I am sure it’s going to work out. Unfortunately, I’ve got no more time today, I’ll let you have some fun now with all of this. 🙂 By the way, note that the result of the first power series is only valid for |x| < 1. 🙂
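One way to play with the power-series idea is to pick the coefficients yourself. The sketch below assumes, purely for illustration, that a_j = r^j with 0 < r < 1 (a decreasing sequence, as my intuition suggests, but nothing in the argument above fixes this choice). Writing z = e^(i(x − t)/2), the sum ∑ a_j·z^j is then a geometric series with the closed form 1/(1 − r·z).

```python
import cmath

def partial_sum(x, t, r, terms):
    """Sum of a_j · e^(i(x - t)(j/2)) for j = 0 .. terms-1, with the assumed a_j = r**j."""
    z = cmath.exp(1j * (x - t) / 2)
    return sum((r * z) ** j for j in range(terms))

def closed_form(x, t, r):
    """Geometric-series limit 1/(1 - r·z), valid because |r·z| = r < 1."""
    z = cmath.exp(1j * (x - t) / 2)
    return 1 / (1 - r * z)

x, t, r = 3, 1, 0.5   # illustrative values
approx = partial_sum(x, t, r, 60)
exact = closed_form(x, t, r)
print(approx, exact, abs(approx - exact))
```

The convergence condition plays the same role here as the |x| < 1 restriction: the series only sums to something finite when the ratio between successive terms has a magnitude smaller than one.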

Note 1: What we should also do now is to re-insert mass in the equations. That should not be too difficult. It’s consistent with classical theory: the total energy of some moving mass is E = m·c², out of which m·v²/2 is the classical kinetic energy. All the rest – i.e. m·c² − m·v²/2 – is potential energy, and so that includes the energy that’s ‘hidden’ in the imaginary part of the wavefunction. 🙂

Note 2: I really didn’t pay much attention to dimensions when doing all of these manipulations above but… Well… I don’t think I did anything wrong. Just to give you some more feel for that wavefunction e^(i(kx − ωt)), please do a dimensional analysis of its argument. I mean, k = p/ħ, and ω = E/ħ, so check the dimensions:

- Momentum is expressed in newton·second, and we divide it by the quantum of action, which is expressed in newton·meter·second. So we get something per meter. But then we multiply it with x, so we get a dimensionless number.

- The same is true for the ωt term. Energy is expressed in joule, i.e. newton·meter, and so we divide it by ħ once more, so we get something per second. But then we multiply it with t, so… Well… We do get a dimensionless number: a number that’s expressed in radians, to be precise. And so the radian does, indeed, integrate both the time as well as the distance dimension. 🙂
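The two bullet points above can be checked mechanically. In this sketch a dimension is simply a tuple of exponents for (kilogram, meter, second); this representation and all the names are my own bookkeeping device, not a standard library.

```python
# A dimension is a tuple of exponents for (kilogram, meter, second).
def mul(a, b):
    """Dimension of a product: exponents add."""
    return tuple(x + y for x, y in zip(a, b))

def div(a, b):
    """Dimension of a quotient: exponents subtract."""
    return tuple(x - y for x, y in zip(a, b))

METER = (0, 1, 0)
SECOND = (0, 0, 1)
NEWTON = (1, 1, -2)               # kg·m/s²
JOULE = mul(NEWTON, METER)        # newton·meter
MOMENTUM = mul(NEWTON, SECOND)    # newton·second
ACTION = mul(JOULE, SECOND)       # newton·meter·second, the dimension of ħ

k_dim = div(MOMENTUM, ACTION)     # k = p/ħ: per meter
omega_dim = div(JOULE, ACTION)    # ω = E/ħ: per second
kx_dim = mul(k_dim, METER)        # k·x
wt_dim = mul(omega_dim, SECOND)   # ω·t

print(k_dim, omega_dim)   # (0, -1, 0) and (0, 0, -1)
print(kx_dim, wt_dim)     # both (0, 0, 0): dimensionless
```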