Pre-scriptum (dated 26 June 2020): This is an interesting post. I think my thoughts on the relevance of scale – especially the role of the fine-structure constant in this regard – have evolved considerably, so you should probably read my papers instead of these old blog posts.

Original post:

What is it that we are trying to understand? As a kid, when I first heard about atoms consisting of a nucleus with electrons orbiting around it, I had this vision of worlds inside worlds, like a set of babushka dolls, one inside the other. Now I know that this model – which is basically nothing but the 1911 Rutherford model – is plain wrong, even if it continues to be used in the logo of the International Atomic Energy Agency, or the US Atomic Energy Commission.

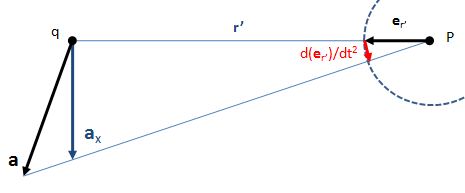

Electrons are not planet-like things orbiting around some center. If one wants to understand something about the reality of electrons, one needs to familiarize oneself with complex-valued wave functions whose value represents a weird quantity referred to as a probability amplitude. Contrary to what you may think (unless you read my blog, or just happen to know a thing or two about quantum mechanics), the relation between that amplitude and the concept of probability tout court is not very straightforward.

Familiarizing oneself with the math involved in quantum mechanics is not an easy task, as evidenced by all those convoluted posts I’ve been writing. In fact, I’ve been struggling with these things for almost a year now and I’ve started to realize that Roger Penrose’s The Road to Reality (or should I say Feynman’s Lectures?) may lead nowhere – in terms of that rather spiritual journey of trying to understand what it’s all about. If anything, they made me realize that the worlds inside worlds are not the same. They are different – very different.

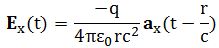

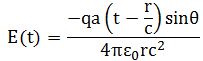

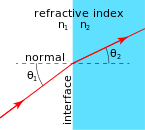

When everything is said and done, I think that’s what’s nagging us as common mortals. What we are all looking for is some kind of ‘Easy Principle’ that explains All and Everything, and we just can’t find it. The point is: scale matters. At the macro-scale, we usually analyze things using some kind of ‘billiard-ball model’. At a smaller scale, let’s say the so-called wave zone, our ‘law’ of radiation holds, and we can analyze things in terms of electromagnetic or gravitational fields. But then, when we reduce scale further, by another order of magnitude really – when trying to get very close to the source of radiation, or if we try to analyze what is really oscillating – we get in deep trouble: our easy laws no longer hold, and the equally easy math – easy is relative of course 🙂 – that we use to analyze fields or interference phenomena becomes totally useless.

Religiously inclined people would say that God does not want us to understand all or, taking a somewhat less selfish picture of God, they would say that Reality (with a capital R to underline its transcendental aspects) just can’t be understood. Indeed, it is rather surprising – in my humble view at least – that things do seem to get more difficult as we drill down: in physics, it’s not the bigger things – like understanding thermonuclear fusion in the Sun, for example – but the smallest things which are difficult to understand. Of course, that’s partly because physics leaves some of the bigger things which are actually very difficult to understand – like how a living cell works, for example, or how our eye or our brain works – to other sciences to study (biology and biochemistry for cells, or for vision or brain functionality). In that respect, physics may actually be described as the science of the smallest things. The surprising thing, then, is that the smallest things are not necessarily the simplest things – on the contrary.

Still, that being said, I can’t help feeling some sympathy for the simpler souls who think that, if God exists, he seems to throw up barriers as mankind tries to advance its knowledge. Isn’t it strange, indeed, that the math describing the ‘reality’ of electrons and photons (i.e. quantum mechanics and quantum electrodynamics), as complicated as it is, becomes even more complicated – and, important to note, also much less accurate – when it’s used to try to describe the behavior of quarks and gluons? Additional ‘variables’ are needed (physicists call these ‘variables’ quantum numbers; however, when everything is said and done, that’s what quantum numbers actually are: variables in a theory), and the agreement between experimental results and predictions in QCD is not as obvious as it is in QED.

Frankly, I don’t know much about quantum chromodynamics – nothing at all, to be honest – but when I read statements such as “analytic or perturbative solutions in low-energy QCD are hard or impossible due to the highly nonlinear nature of the strong force” (I just took this one line from the Wikipedia article on QCD), I instinctively feel that QCD is, in fact, a different world as well, and by that I mean different from QED, in which analytic or perturbative solutions are the norm. Hence, I already know that, once I have mastered Feynman’s Volume III, it won’t help me all that much to get to the next level of understanding: understanding quantum chromodynamics will be yet another long grind. In short, understanding quantum mechanics is only a first step.

Of course, that should not surprise us, because we’re talking very different orders of magnitude here: femtometers (10⁻¹⁵ m) in the case of electrons, as opposed to attometers (10⁻¹⁸ m) or even zeptometers (10⁻²¹ m) when we’re talking quarks. Hence, if past experience (I mean the evolution of scientific thought) is any guide, we should actually expect an entirely different world. Babushka thinking is not the way forward.

Babushka thinking

What’s babushka thinking? You know what babushkas are, don’t you? These dolls inside dolls. [The term ‘babushka’ is actually Russian for an old woman or grandmother, which is what these dolls usually depict.] Babushka thinking is the fallacy of thinking that worlds inside worlds are the same. It’s what I did as a kid. It’s what many of us still do. It’s thinking that, when everything is said and done, it’s just a matter of not being able to ‘see’ small things and that, if we had the appropriate equipment, we would actually find the same doll within the larger doll – the same but smaller – and then again the same doll within that smaller doll. In Asia, they have this funny expression: “Same-same but different.” Well… That’s what babushka thinking is all about: thinking that you can apply the same concepts, tools and techniques to what is, in fact, an entirely different ballgame.

Let me illustrate it. We discussed interference. We could assume that the laws of interference, as described by superimposing various waves, always hold, at every scale, and that it’s just the crudeness of our detection apparatus that prevents us from seeing what’s going on. Take two light sources, for example, and let’s say they are a billion wavelengths apart, so that’s anything between 400 and 700 meters for visible light (because the wavelength of visible light is 400 to 700 billionths of a meter). Then we won’t see any interference, because we can’t register it. In fact, none of the standard equipment can. The interference term oscillates wildly up and down, from positive to negative and back again, if we move the detector just a tiny bit left or right: not more than the thickness of a hair (i.e. 0.07 mm or so). Hence, the range of angles θ (remember that angle θ was the key variable when calculating solutions for the resultant wave in previous posts) that is covered by our eye, or by any standard sensor really, is so wide that the positive and negative interference averages out: all that we ‘see’ is the sum of the intensities of the two lights. The positive and negative contributions of the interference term cancel each other out. However, we are still essentially correct in assuming there actually is interference: we just cannot see it, but it’s there.
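A minimal sketch of that averaging argument, assuming two coherent, in-phase point sources of equal intensity observed in the far field. The function names and the sample windows are made up for illustration. The intensity is I₁ + I₂ + 2√(I₁I₂) cos δ, with phase difference δ = 2π (d/λ) sin θ. Averaging over an angular window that spans many fringes leaves just I₁ + I₂, while a window much narrower than one fringe still sees the interference.

```python
import math

def two_source_intensity(theta, d_over_lambda, i1=1.0, i2=1.0):
    """Far-field intensity of two coherent, in-phase point sources a distance d apart.

    The last term is the interference term; delta is the phase difference
    between the two waves arriving at angle theta.
    """
    delta = 2 * math.pi * d_over_lambda * math.sin(theta)
    return i1 + i2 + 2 * math.sqrt(i1 * i2) * math.cos(delta)

def detector_reading(theta0, width, d_over_lambda, samples=100_000):
    """Average intensity over the window [theta0 - width/2, theta0 + width/2],
    mimicking a sensor too coarse to resolve individual fringes (midpoint sampling)."""
    total = 0.0
    for n in range(samples):
        theta = theta0 - width / 2 + width * (n + 0.5) / samples
        total += two_source_intensity(theta, d_over_lambda)
    return total / samples

# Sources a billion wavelengths apart: one fringe spans only about 1e-9 rad.
wide = detector_reading(0.0, 1e-6, 1e9)                   # window covers ~1000 fringes
narrow = detector_reading(0.0, 1e-12, 1e9, samples=1000)  # window inside one bright fringe
print(wide, narrow)  # wide ≈ 2.0 (= I1 + I2), narrow ≈ 4.0 (constructive interference)
```

The interference term is still present in every sample. It simply sums to nothing once the window covers many fringes.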

Reinforcing the point, I should also note that, apart from this issue of ‘distance scale’, there is also the scale of time. Our eye has a tenth-of-a-second averaging time. That’s a huge amount of time when talking fundamental physics: remember that an atomic oscillator, despite its incredibly high Q, emits radiation for only about 10⁻⁸ seconds, i.e. one hundred-millionth of a second. Then another atom takes over, and another, and that’s why we get unpolarized light. The frequencies are all the same (because the electron oscillators radiate at their resonant frequencies), but there is no fixed phase difference between all of these pulses. The interference between all of these pulses should result in ‘beats’, as they interfere positively or negatively, but it all cancels out for us, because it’s too fast.

Indeed, the ‘sensors’ in the retina of the human eye are, apparently, sensitive enough to register individual photons. (There are actually four kinds of cells there, but the principal ones are referred to as ‘rod’ and ‘cone’ cells respectively.) However, the tenth-of-a-second averaging time means that the cells, which are interconnected and really ‘pre-process’ the light, will just amalgamate all those individual pulses into one signal of a certain color (frequency) and a certain intensity (energy). As one scientist puts it: “The neural filters only allow a signal to pass to the brain when at least about five to nine photons arrive within less than 100 ms.” Hence, that signal will not keep track of the spacing between those photons.
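The time scales and the photon threshold above can be put into a few lines. This is only a sketch built on stated assumptions. The 500 nm wavelength is an example value. The threshold of five photons is the low end of the quoted five-to-nine range. `neural_filter` is a hypothetical toy integrator, not a model of real retinal physiology. Whatever the spacing of the photons, the output only records when the threshold was crossed.

```python
from collections import deque

# Round numbers from the text; the wavelength is an assumed example value.
EYE_AVERAGING_TIME = 0.1       # s
ATOMIC_PULSE_DURATION = 1e-8   # s, typical emission time of one atomic oscillator
WAVELENGTH = 500e-9            # m, green light (assumption)
SPEED_OF_LIGHT = 299_792_458   # m/s

optical_period = WAVELENGTH / SPEED_OF_LIGHT                      # ~1.7e-15 s
pulses_per_glimpse = EYE_AVERAGING_TIME / ATOMIC_PULSE_DURATION   # ~1e7 pulses
cycles_per_pulse = ATOMIC_PULSE_DURATION / optical_period         # ~6e6 oscillations

def neural_filter(arrival_times, window=0.1, threshold=5):
    """Toy threshold integrator (hypothetical): emit a signal whenever at least
    `threshold` photons have arrived within the last `window` seconds, then
    start counting afresh. The spacing of the triggering photons is discarded."""
    recent = deque()
    signals = []
    for t in sorted(arrival_times):
        recent.append(t)
        while t - recent[0] >= window:   # forget photons older than the window
            recent.popleft()
        if len(recent) >= threshold:
            signals.append(t)
            recent.clear()
    return signals

# Two very different photon trains each produce a single, featureless signal:
print(neural_filter([0.000, 0.001, 0.002, 0.003, 0.004]))  # [0.004]
print(neural_filter([0.00, 0.02, 0.04, 0.06, 0.08]))       # [0.08]
# Five photons spread over 0.2 s never trigger the filter:
print(neural_filter([0.00, 0.05, 0.10, 0.15, 0.20]))       # []
```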

In short, information gets lost. But that, in itself, does not invalidate babushka thinking. Let me illustrate it with a not-very-mathematically-rigorous example. Suppose that we have some very regular wave train coming in: one wave train consisting of three ‘groups’ separated by ‘nodes’.

All will depend on the periods of the wave as compared to that one-tenth-of-a-second averaging time. In fact, we have two ‘periods’. One is the period of the carrier wave itself, i.e. the actual oscillation. The other is the periodicity of the groups, which is related to the concept of group velocity, and so I’ll associate a ‘group wavelength’ and a ‘group period’ with it. [In case you haven’t heard of these terms before, don’t worry: I haven’t either. :-)] Now, if one tenth of a second covers two or all three of the groups between the nodes (so one tenth of a second spans several group periods Tg), then even the envelope of the wave does not matter much in terms of ‘signal’: our brain will just get one pulse that averages it all out. We will see none of the detail of this wave train. Our eye will just get light in (remember that the intensity of the light is the square of the amplitude, so the negative amplitudes make contributions too) but we cannot distinguish any particular pulse: it’s just one signal. This is the most common situation when we are talking about electromagnetic radiation: many photons arrive but our eye just sends one signal to the brain: “Hey Boss! Light of color X and intensity Y coming from direction Z.”

In fact, it’s quite remarkable that our eye can distinguish colors at all, given that the wavelengths of the various colors (violet, blue, green, yellow, orange and red) differ by only 30 to 40 billionths of a meter! Better still: if the signal lasts long enough, we can distinguish shades whose wavelengths differ by only 10 or 15 nm, so that’s a relative difference of just a few percent. In case you wonder how it works: Feynman devotes no fewer than two chapters of his Lectures to the physiology of the eye, which is not something you’ll find in other physics handbooks! There are apparently three pigments in the cells in our eyes, each sensitive to color in a different way, and it is “the spectral absorption in those three pigments that produces the color sense.” So it’s a bit like the RGB system in a television, but more complicated, of course!

But let’s go back to our wave and analyze the second possibility. If a tenth of a second is shorter than that ‘group period’, then it’s different: we will actually see the individual groups as separate pulses. Hence, in that case, our eye (or whatever detector: another detector will just have another averaging time) will average over a group, but not over the whole wave train. [Just in case you wonder how we humans compare with other living beings: from what I wrote above, it follows that we can see ‘flicker’ only if the oscillation is no faster than 10 or 20 Hz or so. The eye of a bee is made to see the vibrations of the feet and wings of other bees. Hence, its averaging time is much shorter, something like a hundredth of a second, so it can see flicker up to 200 oscillations per second! In addition, the eye of a bee is sensitive over a much wider range of ‘color’: it sees UV light down to a wavelength of 300 nm, whereas we don’t see light with a wavelength below 400 nm. To top it all off, it has a special sensitivity for polarized light, so light that gets reflected or diffracted looks different to the bee.]

Let’s go to the third and final case. If a tenth of a second were shorter than the period of the so-called carrier wave, i.e. the actual oscillation, then we would be able to distinguish the individual peaks and troughs of the carrier wave!
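The three cases can be sketched numerically. The model below is an assumption made for illustration. It uses a carrier of period Tc inside an envelope sin(πt/Tg), which has a node every group period Tg, and three groups in total. The detector is a crude one that simply averages the intensity (the squared amplitude) over its averaging time τ. The ‘group contrast’ says whether the detector output still rises and falls from group to group. The ‘carrier contrast’ says whether it still rises and falls within a single carrier period near the strongest part of the signal. All names and parameter values are hypothetical.

```python
import math

def wave_train(t, carrier_period, group_period):
    """Hypothetical wave train: a carrier of period Tc whose envelope
    sin(pi * t / Tg) passes through zero (a 'node') every Tg."""
    envelope = math.sin(math.pi * t / group_period)
    return envelope * math.cos(2 * math.pi * t / carrier_period)

def detector_output(amplitudes, dt, averaging_time):
    """Crude detector: moving (box-car) average of the intensity, i.e. the squared
    amplitude, over `averaging_time`. Element i averages the window starting at sample i."""
    window = max(1, round(averaging_time / dt))
    intensity = [a * a for a in amplitudes]
    running = sum(intensity[:window])
    out = [running / window]
    for i in range(window, len(intensity)):
        running += intensity[i] - intensity[i - window]
        out.append(running / window)
    return out

def contrast(values):
    """(max - min) / (max + min): about 0 for a flat signal, about 1 if it dips to zero."""
    hi, lo = max(values), min(values)
    return (hi - lo) / (hi + lo)

def regimes(carrier_period=1.0, group_period=20.0, dt=0.01):
    n = round(3 * group_period / dt)              # three groups, as in the text
    amps = [wave_train(k * dt, carrier_period, group_period) for k in range(n)]
    per_carrier = round(carrier_period / dt)      # samples per carrier period
    results = {}
    for label, tau in [("tau = 2 Tg", 2 * group_period),
                       ("tau = 2 Tc", 2 * carrier_period),
                       ("tau = Tc/50", carrier_period / 50)]:
        out = detector_output(amps, dt, tau)
        peak = out.index(max(out))
        start = max(0, peak - per_carrier // 2)
        local = out[start:start + per_carrier + 1]  # about one carrier period near the peak
        results[label] = (contrast(out), contrast(local))
    return results

for label, (group_c, carrier_c) in regimes().items():
    print(f"{label:12s} group contrast {group_c:.2f}  carrier contrast {carrier_c:.2f}")
```

In this sketch, averaging over two group periods gives a flat output (both contrasts near 0), which corresponds to one signal. Averaging over two carrier periods keeps the groups but washes out the carrier (group contrast near 1, carrier contrast near 0). A very short averaging time resolves both.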

Of course, this discussion is not limited to our eye as a sensor: any instrument will be able to measure individual phenomena only within a certain range, with an upper and a lower limit, i.e. the ‘biggest’ thing it can see, and the ‘smallest’. That explains the so-called resolution of an optical or an electron microscope: whatever the instrument, it cannot really ‘see’ stuff that’s smaller than the wavelength of the ‘light’ (real light or, in the case of an electron microscope, electron beams) it uses to ‘illuminate’ the object it is looking at. [The actual formula for the resolution of a microscope is obviously a bit more complicated, but this statement does reflect the gist of it.]
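For a rough feel of the numbers, here is a sketch using the textbook Abbe limit d = λ / (2 NA) for an idealized optical microscope, together with the de Broglie wavelength of an electron accelerated through a voltage V, with the relativistic correction included. The numerical aperture of 1.4 (oil immersion), the 550 nm wavelength and the 100 kV voltage are assumed example values. Real electron microscopes are limited by lens aberrations to resolutions much coarser than the electron wavelength, so the second number is only a lower bound in principle.

```python
import math

PLANCK = 6.62607015e-34              # J s
ELECTRON_MASS = 9.1093837015e-31     # kg
ELEMENTARY_CHARGE = 1.602176634e-19  # C
SPEED_OF_LIGHT = 299_792_458         # m/s

def abbe_limit(wavelength, numerical_aperture):
    """Abbe's diffraction limit d = lambda / (2 NA) for an ideal optical microscope."""
    return wavelength / (2 * numerical_aperture)

def electron_wavelength(accelerating_voltage):
    """De Broglie wavelength h / p of an electron accelerated through the given
    voltage, using the relativistic momentum p^2 = 2 m K (1 + K / (2 m c^2))."""
    kinetic = ELEMENTARY_CHARGE * accelerating_voltage       # J
    rest_energy = ELECTRON_MASS * SPEED_OF_LIGHT ** 2        # J
    momentum = math.sqrt(2 * ELECTRON_MASS * kinetic * (1 + kinetic / (2 * rest_energy)))
    return PLANCK / momentum

print(abbe_limit(550e-9, 1.4))     # about 1.96e-07 m, i.e. roughly 200 nm for green light
print(electron_wavelength(100e3))  # about 3.7e-12 m at 100 kV
```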

However, all that I am writing above suggests that we can think of what’s going on here as ‘waves within waves’, with the wave between nodes not being any different (in substance, that is) from the wave as a whole: we’ve got something that’s oscillating, and within each individual oscillation, we find another oscillation. From a math point of view, babushka thinking is thinking we can analyze the world using Fourier’s machinery to decompose some function (see my posts on Fourier analysis). Indeed, in the example above, we have a modulated carrier wave (it is an example of amplitude modulation, the old-fashioned way of transmitting radio signals), and we see a wave within a wave. Hence, just like with the Rutherford model of an atom, you may think there will always be ‘a wave within a wave’.
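The ‘wave within a wave’ can be written out with nothing more than the standard sum-to-product identity. Take the simplest possible case, assumed here for illustration: two plain waves of slightly different frequencies ω₁ and ω₂. Adding them gives a fast carrier at the average frequency inside a slow envelope at half the difference frequency. The nodes therefore repeat every 2π/|ω₁ − ω₂|, which plays the role of the group period Tg.

```latex
\cos(\omega_1 t) + \cos(\omega_2 t)
  = \underbrace{2\cos\!\left(\frac{\omega_1 - \omega_2}{2}\,t\right)}_{\text{envelope}}
    \,\underbrace{\cos\!\left(\frac{\omega_1 + \omega_2}{2}\,t\right)}_{\text{carrier}},
\qquad
T_g = \frac{2\pi}{\lvert \omega_1 - \omega_2 \rvert}
```

Read from right to left, this is Fourier’s machinery at work. The ‘inner’ oscillation and its envelope are nothing but a sum of two ordinary waves, which is exactly what makes it so tempting to expect the same structure at every scale.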

In this regard, you may think of fractals too: fractals are repeating or self-similar patterns that are always there, at every scale. However, the point to note is that fractals do not represent an accurate picture of how reality is actually structured: worlds within worlds are not the same.
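Mathematical self-similarity is easy to make concrete with the Koch curve, used here as an assumed stand-in for a ‘fractal coastline’. Every refinement replaces each segment by four segments one third as long. The measured length therefore grows by a factor of 4/3 per level and never converges. A physical cut-off stops the refinement and leaves a finite length; the cut-off assumed here is the size of an atom, roughly 0.1 nm. The function name and the 1 km base length are hypothetical.

```python
import math

def koch_length(base_length, resolution):
    """Length of a Koch curve built on a segment of `base_length`, refined only
    while the resulting segments would still be at least `resolution` long."""
    length, segment = base_length, base_length
    while segment / 3 >= resolution:
        segment /= 3     # each segment is replaced by four segments a third as long ...
        length *= 4 / 3  # ... so the total length grows by a factor 4/3
    return length

for cutoff in (1.0, 1e-3, 1e-6, 1e-10):  # metres; 1e-10 m is roughly atomic size
    print(f"resolution {cutoff:g} m -> length {koch_length(1000.0, cutoff):.3g} m")
```

Without a cut-off, the loop would run forever as the resolution goes to zero. With one, the answer is large but finite.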

Reality is no onion

Reality is not some kind of onion, from which you peel off a layer and then you find some other layer, similar to the first: “same-same but different”, as they’d say in Asia. The Coast of Britain is, in fact, finite, and the grain of sand you’ll pick up at one of its beaches will not look like the coastline when you put it under a microscope. In case you don’t believe me: I’ve inserted a real-life photo below. The magnification factor is a rather modest 300 times. Isn’t this amazing? [The credit for this nice picture goes to a certain Dr. Gary Greenberg. Please do google his stuff. It’s really nice.]

In short, fractals are wonderful mathematical structures but, in reality, there are limits to how small things get: we cannot carve a babushka doll out of the cellulose and lignin molecules that make up most of what we call wood. Likewise, the atoms that make up the D-glucose chains in the cellulose will never resemble the D-glucose chains. Hence, the babushka doll, the D-glucose chains that make up wood, and the atoms that make up the molecules within those macro-molecules are three different worlds. They’re not like layers of the same onion. Scale matters. The worlds inside worlds are different, and fundamentally so: not “same-same but different” but just plain different. Electrons are no longer point-like negative charges when we look at them at close range.

In fact, that’s the whole point: we can’t look at them at close range because we can’t ‘locate’ them. They aren’t particles. They are these strange ‘wavicles’, which we describe, physically and mathematically, with a complex wave function relating their position (or their momentum) to some probability amplitude. We also need to remember these funny rules for adding these amplitudes, depending on whether the ‘wavicle’ obeys Fermi or Bose statistics.
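Those ‘funny rules’ fit in a few lines. The sketch assumes the textbook case of two identical particles scattering off each other. The particle that reaches a detector at angle θ could have arrived via the ‘direct’ amplitude f(θ) or the ‘exchanged’ amplitude f(π − θ). For bosons the two amplitudes are added. For fermions in the same spin state they are subtracted. Only for distinguishable particles do we add probabilities instead. The amplitude `f` below is an arbitrary, made-up function, not a physical model.

```python
import cmath
import math

def f(theta):
    """Hypothetical scattering amplitude, invented purely for illustration."""
    return cmath.exp(1j * theta) * (1 + 0.5 * math.cos(theta))

def detection_probability(theta, statistics):
    """Relative probability of a count at angle theta (centre-of-mass frame)."""
    direct, exchanged = f(theta), f(math.pi - theta)
    if statistics == "distinguishable":
        return abs(direct) ** 2 + abs(exchanged) ** 2  # add probabilities
    if statistics == "bose":
        return abs(direct + exchanged) ** 2            # add amplitudes
    if statistics == "fermi":
        return abs(direct - exchanged) ** 2            # subtract amplitudes (same spin)
    raise ValueError(f"unknown statistics: {statistics}")

# At 90 degrees both paths have the same amplitude:
for kind in ("distinguishable", "bose", "fermi"):
    print(kind, round(detection_probability(math.pi / 2, kind), 6))
# distinguishable 2.0, bose 4.0, fermi 0.0
```

At 90 degrees, bosons show up twice as often as distinguishable particles would, while same-spin fermions never show up at all, whatever `f` is.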

Weird, but – come to think of it – no weirder, in terms of mathematical description, than these electromagnetic waves. Indeed, when jotting down all these equations and developing all those mathematical arguments, one often tends to forget that we are not talking about some physical wave here. The field vector E (or B) is a mathematical construct: it tells us what force a charge will feel when we put it here or there. It’s not like a water or sound wave that makes some medium (water or air) actually move. The field is an influence that travels through empty space. But how can something actually travel through empty space? When it’s truly empty, you can’t travel through it, can you?

Oh – you’ll say – but we’ve got these photons, don’t we? Waves are not actually waves: they come in little packets of energy, i.e. photons. Yes. You’re right. But, as mentioned above, these photons aren’t little bullets, or particles if you want. They’re as weird as the wave and, in any case, even a billiard-ball view of the world is not very satisfying: what happens exactly when two billiard balls collide in a so-called elastic collision? What are the springs on the surface of those balls that make the two balls recoil from each other? Given how quick the reaction is, they must be more like little explosive charges that detonate on impact, mustn’t they?

So any mathematical description of reality becomes ‘weird’ when you keep asking questions, like that little child I was – and I still am, in a way, I guess. Otherwise I would not be reading physics at the age of 45, would I? 🙂

Conclusion

Let me wrap up here. All of what I’ve been blogging about over the past few months concerns the classical world of physics. It consists of waves and fields on the one hand, and solid particles on the other: electrons and nucleons. But we know it’s not like that when we have more sensitive apparatus, like the one used in that 2012 double-slit electron interference experiment at the University of Nebraska–Lincoln, which I described at length in one of my earlier posts. That apparatus allowed control of two slits, both not more than 62 nanometers wide (so that’s the difference between the wavelength of dark-blue and light-blue light!), and the monitoring of single-electron detection events. Back in 1963, Feynman already knew what this experiment would yield as a result. He was sure about it, even if he thought such an instrument could never be built. [To be fully correct, he did have some vague idea about a new science, for which the term ‘nanotechnology’ was later coined, but what we can do today most probably surpasses all his expectations at the time. Too bad he died too young to see his dreams come true.]

The point to note is that this apparatus does not show us another layer of the same onion: it shows an entirely different world. While it’s part of reality, it’s not ‘our’ reality, nor is it the ‘reality’ of what’s being described by classical electromagnetic field theory. It’s different – and fundamentally so, as evidenced by those weird mathematical concepts one needs to introduce to sort of start to ‘understand’ it.

So… What do I want to say here? Nothing much. I just had to remind myself where I am right now. I myself often still fall prey to babushka thinking. We shouldn’t. We should wonder about the wood these dolls are made of. In physics, the wood seems to be math. The models I’ve presented in this blog are weird: what are those fields? And just how do they exert a force on some charge? What’s the mechanism behind it? To these questions, classical physics does not really have an answer.

But, of course, quantum mechanics does not have a very satisfactory answer either: what does it mean when we say that the wave function collapses? Out of all of the possibilities in that wonderful indeterminate world ‘inside’ the quantum-mechanical universe, one was ‘chosen’ as something that actually happened: a photon imparts momentum to an electron, for example. We can describe it, mathematically, but – somehow – we still don’t really understand what’s going on.

So what’s going on? We open a doll, and we do not find another doll that is smaller but similar. No. What we find is a completely different toy. However – Surprise! Surprise! – it’s something that can be ‘opened’ as well, to reveal even weirder stuff, for which we need even weirder ‘tools’ to somehow understand how it works (like lattice QCD, if you want an example: just google it to get an inkling of what that’s about). Where is this going to end? Did it end with the ‘discovery’ of the Higgs particle? I don’t think so.

However, with the ‘discovery’ (or, to be generous, let’s call it an experimental confirmation) of the Higgs particle, we may have hit a wall in terms of verifying our theories. At the center of a set of babushka dolls, you’ll usually have a little baby: a solid little thing that is not like the babushkas surrounding it: it’s young, male and solid, as opposed to the babushkas. Well… It seems that, in physics, we’ve got several of these little babies inside: electrons, photons, quarks, gluons, Higgs particles, etcetera. And we don’t know what’s ‘inside’ of them. Just that they’re different. Not “same-same but different”. No. Fundamentally different. So we’ve got a lot of ‘babies’ inside of reality, very different from the ‘layers’ around them, which make up ‘our’ reality. Hence, ‘Reality’ is not a fractal structure. What is it? Well… I’ve started to think we’ll never know. For all of the math and wonderful intellectualism involved, do we really get closer to an ‘understanding’ of what it’s all about?

I am not sure. The more I ‘understand’, the less I ‘know’ it seems. But then that’s probably why many physicists still nurture an acute sense of mystery, and why I am determined to keep reading. 🙂

Post scriptum: On the issue of the ‘mechanistic universe’ and the (related) issue of determinability and indeterminability: that’s not what I wanted to write about above, because I consider that issue solved. This post is meant to convey some wonder at the different models of understanding that we need to apply at different scales. It’s got little to do with determinability or not. I think that issue got solved a long time ago, and I’ll let Feynman summarize that discussion:

“The indeterminacy of quantum mechanics has given rise to all kinds of nonsense and questions on the meaning of freedom of will, and of the idea that the world is uncertain. […] Classical physics is also indeterminate. It is true, classically, that if we knew the position and the velocity of every particle in the world, or in a box of gas, we could predict exactly what would happen. And therefore the classical world is deterministic. Suppose, however, we have a finite accuracy and do not know exactly where just one atom is, say to one part in a billion. Then as it goes along it hits another atom, and because we did not know the position better than one part in a billion, we find an even larger error in the position after the collision. And that is amplified, of course, in the next collision, so that if we start with only a tiny error it rapidly magnifies to a very great uncertainty. […] Speaking more precisely, given an arbitrary accuracy, no matter how precise, one can find a time long enough that we cannot make predictions valid for that long a time. That length of time is not very large. It is not that the time is millions of years if the accuracy is one part in a billion. The time goes only logarithmically with the error. In only a very, very tiny time – less than the time it took to state the accuracy – we lose all our information. It is therefore not fair to say that from the apparent freedom and indeterminacy of the human mind, we should have realized that classical ‘deterministic’ physics could not ever hope to understand, and to welcome quantum mechanics as a release from a completely ‘mechanistic’ universe. For already in classical mechanics, there was indeterminability from a practical point of view.” (Feynman, Lectures, 1963, p. 38-10)

That really says it all, I think. I’ll just continue to keep my head down (i.e. stay away from philosophy for now) and try to find a way to open the toy inside the toy. 🙂
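Feynman’s remark that the prediction horizon ‘goes only logarithmically with the error’ can be checked with a toy model. The model assumes, purely for illustration, that every collision multiplies the position error by the same factor A. Starting from a relative error ε, all information is gone after about n = ln(1/ε) / ln A collisions. A billion-fold improvement in accuracy, from one part in 10⁹ to one part in 10¹⁸, therefore only doubles the horizon. The function name and the default A = 10 are hypothetical.

```python
import math

def collisions_until_lost(relative_error, amplification=10.0):
    """Number of collisions after which an initial relative error has grown to
    order one, if each collision multiplies the error by `amplification`
    (a constant chosen for illustration, not a measured value)."""
    return math.log(1.0 / relative_error) / math.log(amplification)

for error in (1e-9, 1e-18, 1e-27):
    print(f"initial error {error:g}: lost after ~{collisions_until_lost(error):.1f} collisions")
```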