Pre-scriptum (dated 26 June 2020): These posts on elementary math and physics have not suffered much from the attack by the dark force—which is good because I still like them. While my views on the true nature of light, matter and the force or forces that act on them have evolved significantly as part of my explorations of a more realist (classical) explanation of quantum mechanics, I think most (if not all) of the analysis in this post remains valid and fun to read. In fact, I find the simplest stuff is often the best. 🙂

Original post:

This post is not about string theory. The goal of this post is much more limited: it’s to give you a better understanding of why the metaphor of the string is so appealing. Let’s recapitulate the basics by seeing how it’s used in classical as well as in quantum physics.

In my posts on music and math, or music and physics, I described how a simple single string always vibrates in various modes at the same time: every tone is a mixture of an infinite number of elementary waves. These elementary waves, which are referred to as harmonics (or as (normal) modes, indeed) are perfectly sinusoidal, and their amplitude determines their relative contribution to the composite waveform. So we can always write the waveform F(t) as the following sum:

F(t) = a1sin(ωt) + a2sin(2ωt) + a3sin(3ωt) + … + ansin(nωt) + …

[If this is your first reading of my post, and the formula scares you away, please try again. I am writing most of my posts with teenage kids in mind, and especially this one. So I will not use anything other than simple arithmetic in this post: no integrals, no complex numbers, no logarithms. Just a bit of geometry. That’s all. So, yes, you should go through the trouble of trying to understand this formula. The only thing that you may have some trouble with is ω, i.e. angular frequency: it’s the frequency expressed in radians per time unit, rather than oscillations per second, so ω = 2π·f = 2π/T, with f the frequency as you know it (i.e. oscillations per second) and T the period of the wave.]
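If you would like to play with this formula, here is a minimal Python sketch of it. The function name `waveform`, the 110 Hz fundamental and the three amplitudes are assumptions chosen for illustration only. They are not taken from any real string, and the sum is cut off after three terms instead of running on forever.

```python
import math

def waveform(t, amplitudes, omega):
    """Sum a_n * sin(n * omega * t) over the given amplitudes (a_1, a_2, ...)."""
    return sum(a * math.sin(n * omega * t)
               for n, a in enumerate(amplitudes, start=1))

# Hypothetical example values (assumptions, not measurements).
f = 110.0                      # fundamental frequency in oscillations per second
omega = 2 * math.pi * f        # angular frequency in radians per second
T = 1 / f                      # period of the fundamental in seconds
amplitudes = [1.0, 0.5, 0.25]  # a_1, a_2, a_3

# Evaluate the truncated sum at a few fractions of the period.
for fraction in (0.0, 0.1, 0.25, 0.5):
    t = fraction * T
    print(f"t = {fraction:.2f}·T  ->  F(t) = {waveform(t, amplitudes, omega):+.4f}")
```

Changing the list of amplitudes changes the shape of the composite wave, but not its period: every term repeats after one period T of the fundamental.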

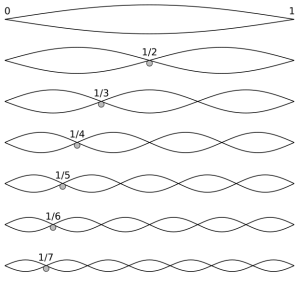

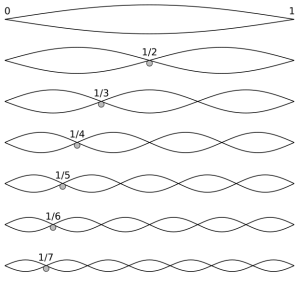

I also noted that the wavelength of these component waves (λ) is determined by the length of the string (L), and by its length only: λ1 = 2L, λ2 = L, λ3 = (2/3)·L. So these wavelengths do not depend on the material of the string, or its tension. At any point in time (so keeping t constant, rather than x, as we did in the equation above), the component waves look like this:

etcetera (1/8, 1/9,…,1/n,… 1/∞)

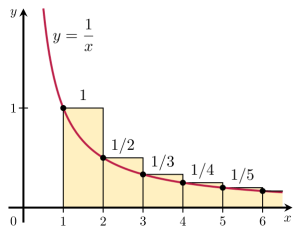

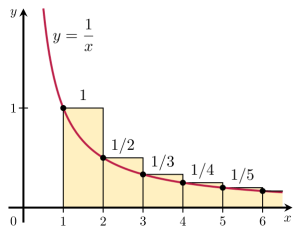

That the wavelengths of the harmonics of any actual string only depend on its length is an amazing result in light of the complexities behind them: a simple wound guitar string, for example, is not simple at all (just click the link here for a quick introduction to guitar string construction). Simple piano wire isn’t simple either: it’s made of high-carbon steel, i.e. a very complex metallic alloy. In fact, you should never think any material is simple: even the simplest molecular structures are very complicated things. Hence, it’s quite amazing all these systems are actually linear systems and that, despite the underlying complexity, those wavelength ratios form a simple harmonic series, i.e. a simple reciprocal function y = 1/x, as illustrated below.

A simple harmonic series? Hmm… I can’t resist noting that the harmonic series is, in fact, a mathematical beast. While its terms approach zero as x (or n) increases, the series itself is divergent. So it’s not like 1 + 1/2 + 1/4 + 1/8 + … + 1/2^n + …, which adds up to 2. Divergent series don’t add up to any specific number. Even Leonhard Euler – the most famous mathematician of all time, perhaps – struggled with this. In fact, as late as in 1826, another famous mathematician, Niels Henrik Abel (in light of the fact he died at age 26 (!), his legacy is truly amazing), exclaimed that a series like this was “an invention of the devil”, and that it should not be used in any mathematical proof. But then God intervened through Abel’s contemporary Augustin-Louis Cauchy 🙂 who finally cracked the nut by rigorously defining the mathematical concept of both convergent as well as divergent series, and equally rigorously determining their possibilities and limits in mathematical proofs. In fact, while medieval mathematicians had already grasped the essentials of modern calculus and, hence, had already given some kind of solution to Zeno’s paradox of motion, Cauchy’s work is the full and final solution to it. But I am getting distracted, so let me get back to the main story.
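For readers who want to see the difference between the two series with their own eyes, here is a small Python sketch. The function names are made up for this illustration. A computer can only add up a finite number of terms, of course, so this shows the trend, not a proof.

```python
def geometric_partial_sum(n_terms):
    """Add the first n_terms terms of 1 + 1/2 + 1/4 + 1/8 + ..."""
    total, term = 0.0, 1.0
    for _ in range(n_terms):
        total += term
        term /= 2  # each term is half of the previous one
    return total

def harmonic_partial_sum(n_terms):
    """Add the first n_terms terms of 1 + 1/2 + 1/3 + 1/4 + ..."""
    return sum(1 / k for k in range(1, n_terms + 1))

# The geometric sums settle down near 2; the harmonic sums keep creeping up.
for n in (10, 1_000, 100_000):
    print(f"n = {n:>7}: geometric = {geometric_partial_sum(n):.6f}, "
          f"harmonic = {harmonic_partial_sum(n):.6f}")
```

The harmonic sums grow very slowly (roughly like the logarithm of n), which is why the divergence is so easy to miss.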

More remarkable than the wavelength series itself, is its implication for the respective energy levels of all these modes. The material of the string, its diameter, its tension, etc will determine the speed with which the wave travels up and down the string. [Yes, that’s what it does: you may think the string oscillates up and down, and it does, but the waveform itself travels along the string. In fact, as I explained in my previous post, we’ve got two waves traveling simultaneously: one going one way and the other going the other.] For a specific string, that speed (i.e. the wave velocity) is some constant, which we’ll denote by c. Now, c is, obviously, the product of the wavelength (i.e. the distance that the wave travels during one oscillation) and its frequency (i.e. the number of oscillations per time unit), so c = λ·f. Hence, f = c/λ and, therefore, f1 = (1/2)·c/L, f2 = (2/2)·c/L, f3 = (3/2)·c/L, etcetera. More in general, we write fn = (n/2)·c/L. In short, the frequencies are equally spaced. To be precise, they are all (1/2)·c/L apart.
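The two rules above, λn = 2L/n and fn = (n/2)·c/L, are easy to check with a few lines of Python. This is only a sketch: the string length and the wave speed below are hypothetical values, picked so that the fundamental comes out at 110 oscillations per second, and the function names are my own.

```python
def mode_wavelength(n, length):
    """Wavelength of the n-th mode of a string fixed at both ends: 2L/n."""
    return 2 * length / n

def mode_frequency(n, length, wave_speed):
    """Frequency of the n-th mode: (n/2)·c/L."""
    return n * wave_speed / (2 * length)

# Hypothetical string (assumed values, not measurements).
L = 0.65    # length of the string in metres
c = 143.0   # wave speed along the string in metres per second

for n in range(1, 6):
    lam = mode_wavelength(n, L)
    f_n = mode_frequency(n, L, c)
    # The product wavelength × frequency should give back the wave speed c.
    print(f"n = {n}: wavelength = {lam:.4f} m, frequency = {f_n:.1f} Hz, "
          f"product = {lam * f_n:.1f} m/s")
```

Note that only `mode_frequency` needs the wave speed: the wavelengths depend on the length of the string and nothing else.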

Now, the energy of a wave is directly proportional to its frequency, always, in classical as well as in quantum mechanics. For example, for photons, we have the Planck-Einstein relation: E = h·f = ħ·ω. So that relation states that the energy is proportional to the (light) frequency of the photon, with h (i.e. the Planck constant) as the constant of proportionality. [Note that ħ is not some different constant. It’s just the ‘angular equivalent’ of h, so we have to use ħ = h/2π when frequencies are expressed in angular frequency, i.e. radians per second rather than hertz.] Because of that proportionality, the energy levels of our simple string are also equally spaced and, hence, inserting another proportionality constant, which I’ll denote by a instead of h (because it’s some other constant, obviously), we can write:

En = a·fn = (n/2)·a·c/L

Now, if we denote the fundamental frequency f1 = (1/2)·c/L, quite simply, by f (and, likewise, its angular frequency as ω), then we can re-write this as:

En = n·a·f = n·ā·ω (ā = a/2π)

This formula is exactly the same as the formula used in quantum mechanics when describing atoms as atomic oscillators, and why and how they radiate light (think of the blackbody radiation problem, for example), as illustrated below: En = n·ħ·ω = n·h·f. The only difference between the formulas is the proportionality constant: instead of a, we have Planck’s constant here: h, or ħ when the frequency is expressed as an angular frequency.
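To get a feel for the numbers in the quantum-mechanical formula En = n·h·f = n·ħ·ω, here is a short Python sketch. The oscillator frequency is a hypothetical value chosen for illustration, and the function names are my own. For h, the sketch uses the value that has been fixed by definition in the SI system since 2019.

```python
import math

H_PLANCK = 6.62607015e-34         # Planck's constant in J·s (exact SI value since 2019)
H_BAR = H_PLANCK / (2 * math.pi)  # the 'angular equivalent' of h

def energy_level(n, frequency):
    """E_n = n·h·f, with the frequency in oscillations per second."""
    return n * H_PLANCK * frequency

def energy_level_angular(n, omega):
    """E_n = n·ħ·ω, with the frequency in radians per second."""
    return n * H_BAR * omega

# Hypothetical oscillator frequency (an assumption for illustration).
f = 5.0e14                 # oscillations per second
omega = 2 * math.pi * f    # radians per second

for n in range(5):
    e_n = energy_level(n, f)
    # Dividing by h·f leaves just the counting number n.
    print(f"n = {n}: E_n = {e_n:.4e} J, E_n/(h·f) = {e_n / (H_PLANCK * f):.1f}")
```

Both functions give the same energies: whether you use h with f or ħ with ω is just a matter of how you choose to express the frequency.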

This grand result – that the energy levels associated with the various states or modes of a system are equally spaced – is referred to as the equipartition theorem in physics, and it is what connects classical and quantum physics in a very deep and fundamental way.

In fact, because they’re nothing but proportionality constants, the value of both a and h depends on our units. If we’d use the so-called natural units, i.e. equating ħ to 1, the energy formula becomes En = n·ω, and, hence, our unit of energy and our unit of frequency become one and the same. In fact, we can, of course, also re-define our time unit such that the fundamental frequency ω is one, i.e. one oscillation per (re-defined) time unit, so then we have the following remarkable formula:

En = n

Just think about it for a moment: what I am writing here is E0 = 0, E1 = 1, E2 = 2, E3 = 3, E4 = 4, etcetera. Isn’t that amazing? I am describing the structure of a system here – be it an atom emitting or absorbing photons, or a macro-thing like a guitar string – in terms of its basic components (i.e. its modes), and it’s as simple as counting: 0, 1, 2, 3, 4, etc.

You may think I am not describing anything real here, but I am. We cannot do whatever we wanna do: some stuff is grounded in reality, and in reality only, not in the math. Indeed, the fundamental frequency of our guitar string – which we used as our energy unit – is a property of the string, so that’s real: it’s not just some mathematical shape. It depends on the string’s length (which determines its wavelength), and it also depends on the propagation speed of the wave, which depends on other basic properties of the string, such as its material, its diameter, and its tension. Likewise, the fundamental frequency of our atomic oscillator is a property of the atomic oscillator or, to use a much grander term, a property of the Universe. That’s why h is a fundamental physical constant. So it’s not like π or e. [When reading physics as a freshman, it’s always useful to clearly distinguish physical constants (like Avogadro’s number, for example) from mathematical constants (like Euler’s number).]

The theme that emerges here is what I’ve been saying a couple of times already: it’s all about structure, and the structure is amazingly simple. It’s really that equipartition theorem only: all you need to know is that the energy levels of the modes of a system – any system really: an atom, a molecular system, a string, or the Universe itself – are equally spaced, and that the space between the various energy levels depends on the fundamental frequency of the system. Moreover, if we use natural units, and also re-define our time unit so the fundamental frequency is equal to 1 (so the frequencies of the other modes are 2, 3, 4 etc), then the energy levels are just 0, 1, 2, 3, 4 etc. So, yes, God kept things extremely simple. 🙂

In order to not cause too much confusion, I should add that you should read what I am writing very carefully: I am talking about the modes of a system. The system itself can have any energy level, of course, so there is no discreteness at the level of the system. I am not saying that we don’t have a continuum there. We do. What I am saying is that its energy level can always be written as a (potentially infinite) sum of the energies of its components, i.e. its fundamental modes, and those energy levels are discrete. In quantum-mechanical systems, their spacing is h·f, so that’s the product of Planck’s constant and the fundamental frequency. For our guitar, the spacing is a·f (or, using angular frequency, ā·ω: it’s the same amount). But that’s it really. That’s the structure of the Universe. 🙂

Let me conclude by saying something more about a. What information does it capture? Well… All of the specificities of the string (like its material or its tension) determine the fundamental frequency f and, hence, the energy levels of the basic modes of our string. So a has nothing to do with the particularities of our string, of our system in general. However, we can, of course, pluck our string very softly or, conversely, give it a big jolt. So our a coefficient is not related to the string as such, but to the total energy of our string. In other words, a is related to those amplitudes a1, a2, etc in our F(t) = a1sin(ωt) + a2sin(2ωt) + a3sin(3ωt) + … + ansin(nωt) + … wave equation.

How exactly? Well… Based on the fact that the total energy of our wave is equal to the sum of the energies of all of its components, I could give you some formula. However, that formula does use an integral. It’s an easy integral: energy is proportional to the square of the amplitude, and so we’re integrating the square of the wave function over the length of the string. But then I said I would not have any integral in this post, and so I’ll stick to that. In any case, even without the formula, you know enough now. For example, one of the things you should be able to reflect on is the relation between a and h. It’s got to do with structure, of course. 🙂 But I’ll let you think about that yourself.

[…] Let me help you. Think of the meaning of Planck’s constant h. Let’s suppose we’d have some elementary ‘wavicle’, like that elementary ‘string’ that string theorists are trying to define: the smallest ‘thing’ possible. It would have some energy, i.e. some frequency. Perhaps it’s just one full oscillation. Just enough to define some wavelength and, hence, some frequency indeed. Then that thing would define the smallest time unit that makes sense: it would be the time corresponding to one oscillation. In turn, because of the E = h·f relation, it would define the smallest energy unit that makes sense. So, yes, h is the quantum (or fundamental unit) of energy. It’s very small indeed (h = 6.626070040(81)×10^−34 J·s, so the first significant digit appears only after 33 zeroes behind the decimal point) but that’s because we’re living at the macro-scale and, hence, we’re measuring stuff in huge units: the joule (J) for energy, and the second (s) for time. In natural units, h would be one. [To be precise, physicists prefer to equate ħ, rather than h, to one when talking natural units. That’s because angular frequency is more ‘natural’ as well when discussing oscillations.]

What’s the conclusion? Well… Our a will be some integer multiple of h. Some incredibly large multiple, of course, but a multiple nevertheless. 🙂

Post scriptum: I didn’t say anything about strings in this post or, let me qualify, about those elementary ‘strings’ that string theorists try to define. Do they exist? Feynman was quite skeptical about it. He was happy with the so-called Standard Model of physics, and he would have been very happy to know that the existence of the Higgs field has been confirmed experimentally (that discovery is what prompted my blog!), because that confirms the Standard Model. The Standard Model distinguishes two types of wavicles: fermions and bosons. Fermions are matter particles, such as quarks and electrons. Bosons are force carriers, like photons and gluons. I don’t know anything about string theory, but my gut instinct tells me there must be more than just one mathematical description of reality. It’s the principle of duality: concepts, theorems or mathematical structures can be translated into other concepts, theorems or structures. But… Well… We’re not talking equivalent descriptions here: string theory is a different theory, it seems. For a brief but totally incomprehensible overview (for novices at least), click on the following link, provided by the C.N. Yang Institute for Theoretical Physics. If anything, it shows I’ve got a lot more to study as I am inching forward on the difficult Road to Reality. 🙂

Some content on this page was disabled on June 16, 2020 as a result of a DMCA takedown notice from The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/