Pre-script (dated 26 June 2020): Our ideas have evolved into a full-blown realistic (or classical) interpretation of all things quantum-mechanical. In addition, I note the dark force has amused himself by removing some material, which messed up the lay-out of this post as well. So no use to read this. Read my recent papers instead. 🙂

Original post:

We know how electromagnetic waves travel through space: they do so because of the mechanism described in Maxwell’s equations: a changing magnetic field causes a changing electric field, and a changing electric field causes a (changing) magnetic field, as illustrated below.

So we need some First Cause to get it all started 🙂 i.e. some current, i.e. some moving charge, but then the electromagnetic wave travels, all by itself, through empty space, completely detached from the cause. You know that by now – indeed, you’ve heard this a thousand times before – but, if you’re reading this, you want to know how it works exactly. 🙂

In my post on the Lorentz gauge, I included a few links to Feynman’s Lectures that explain the nitty-gritty of this mechanism from various angles. However, they’re pretty horrendous to read, and so I just want to summarize them a bit—if only for myself, so as to remind myself what’s important and not. In this post, I’ll focus on the speed of light: why do electromagnetic waves – light – travel at the speed of light?

You’ll immediately say: that’s a nonsensical question. It’s light, so it travels at the speed of light. Sure, smart-arse! Let me be more precise: how can we relate the speed of light to Maxwell’s equations? That is the question here. Let’s go for it.

Feynman deals with the matter of the speed of an electromagnetic wave, and the speed of light, in a rather complicated exposé on the fields from some infinite sheet of charge that is suddenly set into motion, parallel to itself, as shown below. The situation looks – and actually is – very simple, but the math is rather messy because of the rather exotic assumptions: infinite sheets and infinite acceleration are not easy to deal with. 🙂 But so the whole point of the exposé is just to prove that the speed of propagation (v) of the electric and magnetic fields is equal to the speed of light (c), and it does a marvelous job at that. So let’s focus on that here only. So what I am saying is that I am going to leave out most of the nitty-gritty and just try to get to that v = c result as fast as I possibly can. So, fasten your seat belt, please.

Most of the nitty-gritty in Feynman’s exposé is about how to determine the direction and magnitude of the electric and magnetic fields, i.e. E and B. Now, when the nitty-gritty business is finished, the grand conclusion is that both E and B travel out in both the positive as well as the negative x-direction at some speed v and sort of ‘fill’ the entire space as they do. Now, the region they are filling extends infinitely far in both the y- and z-direction but, because they travel along the x-axis, there are no fields (yet) in the region beyond x = ± v·t (t = 0 is the moment when the sheet started moving, and it moves in the positive y-direction). As you can see, the sheet of charge fills the yz-plane, and the assumption is that its speed goes from zero to u instantaneously, or very very quickly at least. So the E and B fields move out like a tidal wave, as illustrated below, and thereby ‘fill’ the space indeed, as they move out.

The magnitude of E and B is constant, but it’s not the same constant, and part of the exercise here is to determine the relationship between the two constants. As for their direction, you can see it in the first illustration: B points in the negative z-direction for x > 0 and in the positive z-direction for x < 0, while E’s direction is opposite to u’s direction everywhere, so E points in the negative y-direction. As said, you should just take my word for it, because the nitty-gritty on this – which we do not want to deal with here – is all in Feynman and so I don’t want to copy that.

The crux of the argument revolves around what happens at the wavefront itself, as it travels out. Feynman relates flux and circulation there. It’s the typical thing to do: it’s at the wavefront itself that the fields change: before they were zero, and now they are equal to that constant. The fields do not change anywhere else, so there’s no changing flux or circulation business to be analyzed anywhere else. So we define two loops at the wavefront itself: Γ1 and Γ2. They are normal to each other (cf. the top and side view of the situation below), because the E and B fields are normal to each other. And so then we use Maxwell’s equations to check out what happens with the flux and circulation there and conclude what needs to be concluded. 🙂

We start with rectangle Γ2. So one side is in the region where there are fields, and one side is in the region where the fields haven’t reached yet. There is some magnetic flux through this loop, and it is changing, so there is an emf around it, i.e. some circulation of E. The flux changes because the area in which B exists increases at speed v. Now, the time rate of change of the flux is, obviously, B times the rate of change of that area. With L the width of the rectangle, the area grows by L·v·Δt in a small time interval Δt, so the rate of change of the flux is (B·L·v·Δt)/Δt = B·L·v. Now, according to Faraday’s Law (see my previous post), this will be equal to minus the line integral of E around Γ2, whose magnitude is E·L, because only the side inside the field region contributes. So E·L = B·L·v and, hence, we find: E = v·B.

Interesting! To satisfy Faraday’s equation (which is just one of Maxwell’s equations in integral rather than in differential form), E must equal B times v, with v the speed of propagation of our ‘tidal’ wave. Now let’s look at Γ1. There we should apply the other flux rule: c²·∮B·ds (taken around Γ1) = d/dt ∫E·n da (taken over the surface bounded by Γ1).

Now the line integral is just B·L, and the right-hand side is E·L·v, so, not forgetting that c² in front – i.e. the square of the speed of light, as you know! – we get: c²·B = E·v, or E = (c²/v)·B.


Now, the E = v·B and E = (c²/v)·B equations must both apply (we’re talking one wave and one and the same phenomenon) and, obviously, that’s only possible if v = c²/v, i.e. if v = c. So the wavefront must travel at the speed of light! Waw! That’s fast. 🙂 Yes. […] Jokes aside, that’s the result we wanted here: we just proved that the speed of travel of an electromagnetic wave must be equal to the speed of light.
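If you want to see the consistency argument at work numerically, here is a minimal sketch. It is not from Feynman: the function names and the bisection search are just my own illustration. It treats the two loop conditions as two predictions for the ratio E/B at some trial speed v, and then looks for the one speed at which they agree.

```python
# Speed of light in m/s (exact SI value).
C = 299_792_458.0


def ratio_from_faraday(v):
    """E/B required by the Faraday loop (Γ2): E = v·B."""
    return v


def ratio_from_other_flux_rule(v):
    """E/B required by the loop Γ1: E = (c²/v)·B."""
    return C**2 / v


def consistent_speed(lo=1.0, hi=1.0e12, tol=1.0e-3):
    """Bisect on the mismatch between the two ratios (hypothetical helper).

    The mismatch v - c²/v is negative for v < c and positive for v > c,
    so there is exactly one sign change between lo and hi.
    """
    def mismatch(v):
        return ratio_from_faraday(v) - ratio_from_other_flux_rule(v)

    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if mismatch(mid) < 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    print(f"consistent wavefront speed: {consistent_speed():.1f} m/s")
```

Any trial speed below c makes the second rule demand a larger E than Faraday’s Law allows, and any speed above c does the opposite, which is why the search can only settle on v = c.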

As an added bonus, we also showed the mechanism of travel. It’s obvious from the equations we used to prove the result: it works through the derivatives of the fields with respect to time, i.e. ∂E/∂t and ∂B/∂t.

Done! Great! Enjoy the view!

Well… Yes and no. If you’re smart, you’ll say: we got this result because of the c² factor in that equation, so Maxwell had already put it in, so to speak. Waw! You really are a smart-arse, aren’t you? 🙂

The thing is… Well… The answer is: no. Maxwell did not put it in. Well… Yes and no. Let me explain. Maxwell’s first equation was the electric flux law ∇·E = ρ/ε₀: the flux of E through a closed surface is proportional to the charge inside. So that’s basically another way of writing Coulomb’s Law, and ε₀ was just some constant in it, the electric constant. So it’s a constant of proportionality that depends on the unit in which we measure electric charge. The only reason that it’s there is to make the units come out alright, so if we’d measure charge not in coulomb (C) but in a unit equal to 1 C/ε₀, it would disappear. If we’d do that, our new unit would be equivalent to the charge of some 700,000 protons. You can figure that magical number yourself by checking the values of the proton charge and ε₀. 🙂

OK. And then Ampère came up with the exact laws for magnetism, and they involved current and some other constant of proportionality, and Maxwell formalized that by writing ∇×B = μ₀j, with μ₀ the magnetic constant. It’s not a flux law but a circulation law: currents cause circulation of B. We get the flux rule from it by integrating it. But currents are moving charges, and so Maxwell knew magnetism was related to the same thing: electric charge. So Maxwell knew the two constants had to be related. In fact, when putting the full set of equations together – there are four, as you know – Maxwell figured out that μ₀ times ε₀ would have to be equal to the reciprocal of c², with c the speed of propagation of the wave. So Maxwell knew that, whatever the unit of charge, we’d get two constants of proportionality, an electric and a magnetic constant, and that μ₀·ε₀ would be equal to 1/c². However, while he knew that, at the time, light and electromagnetism were considered to be separate phenomena, and so Maxwell did not say that c was the speed of light: the only thing his equations told him was that c is the speed of propagation of that ‘electromagnetic’ wave that came out of his equations.

The rest is history. In 1856, the great Wilhelm Eduard Weber – you’ve seen his name before, haven’t you? – did a whole bunch of experiments which measured the electric constant rather precisely, and Maxwell jumped on it and calculated all the rest, i.e. μ₀, and so then he took the reciprocal of the square root of μ₀·ε₀ and – Bang! – he had c, the speed of propagation of the electromagnetic wave he was thinking of. Now, c was some value of the order of 3×10⁸ m/s, and so that happened to be the same as the speed of light, which suggested that Maxwell’s c and the speed of light were actually one and the same thing!
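You can redo that last step yourself. The sketch below is just an illustration: the numerical values of the two constants are modern SI values that I am plugging in as an assumption (they are not quoted anywhere in this post), and the function name is made up.

```python
import math

# Modern SI values, assumed for this sketch (not quoted in the post).
EPSILON_0 = 8.8541878128e-12   # electric constant, in F/m
MU_0 = 4.0e-7 * math.pi        # magnetic constant, in N/A² (classical value)


def propagation_speed(mu_0=MU_0, epsilon_0=EPSILON_0):
    """Return c = 1/sqrt(mu_0 * epsilon_0), in m/s."""
    return 1.0 / math.sqrt(mu_0 * epsilon_0)


if __name__ == "__main__":
    print(f"c = {propagation_speed():.4e} m/s")
```

The result is of the order of 3×10⁸ m/s, which is the coincidence that puzzled Maxwell.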

Now, I am a smart-arse too 🙂 and, hence, when I first heard this story, I actually wondered how Maxwell could possibly know the speed of light at the time: Maxwell died many years before the Michelson-Morley experiment unequivocally established the value of the speed of light. [In case you wonder: the Michelson-Morley experiment was done in 1887.] So I checked it. The fact is that the Michelson-Morley experiment concluded that the speed of light was an absolute value and that, in the process of doing so, they got a rather precise value for it, but the value of c itself had already been established, more or less, that is, by a Danish astronomer, Ole Römer, in 1676! He did so by carefully observing the timing of the repeating eclipses of Io, one of Jupiter’s moons. Newton mentioned his results in his Principia, which he wrote in 1687, duly noting that it takes about seven to eight minutes for light to travel from the Sun to the Earth. Done! The whole story is fascinating, really, so you should check it out yourself. 🙂
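As a quick sanity check on Newton’s seven to eight minutes, here is the arithmetic with modern values. Both constants below are assumptions I am adding for the sake of the example (the post itself quotes neither), and the function name is hypothetical.

```python
C = 299_792_458.0        # speed of light, in m/s (exact SI value)
AU = 149_597_870_700.0   # mean Sun-Earth distance (astronomical unit), in m


def sun_to_earth_minutes(distance_m=AU, speed=C):
    """Light travel time over the given distance, in minutes."""
    return distance_m / speed / 60.0


if __name__ == "__main__":
    print(f"light needs about {sun_to_earth_minutes():.1f} minutes")
```

With these values the travel time comes out slightly above eight minutes, so the 17th-century estimate was in the right ballpark.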

In any case, to make a long story short, Maxwell was puzzled by this mysterious coincidence, but he was bold enough to immediately point to the right conclusion, tentatively at least, and so he told the Cambridge Philosophical Society, in the very same year, i.e. 1856, that “we can scarcely avoid the inference that light consists in the transverse undulations of the same medium which is the cause of electric and magnetic phenomena.”

So… Well… Maxwell still suggests light needs some medium here, so the ‘medium’ is a reference to the infamous aether theory, but that’s not the point: what he says here is what we all take for granted now: light is an electromagnetic wave. So now we know there’s absolutely no reason whatsoever to avoid the ‘inference’, but… Well… 160 years ago, it was quite a big deal to suggest something like that. 🙂

So that’s the full story. I hope you liked it. Don’t underestimate what you just did: understanding an argument like this is like “climbing a great peak”, as Feynman puts it. So it is “a great moment” indeed. 🙂 The only thing left is, perhaps, to explain the ‘other’ flux rule I used above. Indeed, you know Faraday’s Law: ∮E·ds (taken around a loop Γ) = −d/dt ∫B·n da (taken over the surface bounded by Γ).

But that other one? Well… As I explained in my previous post, Faraday’s Law is the integral form of Maxwell’s second equation: −∂B/∂t = ∇×E. The ‘other’ flux rule above – so that’s the one with the c² in front and without a minus sign – is the integral form of Maxwell’s fourth equation: c²∇×B = j/ε₀ + ∂E/∂t, taking into account that we’re talking a wave traveling in free space, so there are no charges and currents (it’s just a wave in empty space – whatever that means) and, hence, the Maxwell equation reduces to c²∇×B = ∂E/∂t. Now, I could take you through the same gymnastics as I did in my previous post but, if I were you, I’d just apply the general principle that “the same equations must yield the same solutions” and so I’d just switch E for B and vice versa in Faraday’s equation. 🙂

So we’re done… Well… Perhaps one more thing. We’ve got these flux rules above telling us that the electromagnetic wave will travel all by itself, through empty space, completely detached from its First Cause. But… […] Well… Again you may think there’s some trick here. In other words, you may think the wavefront has to remain connected to the First Cause somehow, just like the whip below is connected to some person whipping it. 🙂

There’s no such connection. The whip is not needed. 🙂 If we’d switch off the First Cause after some time T, so our moving sheet stops moving, then we’d have the pulse below traveling through empty space. As Feynman puts it: “The fields have taken off: they are freely propagating through space, no longer connected in any way with the source. The caterpillar has turned into a butterfly!”
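To make the picture of that detached pulse a bit more concrete, here is a small sketch of where the fields are non-zero at some time t, assuming the sheet moves from t = 0 until t = T and that both the leading and the trailing edge travel at c. The function name and its arguments are my own invention, not something from Feynman.

```python
C = 299_792_458.0  # speed of light, in m/s


def field_is_present(x, t, duration):
    """Return True if the fields are non-zero at position x and time t.

    Assumes the sheet sits at x = 0, starts moving at t = 0 and stops at
    t = duration. The leading edge is then at |x| = c·t and the trailing
    edge at |x| = c·(t - duration), so the pulse is c·duration wide.
    """
    if t < 0:
        return False
    leading_edge = C * t
    trailing_edge = C * max(t - duration, 0.0)
    return trailing_edge < abs(x) <= leading_edge


if __name__ == "__main__":
    # A sheet that moved for 1 ns, looked at 5 ns after it started.
    for x in (1.0, 1.3, 1.6):
        print(x, field_is_present(x, t=5e-9, duration=1e-9))
```

Once t is larger than T, nothing in the function refers to the sheet anymore except through the two edge positions, which is the whole point: the pulse just keeps moving out on both sides.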

Now, the last question is always the same: what are those fields? What’s their reality? Here, I should refer you to one of the most delightful sections in Feynman’s Lectures. It’s on the scientific imagination. I’ll just quote the introduction to it, but I warmly recommend you go and check it out for yourself: it has no formulas whatsoever, and so you should understand all of it without any problem at all. 🙂

“I have asked you to imagine these electric and magnetic fields. What do you do? Do you know how? How do I imagine the electric and magnetic field? What do I actually see? What are the demands of scientific imagination? Is it any different from trying to imagine that the room is full of invisible angels? No, it is not like imagining invisible angels. It requires a much higher degree of imagination to understand the electromagnetic field than to understand invisible angels. Why? Because to make invisible angels understandable, all I have to do is to alter their properties a little bit—I make them slightly visible, and then I can see the shapes of their wings, and bodies, and halos. Once I succeed in imagining a visible angel, the abstraction required—which is to take almost invisible angels and imagine them completely invisible—is relatively easy. So you say, “Professor, please give me an approximate description of the electromagnetic waves, even though it may be slightly inaccurate, so that I too can see them as well as I can see almost invisible angels. Then I will modify the picture to the necessary abstraction.”

I’m sorry I can’t do that for you. I don’t know how. I have no picture of this electromagnetic field that is in any sense accurate. I have known about the electromagnetic field a long time—I was in the same position 25 years ago that you are now, and I have had 25 years more of experience thinking about these wiggling waves. When I start describing the magnetic field moving through space, I speak of the E and B fields and wave my arms and you may imagine that I can see them. I’ll tell you what I see. I see some kind of vague shadowy, wiggling lines—here and there is an E and a B written on them somehow, and perhaps some of the lines have arrows on them—an arrow here or there which disappears when I look too closely at it. When I talk about the fields swishing through space, I have a terrible confusion between the symbols I use to describe the objects and the objects themselves. I cannot really make a picture that is even nearly like the true waves. So if you have some difficulty in making such a picture, you should not be worried that your difficulty is unusual.

Our science makes terrific demands on the imagination. The degree of imagination that is required is much more extreme than that required for some of the ancient ideas. The modern ideas are much harder to imagine. We use a lot of tools, though. We use mathematical equations and rules, and make a lot of pictures. What I realize now is that when I talk about the electromagnetic field in space, I see some kind of a superposition of all of the diagrams which I’ve ever seen drawn about them. I don’t see little bundles of field lines running about because it worries me that if I ran at a different speed the bundles would disappear, I don’t even always see the electric and magnetic fields because sometimes I think I should have made a picture with the vector potential and the scalar potential, for those were perhaps the more physically significant things that were wiggling.

Perhaps the only hope, you say, is to take a mathematical view. Now what is a mathematical view? From a mathematical view, there is an electric field vector and a magnetic field vector at every point in space; that is, there are six numbers associated with every point. Can you imagine six numbers associated with each point in space? That’s too hard. Can you imagine even one number associated with every point? I cannot! I can imagine such a thing as the temperature at every point in space. That seems to be understandable. There is a hotness and coldness that varies from place to place. But I honestly do not understand the idea of a number at every point.

So perhaps we should put the question: Can we represent the electric field by something more like a temperature, say like the displacement of a piece of jello? Suppose that we were to begin by imagining that the world was filled with thin jello and that the fields represented some distortion—say a stretching or twisting—of the jello. Then we could visualize the field. After we “see” what it is like we could abstract the jello away. For many years that’s what people tried to do. Maxwell, Ampère, Faraday, and others tried to understand electromagnetism this way. (Sometimes they called the abstract jello “ether.”) But it turned out that the attempt to imagine the electromagnetic field in that way was really standing in the way of progress. We are unfortunately limited to abstractions, to using instruments to detect the field, to using mathematical symbols to describe the field, etc. But nevertheless, in some sense the fields are real, because after we are all finished fiddling around with mathematical equations—with or without making pictures and drawings or trying to visualize the thing—we can still make the instruments detect the signals from Mariner II and find out about galaxies a billion miles away, and so on.

The whole question of imagination in science is often misunderstood by people in other disciplines. They try to test our imagination in the following way. They say, “Here is a picture of some people in a situation. What do you imagine will happen next?” When we say, “I can’t imagine,” they may think we have a weak imagination. They overlook the fact that whatever we are allowed to imagine in science must be consistent with everything else we know: that the electric fields and the waves we talk about are not just some happy thoughts which we are free to make as we wish, but ideas which must be consistent with all the laws of physics we know. We can’t allow ourselves to seriously imagine things which are obviously in contradiction to the known laws of nature. And so our kind of imagination is quite a difficult game. One has to have the imagination to think of something that has never been seen before, never been heard of before. At the same time the thoughts are restricted in a strait jacket, so to speak, limited by the conditions that come from our knowledge of the way nature really is. The problem of creating something which is new, but which is consistent with everything which has been seen before, is one of extreme difficulty.”

Isn’t that great? I mean: Feynman, one of the greatest physicists of all time, didn’t write what he wrote above when he was an undergrad student or so. No. He did so in 1964, when he was 45 years old, at the height of his scientific career! And it gets better, because Feynman then starts talking about beauty. What is beauty in science? Well… Just click and check what Feynman thinks about it. 🙂

Oh… Last thing. So what is the magnitude of the E and B field? Well… You can work it out yourself, but I’ll give you the answer. The geometry of the situation makes it clear that the electric field has a y-component only, and the magnetic field a z-component only. Their magnitudes are given in terms of J, i.e. the surface current density going in the positive y-direction:
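The formulas themselves are no longer on this page. What follows is a reconstruction rather than a quote, so do check it against Feynman’s text. It assumes a small rectangular loop of width L that straddles the sheet, with the circulation law applied where the fields are already steady (so ∂E/∂t = 0 there), and then uses the E = c·B result from above. Only magnitudes are given; the directions are the ones described earlier.

```latex
% Circulation of B around a loop of width L straddling the sheet:
% two sides contribute B L each, and the loop encloses a current J L.
c^2 \cdot 2 B L = \frac{J L}{\varepsilon_0}
\quad\Longrightarrow\quad
B = \frac{J}{2 \varepsilon_0 c^2}

% Combined with E = c B at the wavefront:
E = c B = \frac{J}{2 \varepsilon_0 c}
```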

Some content on this page was disabled on June 16, 2020 as a result of a DMCA takedown notice from The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/

The resemblance with the standard diffusion equation (shown below) is, effectively, very obvious:

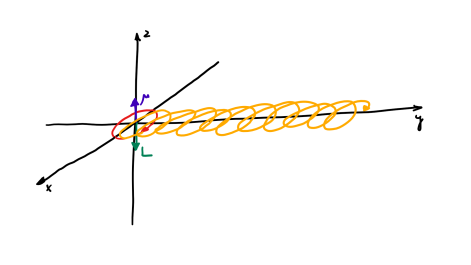

As Feynman notes, it’s just that imaginary coefficient that makes the behavior quite different. How exactly? Well… You know we get all of those complicated electron orbitals (i.e. the various wave functions that satisfy the equation) out of Schrödinger’s differential equation. We can think of these solutions as (complex) standing waves. They basically represent some equilibrium situation, and the main characteristic of each is their energy level. I won’t dwell on this because – as mentioned above – I assume you master the math. Now, you know that – if we would want to interpret these wavefunctions as something real (which is surely what I want to do!) – the real and imaginary component of a wavefunction will be perpendicular to each other. Let me copy the animation for the elementary wavefunction ψ(θ) = a·e^(−i·θ) = a·e^(−i·(E/ħ)·t) = a·cos[(E/ħ)·t] − i·a·sin[(E/ħ)·t] once more:
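In case the animation does not show, a few lines of code make the same point. This is only a sketch with made-up names: it evaluates the elementary wavefunction and lets you check that its real part is a cosine and its imaginary part a (negative) sine, i.e. two oscillations that are a quarter cycle apart.

```python
import cmath

HBAR = 1.054571817e-34  # reduced Planck constant, in J·s


def elementary_wavefunction(a, energy, t, hbar=HBAR):
    """Return psi = a·exp(-i·(E/hbar)·t) as a Python complex number."""
    theta = energy * t / hbar
    return a * cmath.exp(-1j * theta)


if __name__ == "__main__":
    # Natural units (hbar = 1, E = 1) keep the numbers readable.
    for t in (0.0, 0.5, 1.0):
        psi = elementary_wavefunction(1.0, 1.0, t, hbar=1.0)
        print(t, psi.real, psi.imag)
```

When the real part is at its maximum (t = 0), the imaginary part is zero, and a quarter cycle later it is the other way around.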


But that shouldn’t distract you. 🙂 The question here is the following: could we possibly think of a new formulation of Schrödinger’s equation – using vectors (again, real vectors – not these weird state vectors) rather than complex algebra?
