Preliminary note: This post may cause brain damage. 🙂 If you haven’t worked your way through a good introduction to physics – including the math – you will probably not understand what this is about. So… Well… Sorry. 😦 But if you have… Then this should be very interesting. Let’s go. 🙂

If you know one or two things about quantum math – Schrödinger’s equation and all that – then you’ll agree the math is anything but straightforward. Personally, I find the most annoying thing about wavefunction math is those transformation matrices: every time we look at the same thing from a different direction, we need to transform the wavefunction using one or more rotation matrices – and that gets quite complicated!

Now, if you have read any of my posts on this or my other blog, then you know I firmly believe the wavefunction represents something real or… Well… Perhaps it’s just the next best thing to reality: we cannot know das Ding an sich, but the wavefunction gives us everything we would want to know about it (linear or angular momentum, energy, and whatever else we have an operator for). So what am I thinking of? Let me first quote Feynman’s summary interpretation of Schrödinger’s equation (Lectures, III-16-1):

“We can think of Schrödinger’s equation as describing the diffusion of the probability amplitude from one point to the next. […] But the imaginary coefficient in front of the derivative makes the behavior completely different from the ordinary diffusion such as you would have for a gas spreading out along a thin tube. Ordinary diffusion gives rise to real exponential solutions, whereas the solutions of Schrödinger’s equation are complex waves.”

Feynman further formalizes this in his Lecture on Superconductivity (Feynman, III-21-2), in which he refers to Schrödinger’s equation as the “equation for continuity of probabilities”. His analysis there is centered on the local conservation of energy, which makes me think Schrödinger’s equation might be an energy diffusion equation. I’ve written about this ad nauseam in the past, and so I’ll just refer you to one of my papers here for the details, and limit this post to the basics, which are as follows.

The wave equation (so that’s Schrödinger’s equation in its non-relativistic form, which is an approximation that is good enough) is written as:

∂ψ(x, t)/∂t = i·(1/2)·(ħ/meff)·∇²ψ(x, t) − (i/ħ)·V·ψ(x, t)

The resemblance with the standard diffusion equation (shown below) is, effectively, very obvious:

∂φ(x, t)/∂t = D·∇²φ(x, t) + S

As Feynman notes, it’s just that imaginary coefficient that makes the behavior quite different. How exactly? Well… You know we get all of those complicated electron orbitals (i.e. the various wave functions that satisfy the equation) out of Schrödinger’s differential equation. We can think of these solutions as (complex) standing waves. They basically represent some equilibrium situation, and the main characteristic of each is its energy level. I won’t dwell on this because – as mentioned above – I assume you have mastered the math. Now, you know that – if we want to interpret these wavefunctions as something real (which is surely what I want to do!) – the real and imaginary components of a wavefunction will be perpendicular to each other. Let me copy the animation for the elementary wavefunction ψ(θ) = a·e^(−i∙θ) = a·e^(−i∙(E/ħ)·t) = a·cos[(E/ħ)∙t] − i·a·sin[(E/ħ)∙t] once more:

So… Well… That 90° angle makes me think of the similarity with the mathematical description of an electromagnetic wave. Let me quickly show you why. For a particle moving in free space – with no external force fields acting on it – there is no potential (V = 0) and, therefore, the Vψ term – which is just the equivalent of the sink or source term S in the diffusion equation – disappears. Therefore, Schrödinger’s equation reduces to:

∂ψ(x, t)/∂t = i·(1/2)·(ħ/meff)·∇²ψ(x, t)

Now, the key difference with the diffusion equation – let me write it for you once again: ∂φ(x, t)/∂t = D·∇²φ(x, t) – is that Schrödinger’s equation gives us two equations for the price of one. Indeed, because ψ is a complex-valued function, with a real and an imaginary part, we get the following equations:

- Re(∂ψ/∂t) = −(1/2)·(ħ/meff)·Im(∇²ψ)

- Im(∂ψ/∂t) = (1/2)·(ħ/meff)·Re(∇²ψ)

Huh? Yes. These equations are easily derived from noting that two complex numbers a + i∙b and c + i∙d are equal if, and only if, their real and imaginary parts are the same. Now, the ∂ψ/∂t = i∙(1/2)∙(ħ/meff)∙∇²ψ equation amounts to writing something like this: a + i∙b = i∙(c + i∙d). Now, remembering that i² = −1, you can easily figure out that i∙(c + i∙d) = i∙c + i²∙d = −d + i∙c. [Now that we’re getting a bit technical, let me note that meff is the effective mass of the particle, which depends on the medium. For example, an electron traveling in a solid (a transistor, for example) will have a different effective mass than in an atom. In free space, we can drop the subscript and just write meff = m.] 🙂 OK. Onwards! 🙂
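If you would rather see the split into a real and an imaginary equation checked with numbers, here is a minimal sketch in Python. It is only an illustration under my own assumptions: natural units (ħ = m = 1), one spatial dimension, an arbitrary wavenumber, and a plane wave e^(i(kx − ωt)) with ω = ħk²/(2m) as the test solution. The names `psi`, `d_dt`, `laplacian` and `residuals` are made up for this example, and the derivatives are simple finite-difference estimates.

```python
import cmath

HBAR = 1.0  # assumption: natural units, chosen only for illustration
M = 1.0     # assumption: free space, so meff = m
K = 2.0     # assumption: an arbitrary wavenumber

OMEGA = HBAR * K**2 / (2.0 * M)  # free-particle dispersion relation


def psi(x, t):
    """Plane-wave solution of the free-space Schrödinger equation (1D)."""
    return cmath.exp(1j * (K * x - OMEGA * t))


def d_dt(x, t, h=1e-5):
    """Central finite-difference estimate of the time derivative of psi."""
    return (psi(x, t + h) - psi(x, t - h)) / (2.0 * h)


def laplacian(x, t, h=1e-4):
    """Central finite-difference estimate of the second x-derivative of psi."""
    return (psi(x + h, t) - 2.0 * psi(x, t) + psi(x - h, t)) / h**2


def residuals(x, t):
    """Mismatch in the real equation and in the imaginary equation."""
    coeff = 0.5 * HBAR / M
    dpsi = d_dt(x, t)
    lap = laplacian(x, t)
    res_re = dpsi.real + coeff * lap.imag  # Re(dpsi/dt) = -(1/2)(hbar/m) Im(lap)
    res_im = dpsi.imag - coeff * lap.real  # Im(dpsi/dt) = +(1/2)(hbar/m) Re(lap)
    return res_re, res_im


if __name__ == "__main__":
    print(residuals(0.3, 0.7))
```

Both residuals should come out very close to zero (limited only by the finite-difference step), which is just another way of saying that the time derivative of the real part is driven by the curvature of the imaginary part, and vice versa.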

The equations above make me think of the equations for an electromagnetic wave in free space (no stationary charges or currents):

- ∂B/∂t = –∇×E

- ∂E/∂t = c²∇×B

Now, these equations – and, I must therefore assume, the other equations above as well – effectively describe a propagation mechanism in spacetime, as illustrated below:

You know how it works for the electromagnetic field: it’s the interplay between circulation and flux. Indeed, circulation around some axis of rotation creates a flux in a direction perpendicular to it, and that flux causes this, and then that, and it all goes round and round and round. 🙂 Something like that. 🙂 I will let you look up how it goes, exactly. The principle is clear enough. Somehow, in this beautiful interplay between linear and circular motion, energy is borrowed from one place and then returns to the other, cycle after cycle.

Now, we know the wavefunction consists of a sine and a cosine: the cosine is the real component, and the sine is the imaginary component. Could they be equally real? Could each represent half of the total energy of our particle? I firmly believe they do. The obvious question then is the following: why wouldn’t we represent them as vectors, just like E and B? I mean… Representing them as vectors (I mean real vectors here – something with a magnitude and a direction in a real space – as opposed to state vectors from the Hilbert space) would show they are real, and there would be no need for cumbersome transformations when going from one representational base to another. In fact, that’s why vector notation was invented (sort of): we don’t need to worry about the coordinate frame. It’s much easier to write physical laws in vector notation because… Well… They’re the real thing, aren’t they? 🙂

What about dimensions? Well… I am not sure. However, because we are – arguably – talking about some pointlike charge moving around in those oscillating fields, I would suspect the dimension of the real and imaginary component of the wavefunction will be the same as that of the electric and magnetic field vectors E and B. We may want to recall these:

- E is measured in newton per coulomb (N/C).

- B is measured in newton per coulomb divided by m/s, so that’s (N/C)/(m/s).

The weird dimension of B is because of the weird force law for the magnetic force. It involves a vector cross product, as shown by Lorentz’ formula:

F = qE + q(v×B)

Of course, it is only one force (one and the same physical reality), as evidenced by the fact that we can write B as the following vector cross-product: B = (1/c)∙ex×E, with ex the unit vector pointing in the x-direction (i.e. the direction of propagation of the wave). [Check it, because you may not have seen this expression before. Just take a piece of paper and think about the geometry of the situation.] Hence, we may associate the (1/c)∙ex× operator, which amounts to a rotation by 90 degrees, with the s/m dimension. Now, multiplication by i also amounts to a rotation by 90 degrees. Hence, if we can agree on a suitable convention for the direction of rotation here, we may boldly write:

B = (1/c)∙ex×E = (1/c)∙i∙E
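To make the ‘suitable convention’ a bit more tangible, here is a small Python sketch. The convention is my assumption for this illustration: a transverse vector (0, Ey, Ez) is identified with the complex number Ey + i∙Ez, so the y-axis plays the role of the real axis and the z-axis that of the imaginary axis. I also leave out the 1/c factor, because it only rescales the result. The function names are hypothetical.

```python
def cross(a, b):
    """Ordinary vector cross product of two 3-tuples."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


E_X = (1.0, 0.0, 0.0)  # unit vector along the direction of propagation


def rotate_with_cross(ey, ez):
    """Apply ex× to the transverse vector (0, ey, ez); return its (y, z) part."""
    _, ry, rz = cross(E_X, (0.0, ey, ez))
    return ry, rz


def rotate_with_i(ey, ez):
    """Multiply ey + i*ez by i; return (real part, imaginary part)."""
    z = 1j * complex(ey, ez)
    return z.real, z.imag


if __name__ == "__main__":
    print(rotate_with_cross(3.0, 4.0))
    print(rotate_with_i(3.0, 4.0))
```

With this particular choice of axes, both operations send (Ey, Ez) to (−Ez, Ey), i.e. a counter-clockwise rotation by 90 degrees in the y-z plane. Swap the roles of y and z and you get the opposite sense of rotation, which is exactly why the convention needs to be agreed on first.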

This is, in fact, what triggered my geometric interpretation of Schrödinger’s equation about a year ago now. I have had little time to work on it, but think I am on the right track. Of course, you should note that, for an electromagnetic wave, the magnitudes of E and B reach their maximum, minimum and zero point simultaneously (as shown below). So their phase is the same.

In contrast, the phase of the real and imaginary component of the wavefunction is not the same, as shown below.

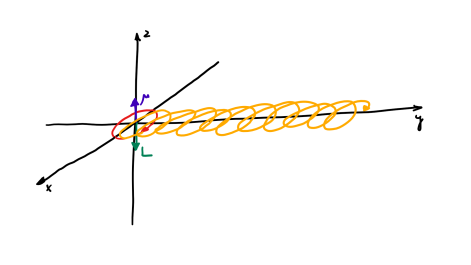
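If you want to check that phase difference with numbers rather than pictures, the following Python sketch does it for the elementary wavefunction a·e^(−i∙(E/ħ)·t). The amplitude and the value of E/ħ are arbitrary values I picked for illustration, and the helper names are hypothetical.

```python
import cmath
import math

A = 1.0            # assumption: unit amplitude
E_OVER_HBAR = 2.0  # assumption: arbitrary angular frequency E/hbar


def psi(t):
    """Elementary wavefunction a*exp(-i*(E/hbar)*t)."""
    return A * cmath.exp(-1j * E_OVER_HBAR * t)


def quarter_period():
    """Time needed for the phase (E/hbar)*t to advance by 90 degrees."""
    return (math.pi / 2.0) / E_OVER_HBAR


def phase_lag_error(t):
    """Difference between Im(psi) now and Re(psi) a quarter period later."""
    return psi(t).imag - psi(t + quarter_period()).real


if __name__ == "__main__":
    for t in (0.0, 0.4, 1.3):
        print(t, psi(t).real, psi(t).imag, phase_lag_error(t))
```

The last column should be zero up to rounding: the imaginary component at time t equals the real component a quarter of a cycle later, so the two components never reach their maximum or their zero point at the same time, unlike the magnitudes of E and B.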

In fact, because of the Stern-Gerlach experiment, I am actually more thinking of a motion like this:

But that shouldn’t distract you. 🙂 The question here is the following: could we possibly think of a new formulation of Schrödinger’s equation – using vectors (again, real vectors – not these weird state vectors) rather than complex algebra?

I think we can, but then I wonder why the inventors of the wavefunction – Heisenberg, Born, Dirac, and Schrödinger himself, of course – never thought of that. 🙂

Hmm… I need to do some research here. 🙂

Post scriptum: You will, of course, wonder how and why the matter-wave would be different from the electromagnetic wave if my suggestion that the dimension of the wavefunction component is the same is correct. The answer is: the difference lies in the phase difference and then, most probably, the different orientation of the angular momentum. Do we have any other possibilities? 🙂

P.S. 2: I also published this post on my new blog: https://readingeinstein.blog/. However, I thought the followers of this blog should get it first. 🙂

Quite interesting. Notably, Schrodinger’s equation is just a re-use of the wave equation that comes from harmonic motion of something tangible, which is why it’s rather bizarre to see quantum physicists interpreting it as merely a probability wave. But when we consider the fact that real strings are “tied” to something – an endpoint of sorts – and must interact with it on a particular plane of existence, the analogy should hold true for the Schrodinger equation: The borders (what the wave is “tied” to) are potential energy, and therefore, the wave itself – by virtue of the fact that it must interact on the same plane of existence – should also be an energy wave, which seems to be what you’re saying (albeit you’ve come to these conclusions mathematically rather than analogously).

In comparing Schrodinger’s wave with the electromagnetic (EM) wave of light, it might be helpful to consider the EM wave as not needing to maintain some physical presence. If we rotate one component of the EM wave (say, the magnetic component) to be in the same plane as the other (say, the electric) but pointing in the opposite direction, we could consider the two waves to be binding each other as though each X-axis value had a rubber band pulling the components together, resulting in a bouncing of the wave. There is no “mass” to be maintained. Whereas for an electron, that mass must contain some level of energy along some plane. It can exist entirely in one or the other or part of each, but it cannot go to zero because it MUST exist. Perhaps it could be said that the wave itself contains the state of the particle at that point in space. You can’t simply delete the particle. Its oscillations in going from one dimension to another are like squashing silly putty: when pressed out of one space, it just pops up somewhere else.

Thanks for your thoughtful comments. Fully agree. What we think of as an electron is a weird combination of an orbital and a pointlike charge whizzing around in it. The shape of the orbital is not necessarily symmetrical (only s-orbitals are) and so it has some real non-symmetric geometry. As mentioned, I think the key difference between a photon and an electron is that – for an electron – the oscillation effectively has to push and pull that charge around. Even if we think of that charge having zero ‘rest mass’ (whatever that may be), it sort of creates an ‘electromagnetic mass’ (mass is just a measure of inertia, right), and that’s why an electron can’t travel through space at the speed of light. The standard representation of the wavefunction (and wavefunction) is, effectively, dependent on the pre-establishment of the ‘line of sight’: the line between the object and the observer already establishes (part of) the reference frame. In any physical interpretation of the wavefunction, the directions of the real and imaginary component then need to be defined relative to this line of sight. I originally thought both had to be perpendicular to it, but perhaps the real direction is just the same as that line of sight, and it’s only the imaginary axis that is perpendicular to it. In that case, the plane of orbit of our electron will comprise the line of sight, and we have a ‘flying saucer’ along the direction of motion, as opposed to the cork-screw-shaped motion of the EM wave. I like the idea that, in such an interpretation, the key difference between the EM wave and the matter-wave is just: (1) geometry (the physical dimensions of the ‘components’ of both waves would be the same: we’re talking about an oscillating electromagnetic field in both cases), and (2) the presence – or, in the case of a photon, the absence – of a pointlike charge in that whirling oscillation. Thanks again and have a great day! JL

I agree. Since you’ve already posted another article, I’d like to extend our discussion there.

I read with interest (in your earlier post) your ideas concerning the Schroedinger equation as compared to your rewriting of Maxwell’s equation for magnetism; it was cool how you identified the dimension of acceleration and waves of acceleration. I wrote to comment about your model of the electron inspired by the Stern-Gerlach experiment in this post. Others are thinking along similar lines, in particular, (1) David Hestenes in his papers on Zitterbewegung and how he can derive an electron’s spin from a model using helical motion and (2) Oliver Consa in related work in “Helical Model of the Electron” (http://vixra.org/abs/1408.0203) and later papers. Consa is able to derive time effects of relativity to boot. Maybe a great simplification will happen soon.

Thanks a lot! Sorry I didn’t look at my site for a while, so that’s why your comment wasn’t online. But many thanks! I really appreciate it. I’ve come across Hestenes’ paper and it’s on my desk! Unfortunately, I do have a day job – so there’s a lot of stuff on my desk. But thanks for the appreciation and encouragement!