Pre-script (dated 26 June 2020): This post got mutilated by the removal of some material by the dark force. You should be able to follow the main story line, however. If anything, the lack of illustrations might actually help you to think things through for yourself. In any case, we now have different views on these concepts as part of our realist interpretation of quantum mechanics, so we recommend you read our recent papers instead of these old blog posts.

Original post:

A comment on an article on my other blog inspired me to structure some thoughts that are spread over various blog posts. What follows below is probably the first draft of an article or a paper I plan to write. Or, who knows, I might re-write my two introductory books on quantum physics and publish a new edition soon. 🙂

Physical dimensions and Uncertainty

The physical dimension of the quantum of action (h or ħ = h/2π) is force (expressed in newton) times distance (expressed in meter) times time (expressed in seconds): N·m·s. Now, you may think this N·m·s dimension is kinda hard to imagine. We can imagine its individual components, right? Force, distance and time. We know what they are. But the product of all three? What is it, really?

It shouldn’t be all that hard to imagine what it might be, right? The N·m·s unit is also the unit in which angular momentum is expressed – and you can sort of imagine what that is, right? Think of a spinning top, or a gyroscope. We may also think of the following:

- [h] = N·m·s = (N·m)·s = [E]·[t]

- [h] = N·m·s = (N·s)·m = [p]·[x]

Hence, the physical dimension of action is that of energy (E) multiplied by time (t) or, alternatively, that of momentum (p) times distance (x). To be precise, the second dimensional equation should be written with p and x as vectors, because both the momentum and the distance traveled will be associated with some direction. It’s a moot point for the discussion at the moment, though. Let’s think about the first equation first: [h] = [E]·[t]. What does it mean?

Energy… Hmm… In real life, we are usually not interested in the energy of a system as such, but in the energy it can deliver, or absorb, per second. This is referred to as the power of a system, and it’s expressed in J/s, or watt. Power is also defined as the (time) rate at which work is done. Hmm… But so here we’re multiplying energy and time. So what’s that? After Hiroshima and Nagasaki, we can sort of imagine the energy of an atomic bomb. We can also sort of imagine the power that’s being released by the Sun in light and other forms of radiation, which is about 385×10²⁴ joule per second. But energy times time? What’s that?

I am not sure. If we think of the Sun as a huge reservoir of energy, then the physical dimension of action is just like having that reservoir of energy guaranteed for some time, regardless of how fast or how slow we use it. So, in short, it’s just like the Sun – or the Earth, or the Moon, or whatever object – just being there, for some definite amount of time. So, yes: some definite amount of mass or energy (E) for some definite amount of time (t).

Let’s bring the mass-energy equivalence formula in here: E = mc². Hence, the physical dimension of action can also be written as [h] = [E]·[t] = [m]·[c]²·[t] = (kg·m²/s²)·s = kg·m²/s. What does that say? Not all that much – for the time being, at least. We can get this [h] = kg·m²/s through some other substitution as well. A force of one newton will give a mass of 1 kg an acceleration of 1 m/s per second. Therefore, 1 N = 1 kg·m/s² and, hence, the physical dimension of h, or the unit of angular momentum, may also be written as 1 N·m·s = 1 (kg·m/s²)·m·s = 1 kg·m²/s, i.e. the product of mass, velocity and distance.
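The unit juggling above is easy to check mechanically. What follows is only a minimal sketch: it represents a physical dimension as a tuple of exponents of (kg, m, s), and the helper names `mul` and `power` are made up for this illustration, not taken from any library.

```python
# A dimension is a tuple of exponents of the SI base units (kg, m, s).
# The helpers below are illustrative names, not a library API.

def mul(a, b):
    """Multiply two quantities: their unit exponents add up."""
    return tuple(x + y for x, y in zip(a, b))

def power(a, n):
    """Raise a quantity to an integer power: exponents get multiplied."""
    return tuple(x * n for x in a)

KG = (1, 0, 0)
M = (0, 1, 0)
S = (0, 0, 1)

VELOCITY = mul(M, power(S, -1))      # m/s
ACCELERATION = mul(M, power(S, -2))  # m/s^2
NEWTON = mul(KG, ACCELERATION)       # kg·m/s^2
JOULE = mul(NEWTON, M)               # N·m
MOMENTUM = mul(KG, VELOCITY)         # kg·m/s, which is also N·s

ACTION = mul(mul(NEWTON, M), S)      # N·m·s

print(ACTION)                                       # (1, 2, -1), i.e. kg·m^2/s
print(ACTION == mul(JOULE, S))                      # [E]·[t]
print(ACTION == mul(MOMENTUM, M))                   # [p]·[x]
print(ACTION == mul(mul(KG, power(VELOCITY, 2)), S))  # [m]·[c]^2·[t]
```

All three comparisons come out true, which is just the dimensional argument of the text written as bookkeeping on exponents.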

Hmm… What can we do with that? Nothing much for the moment: our first reading of it is just that it reminds us of the definition of angular momentum – some mass with some velocity rotating around an axis. What about the distance? Oh… The distance here is just the distance from the axis, right? Right. But… Well… It’s like having some amount of linear momentum available over some distance – or in some space, right? That’s sufficiently significant as an interpretation for the moment, I’d think…

Fundamental units

This makes one think about what units would be fundamental – and what units we’d consider as being derived. Formally, the newton is a derived unit in the metric system, as opposed to the units of mass, length and time (kg, m, s). Nevertheless, I personally like to think of force as being fundamental: a force is what causes an object to deviate from its straight trajectory in spacetime. Hence, we may want to think of the quantum of action as representing three fundamental physical dimensions: (1) force, (2) time and (3) distance – or space. We may then look at energy and (linear) momentum as physical quantities combining (1) force and distance and (2) force and time respectively.

Let me write this out:

- Force times length (think of a force that is acting on some object over some distance) is energy: 1 joule (J) = 1 newton·meter (N·m). Hence, we may think of the concept of energy as a projection of action in space only: we make abstraction of time. The physical dimension of the quantum of action should then be written as [h] = [E]·[t]. [Note the square brackets tell us we are looking at a dimensional equation only, so [t] is just the physical dimension of the time variable. It’s a bit confusing because I also use square brackets as parentheses.]

- Conversely, the magnitude of linear momentum (p = m·v) is expressed in newton·seconds: 1 kg·m/s = 1 (kg·m/s²)·s = 1 N·s. Hence, we may think of (linear) momentum as a projection of action in time only: we make abstraction of its spatial dimension. Think of a force that is acting on some object during some time. The physical dimension of the quantum of action should then be written as [h] = [p]·[x].

Of course, a force that is acting on some object during some time, will usually also act on the same object over some distance but… Well… Just try, for once, to make abstraction of one of the two dimensions here: time or distance.

It is a difficult thing to do because, when everything is said and done, we don’t live in space or in time alone, but in spacetime and, hence, such abstractions are not easy. [Of course, now you’ll say that it’s easy to think of something that moves in time only: an object that is standing still does just that – but then we know movement is relative, so there is no such thing as an object that is standing still in space in an absolute sense. Hence, objects never stand still in spacetime.] In any case, we should try such abstractions, if only because the principle of least action is so essential and deep in physics:

- In classical physics, the path of some object in a force field will minimize the total action (which is usually written as S) along that path.

- In quantum mechanics, the same action integral will give us various values S – each corresponding to a particular path – and each path (and, therefore, each value of S, really) will be associated with a probability amplitude that will be proportional to some constant times e^(i·θ) = e^(i·(S/ħ)). Because ħ is so tiny, even a small change in S will give a completely different phase angle θ. Therefore, most amplitudes will cancel each other out as we take the sum of the amplitudes over all possible paths: only the paths that nearly give the same phase matter. In practice, these are the paths that are associated with a variation in S of an order of magnitude that is equal to ħ.
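The cancellation argument in the second bullet can be made tangible with a toy calculation. This is a sketch only, not a path integral: the lists of action values are invented for illustration, and `summed_amplitude` is a hypothetical helper that simply adds unit amplitudes e^(i·S/ħ) and divides by the number of paths.

```python
import cmath

HBAR = 1.054571817e-34  # reduced Planck constant in J·s

def summed_amplitude(actions):
    """Size of the sum of unit amplitudes e^(i·S/ħ), divided by the path count."""
    total = sum(cmath.exp(1j * s / HBAR) for s in actions)
    return abs(total) / len(actions)

n = 1000
s0 = 1.0e-30  # arbitrary reference action in J·s (an assumption for the toy example)

# Actions that differ from s0 by much less than ħ: the phases nearly coincide.
near = [s0 + 0.001 * HBAR * k / n for k in range(n)]

# Actions spread evenly over 50 full phase cycles (2π·ħ each): the phases point everywhere.
far = [s0 + 50 * 2 * cmath.pi * HBAR * k / n for k in range(n)]

print(summed_amplitude(near))  # close to 1: the amplitudes reinforce each other
print(summed_amplitude(far))   # close to 0: the amplitudes cancel out
```

The first group behaves like the paths near the classical one; the second group is what the rest of the paths look like once S varies by many multiples of ħ.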

The paragraph above summarizes, in essence, Feynman’s path integral formulation of quantum mechanics. We may, therefore, think of the quantum of action expressing itself (1) in time only, (2) in space only, or – much more likely – (3) expressing itself in both dimensions at the same time. Hence, if the quantum of action gives us the order of magnitude of the uncertainty – think of writing something like S ± ħ – we may re-write our dimensional [ħ] = [E]·[t] and [ħ] = [p]·[x] equations as the uncertainty equations:

- ΔE·Δt = h

- Δp·Δx = h

You should note here that it is best to think of the uncertainty relations as a pair of equations, if only because you should also think of the concept of energy and momentum as representing different aspects of the same reality, as evidenced by the (relativistic) energy-momentum relation (E² = p²c² + m₀²c⁴). Also, the actual path – or, to be more precise, what we might associate with the concept of the actual path – is likely to be some mix of Δx and Δt. If Δt is very small, then Δx will be very large. In order to move over such distance, our particle will require a larger energy, so ΔE will be large. Likewise, if Δt is very large, then Δx will be very small and, therefore, ΔE will be very small. You can also reason in terms of Δx, and talk about momentum rather than energy. You will arrive at the same conclusions: the ΔE·Δt = h and Δp·Δx = h relations represent two aspects of the same reality – or, at the very least, what we might think of as reality.

Also think of the following: if ΔE·Δt = h and Δp·Δx = h, then ΔE·Δt = Δp·Δx and, therefore, ΔE/Δp must be equal to Δx/Δt. Hence, the ratio of the uncertainty about x (the distance) and the uncertainty about t (the time) equals the ratio of the uncertainty about E (the energy) and the uncertainty about p (the momentum).
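A quick numerical check of that ratio, as a sketch: the values picked for Δt and Δx are arbitrary assumptions, and the function names are invented for this illustration. The code takes the ΔE·Δt = h and Δp·Δx = h relations at face value, as the text does.

```python
import math

H = 6.626070040e-34  # Planck's constant in J·s, as quoted in this post

def delta_e(delta_t):
    """Energy uncertainty implied by ΔE·Δt = h."""
    return H / delta_t

def delta_p(delta_x):
    """Momentum uncertainty implied by Δp·Δx = h."""
    return H / delta_x

delta_t = 1.0e-15  # seconds (arbitrary choice)
delta_x = 3.0e-10  # meters (arbitrary choice)

ratio_energy_momentum = delta_e(delta_t) / delta_p(delta_x)  # ΔE/Δp
ratio_space_time = delta_x / delta_t                         # Δx/Δt

print(ratio_energy_momentum, ratio_space_time)
print(math.isclose(ratio_energy_momentum, ratio_space_time))
```

Note that both ratios have the dimension of a velocity (m/s), whatever values we pick.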

Of course, you will note that the actual uncertainty relations have a factor 1/2 in them. This may be explained by thinking of both negative as well as positive variations in space and in time.

We will obviously want to do some more thinking about those physical dimensions. The idea of a force implies the idea of some object – of some mass on which the force is acting. Hence, let’s think about the concept of mass now. But… Well… Mass and energy are supposed to be equivalent, right? So let’s look at the concept of energy too.

Action, energy and mass

What is energy, really? In real life, we are usually not interested in the energy of a system as such, but in the energy it can deliver, or absorb, per second. This is referred to as the power of a system, and it’s expressed in J/s. However, in physics, we always talk about energy – not power – so… Well… What is the energy of a system?

According to de Broglie and Einstein – and so many other eminent physicists, of course – we should not only think of the kinetic energy of its parts, but also of their potential energy, and their rest energy, and – for an atomic system – we may add some internal energy, which may be binding energy, or excitation energy (think of a hydrogen atom in an excited state, for example). A lot of stuff. 🙂 But, obviously, Einstein’s mass-energy equivalence formula comes to mind here, and summarizes it all:

E = m·c²

The m in this formula refers to mass – not to meter, obviously. Stupid remark, of course… But… Well… What is energy, really? What is mass, really? What’s that equivalence between mass and energy, really?

I don’t have the definite answer to that question (otherwise I’d be famous), but… Well… I do think physicists and mathematicians should invest more in exploring some basic intuitions here. As I explained in several posts, it is very tempting to think of energy as some kind of two-dimensional oscillation of mass. A force over some distance will cause a mass to accelerate. This is reflected in the dimensional analysis:

[E] = [m]·[c²] = 1 kg·m²/s² = 1 (kg·m/s²)·m = 1 N·m

The kg and m/s² factors make this abundantly clear: m/s² is the physical dimension of acceleration: (the change in) velocity per time unit.

Other formulas now come to mind, such as the Planck-Einstein relation: E = h·f = ω·ħ. We could also write: E = h/T. Needless to say, T = 1/f is the period of the oscillation. So we could say, for example, that the energy of some particle times the period of the oscillation gives us Planck’s constant again. What does that mean? Perhaps it’s easier to think of it the other way around: E/f = h = 6.626070040(81)×10⁻³⁴ J·s. Now, f is the number of oscillations per second. Let’s write it as f = n/s, so we get:

E/f = E/(n/s) = E·s/n = 6.626070040(81)×10⁻³⁴ J·s ⇔ E/n = 6.626070040(81)×10⁻³⁴ J

What an amazing result! Our wavicle – be it a photon or a matter-particle – will always pack 6.626070040(81)×10⁻³⁴ joule in one oscillation, so that’s the numerical value of Planck’s constant which, of course, depends on our fundamental units (i.e. kg, meter, second, etcetera in the SI system).
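To put a number on the Planck-Einstein relation, here is a small sketch. The 500 THz frequency is only an example value (roughly visible light), and `photon_energy` is a name made up for this illustration.

```python
H = 6.626070040e-34   # Planck's constant in J·s, as quoted above
EV = 1.602176634e-19  # joule per electronvolt

def photon_energy(frequency_hz):
    """Planck-Einstein relation: E = h·f."""
    return H * frequency_hz

frequency = 500e12        # 500 THz, an example value
period = 1.0 / frequency  # T = 1/f

energy = photon_energy(frequency)
print(energy)           # energy in joule
print(energy / EV)      # same energy in electronvolt, a bit over 2 eV
print(energy * period)  # E·T, which gives h back
```

The last line is the point of the paragraph above: energy times the period of the oscillation returns Planck’s constant, whatever frequency we start from.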

Of course, the obvious question is: what’s one oscillation? If it’s a wave packet, the oscillations may not have the same amplitude, and we may also not be able to define an exact period. In fact, we should expect the amplitude and duration of each oscillation to be slightly different, shouldn’t we? And then…

Well… What’s an oscillation? We’re used to counting them: n oscillations per second, so that’s per time unit. How many do we have in total? We wrote about that in our posts on the shape and size of a photon. We know photons are emitted by atomic oscillators – or, to put it simply, just atoms going from one energy level to another. Feynman calculated the Q of these atomic oscillators: it’s of the order of 10⁸ (see his Lectures, I-33-3: it’s a wonderfully simple exercise, and one that really shows his greatness as a physics teacher), so… Well… This wave train will last about 10⁻⁸ seconds (that’s the time it takes for the radiation to die out by a factor 1/e). To give a somewhat more precise example, for sodium light, which has a frequency of 500 THz (500×10¹² oscillations per second) and a wavelength of 600 nm (600×10⁻⁹ meter), the radiation will last about 3.2×10⁻⁸ seconds. [In fact, that’s the time it takes for the radiation’s energy to die out by a factor 1/e (i.e. the so-called decay time τ), so the wave train will actually last longer, but the amplitude becomes quite small after that time.] So… Well… That’s a very short time but… Still, taking into account the rather spectacular frequency (500 THz) of sodium light, that makes for some 16 million oscillations and, taking into account the rather spectacular speed of light (3×10⁸ m/s), that makes for a wave train with a length of, roughly, 9.6 meter. Huh? 9.6 meter!? But a photon is supposed to be pointlike, isn’t it? It has no length, does it?
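The arithmetic in this paragraph is simple enough to redo in a few lines. The sketch below only uses the rounded figures quoted above (500 THz, 600 nm, 3.2×10⁻⁸ s and c ≈ 3×10⁸ m/s); the variable names are my own.

```python
C = 3.0e8             # speed of light in m/s, rounded as in the text
FREQUENCY = 500e12    # sodium light, oscillations per second
WAVELENGTH = 600e-9   # meter
DECAY_TIME = 3.2e-8   # seconds, the decay time quoted above

oscillations = FREQUENCY * DECAY_TIME          # number of cycles in the wave train
length = C * DECAY_TIME                        # length of the wave train in meter
length_from_cycles = oscillations * WAVELENGTH # same length, counted cycle by cycle

print(oscillations)        # about 16 million
print(length)              # about 9.6 meter
print(length_from_cycles)  # about 9.6 meter again
print(FREQUENCY * WAVELENGTH)  # f·λ, which should give c back
```

The two length calculations agree because the rounded frequency and wavelength are consistent with the rounded speed of light: f·λ = c.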

That’s where relativity helps us out: as I wrote in one of my posts, relativistic length contraction may explain the apparent paradox. Using the reference frame of the photon – so if we’d be traveling at speed c, ‘riding’ with the photon, so to say, as it’s being emitted – then we’d ‘see’ the electromagnetic transient as it’s being radiated into space.

However, while we can associate some mass with the energy of the photon, none of what I wrote above explains what the (rest) mass of a matter-particle could possibly be. There is no real answer to that, I guess. You’ll think of the Higgs field now but… Then… Well. The Higgs field is a scalar field. Very simple: some number that’s associated with some position in spacetime. That doesn’t explain very much, does it? 😦 When everything is said and done, the scientists who, in 2013 only, got the Nobel Prize for their theory on the Higgs mechanism, simply tell us mass is some number. That’s something we knew already, right? 🙂

The reality of the wavefunction

The wavefunction is, obviously, a mathematical construct: a description of reality using a very specific language. What language? Mathematics, of course! Math may not be universal (aliens might not be able to decipher our mathematical models) but it’s pretty good as a global tool of communication, at least.

The real question is: is the description accurate? Does it match reality and, if it does, how good is the match? For example, the wavefunction for an electron in a hydrogen atom looks as follows:

ψ(r, t) = e^(−i·(E/ħ)·t)·f(r)

As I explained in previous posts (see, for example, my recent post on reality and perception), the f(r) function basically provides some envelope for the two-dimensional e^(−i·θ) = e^(−i·(E/ħ)·t) = cosθ − i·sinθ oscillation, with r = (x, y, z), θ = (E/ħ)·t = ω·t and ω = E/ħ. So it presumes the duration of each oscillation is some constant. Why? Well… Look at the formula: this thing has a constant frequency in time. It’s only the amplitude that is varying as a function of the r = (x, y, z) coordinates. 🙂 So… Well… If each oscillation is to always pack 6.626070040(81)×10⁻³⁴ joule, but the amplitude of the oscillation varies from point to point, then… Well… We’ve got a problem. The wavefunction above is likely to be an approximation of reality only. 🙂 The associated energy is the same, but… Well… Reality is probably not the nice geometrical shape we associate with those wavefunctions.
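The point about a constant frequency with a position-dependent amplitude can be checked numerically. This is a sketch under stated assumptions: the energy is an illustrative value of about 13.6 eV expressed in joule, the two envelope values stand in for f(r) at two different points, and `psi` is a helper written for this example only.

```python
import cmath
import math

HBAR = 1.054571817e-34  # reduced Planck constant in J·s

def psi(envelope_value, energy, t):
    """ψ = f(r)·e^(−i·(E/ħ)·t), with f(r) already evaluated at some point r."""
    return envelope_value * cmath.exp(-1j * (energy / HBAR) * t)

energy = 2.18e-18  # joule, roughly 13.6 eV (illustrative value)
t = 1.0e-17        # seconds (arbitrary instant)
theta = (energy / HBAR) * t

# Euler's formula with the minus sign: e^(−i·θ) = cosθ − i·sinθ
z = cmath.exp(-1j * theta)
print(z.real, math.cos(theta))
print(z.imag, -math.sin(theta))

# Two points with different envelope values: same phase, different amplitude.
psi_a = psi(0.8, energy, t)
psi_b = psi(0.1, energy, t)
print(cmath.phase(psi_a), cmath.phase(psi_b))
print(abs(psi_a), abs(psi_b))
```

The phases are identical at both points while the magnitudes differ, which is exactly the tension the paragraph above describes.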

In addition, we should think of the Uncertainty Principle: there must be some uncertainty in the energy of the photons when our hydrogen atom makes a transition from one energy level to another. But then… Well… If our photon packs something like 16 million oscillations, and the order of magnitude of the uncertainty is only of the order of h (or ħ = h/2π) which, as mentioned above, is the (average) energy of one oscillation only, then we don’t have much of a problem here, do we? 🙂

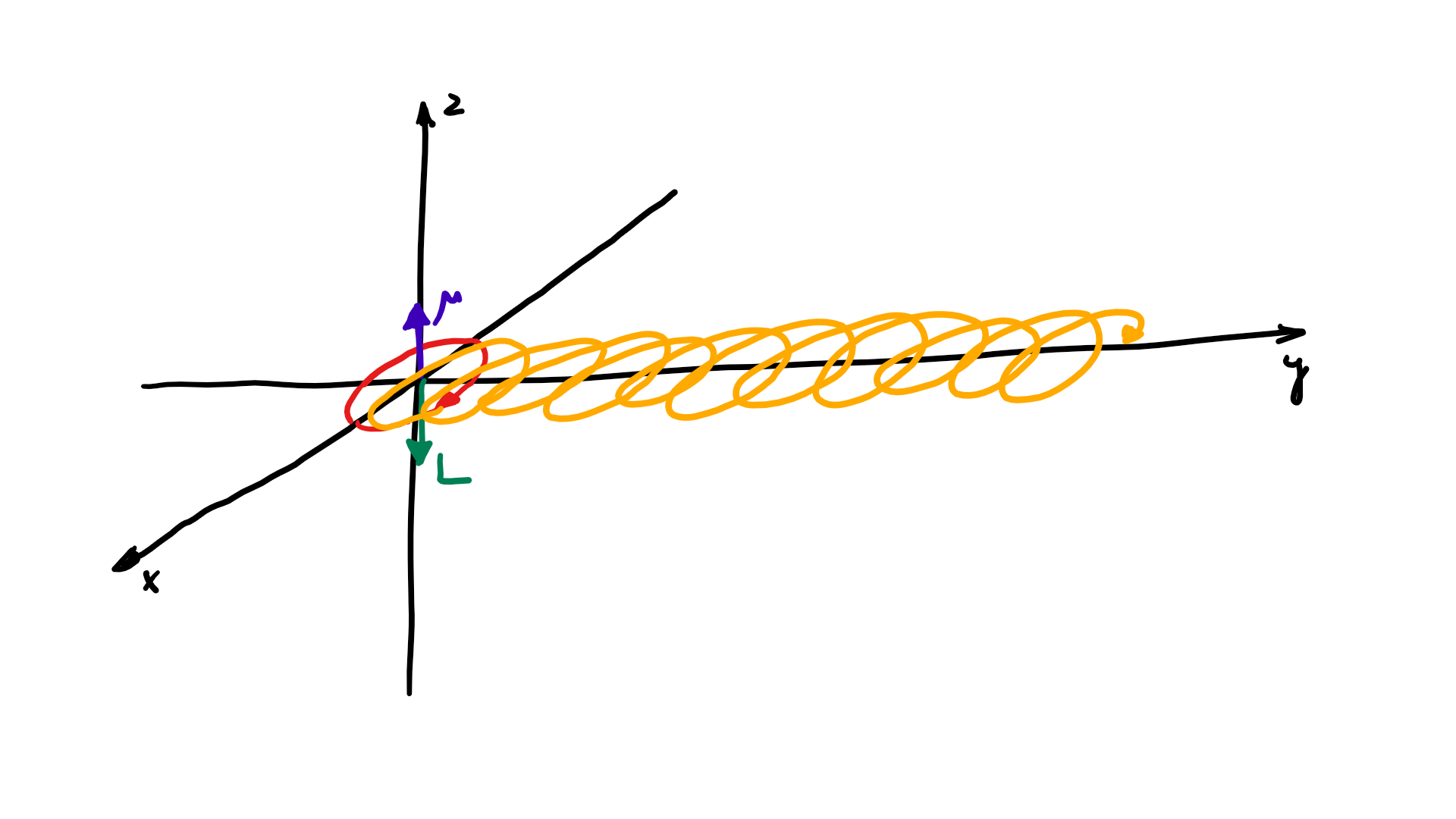

Post scriptum: In previous posts, we offered some analogies – or metaphors – to a two-dimensional oscillation (remember the V-2 engine?). Perhaps it’s all relatively simple. If we have some tiny little ball of mass – and its center of mass has to stay where it is – then any rotation – around any axis – will be some combination of a rotation around our x- and z-axis – as shown below. Two axes only. So we may want to think of a two-dimensional oscillation as an oscillation of the polar and azimuthal angle. 🙂

The resemblance with the standard diffusion equation (shown below) is, effectively, very obvious:

As Feynman notes, it’s just that imaginary coefficient that makes the behavior quite different. How exactly? Well… You know we get all of those complicated electron orbitals (i.e. the various wave functions that satisfy the equation) out of Schrödinger’s differential equation. We can think of these solutions as (complex) standing waves. They basically represent some equilibrium situation, and the main characteristic of each is their energy level. I won’t dwell on this because – as mentioned above – I assume you master the math. Now, you know that – if we would want to interpret these wavefunctions as something real (which is surely what I want to do!) – the real and imaginary component of a wavefunction will be perpendicular to each other. Let me copy the animation for the elementary wavefunction ψ(θ) = a·e^(−i∙θ) = a·e^(−i∙(E/ħ)·t) = a·cos[(E/ħ)∙t] − i·a·sin[(E/ħ)∙t] once more:

But that shouldn’t distract you. 🙂 The question here is the following: could we possibly think of a new formulation of Schrödinger’s equation – using vectors (again, real vectors – not these weird state vectors) rather than complex algebra?
