Pre-script (dated 26 June 2020): This post got severely mutilated by the removal of material by the dark force. It may, therefore, be difficult to follow the main story-line.

Original post:

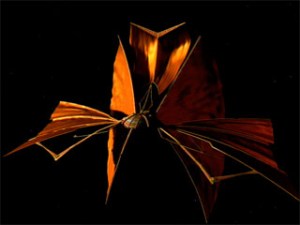

I’ve done quite a few posts already on electromagnetism. They were all focused on the math one needs to understand Maxwell’s equations. Maxwell’s equations are a set of (four) differential equations, so they relate some function with its derivatives. To be specific, they relate E and B, i.e. the electric and magnetic field vector respectively, with their derivatives in space and in time. [Let me be explicit here: E and B have three components, but depend on both space as well as time, so we have three dependent and four independent variables for each function: E = (Ex, Ey, Ez) = E(x, y, z, t) and B = (Bx, By, Bz) = B(x, y, z, t).] That’s simple enough to understand, but the dynamics involved are quite complicated, as illustrated below.

I now want to do a series on the more interesting stuff, including an exploration of the concept of gauge in field theory, and I also want to show how one can derive the wave equation for electromagnetic radiation from Maxwell’s equations. Before I start, let’s recall the basic concept of a field.

The reality of fields

I said a couple of times already that (electromagnetic) fields are real. They’re more than just a mathematical structure. Let me show you why. Remember the formula for the electrostatic potential caused by some charge q at the origin:

Φ(r) = q/(4πε0·r), with r the distance from the origin

We know that the (negative) gradient of this function, at any point in space, gives us the electric field vector at that point: E = –∇Φ. [The minus sign is there because of convention: it makes E point from high to low potential, i.e. in the direction of the force on a positive charge. Taking the reference point Φ = 0 at infinity is a separate convention, which fixes the additive constant.] Now, the electric field vector gives us the force per unit charge at that point, so the force on some test charge q’ is F = q’E. If q and the test charge are both positive, the force will be repulsive, and the test charge will accelerate away from our q charge at the origin. Hence, energy will be expended, as force over distance implies work is being done: as the charges separate, potential energy is converted into kinetic energy. Where does the energy come from? The energy conservation law tells us that it must come from somewhere.

It does: the energy comes from the field itself. Bringing in more or bigger charges (from infinity, or just from further away) requires more energy. So the new charges change the field and, therefore, its energy. How exactly? That’s given by Gauss’ Law: the total flux out of a closed surface is equal to:

∮ En da = (sum of the charges inside the surface)/ε0, with En = E·n the component of E normal to the surface

You’ll say: flux and energy are two different things. Well… Yes and no. The energy in the field depends on E. Indeed, the formula for the energy density in space (i.e. the energy per unit volume) is

u = (ε0/2)·E·E = ε0E²/2

Getting the energy over a larger space is just another integral, with the energy density as the integral kernel:

U = ∫ u dV = (ε0/2)·∫ E·E dV

Feynman’s illustration below is not very sophisticated but, as usual, enlightening. 🙂

Gauss’ Theorem connects both the math as well as the physics of the situation and, as such, underscores the reality of fields: the energy is not in the electric charges. The energy is in the fields they produce. Everything else is just the principle of superposition of fields – i.e. E = E1 + E2 – coming into play. I’ll explain Gauss’ Theorem in a moment. Let me first make some additional remarks.

First, the formulas are valid for electrostatics only (so E and B only vary in space, not in time), so they’re just a piece of the larger puzzle. 🙂 As for now, however, note that, if a field is real (or, to be precise, if its energy is real), then the flux is equally real.

Second, let me say something about the units. Field strength (E or, in this case, its normal component En = E·n) is measured in newton (N) per coulomb (C), so in N/C. The integral above implies that flux is measured in (N/C)·m². It’s a weird unit because one associates flux with flow and, therefore, one would expect flux to be some quantity per unit time and per unit area, so we’d have the m² unit (and the second) in the denominator, not in the numerator. That’s true for heat transfer, for mass transfer, for fluid dynamics (e.g. the amount of water flowing through some cross-section) and many other physical phenomena. But for electric flux, it’s different. You can do a dimensional analysis of the expression above: the sum of the charges is expressed in coulomb (C), and the electric constant (i.e. the vacuum permittivity) is expressed in C²/(N·m²), so, yes, it works: C/[C²/(N·m²)] = (N/C)·m². To make sense of the units, you should think of the flux as the total flow, and of the field strength as a surface density, so that’s the flux divided by the total area, so (field strength) = (flux)/(area). Conversely, (flux) = (field strength)×(area). Hence, the unit of flux is [flux] = [field strength]×[area] = (N/C)·m².
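If you want to convince yourself that the total flux really equals the enclosed charge divided by ε0, even when the charge is not at the centre of the surface, here is a minimal numerical sketch. It is just an illustration: the function name flux_through_sphere, the grid sizes and the one-nanocoulomb charge are arbitrary assumptions, and the integral is a plain midpoint rule over a sphere centred on the origin.

```python
import math

EPS0 = 8.8541878128e-12  # vacuum permittivity, in C^2/(N·m^2)

def flux_through_sphere(q, charge_pos, radius=1.0, n_theta=200, n_phi=400):
    """Midpoint-rule estimate of the flux of E through a sphere centred on the origin."""
    k = q / (4.0 * math.pi * EPS0)
    d_theta = math.pi / n_theta
    d_phi = 2.0 * math.pi / n_phi
    total = 0.0
    for i in range(n_theta):
        theta = (i + 0.5) * d_theta
        for j in range(n_phi):
            phi = (j + 0.5) * d_phi
            # Outward unit normal n; the surface point itself is radius * n.
            n = (math.sin(theta) * math.cos(phi),
                 math.sin(theta) * math.sin(phi),
                 math.cos(theta))
            # Vector from the charge to the surface point.
            r = [radius * n[a] - charge_pos[a] for a in range(3)]
            dist = math.sqrt(sum(c * c for c in r))
            # Normal component of the point-charge field: E·n = k (r·n) / |r|^3.
            e_n = k * sum(r[a] * n[a] for a in range(3)) / dist**3
            # Surface element on the sphere: radius^2 sin(theta) dtheta dphi.
            total += e_n * radius**2 * math.sin(theta) * d_theta * d_phi
    return total

q = 1e-9  # one nanocoulomb (arbitrary illustrative value)
inside = flux_through_sphere(q, (0.3, 0.0, 0.0))    # charge inside the sphere
outside = flux_through_sphere(q, (2.0, 0.0, 0.0))   # charge outside the sphere
print(inside, q / EPS0)  # the two numbers should agree closely
print(outside)           # should be close to zero
```

The second call shows the other half of the story: a charge outside the surface contributes field lines that go in and come out again, so its net flux is zero.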

OK. Now we’re ready for Gauss’ Theorem. 🙂 I’ll also say something about its counterpart for circulation, Stokes’ Theorem. It’s a bit of a mathematical digression but necessary, I think, for a better understanding of all those operators we’re going to use.

Gauss’ Theorem

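The concept of flux is related to the divergence of a vector field through Gauss’ Theorem. Gauss’ Theorem should not be confused with Gauss’ Law, even if both are associated with the same genius: the Theorem is a mathematical statement about any vector field, while the Law is a physical statement about E. Gauss’ Theorem is:

∮S C·n da = ∫V ∇·C dV, with S the closed surface that bounds the volume V, and n the outward unit normal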

The ∇·C in the integral on the right-hand side is the divergence of a vector field. It’s the volume density of the outward flux of a vector field from an infinitesimal volume around a given point.

Huh? What’s a volume density? Good question. Just substitute E for C in the surface and volume integral above (the integral on the left is a surface integral, and the one on the right is a volume integral), and think about the meaning of what’s written. To help you, let me also include the concept of linear density, so we have (1) linear, (2) surface and (3) volume density. Look at that representation of a vector field once again: we said the density of lines represented the magnitude of E. But what density? The representation hereunder is flat, so we can think of a linear density indeed, measured along the blue line: so the flux would be six (that’s the number of lines), and the linear density (i.e. the field strength) is six divided by the length of the blue line.

However, we defined field strength as a surface density above, so that’s the flux (i.e. the number of field lines) divided by the surface area (i.e. the area of a cross-section): think of the square of the blue line, and field lines going through that square. That’s simple enough. But what’s volume density? How do we count the number of lines inside of a box? The answer is: mathematicians actually define it for an infinitesimally small cube, by adding the fluxes out of its six individual faces:

flux out of the cube = (∂Cx/∂x + ∂Cy/∂y + ∂Cz/∂z)·ΔxΔyΔz

So, the truth is: volume density is actually defined as a surface density, but for an infinitesimally small volume element. That, in turn, gives us the meaning of the divergence of a vector field. Indeed, the sum of the derivatives above is just ∇·C (i.e. the divergence of C), and ΔxΔyΔz is the volume of our infinitesimal cube, so the divergence of some field vector C at some point P is the flux – i.e. the outgoing ‘flow’ of C – per unit volume, in the neighborhood of P, as evidenced by writing

∫ C·n da (over the surface of the small volume) = (∇·C)·ΔV

Indeed, just bring ΔV to the other side of the equation to check the ‘per unit volume’ aspect of what I wrote above. The whole idea is to determine whether the small volume is like a sink or like a source, and to what extent. Think of the field near a point charge, as illustrated below. Look at the black lines: they are the field lines (the dashed lines are equipotential lines) and note how the positive charge is a source of flux, obviously, while the negative charge is a sink.
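The ‘flux per unit volume’ reading of the divergence is easy to try out numerically. The sketch below is only an illustration under stated assumptions: the test field C = (x²y, yz, xz²) is an arbitrary choice, and the flux out of a small cube is estimated with a single sample at the centre of each face.

```python
def field(x, y, z):
    """An arbitrary smooth test field C = (x^2·y, y·z, x·z^2)."""
    return (x * x * y, y * z, x * z * z)

def divergence_exact(x, y, z):
    # d(x^2 y)/dx + d(y z)/dy + d(x z^2)/dz
    return 2 * x * y + z + 2 * x * z

def flux_out_of_cube(p, h):
    """Flux of C out of a cube of side h centred on p, one sample per face."""
    x, y, z = p
    area = h * h
    half = h / 2
    flux = 0.0
    # Pair up opposite faces: outward flux is (C_normal on far face - C_normal on near face) * area.
    flux += (field(x + half, y, z)[0] - field(x - half, y, z)[0]) * area
    flux += (field(x, y + half, z)[1] - field(x, y - half, z)[1]) * area
    flux += (field(x, y, z + half)[2] - field(x, y, z - half)[2]) * area
    return flux

p = (0.5, -1.0, 2.0)
h = 1e-3
flux_per_volume = flux_out_of_cube(p, h) / h**3
print(flux_per_volume, divergence_exact(*p))  # both should be close to 3
```

A positive result means the little cube acts like a source at that point, a negative one that it acts like a sink.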

Now, the next step is to acknowledge that the total flux from a volume is the sum of the fluxes out of each part. Indeed, the flux through the part of the surfaces common to two parts will cancel each other out. Feynman illustrates that with a rough drawing (below) and I’ll refer you to his Lecture on it for more detail.

So… Combining all of the gymnastics above – and integrating the divergence over an entire volume, indeed – we get Gauss’ Theorem:

∮S C·n da = ∫V ∇·C dV

Stokes’ Theorem

There is a similar theorem involving the circulation of a vector, rather than its flux. It’s referred to as Stokes’ Theorem. Let me jot it down:

∮Γ C·ds = ∫S (∇×C)·n da, with Γ the closed loop that bounds the surface S

We have a contour integral here (left) and a surface integral (right). The reasoning behind it is quite similar: a surface bounded by some loop Γ is divided into infinitesimally small squares, and the circulation around Γ is the sum of the circulations around the little loops. We should take care though: the surface integral takes the normal component of ∇×C, so that’s (∇×C)n = (∇×C)·n. The illustrations below should help you to understand what’s going on.
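The ‘little loops’ argument can be tried out in the same way as the little cubes. Here is a minimal sketch, assuming an arbitrary test field C = (−y³, xy, 0) and a small square in the xy-plane, so the normal n is just the z-direction. The circulation is estimated with one sample at the midpoint of each edge, and the function names are illustrative only.

```python
def field(x, y):
    """An arbitrary smooth test field C = (-y^3, x·y, 0), restricted to the xy-plane."""
    return (-y**3, x * y)

def curl_z_exact(x, y):
    # (curl C)_z = dCy/dx - dCx/dy = y + 3 y^2
    return y + 3 * y**2

def circulation_around_square(p, h):
    """Counter-clockwise circulation around a square of side h centred on p,
    sampling the field at the midpoint of each edge."""
    x, y = p
    half = h / 2
    bottom = field(x, y - half)[0] * h   # travelling in +x
    right = field(x + half, y)[1] * h    # travelling in +y
    top = -field(x, y + half)[0] * h     # travelling in -x
    left = -field(x - half, y)[1] * h    # travelling in -y
    return bottom + right + top + left

p = (1.0, 2.0)
h = 1e-3
circulation_per_area = circulation_around_square(p, h) / h**2
print(circulation_per_area, curl_z_exact(*p))  # both should be close to 14
```

So the normal component of the curl is the circulation per unit area, just like the divergence is the flux per unit volume.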

The electric versus the magnetic force

There’s more than just the electric force: we also have the magnetic force. The so-called Lorentz force is the combination of both. The force on some charge q moving with velocity v in an electromagnetic field is:

F = q(E + v×B)

Hence, if the velocity vector v is not equal to zero, we need to look at the magnetic field vector B too! The simplest situation is magnetostatics, so let’s first have a look at that.

Magnetostatics implies that the flux of E doesn’t change, so Maxwell’s fourth equation reduces to c²∇×B = j/ε0. So we just have a steady electric current (j): no accelerating charges. Maxwell’s third equation, ∇·B = 0, remains what it was: there’s no such thing as a magnetic charge. The Lorentz force also remains what it is, of course: F = q(E+v×B) = qE + qv×B. Also note that the v, j and the lack of a magnetic charge all point to the same: magnetism is just a relativistic effect of electricity.

What about units? Well… While the unit of E, i.e. the electric field strength, is pretty obvious from the F = qE term – hence, E = F/q, and so the unit of E must be [force]/[charge] = N/C – the unit of the magnetic field strength is more complicated. Indeed, the F = qv×B identity tells us it must be (N·s)/(m·C), because 1 N = 1 C·(m/s)·(N·s)/(m·C). Phew! That’s as horrendous as it looks, and that’s why it’s usually expressed using its shorthand, i.e. the tesla: 1 T = 1 (N·s)/(m·C). Magnetic flux is the same concept as electric flux, so it’s (field strength)×(area). However, now we’re talking magnetic field strength, so its unit is T·m² = (N·s·m²)/(m·C) = (N·s·m)/C, which is referred to as the weber (Wb). Remembering that 1 volt = 1 N·m/C, it’s easy to see that a weber is also equal to 1 Wb = 1 V·s. In any case, it’s a unit that is not so easy to interpret.
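Unit bookkeeping like this is easy to get wrong by hand, so here is a small sketch that does it mechanically. It is an illustration only: units are represented as dictionaries of exponents over the base symbols N, C, m and s, and the helper names times and inverse are made up for this example.

```python
def times(a, b):
    """Multiply two units given as {base symbol: exponent} dictionaries."""
    out = dict(a)
    for symbol, power in b.items():
        out[symbol] = out.get(symbol, 0) + power
    # Drop symbols whose exponents cancelled out.
    return {sym: pw for sym, pw in out.items() if pw != 0}

def inverse(a):
    """The reciprocal of a unit: flip the sign of every exponent."""
    return {sym: -pw for sym, pw in a.items()}

N, C, m, s = {"N": 1}, {"C": 1}, {"m": 1}, {"s": 1}

electric_field = times(N, inverse(C))                       # N/C
velocity = times(m, inverse(s))                             # m/s
# F = q·v×B, so [B] = [F]/([q]·[v])
tesla = times(N, inverse(times(C, velocity)))               # (N·s)/(m·C)
weber = times(tesla, times(m, m))                           # T·m²
volt = times(times(N, m), inverse(C))                       # N·m/C
electric_flux = times(electric_field, times(m, m))          # (N/C)·m²
eps0 = times(times(C, C), inverse(times(N, times(m, m))))   # C²/(N·m²)

print(tesla)
print(weber == times(volt, s))                    # is 1 Wb the same as 1 V·s?
print(electric_flux == times(C, inverse(eps0)))   # is (N/C)·m² the same as C/ε0?
```

Both comparisons come out true, which is the dimensional analysis of the electric and the magnetic flux done in one go.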

Magnetostatics is a bit of a weird situation. It assumes steady fields, so the ∂E/∂t and ∂B/∂t terms in Maxwell’s equations can be dropped. In fact, c²∇×B = j/ε0 implies that ∇·(c²∇×B) = ∇·(j/ε0) and, therefore, that ∇·j = 0, because the divergence of a curl is always zero. Now, charge conservation says that ∇·j = –∂ρ/∂t and, therefore, magnetostatics is a situation which assumes ∂ρ/∂t = 0. So we have electric currents but no change in charge densities. To put it simply, we’re not looking at a condenser that is charging or discharging, although that condenser may act like the battery or generator that keeps the charges flowing! But let’s go along with the magnetostatics assumption. What can we say about it? Well… First, we have the equivalent of Gauss’ Law, i.e. Ampère’s Law:

∮Γ B·ds = I/(ε0c²), with I the current through the loop Γ

We have a line integral here around a closed curve, instead of a surface integral over a closed surface (Gauss’ Law), but it’s pretty similar: instead of the sum of the charges inside the volume, we have the current through the loop, and then an extra c² factor in the denominator, of course. Combined with the ∇·B = 0 equation, this equation allows us to solve practical problems. But I am not interested in practical problems. What’s the theory behind it?
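For those who do like a practical check: the sketch below integrates B·ds numerically around a circle, for the field of an infinitely long straight wire along the z-axis. That field, B = I/(2πε0c²r) in the azimuthal direction, is the standard textbook result and is an assumption brought in for this example, as are the function names, the loop positions and the 2-ampere current.

```python
import math

EPS0 = 8.8541878128e-12   # vacuum permittivity, in C^2/(N·m^2)
C_LIGHT = 299792458.0     # speed of light, in m/s

def b_field(x, y, current):
    """x and y components of B for an infinitely long straight wire along the z-axis."""
    rho2 = x * x + y * y
    k = current / (2.0 * math.pi * EPS0 * C_LIGHT**2)
    return (-k * y / rho2, k * x / rho2)

def circulation(centre, radius, current, n=2000):
    """Midpoint-rule estimate of the line integral of B·ds around a circle in the xy-plane."""
    total = 0.0
    d_phi = 2.0 * math.pi / n
    for i in range(n):
        phi = (i + 0.5) * d_phi
        x = centre[0] + radius * math.cos(phi)
        y = centre[1] + radius * math.sin(phi)
        bx, by = b_field(x, y, current)
        # ds = radius·dphi·(-sin phi, cos phi), going counter-clockwise.
        total += (bx * -math.sin(phi) + by * math.cos(phi)) * radius * d_phi
    return total

current = 2.0  # amperes (arbitrary illustrative value)
expected = current / (EPS0 * C_LIGHT**2)
enclosing = circulation((0.4, 0.1), 1.0, current)      # loop around the wire
not_enclosing = circulation((3.0, 0.0), 1.0, current)  # loop that misses the wire
print(enclosing, expected)  # should agree closely
print(not_enclosing)        # should be close to zero
```

Note that the loop does not need to be centred on the wire: only the current passing through it matters.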

The magnetic vector potential

The ∇·B = 0 equation is true, always, unlike the ∇×E = 0 expression, which is true for electrostatics only (no moving charges). It says the divergence of B is zero, always, and, hence, it means we can represent B as the curl of another vector field, always. That vector field is referred to as the magnetic vector potential, and we write:

B = ∇×A, so that ∇·B = ∇·(∇×A) = 0

In electrostatics, we had the other theorem: if the curl of a vector field is zero (everywhere), then the vector field can be represented as the gradient of some scalar function, so if ∇×C = 0, then there is some Ψ for which C = ∇Ψ. Substituting E for C, and taking into account our conventions on charge and the direction of flow, we get E = –∇Φ. Substituting that expression for E in Maxwell’s first equation (∇·E = ρ/ε0) then gave us the so-called Poisson equation: ∇²Φ = −ρ/ε0, which sums up the whole subject of electrostatics really! It’s all in there!

Except magnetostatics, of course. Using the (magnetic) vector potential A, all of magnetostatics is reduced to another expression:

∇²A = −j/(ε0c²), with ∇·A = 0

Note the qualifier: ∇·A = 0. Why should the divergence of A be equal to zero? You’re right. It doesn’t have to be that way. We know that ∇·(∇×C) = 0, for any vector field C, and always (it’s a mathematical identity, in fact, so it’s got nothing to do with physics), but choosing A such that ∇·A = 0 is just a choice. In fact, as I’ll explain in a moment, it’s referred to as choosing a gauge. The ∇·A = 0 choice is a very convenient choice, however, as it simplifies our equations. Indeed, c²∇×B = j/ε0 becomes c²∇×(∇×A) = j/ε0, and – from our vector calculus classes – we know that ∇×(∇×C) = ∇(∇·C) – ∇²C. Combining that with our choice of A (which is such that ∇·A = 0, indeed), we get the ∇²A = −j/(ε0c²) expression indeed, which sums up the whole subject of magnetostatics!
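The steps in the paragraph above can be written out as a short chain, keeping the c² factor explicit so one can see where it ends up. This is just a sketch in LaTeX notation; the bold symbols for vectors are a notational assumption of this block, not something used elsewhere in the post.

```latex
\begin{aligned}
c^2\,\nabla\times\mathbf{B} &= \frac{\mathbf{j}}{\epsilon_0},
  \qquad \mathbf{B} = \nabla\times\mathbf{A} \\
c^2\,\nabla\times(\nabla\times\mathbf{A})
  &= c^2\left[\nabla(\nabla\cdot\mathbf{A}) - \nabla^2\mathbf{A}\right]
   = \frac{\mathbf{j}}{\epsilon_0} \\
\nabla\cdot\mathbf{A} = 0 \;\Longrightarrow\;
  \nabla^2\mathbf{A} &= -\frac{\mathbf{j}}{\epsilon_0 c^2}
\end{aligned}
```

Each component of A therefore obeys an equation of the same form as the Poisson equation for Φ, with j/c² playing the role of ρ.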

The point is: if the time derivatives in Maxwell’s equations, i.e. ∂E/∂t and ∂B/∂t, are zero, then Maxwell’s four equations can be nicely separated into two pairs: the electric and magnetic field are not interconnected. Hence, as long as charges and currents are static, electricity and magnetism appear as distinct phenomena, and the interdependence of E and B does not appear. So we re-write Maxwell’s set of four equations as:

- Electrostatics: ∇·E = ρ/ε0 and ∇×E = 0

- Magnetostatics: ∇×B = j/(ε0c²) and ∇·B = 0

Note that electrostatics is a neat example of a vector field with zero curl and a given divergence (ρ/ε0), while magnetostatics is a neat example of a vector field with zero divergence and a given curl (j/(ε0c²)).

Electrodynamics

But reality is usually not so simple. With time-varying fields, Maxwell’s equations are what they are, and so there is interdependence, as illustrated in the introduction of this post. Note, however, that the magnetic field remains divergence-free in dynamics too! That’s because there is no such thing as a magnetic charge: we only have electric charges. So ∇·B = 0 and we can define a magnetic vector potential A and re-write B as B = ∇×A, indeed.

I am writing a vector potential field because, as I mentioned a couple of times already, we can choose A. Indeed, as long as ∇×A yields the same B, it’s fine, so we can add curl-free components to the magnetic potential: it won’t make a difference. This freedom is referred to as gauge invariance. I’ll come back to that, and also show why this is what it is.

While we can easily get B from A because of the B = ∇×A expression, getting E from some potential is a different matter altogether. It turns out we can get E using the following expression, which involves both Φ (i.e. the electric or electrostatic potential) as well as A (i.e. the magnetic vector potential):

E = –∇Φ – ∂A/∂t

Likewise, one can show that Maxwell’s equations can be re-written in terms of Φ and A, rather than in terms of E and B. The expression looks rather formidable, but don’t panic:

Just look at it. We have two ‘variables’ here (Φ and A) and two equations, so the system is fully defined. [Of course, the second equation is three equations really: one for each component x, y and z.] What’s the point? Why would we want to re-write Maxwell’s equations? The first equation makes it clear that the scalar potential (i.e. the electric potential) is a time-varying quantity, so things are not, somehow, simpler. The answer is twofold. First, re-writing Maxwell’s equations in terms of the scalar and vector potential makes sense because we have (fairly) easy expressions for their value in time and in space as a function of the charges and currents. For statics, these expressions are:

Φ(1) = ∫ ρ(2)·dV2/(4πε0·r12) and A(1) = ∫ j(2)·dV2/(4πε0c²·r12)

Here, (1) is the point at which we calculate the potential, the integral runs over all source points (2), and r12 is the distance between the two.

So it is, effectively, easier to first calculate the scalar and vector potential, and then get E and B from them. For dynamics, the expressions are similar:

Φ(1, t) = ∫ ρ(2, t′)·dV2/(4πε0·r12) and A(1, t) = ∫ j(2, t′)·dV2/(4πε0c²·r12)

Indeed, they are like the integrals for statics, but with “a small and physically appealing modification”, as Feynman notes: when doing the integrals, we must use the so-called retarded time t′ = t − r12/c. The illustration below shows how it works: the influences propagate from point (2) to point (1) at the speed c, so we must use the values of ρ and j at the time t′ = t − r12/c indeed!
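The retarded time itself is a one-liner. The sketch below is only an illustration: the function name retarded_time and the example points are assumptions, and distances are taken to be in metres and times in seconds.

```python
import math

C_LIGHT = 299792458.0  # speed of light, in m/s

def retarded_time(t, point1, point2):
    """t' = t - r12/c: the moment at which the source at point (2) must be
    evaluated to find its influence at point (1) at time t."""
    r12 = math.dist(point1, point2)
    return t - r12 / C_LIGHT

# A source one light-second away from the observation point:
observer = (0.0, 0.0, 0.0)
source = (299792458.0, 0.0, 0.0)
t_prime = retarded_time(1.0, observer, source)
print(t_prime)  # what we see at t = 1 s left the source about one second earlier
```

In the integrals for Φ and A, every source point (2) gets its own t′, because every source point is at a different distance r12 from point (1).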

The second aspect of the answer to the question of why we’d be interested in Φ and A has to do with the topic I wanted to write about here: the concept of a gauge and a gauge transformation.

Gauges and gauge transformations in electromagnetics

Let’s see what we’re doing really. We calculate some A and then solve for B by writing: B = ∇×A. Now, I say some A because any A’ = A + ∇Ψ, with Ψ any scalar field really, will give us the same B. Why? Because the curl of the gradient of Ψ – i.e. curl(gradΨ) = ∇×(∇Ψ) – is equal to 0. Hence, ∇×(A + ∇Ψ) = ∇×A + ∇×∇Ψ = ∇×A.

So we have B, and now we need E. So the next step is to take Faraday’s Law, which is Maxwell’s second equation: ∇×E = –∂B/∂t. Why this one? It’s a simple one, as it does not involve currents or charges. So we combine this equation and our B = ∇×A expression and write:

∇×E = –∂(∇×A)/∂t

Now, these operators are tricky but you can verify this can be re-written as:

∇×(E + ∂A/∂t) = 0

Looking carefully, we see this expression says that E + ∂A/∂t is some vector whose curl is equal to zero. Hence, this vector must be the gradient of something. When we worked on electrostatics, we only had E, not the ∂A/∂t bit, and we said that E tout court was the gradient of something, so we wrote E = −∇Φ. We now do the same thing for E + ∂A/∂t, so we write:

E + ∂A/∂t = −∇Φ

So we use the same symbol Φ but it’s a bit of a different animal, obviously. However, it’s easy to see that, if the ∂A/∂t term disappears (as it does in electrostatics, where nothing changes with time), we get our ‘old’ −∇Φ. Now, E + ∂A/∂t = −∇Φ can be written as:

E = −∇Φ – ∂A/∂t

So, what’s the big deal? We wrote B and E as a function of Φ and A. Well, we said we could replace A by any A’ = A + ∇Ψ but, obviously, such substitution would not yield the same E. To get the same E, we need some substitution rule for Φ as well. Now, you can verify we will get the same E if we replace Φ by Φ’ = Φ – ∂Ψ/∂t. You should check it by writing it all out:

E = −∇Φ’–∂A’/∂t = −∇(Φ–∂Ψ/∂t)–∂(A+∇Ψ)/∂t

= −∇Φ+∇(∂Ψ/∂t)–∂A/∂t–∂(∇Ψ)/∂t = −∇Φ – ∂A/∂t = E

Again, the operators are a bit tricky, but the +∇(∂Ψ/∂t) and –∂(∇Ψ)/∂t terms do cancel out. Where are we heading to? When everything is said and done, we do need to relate it all to the currents and the charges, because that’s the real stuff out there. So let’s take Maxwell’s ∇·E = ρ/ε0 equation, which has the charges in it, and let’s replace E by −∇Φ – ∂A/∂t. We get:

∇·(−∇Φ – ∂A/∂t) = ρ/ε0

That equation can be re-written as:

−∇²Φ – ∂(∇·A)/∂t = ρ/ε0

So we have one equation here relating Φ and A to the sources. We need another one, and we also need to separate Φ and A somehow. How do we do that?

Maxwell’s fourth equation, i.e. c²∇×B = j/ε0 + ∂E/∂t can, obviously, be written as c²∇×B − ∂E/∂t = j/ε0. Substituting both E and B yields the following monstrosity:

c²∇×(∇×A) + ∂(∇Φ)/∂t + ∂²A/∂t² = j/ε0

We can now apply the general ∇×(∇×C) = ∇(∇·C) – ∇²C identity to the first term to get:

−c²∇²A + c²∇(∇·A) + ∂(∇Φ)/∂t + ∂²A/∂t² = j/ε0

It’s equally monstrous, obviously, but we can simplify the whole thing by choosing Φ and A in a clever way. For the magnetostatic case, we chose A such that ∇·A = 0. We could have chosen something else. Indeed, it’s not because B is divergence-free, that A has to be divergence-free too! For example, I’ll leave it to you to show that choosing ∇·A such that

∇·A = −c⁻²·∂Φ/∂t

is compatible with our gauge freedom: starting from any A and Φ, one can find a Ψ such that A’ = A + ∇Ψ and Φ’ = Φ – ∂Ψ/∂t satisfy it. Now, if we choose ∇·A such that ∇·A = −c⁻²·∂Φ/∂t indeed, then the two middle terms in our monstrosity cancel out, and we’re left with a much simpler equation for A:

∇²A – c⁻²·∂²A/∂t² = −j/(ε0c²)

In addition, doing the substitution in our other equation relating Φ and A to the sources yields an equation for Φ that has the same form:

∇²Φ – c⁻²·∂²Φ/∂t² = −ρ/ε0

What’s the big deal here? Well… Let’s write it all out. The equation above becomes:

∂²Φ/∂x² + ∂²Φ/∂y² + ∂²Φ/∂z² – c⁻²·∂²Φ/∂t² = −ρ/ε0

That’s a wave equation in three dimensions. In case you wonder, just check one of my posts on wave equations. The one-dimensional equivalent for a wave propagating in the x direction at speed c (like a sound wave, for example) is ∂²Φ/∂x² = c⁻²·∂²Φ/∂t², indeed. The equation for A above yields similar wave equations for A’s components Ax, Ay, and Az.
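To see the one-dimensional wave equation at work, one can take any shape f and let it travel as f(x − ct): it will satisfy ∂²f/∂x² = c⁻²·∂²f/∂t². The sketch below checks that with finite differences. It is an illustration under stated assumptions: the Gaussian pulse, the speed C = 3 (arbitrary units, not the speed of light) and the sample point are all arbitrary choices.

```python
import math

C = 3.0  # wave speed in arbitrary units (illustrative value only)

def pulse(x, t):
    """A Gaussian pulse travelling in the +x direction: f(x - C·t)."""
    u = x - C * t
    return math.exp(-u * u)

def second_derivative(f, a, h=1e-3):
    """Central-difference estimate of the second derivative of f at a."""
    return (f(a + h) - 2.0 * f(a) + f(a - h)) / (h * h)

x0, t0 = 0.7, 0.1
d2_dx2 = second_derivative(lambda x: pulse(x, t0), x0)
d2_dt2 = second_derivative(lambda t: pulse(x0, t), t0)
residual = d2_dx2 - d2_dt2 / C**2
print(d2_dx2, d2_dt2 / C**2)  # the two sides of the wave equation
print(residual)               # should be close to zero
```

The same check works for any twice-differentiable shape, and also for a pulse travelling in the other direction, i.e. f(x + ct).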

So, yes, it is a big deal. We’ve written Maxwell’s equations in terms of the scalar (Φ) and vector (A) potential and in a form that makes immediately apparent that we’re talking electromagnetic waves moving out at the speed c. Let me copy them again:

∇²Φ – c⁻²·∂²Φ/∂t² = −ρ/ε0 and ∇²A – c⁻²·∂²A/∂t² = −j/(ε0c²)

You may, of course, say that you’d rather have a wave equation for E and B, rather than for A and Φ. Well… That can be done. Feynman gives us two derivations that do so. The first derivation is relatively simple and assumes that our electromagnetic wave moves in one direction only. The second derivation is much more complicated and gives an equation for E that, if you’ve read the first volume of Feynman’s Lectures, you’ll surely remember:

The links are there, and so I’ll let you have fun with those Lectures yourself. I am finished here, indeed, in terms of what I wanted to do in this post, and that is to say a few words about gauges in field theory. It’s nothing much, really, and so we’ll surely have to discuss the topic again, but at least you now know what a gauge actually is in classical electromagnetic theory. Let’s quickly go over the concepts:

- Choosing the ∇·A is choosing a gauge, or a gauge potential (because we’re talking scalar and vector potential here). The particular choice is also referred to as gauge fixing.

- Changing A by adding ∇Ψ is called a gauge transformation, and the scalar function Ψ is referred to as a gauge function. The fact that we can add curl-free components to the magnetic potential without them making any difference is referred to as gauge invariance.

- Finally, the ∇·A = −c⁻²·∂Φ/∂t gauge is referred to as a Lorentz gauge (the name is often also spelled Lorenz gauge, after Ludvig Lorenz).
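The gauge transformation in the list above can also be checked numerically: replace A by A + ∇Ψ and Φ by Φ − ∂Ψ/∂t, and E and B should not change. The sketch below does that with central differences. Everything in it is an assumption made for illustration: the test potentials, the gauge function psi, the sample point, the step H and the helper names partial, grad, curl and fields.

```python
import math

H = 1e-3  # step for the central differences

def partial(f, point, axis):
    """Central-difference partial derivative of a scalar f at point = (x, y, z, t)."""
    up = list(point)
    up[axis] += H
    down = list(point)
    down[axis] -= H
    return (f(*up) - f(*down)) / (2.0 * H)

def grad(f, point):
    """Spatial gradient: derivatives along x, y and z only."""
    return [partial(f, point, axis) for axis in range(3)]

def curl(vec, point):
    """Curl of a vector field given as a list of three scalar functions."""
    d = lambda comp, axis: partial(vec[comp], point, axis)
    return [d(2, 1) - d(1, 2), d(0, 2) - d(2, 0), d(1, 0) - d(0, 1)]

def fields(phi, a, point):
    """E = -grad(Phi) - dA/dt and B = curl(A) at the given point."""
    e = [-g - partial(comp, point, 3) for g, comp in zip(grad(phi, point), a)]
    return e, curl(a, point)

# Arbitrary smooth test potentials and gauge function (illustrative choices only).
phi = lambda x, y, z, t: x * y + z * t
a = [lambda x, y, z, t: y * t,
     lambda x, y, z, t: x * z,
     lambda x, y, z, t: math.sin(x) * t * t]
psi = lambda x, y, z, t: x * x * y * t + math.sin(z) * t

# Gauge transformation: A' = A + grad(Psi) and Phi' = Phi - dPsi/dt.
phi_new = lambda x, y, z, t: phi(x, y, z, t) - partial(psi, (x, y, z, t), 3)
a_new = [lambda x, y, z, t, i=i: a[i](x, y, z, t) + partial(psi, (x, y, z, t), i)
         for i in range(3)]

p = (0.3, -0.8, 1.1, 0.5)
e_old, b_old = fields(phi, a, p)
e_new, b_new = fields(phi_new, a_new, p)
print(e_old, e_new)  # same E before and after the transformation
print(b_old, b_new)  # same B before and after the transformation
```

The potentials themselves do change, of course: it is only the fields we get out of them that stay the same.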

Just to make sure you understand: why is that Lorentz gauge so special? Well… Look at the whole argument once more: isn’t it amazing we get such beautiful (wave) equations if we stick it in? Also look at the functional shape of the gauge itself: it looks like a wave equation itself! […] Well… No… It doesn’t. I am a bit too enthusiastic here. We do have the same 1/c² and a time derivative, but it’s not a wave equation. 🙂 In any case, it all confirms, once again, that physics is all about beautiful mathematical structures. But, again, it’s not math only. There’s something real out there. In this case, that ‘something’ is a traveling electromagnetic field. 🙂

But why do we call it a gauge? That should be equally obvious. It’s really like choosing a gauge in another context, such as measuring the pressure of a tyre, as shown below. 🙂

Gauges and group theory

You’ll usually see gauges mentioned with some reference to group theory. For example, you will see or hear phrases like: “The existence of arbitrary numbers of gauge functions ψ(r, t) corresponds to the U(1) gauge freedom of the electromagnetic theory.” The U(1) notation stands for a unitary group of degree n = 1. It is also known as the circle group. Let me copy the introduction to the unitary group from the Wikipedia article on it:

In mathematics, the unitary group of degree n, denoted U(n), is the group of n × n unitary matrices, with the group operation that of matrix multiplication. The unitary group is a subgroup of the general linear group GL(n, C). In the simple case n = 1, the group U(1) corresponds to the circle group, consisting of all complex numbers with absolute value 1 under multiplication. All the unitary groups contain copies of this group.

The unitary group U(n) is a real Lie group of dimension n². The Lie algebra of U(n) consists of n × n skew-Hermitian matrices, with the Lie bracket given by the commutator. The general unitary group (also called the group of unitary similitudes) consists of all matrices A such that A*A is a nonzero multiple of the identity matrix, and is just the product of the unitary group with the group of all positive multiples of the identity matrix.

Phew! Does this make you any wiser? If anything, it makes me realize I’ve still got a long way to go. 🙂 The Wikipedia article on gauge fixing notes something that’s more interesting (if only because I more or less understand what it says):

Although classical electromagnetism is now often spoken of as a gauge theory, it was not originally conceived in these terms. The motion of a classical point charge is affected only by the electric and magnetic field strengths at that point, and the potentials can be treated as a mere mathematical device for simplifying some proofs and calculations. Not until the advent of quantum field theory could it be said that the potentials themselves are part of the physical configuration of a system. The earliest consequence to be accurately predicted and experimentally verified was the Aharonov–Bohm effect, which has no classical counterpart.

This confirms, once again, that the fields are real. In fact, what this says is that the potentials are real: they have a meaningful physical interpretation. I’ll leave it to you to explore that Aharonov–Bohm effect. In the meanwhile, I’ll study what Feynman writes on potentials and all that as used in quantum physics. It will probably take a while before I’ll get into group theory though.

Indeed, it’s probably best to study physics at a somewhat less abstract level first, before getting into the more sophisticated stuff.

Some content on this page was disabled on June 16, 2020 as a result of a DMCA takedown notice from The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/

Some content on this page was disabled on June 17, 2020 as a result of a DMCA takedown notice from Michael A. Gottlieb, Rudolf Pfeiffer, and The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/

Some content on this page was disabled on June 20, 2020 as a result of a DMCA takedown notice from Michael A. Gottlieb, Rudolf Pfeiffer, and The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/

Simply substituting symbols then gives us the solution for Ax:

with n the number of turns per unit length of the solenoid, and I the current going through it. However, in the mentioned post, we assumed that the magnetic field outside of the solenoid was zero, for all practical purposes, but it is not. It is very weak but not zero, as shown below. In fact, it’s fairly strong at very short distances from the solenoid! Calculating the vector potential allows us to calculate its exact value, everywhere. So let’s go for it.

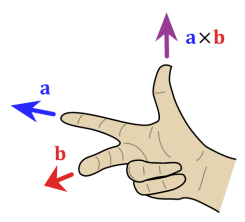

As for the direction of A, it’s shown on the illustration, of course, but let me remind you of the right-hand rule for the vector cross product a×b once again, so you can make sense of the direction of A = (1/2)B0×r’ indeed:

Also note the magnitude this formula implies: a×b = |a|·|b|·sinθ·n, with θ the angle between a and b, and n the normal unit vector in the direction given by that right-hand rule above. Now, unlike a vector dot product, the magnitude of the vector cross product is not zero for perpendicular vectors. In fact, when θ = π/2, which is the case for B0 and r’, then sinθ = 1, and, hence, we can write:
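To make the direction and the magnitude a bit more tangible, here is a minimal numerical sketch of A = (1/2)B0×r’ for a uniform field. The numbers for B0 and r’ are made up for illustration, and the helper names (`vector_potential`, `numerical_curl`) are mine, not from any library. It checks three things: A is perpendicular to both B0 and r’, its magnitude is (1/2)·|B0|·|r’| when θ = π/2, and taking the curl of A gives us B0 back.

```python
import numpy as np

def vector_potential(B0, r):
    """A = (1/2) B0 x r, the vector potential of a uniform field B0."""
    return 0.5 * np.cross(B0, r)

def numerical_curl(field, r, h=1e-5):
    """Central-difference estimate of the curl of a vector field at point r."""
    J = np.zeros((3, 3))  # J[i, j] = d(field_i)/d(x_j)
    for j in range(3):
        step = np.zeros(3)
        step[j] = h
        J[:, j] = (field(r + step) - field(r - step)) / (2 * h)
    return np.array([J[2, 1] - J[1, 2],
                     J[0, 2] - J[2, 0],
                     J[1, 0] - J[0, 1]])

# Assumed values, for illustration only: B0 along z, r' in the xy-plane,
# so the angle between them is pi/2 and sin(theta) = 1.
B0 = np.array([0.0, 0.0, 2.0])
r_perp = np.array([3.0, 4.0, 0.0])

A = vector_potential(B0, r_perp)  # components (-4, 3, 0)

print("A =", A)
print("A . B0 =", np.dot(A, B0))      # zero: A is perpendicular to B0
print("A . r' =", np.dot(A, r_perp))  # zero: A is perpendicular to r'
print("|A| =", np.linalg.norm(A),
      " (1/2)|B0||r'| =", 0.5 * np.linalg.norm(B0) * np.linalg.norm(r_perp))
print("curl A =", numerical_curl(lambda r: vector_potential(B0, r), r_perp))
```

Because A is linear in r’ here, the finite-difference curl reproduces B0 up to rounding error, so this is a sanity check on the formula rather than a general-purpose field solver.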