Post scriptum note added on 11 July 2016: This is one of the more speculative posts which led to my e-publication analyzing the wavefunction as an energy propagation. With the benefit of hindsight, I would recommend that you go straight to the more recent exposé on the matter presented here, which you can find by clicking on the provided link.

Original post:

In the movie about Stephen Hawking’s life, The Theory of Everything, there is talk about a single unifying equation that would explain everything in the universe. I must assume the real Stephen Hawking is familiar with Feynman’s unworldliness equation: U = 0, which – as Feynman convincingly demonstrates – effectively integrates all known equations in physics. It’s one of Feynman’s many jokes, of course, but an exceptionally clever one, as the argument shows there’s no such thing as one equation that explains all. Or, to be precise, you can, effectively, ‘hide’ all the equations you want in a single equation, but it’s just a trick. As Feynman puts it: “When you unwrap the whole thing, you get back where you were before.”

Having said that, some equations in physics are obviously more fundamental than others. You can readily think of obvious candidates: Einstein’s mass-energy equivalence (m = E/c²); the wavefunction (ψ = e^(−i(ω·t − k·x))) and the two de Broglie relations that come with it (ω = E/ħ and k = p/ħ); and, of course, Schrödinger’s equation, which we wrote as:

iħ·(∂ψ/∂t) = −(ħ²/2m)·∇²ψ + V·ψ

In my post on quantum-mechanical operators, I drew your attention to the fact that this equation is structurally similar to the heat diffusion equation. Indeed, assuming the heat per unit volume (q) is proportional to the temperature (T) – which is the case when expressing T in kelvin (K), so we can write q as q = k·T – we can write the heat diffusion equation as:

k·(∂T/∂t) = ∂q/∂t = κ·∇²T

Moreover, I noted the similarity is not only structural. There is more to it: both equations model energy flows and/or densities. Look at it: the dimension of the left- and right-hand side of Schrödinger’s equation is the energy dimension: both quantities are expressed in joule. [Remember: a time derivative is a quantity expressed per second, and the dimension of Planck’s constant is the joule·second. You can figure out the dimension of the right-hand side yourself.] Now turn to the heat diffusion equation: the time derivative on its left-hand side is expressed in K/s. The constant in front (k) is just the (volume) heat capacity of the substance, which is expressed in J/(m³·K). So the dimension of k·(∂T/∂t) is J/(m³·s). On the right-hand side we have the Laplacian of T, whose dimension is K/m², multiplied by the thermal conductivity (κ), whose dimension is W/(m·K) = J/(m·s·K). Hence, the dimension of the product is the same as the left-hand side: J/(m³·s).
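The dimensional bookkeeping above is easy to get wrong by hand, so here is a minimal sketch that does it mechanically. It represents a dimension as a dictionary of exponents over the units J, m, s and K. The helper names (`dim`, `mul`, `inv`) are made up for this illustration, and expressing mass as J·s²/m² is just a convenient rewriting of the kilogram.

```python
def dim(**exponents):
    """A dimension as {unit: exponent}, with zero exponents dropped."""
    return {unit: power for unit, power in exponents.items() if power != 0}

def mul(a, b):
    """Dimension of a product: add the exponents unit by unit."""
    out = dict(a)
    for unit, power in b.items():
        out[unit] = out.get(unit, 0) + power
    return {unit: power for unit, power in out.items() if power != 0}

def inv(a):
    """Dimension of a reciprocal: flip the sign of every exponent."""
    return {unit: -power for unit, power in a.items()}

# Heat diffusion equation: k·(∂T/∂t) = κ·∇²T
heat_capacity = dim(J=1, m=-3, K=-1)        # k, in J/(m³·K)
dT_dt = dim(K=1, s=-1)                      # ∂T/∂t, in K/s
conductivity = dim(J=1, m=-1, s=-1, K=-1)   # κ, in J/(m·s·K)
laplacian_T = dim(K=1, m=-2)                # ∇²T, in K/m²

heat_lhs = mul(heat_capacity, dT_dt)
heat_rhs = mul(conductivity, laplacian_T)
diffusivity = mul(conductivity, inv(heat_capacity))   # κ/k

# Schrödinger's equation, kinetic term only. Whatever dimension ψ has
# multiplies both sides equally, so it is left out here.
hbar = dim(J=1, s=1)                        # joule·second
mass = dim(J=1, s=2, m=-2)                  # kg rewritten as J·s²/m²
per_second = dim(s=-1)                      # ∂/∂t
per_square_meter = dim(m=-2)                # ∇²

schrodinger_lhs = mul(hbar, per_second)
schrodinger_rhs = mul(mul(mul(hbar, hbar), inv(mass)), per_square_meter)

print(heat_lhs == heat_rhs, diffusivity, schrodinger_lhs == schrodinger_rhs)
```

Both sides of the heat equation reduce to J/(m³·s), both sides of Schrödinger’s equation reduce to J, and the κ/k ratio comes out in m²/s.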

We can present the thing in various ways: if we bring k to the other side, then we’ve got something expressed per second on the left-hand side, and something expressed per square meter on the right-hand side, but the κ/k factor, whose dimension is m²/s, makes it alright. The point is: both Schrödinger’s equation and the diffusion equation are actually expressions of the energy conservation law. They’re both expressions of Gauss’ flux theorem (but in differential form, rather than in integral form) which, as you know, pops up everywhere when talking about energy conservation.
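For the diffusion equation, at least, the conservation claim can be checked numerically. The sketch below is an illustration under stated assumptions, not a serious solver: it uses one dimension, an explicit finite-difference scheme, insulated ends, and made-up values for k, κ, the grid and the time step. It computes the heat flux −κ·(∂T/∂x) through every cell face and changes each cell’s temperature only by what flows in or out through its faces.

```python
def step(T, k, kappa, dx, dt):
    """Advance the temperatures by one explicit time step, in flux form."""
    n = len(T)
    # flux[i] is the heat flow through the face between cell i-1 and cell i.
    # The two outer faces stay at zero: the rod is insulated at both ends.
    flux = [0.0] * (n + 1)
    for i in range(1, n):
        flux[i] = -kappa * (T[i] - T[i - 1]) / dx
    # k·(∂T/∂t) = −(flux out − flux in)/dx for every cell.
    return [T[i] - dt * (flux[i + 1] - flux[i]) / (dx * k) for i in range(n)]

def total_heat(T, k, dx):
    """Total heat in the rod: the sum of q = k·T over all cells, times dx."""
    return sum(k * temperature * dx for temperature in T)

# Illustrative values only (arbitrary but mutually consistent units).
k, kappa = 2.0, 0.5
dx, dt = 0.1, 0.005     # kappa·dt/(k·dx²) = 0.125, within the stability limit of 0.5

T = [0.0] * 50
T[25] = 100.0           # a single hot spot in the middle of the rod

q_before = total_heat(T, k, dx)
for _ in range(2000):
    T = step(T, k, kappa, dx, dt)
q_after = total_heat(T, k, dx)

print(q_before, q_after, max(T))
```

The hot spot spreads out, but the total heat stays the same up to rounding error: whatever leaves one cell through a face enters its neighbor, which is the flux theorem at work on a grid.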

Huh?

Yep. I’ll give another example. Let me first remind you that the k·(∂T/∂t) = ∂q/∂t = κ·∇²T equation can also be written as:

∂q/∂t = −∇·h

The h in this equation is, obviously, not Planck’s constant, but the heat flow vector, i.e. the heat that flows through a unit area in a unit time, and h is, obviously, equal to h = −κ∇T. And, of course, you should remember your vector calculus here: ∇· is the divergence operator. In fact, we used the equation above, with ∇·h rather than ∇²T, to illustrate the energy conservation principle. Now, you may or may not remember that we gave you a similar equation when talking about the energy of fields and the Poynting vector:

∂u/∂t = −∇·S − E·j

This immediately triggers the following reflection: if there’s a ‘Poynting vector’ for heat flow (h), and for the energy of fields (S), then there must be some kind of ‘Poynting vector’ for amplitudes too! I don’t know which one, but it must exist! And it’s going to be some complex vector, no doubt! But it should be out there.
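For what it is worth, standard quantum mechanics does have a flux vector that obeys the same kind of continuity equation: the probability current j = (ħ/m)·Im(ψ*·∇ψ), with ∂|ψ|²/∂t = −∇·j. It is real-valued and it carries probability rather than energy, so it may or may not be the vector this post is after. The sketch below evaluates it numerically for the elementary wavefunction a·e^(−i(ω·t − k·x)). Natural units (ħ = m = 1), the amplitude and the wavenumber are all arbitrary choices made for the illustration.

```python
import cmath

HBAR = 1.0   # natural units, assumed for illustration
MASS = 1.0

def psi(x, t, k, omega, amplitude=1.0):
    """Elementary wavefunction a·exp(−i(ω·t − k·x)) in one dimension."""
    return amplitude * cmath.exp(-1j * (omega * t - k * x))

def probability_current(wave, x, t, dx=1e-5):
    """j = (ħ/m)·Im(ψ*·∂ψ/∂x), using a central difference for ∂ψ/∂x."""
    dpsi_dx = (wave(x + dx, t) - wave(x - dx, t)) / (2 * dx)
    return (HBAR / MASS) * (wave(x, t).conjugate() * dpsi_dx).imag

k = 2.0
omega = HBAR * k**2 / (2 * MASS)   # free particle: E = p²/2m, ω = E/ħ, k = p/ħ

def wave(x, t):
    return psi(x, t, k, omega, amplitude=0.5)

j = probability_current(wave, x=0.3, t=0.7)
expected = 0.5**2 * HBAR * k / MASS   # |a|²·p/m

print(j, expected)
```

For this wavefunction the current comes out as |a|²·p/m, i.e. the probability density times the classical velocity, and it is the same at every point and at every moment.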

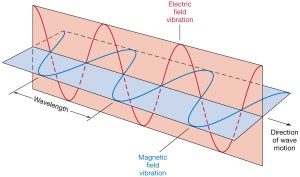

It also makes me think of a point I’ve made a couple of times already—about the similarity between the E and B vectors that characterize the traveling electromagnetic field, and the real and imaginary part of the traveling amplitude. Indeed, the similarity between the two illustrations below cannot be a coincidence. In both cases, we’ve got two oscillating magnitudes that are orthogonal to each other, always. As such, they’re not independent: one follows the other, or vice versa.

The only difference is the phase shift. Euler’s formula incorporates a phase shift (remember: sinθ = cos(θ − π/2)), and you don’t have that with the E and B vectors. But – Hey! – isn’t that why bosons and fermions are different? 🙂
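The phase shift itself is easy to verify. The short sketch below checks, for a handful of arbitrarily chosen angles, that the imaginary part of e^(iθ) is the real part delayed by π/2. The function name is made up for the illustration.

```python
import cmath
import math

def imaginary_lags_real(theta, tolerance=1e-12):
    """True if Im(e^(iθ)) equals cos(θ − π/2), i.e. the real part shifted by π/2."""
    z = cmath.exp(1j * theta)
    real_matches = abs(z.real - math.cos(theta)) < tolerance
    imag_matches = abs(z.imag - math.cos(theta - math.pi / 2)) < tolerance
    return real_matches and imag_matches

angles = [0.0, 0.4, 1.3, 2.9, -5.1]
results = [imaginary_lags_real(theta) for theta in angles]
print(results)
```

By contrast, the E and B magnitudes of a linearly polarized plane wave in vacuum reach their maxima and zeros at the same moments, which is the difference pointed out above.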

[…]

This is great fun, and I’ll surely come back to it. As for now, however, I’ll let you ponder the matter for yourself. 🙂

Post scriptum: I am sure that all the questions I raise here will be answered at the Masters’ level, most probably in some course dealing with quantum field theory, of course. 🙂 In any case, I am happy I can already anticipate such questions. 🙂

Oh – and, as for those two illustrations above, the animation below is one that should help you to think things through. It’s the electric field vector of a traveling circularly polarized electromagnetic wave, as opposed to the linearly polarized light that was illustrated above.
