Schrödinger’s equation, for a particle moving in free space (so we have no external force fields acting on it, so V = 0 and, therefore, the Vψ term disappears) is written as:

∂ψ(x, t)/∂t = i·(1/2)·(ħ/meff)·∇²ψ(x, t)

We already noted and explained the structural similarity with the ubiquitous diffusion equation in physics:

∂φ(x, t)/∂t = D·∇²φ(x, t) with x = (x, y, z)

The big difference between the wave equation and an ordinary diffusion equation is that the wave equation gives us two equations for the price of one: ψ is a complex-valued function, with a real and an imaginary part which, despite their name, are both equally fundamental, or essential. Whatever word you prefer. 🙂 That’s also what the presence of the imaginary unit (i) in the equation tells us. But for the rest it’s the same: the diffusion constant (D) in Schrödinger’s equation is equal to (1/2)·(ħ/meff).

Why the 1/2 factor? It’s ugly. Think of the following: If we bring the (1/2)·(ħ/meff) to the other side, we can write it as meff/(ħ/2). The ħ/2 now appears as a scaling factor in the diffusion constant, just like ħ does in the de Broglie equations: ω = E/ħ and k = p/ħ, or in the argument of the wavefunction: θ = (E·t − p∙x)/ħ. Planck’s constant is, effectively, a physical scaling factor. As a physical scaling constant, it usually does two things:

- It fixes the numbers (so that’s its function as a mathematical constant).

- As a physical constant, it also fixes the physical dimensions. Note, for example, how the 1/ħ factor in ω = E/ħ and k = p/ħ ensures that the ω·t = (E/ħ)·t and k·x = (p/ħ)·x terms in the argument of the wavefunction are both expressed as some dimensionless number, so they can effectively be added together. Physicists don’t like adding apples and oranges.

The question is: why did Schrödinger use ħ/2, rather than ħ, as a scaling factor? Let’s explore the question.

The 1/2 factor

We may want to think that 1/2 factor just echoes the 1/2 factor in the Uncertainty Principle, which we should think of as a pair of relations: σx·σp ≥ ħ/2 and σE·σt ≥ ħ/2. However, the 1/2 factor in those relations only makes sense because we chose to equate the fundamental uncertainty (Δ) in x, p, E and t with the mathematical concept of the standard deviation (σ), or the half-width, as Feynman calls it in his wonderfully clear exposé on it in one of his Lectures on quantum mechanics (for a summary with some comments, see my blog post on it). We may just as well choose to equate Δ with the full-width of those probability distributions we get for x and p, or for E and t. If we do that, we get σx·σp ≥ ħ and σE·σt ≥ ħ.

It’s a bit like measuring the weight of a person on an old-fashioned (non-digital) bathroom scale with 1 kg marks only: do we say this person is x kg ± 1 kg, or x kg ± 500 g? Do we take the half-width or the full-width as the margin of error? In short, it’s a matter of appreciation, and the 1/2 factor in our pair of uncertainty relations is not there because we’ve got two relations. Likewise, it’s not because I mentioned we can think of Schrödinger’s equation as a pair of relations that, taken together, represent an energy propagation mechanism that’s quite similar in its structure to Maxwell’s equations for an electromagnetic wave (as shown below), that we’d insert (or not) that 1/2 factor: either of the two representations below works. It just depends on our definition of the concept of the effective mass.

The 1/2 factor is really a matter of choice, because the rather peculiar – and flexible – concept of the effective mass takes care of it. However, we could define some new effective mass concept, by writing: meffNEW = 2∙meffOLD, and then Schrödinger’s equation would look more elegant:

∂ψ/∂t = i·(ħ/meffNEW)·∇²ψ

Now you’ll want the definition, of course! What is that effective mass concept? Feynman talks at length about it, but his exposé is embedded in a much longer and more general argument on the propagation of electrons in a crystal lattice, which you may not necessarily want to go through right now. So let’s try to answer that question by doing something stupid: let’s substitute the elementary wavefunction ψ = a·e^(−i·[E·t − p∙x]/ħ) into the equation, calculate the time derivative and the Laplacian, and see what we get. If we do that, the ∂ψ/∂t = i·(1/2)·(ħ/meff)·∇²ψ equation becomes:

−i·a·(E/ħ)·e^(−i·(E·t − p∙x)/ħ) = −i·a·(1/2)·(ħ/meff)·(p²/ħ²)·e^(−i·(E·t − p∙x)/ħ)

⇔ E = (1/2)·p²/meff = (1/2)·(m·v)²/meff ⇔ meff = (1/2)·(m/E)·m·v²

⇔ meff = (1/c²)·(m·v²/2) = m·β²/2 (using E = m·c², so that m/E = 1/c²)
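The substitution above can also be checked numerically. The sketch below is only an illustration: it assumes natural units (ħ = c = 1), one spatial dimension, and finite differences in place of exact derivatives. The function names `psi` and `residual` are made up for this example.

```python
import cmath

HBAR = 1.0  # assumption: natural units, hbar = 1
C = 1.0     # assumption: natural units, c = 1


def psi(x, t, energy, momentum, a=1.0):
    """Elementary wavefunction a*exp(-i*(E*t - p*x)/hbar) in one dimension."""
    return a * cmath.exp(-1j * (energy * t - momentum * x) / HBAR)


def residual(m, beta, m_eff, x=0.3, t=0.7, h=1e-4):
    """Return |dpsi/dt - i*(1/2)*(hbar/m_eff)*d2psi/dx2| at the point (x, t).

    Both derivatives are approximated by central differences with step h.
    """
    energy = m * C**2        # total energy, E = m*c^2
    momentum = m * beta * C  # momentum, p = m*v
    dpsi_dt = (psi(x, t + h, energy, momentum)
               - psi(x, t - h, energy, momentum)) / (2 * h)
    d2psi_dx2 = (psi(x + h, t, energy, momentum)
                 - 2 * psi(x, t, energy, momentum)
                 + psi(x - h, t, energy, momentum)) / h**2
    return abs(dpsi_dt - 1j * 0.5 * (HBAR / m_eff) * d2psi_dx2)


m, beta = 1.0, 0.5
print(residual(m, beta, m * beta**2 / 2))  # the derived effective mass
print(residual(m, beta, m))                # the ordinary mass, for comparison
```

With the derived value meff = m·β²/2 the residual should be close to zero (up to finite-difference error), while plugging in the ordinary mass m leaves a residual of order one.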

Hence, the effective mass appears in this equation as the equivalent mass of the kinetic energy (K.E.) of the elementary particle that’s being represented by the wavefunction. Now, you may think that sounds good – and it does – but you should note the following:

1. The K.E. = m·v²/2 formula is only correct for non-relativistic speeds. In fact, it’s the kinetic energy formula if, and only if, m ≈ m₀. The relativistically correct formula for the kinetic energy calculates it as the difference between (1) the total energy (which is given by the E = m·c² formula, always) and (2) its rest energy, so we write:

K.E. = E − E₀ = mᵥ·c² − m₀·c² = m₀·γ·c² − m₀·c² = m₀·c²·(γ − 1)

2. The energy concept in the wavefunction ψ = a·e^(−i·[E·t − p∙x]/ħ) is, obviously, the total energy of the particle. For non-relativistic speeds, the kinetic energy is only a very small fraction of the total energy. In fact, using the formula above, you can calculate the ratio between the kinetic and the total energy: you’ll find it’s equal to 1 − 1/γ = 1 − √(1 − v²/c²), which goes from 0 to 1 as v goes from 0 to c.
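To get a feel for how small that fraction is at everyday speeds, here is a minimal sketch of the ratio K.E./E = 1 − 1/γ. The function name `kinetic_fraction` is just a label chosen for this example, and the β values are arbitrary.

```python
import math


def kinetic_fraction(beta):
    """Ratio of kinetic to total energy, 1 - 1/gamma, for 0 <= beta < 1."""
    gamma = 1.0 / math.sqrt(1.0 - beta**2)
    return 1.0 - 1.0 / gamma


for beta in (0.001, 0.0073, 0.1, 0.6, 0.99):
    # For small beta the fraction is close to beta**2 / 2.
    print(beta, kinetic_fraction(beta), beta**2 / 2)
```

For β = 0.6 we have γ = 1.25, so the kinetic share is 0.2. For small β the ratio is very nearly β²/2, which is the non-relativistic result.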

Now, if we discard the 1/2 factor, the calculations above yield the following:

−i·a·(E/ħ)·e^(−i·(E·t − p∙x)/ħ) = −i·a·(ħ/meff)·(p²/ħ²)·e^(−i·(E·t − p∙x)/ħ)

⇔ E = p²/meff = (m·v)²/meff ⇔ meff = (m/E)·m·v²

⇔ meff = m·v²/c² = m·β²

In fact, it is fair to say that both definitions are equally weird, even if the dimensions come out alright: the effective mass is measured in old-fashioned mass units, and the β² or β²/2 factor appears as a sort of correction factor, varying between 0 and 1 (for β²) or between 0 and 1/2 (for β²/2). I prefer the new definition, as it ensures that meff becomes equal to m in the limit for the velocity going to c. In addition, if we bring the ħ/meff or (1/2)∙ħ/meff factor to the other side of the equation, the choice becomes one between a meffNEW/ħ or a 2∙meffOLD/ħ coefficient.
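The two candidate definitions are easy to put side by side. This is a small sketch only: the names `m_eff_old` and `m_eff_new` are labels invented here for the formulas with and without the 1/2 factor, and the mass is set to 1 in arbitrary units.

```python
def m_eff_old(m, beta):
    """Effective mass when the equation keeps its 1/2 factor: m*beta^2/2."""
    return m * beta**2 / 2


def m_eff_new(m, beta):
    """Effective mass when the 1/2 factor is dropped: m*beta^2."""
    return m * beta**2


for beta in (0.01, 0.5, 0.9, 1.0):
    print(beta, m_eff_old(1.0, beta), m_eff_new(1.0, beta))
```

At β = 1 the old definition gives m/2 and the new one gives m. Both go to zero as β goes to zero.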

It’s a choice, really. Personally, I think the equation without the 1/2 factor – and, hence, the use of ħ rather than ħ/2 as the scaling factor – looks better, but then you may argue that – if half of the energy of our particle is in the oscillating real part of the wavefunction, and the other is in the imaginary part – then the 1/2 factor should stay, because it ensures that meff becomes equal to m/2 as v goes to c (or, what amounts to the same, β goes to 1). But then that’s the argument about whether or not we should have a 1/2 factor because we get two equations for the price of one, like we did for the Uncertainty Principle.

So… What to do? Let’s first ask ourselves whether that derivation of the effective mass actually makes sense. Let’s therefore look at both limit situations.

1. For v going to c (or β = v/c going to 1), we do not have much of a problem: meff just becomes the total mass of the particle that we’re looking at, and Schrödinger’s equation can easily be interpreted as an energy propagation mechanism. Our particle has zero rest mass in that case (we may also say that the concept of a rest mass is meaningless in this situation) and all of the energy – and, therefore, all of the equivalent mass – is kinetic: m = E/c² and the effective mass is just the mass: meff = m·c²/c² = m. Hence, our particle is everywhere and nowhere. In fact, you should note that the concept of velocity itself doesn’t make sense in this rather particular case. It’s like a photon (but note it’s not a photon: we’re talking some theoretical particle here with zero spin and zero rest mass): it’s a wave in its own frame of reference, but as it zips by at the speed of light, we think of it as a particle.

2. Let’s look at the other limit situation. For v going to 0 (or β = v/c going to 0), Schrödinger’s equation no longer makes sense, because the effective mass goes to zero, so the diffusion constant (1/2)·(ħ/meff) blows up and we get a nonsensical equation. Huh? What’s wrong with our analysis?

Well… I must be honest. We started off on the wrong foot. You should note that it’s hard – in fact, plain impossible – to reconcile our simple a·e^(−i·[E·t − p∙x]/ħ) function with the idea of the classical velocity of our particle. Indeed, the classical velocity corresponds to a group velocity, or the velocity of a wave packet, and so we just have one wave here: no group. So we get nonsense. You can see the same when equating p to zero in the wave equation: we get another nonsensical equation, because the Laplacian is zero! Check it. If our elementary wavefunction is equal to ψ = a·e^(−i·(E/ħ)·t), then that Laplacian is zero.

Hence, our calculation of the effective mass is not very sensical. Why? Because the elementary wavefunction is a theoretical concept only: it may represent some box in space, that is uniformly filled with energy, but it cannot represent any actual particle. Actual particles are always some superposition of two or more elementary waves, so then we’ve got a wave packet (as illustrated below) that we can actually associate with some real-life particle moving in space, like an electron in some orbital indeed. 🙂

I must credit Oregon State University for the animation above. It’s quite nice: a simple particle in a box model without potential. As I showed on my other page (explaining various models), we must add at least two waves – traveling in opposite directions – to model a particle in a box. Why? Because we represent it by a standing wave, and a standing wave is the sum of two waves traveling in opposite directions.
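The statement that a standing wave is the sum of two waves traveling in opposite directions can be checked in a few lines. The sketch assumes ħ = 1 and one spatial dimension. The names `traveling` and `standing` and the sample values for E and p are illustrative only.

```python
import cmath
import math

HBAR = 1.0  # assumption: natural units


def traveling(x, t, energy, momentum, a=1.0):
    """Elementary wave a*exp(-i*(E*t - p*x)/hbar); the sign of p sets the direction."""
    return a * cmath.exp(-1j * (energy * t - momentum * x) / HBAR)


def standing(x, t, energy, momentum, a=1.0):
    """Standing wave 2a*cos(p*x/hbar)*exp(-i*E*t/hbar)."""
    return 2 * a * math.cos(momentum * x / HBAR) * cmath.exp(-1j * energy * t / HBAR)


E, p = 2.0, 1.5  # arbitrary illustrative values
for x, t in ((0.0, 0.0), (0.4, 1.1), (2.3, 5.0)):
    total = traveling(x, t, E, p) + traveling(x, t, E, -p)
    print(abs(total - standing(x, t, E, p)))  # difference should be ~0
```

The spatial factor cos(p·x/ħ) no longer moves: the nodes stay where they are, and only the overall phase e^(−i·E·t/ħ) rotates in time.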

So, if our derivation above was not very meaningful, then what is the actual concept of the effective mass?

The concept of the effective mass

I am afraid that, at this point, I do have to direct you back to the Grand Master himself for the detail. Let me just try to sum it up very succinctly. If we have a wave packet, there is – obviously – some energy in it, and it’s energy we may associate with the classical concept of the velocity of our particle – because it’s the group velocity of our wave packet. Hence, we have a new energy concept here – and the equivalent mass, of course. Now, Feynman’s analysis – which is Schrödinger’s analysis, really – shows we can write that energy as:

E = meff·v²/2

So… Well… That’s the classical kinetic energy formula. And it’s the very classical one, because it’s not relativistic. 😦 But that’s OK for relatively slow-moving electrons! [Remember the typical (relative) velocity is given by the fine-structure constant: α = β = v/c. So that’s impressive (about 2,188 km per second), but it’s only a tiny fraction of the speed of light, so non-relativistic formulas should work.]
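The numbers in that remark are easy to reproduce. The sketch below uses the CODATA 2018 value of the fine-structure constant and the exact SI value of c. The variable names are arbitrary, and the comparison between γ − 1 and β²/2 is added here only to show how small the relativistic correction is.

```python
import math

ALPHA = 7.2973525693e-3  # fine-structure constant (CODATA 2018)
C = 299_792_458.0        # speed of light in m/s (exact by definition)

v = ALPHA * C            # typical electron velocity in m/s
v_km_s = v / 1000.0
print(f"v = {v_km_s:.0f} km/s")

beta = ALPHA
gamma = 1.0 / math.sqrt(1.0 - beta**2)
relativistic = gamma - 1.0  # K.E. in units of m0*c^2
classical = beta**2 / 2     # K.E. = m*v^2/2 in the same units
rel_error = relativistic / classical - 1.0
print(f"relative error of the classical formula: {rel_error:.1e}")
```

The relative error comes out at a few parts in 100,000, which is why the non-relativistic formula is good enough here.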

Now, the meff factor in this equation is a function of the various parameters of the model he uses. To be precise, we get the following formula out of his model (which, as mentioned above, is a model of electrons propagating in a crystal lattice):

meff = ħ²/(2·A·b²)

Now, the b in this formula is the spacing between the atoms in the lattice. The A basically represents an energy barrier: to move from one atom to another, the electron needs to get across it. I talked about this in my post on it, and so I won’t explain the graph below – because I did that in that post. Just note that we don’t need that factor 2: there is no reason whatsoever to write E₀ + 2·A and E₀ − 2·A. We could just re-define a new A: (1/2)·ANEW = AOLD. The formula for meff then simplifies to ħ²/(2·AOLD·b²) = ħ²/(ANEW·b²). We then get an Eeff = meff·v² formula for the extra energy.
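To see what kind of numbers the meff = ħ²/(2·A·b²) formula produces, here is a minimal sketch. The values A = 1 eV and b = 0.3 nm are assumptions picked purely for illustration (they are not taken from Feynman’s text), and `effective_mass` is a made-up helper name.

```python
HBAR = 1.054571817e-34  # reduced Planck constant, J*s (CODATA 2018)
EV = 1.602176634e-19    # joule per electronvolt (exact)
M_E = 9.1093837015e-31  # free-space electron mass, kg (CODATA 2018)


def effective_mass(a_joule, b_metre):
    """Effective mass hbar^2 / (2*A*b^2), with A in joule and b in metre."""
    return HBAR**2 / (2.0 * a_joule * b_metre**2)


a_old = 1.0 * EV  # assumed energy parameter A: 1 eV
b = 3.0e-10       # assumed lattice spacing b: 0.3 nm

m_eff = effective_mass(a_old, b)
print(m_eff / M_E)  # effective mass as a multiple of the electron mass

# Re-defining A_new = 2*A_old only moves the factor 2 around:
a_new = 2.0 * a_old
print(HBAR**2 / (a_new * b**2) / M_E)  # same number as above
```

With these assumed inputs the effective mass comes out at a bit less than half the free-space electron mass. Other choices of A and b will, of course, give other values.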

Eeff = meff·v²?!? What energy formula is that? Schrödinger must have thought the same thing, and so that’s why we have that ugly 1/2 factor in his equation. However, think about it. Our analysis shows that it is quite straightforward to model energy as a two-dimensional oscillation of mass. In this analysis, both the real and the imaginary component of the wavefunction each store half of the total energy of the object, which is equal to E = m·c². Remember, indeed, that we compared it to the energy in an oscillator, which is equal to the sum of kinetic and potential energy, and for which we have the T + U = m·ω₀²/2 formula. But so we have two oscillators here and, hence, twice the energy. Hence, the E = m·c² corresponds to m·ω₀² and, hence, we may think of c as the natural frequency of the vacuum.

Therefore, the Eeff = meff·v² formula makes much more sense. It nicely mirrors Einstein’s E = m·c² formula and, in fact, naturally merges into E = m·c² for v approaching c. But, I admit, it is not so easy to interpret. It’s much easier to just say that the effective mass is the mass of our electron as it appears in the kinetic energy formula, or – alternatively – in the momentum formula. Indeed, Feynman also writes the following formula:

meff·v = p = ħ·k

Now, that is something we easily recognize! 🙂

So… Well… What do we do now? Do we use the 1/2 factor or not?

It would be very convenient, of course, to just stick with tradition and use meff as everyone else uses it: it is just the mass as it appears in whatever medium we happen to look at, which may be a crystal lattice (or a semi-conductor), or just free space. In short, it’s the mass of the electron as it appears to us, i.e. as it appears in the (non-relativistic) kinetic energy formula (K.E. = meff·v²/2), the formula for the momentum of an electron (p = meff·v), or in the wavefunction itself (k = p/ħ = (meff·v)/ħ). In fact, in his analysis of the electron orbitals, Feynman (who just follows Schrödinger here) drops the eff subscript altogether, and so the effective mass is just the mass: meff = m. Hence, the apparent mass of the electron in the hydrogen atom serves as a reference point, and the effective mass in a different medium (such as a crystal lattice, rather than free space or, I should say, a hydrogen atom in free space) will also be different.

The thing is: we get the right results out of Schrödinger’s equation, with the 1/2 factor in it. Hence, Schrödinger’s equation works: we get the actual electron orbitals out of it. Hence, Schrödinger’s equation is true – without any doubt. Hence, if we take that 1/2 factor out, then we do need to use the other effective mass concept. We can do that. Think about the actual relation between the effective mass and the real mass of the electron, about which Feynman writes the following: “The effective mass has nothing to do with the real mass of an electron. It may be quite different—although in commonly used metals and semiconductors it often happens to turn out to be the same general order of magnitude: about 0.1 to 30 times the free-space mass of the electron.” Hence, if we write the relation between meff and m as meff = g(m), then the same relation for our meffNEW = 2∙meffOLD becomes meffNEW = 2·g(m), and the “about 0.1 to 30 times” becomes “about 0.2 to 60 times.”

In fact, in the original 1963 edition, Feynman writes that the effective mass is “about 2 to 20 times” the free-space mass of the electron. Isn’t that interesting? I mean… Note that factor 2! If we’d write meff = 2·m, then we’re fine. We can then write Schrödinger’s equation in the following two equivalent ways:

- (meff/ħ)·∂ψ/∂t = i·∇²ψ

- (2m/ħ)·∂ψ/∂t = i·∇²ψ

Both would be correct, and it explains why Schrödinger’s equation works. So let’s go for that compromise and write Schrödinger’s equation in either of the two equivalent ways. 🙂 The question then becomes: how to interpret that factor 2? The answer to that question is, effectively, related to the fact that we get two waves for the price of one here. So we have two oscillators, so to speak. Now that’s quite deep, and I will explore that in one of my next posts.

Let me now address the second weird thing in Schrödinger’s equation: the energy factor. I should be more precise: the weirdness arises when solving Schrödinger’s equation. Indeed, in the texts I’ve read, there is this constant switching back and forth between interpreting E as the energy of the atom, versus the energy of the electron. Now, both concepts are obviously quite different, so which one is it really?

The energy factor E

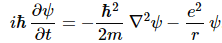

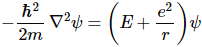

It’s a confusing point – for me, at least – and, hence, I must assume for students as well. Let me indicate, by way of example, how the confusion arises in Feynman’s exposé on the solutions to the Schrödinger equation. Initially, the development is quite straightforward. Replacing V by −e²/r, Schrödinger’s equation becomes:

As usual, it is then assumed that a solution of the form ψ(r, t) = e^(−(i/ħ)·E·t)·ψ(r) will work. Apart from the confusion that arises because we use the same symbol, ψ, for two different functions (you will agree that ψ(r, t), a function in two variables, is obviously not the same as ψ(r), a function in one variable only), this assumption is quite straightforward and allows us to re-write the differential equation above as:

To get this, you just need to actually do that time derivative, noting that the ψ in our equation is now ψ(r), not ψ(r, t). Feynman duly notes this as he writes: “The function ψ(r) must solve this equation, where E is some constant – the energy of the atom.” So far, so good. In one of the (many) next steps, we re-write E as E = ER·ε, with ER = m·e⁴/(2ħ²). So we just use the Rydberg energy (ER ≈ 13.6 eV) here as a ‘natural’ atomic energy unit. That’s all. No harm in that.
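For reference, the time-derivative step can be sketched as follows. This is a reconstruction under stated assumptions, not a quotation: it takes the standard form of Schrödinger’s equation with V = −e²/r, with m the electron mass and e² written in units that absorb the 1/(4πε₀) factor.

```latex
% Schrödinger's equation with V = -e^2/r (assumed standard form)
i\hbar\,\frac{\partial \psi(\mathbf{r},t)}{\partial t}
  = -\frac{\hbar^{2}}{2m}\,\nabla^{2}\psi(\mathbf{r},t)
    - \frac{e^{2}}{r}\,\psi(\mathbf{r},t)

% Trial solution and its time derivative
\psi(\mathbf{r},t) = e^{-(i/\hbar)Et}\,\psi(\mathbf{r})
\quad\Longrightarrow\quad
i\hbar\,\frac{\partial \psi(\mathbf{r},t)}{\partial t}
  = E\,e^{-(i/\hbar)Et}\,\psi(\mathbf{r})

% Dividing out the common factor e^{-(i/\hbar)Et}
-\frac{\hbar^{2}}{2m}\,\nabla^{2}\psi(\mathbf{r})
  = \left(E + \frac{e^{2}}{r}\right)\psi(\mathbf{r})
```

The common factor e^(−(i/ħ)·E·t) drops out of every term, which is why only ψ(r) and the constant E are left in the last line.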

Then all kinds of complicated but legitimate mathematical manipulations follow, in an attempt to solve this differential equation – an attempt that is successful, of course! However, after all these manipulations, one ends up with the grand simple solution for the s-states of the atom (i.e. the spherically symmetric solutions):

En = −ER/n² with 1/n² = 1, 1/4, 1/9, 1/16, …
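As a quick numerical check of this formula, the sketch below computes the Rydberg energy from ER = m·e⁴/(2ħ²) and the first few levels. It assumes that e² stands for q²/(4πε₀) in SI units, which is the convention that makes the formula come out in joule, and it uses CODATA 2018 constants. The names `E_SQUARED` and `level` are invented for this example.

```python
import math

M_E = 9.1093837015e-31   # electron mass, kg
Q_E = 1.602176634e-19    # elementary charge, C (also joule per eV)
EPS0 = 8.8541878128e-12  # vacuum permittivity, F/m
HBAR = 1.054571817e-34   # reduced Planck constant, J*s
C = 299_792_458.0        # speed of light, m/s

# Assumption: e^2 in the formula means q^2 / (4*pi*eps0), in J*m.
E_SQUARED = Q_E**2 / (4 * math.pi * EPS0)

E_R = M_E * E_SQUARED**2 / (2 * HBAR**2) / Q_E  # Rydberg energy in eV
REST_ENERGY = M_E * C**2 / Q_E                  # electron rest energy in eV


def level(n):
    """Energy of the n-th s-state in eV: -E_R / n^2."""
    return -E_R / n**2


for n in (1, 2, 3, 4):
    print(n, round(level(n), 2))

print(E_R / REST_ENERGY)  # binding energy as a fraction of the rest energy
```

The last line shows the size of these energies relative to the electron’s rest energy: a few parts in 100,000.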

So we get: En = −13.6 eV, −3.4 eV, −1.5 eV, etcetera. Now how is that possible? How can the energy of the atom suddenly be negative? More importantly, why is it so tiny in comparison with the rest energy of the proton (which is about 938 mega-electronvolt), or the electron (0.511 MeV)? The energy levels above are a few eV only, not a few million electronvolt. Feynman answers this question rather vaguely when he states the following:

“There is, incidentally, nothing mysterious about negative numbers for the energy. The energies are negative because when we chose to write V = −e²/r, we picked our zero point as the energy of an electron located far from the proton. When it is close to the proton, its energy is less, so somewhat below zero. The energy is lowest (most negative) for n = 1, and increases toward zero with increasing n.”

We picked our zero point as the energy of an electron located far away from the proton? But we were talking the energy of the atom all along, right? You’re right. Feynman doesn’t answer the question. The solution is OK – well, sort of, at least – but, in one of those mathematical complications, there is a ‘normalization’ – a choice of some constant that pops up when combining and substituting stuff – that is not so innocent. To be precise, at some point, Feynman substitutes the ε variable for the square of another variable – to be even more precise, he writes: ε = −α². He then performs some more hat tricks – all legitimate, no doubt – and finds that the only sensible solutions to the differential equation require α to be equal to 1/n, which immediately leads to the above-mentioned solution for our s-states.

The real answer to the question is given somewhere else. In fact, Feynman casually gives us an explanation in one of his very first Lectures on quantum mechanics, where he writes the following:

“If we have a “condition” which is a mixture of two different states with different energies, then the amplitude for each of the two states will vary with time according to an equation like a·e^(−iωt), with ħ·ω = E₀ = m·c². Hence, we can write the amplitude for the two states, for example as:

e^(−i(E₁/ħ)·t) and e^(−i(E₂/ħ)·t)

And if we have some combination of the two, we will have an interference. But notice that if we added a constant to both energies, it wouldn’t make any difference. If somebody else were to use a different scale of energy in which all the energies were increased (or decreased) by a constant amount – say, by the amount A – then the amplitudes in the two states would, from his point of view, be

e^(−i(E₁+A)·t/ħ) and e^(−i(E₂+A)·t/ħ)

All of his amplitudes would be multiplied by the same factor e^(−i(A/ħ)·t), and all linear combinations, or interferences, would have the same factor. When we take the absolute squares to find the probabilities, all the answers would be the same. The choice of an origin for our energy scale makes no difference; we can measure energy from any zero we want. For relativistic purposes it is nice to measure the energy so that the rest mass is included, but for many purposes that aren’t relativistic it is often nice to subtract some standard amount from all energies that appear. For instance, in the case of an atom, it is usually convenient to subtract the energy Ms·c², where Ms is the mass of all the separate pieces – the nucleus and the electrons – which is, of course, different from the mass of the atom. For other problems, it may be useful to subtract from all energies the amount Mg·c², where Mg is the mass of the whole atom in the ground state; then the energy that appears is just the excitation energy of the atom. So, sometimes we may shift our zero of energy by some very large constant, but it doesn’t make any difference, provided we shift all the energies in a particular calculation by the same constant.”

It’s a rather long quotation, but it’s important. The key phrase here is, obviously, the following: “For other problems, it may be useful to subtract from all energies the amount Mg·c², where Mg is the mass of the whole atom in the ground state; then the energy that appears is just the excitation energy of the atom.” So that’s what he’s doing when solving Schrödinger’s equation. However, I should make the following point here: if we shift the origin of our energy scale, it does not make any difference in regard to the probabilities we calculate, but it obviously does make a difference in terms of our wavefunction itself. To be precise, its density in time will be very different. Hence, if we’d want to give the wavefunction some physical meaning – which is what I’ve been trying to do all along – it does make a huge difference. When we leave the rest mass of all of the pieces in our system out, we can no longer pretend we capture their energy.
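Both halves of that point can be illustrated with a two-state superposition. The sketch assumes ħ = 1. The energies, coefficients, and shift are arbitrary numbers chosen for the example, and `amplitude` and `probability` are hypothetical helper names.

```python
import cmath

HBAR = 1.0  # assumption: natural units


def amplitude(t, energies, coeffs, shift=0.0):
    """Sum of c_k*exp(-i*(E_k + shift)*t/hbar) over the states."""
    return sum(c * cmath.exp(-1j * (e + shift) * t / HBAR)
               for c, e in zip(coeffs, energies))


def probability(t, energies, coeffs, shift=0.0):
    """Absolute square of the combined amplitude."""
    return abs(amplitude(t, energies, coeffs, shift)) ** 2


energies = (1.0, 2.5)  # illustrative energies E1 and E2
coeffs = (0.6, 0.8)    # illustrative coefficients
A = 1000.0             # constant added to every energy

for t in (0.0, 0.37, 1.0):
    same_prob = probability(t, energies, coeffs) - probability(t, energies, coeffs, A)
    diff_amp = abs(amplitude(t, energies, coeffs) - amplitude(t, energies, coeffs, A))
    print(t, same_prob, diff_amp)
```

The probability difference stays at zero up to rounding, while the amplitudes themselves differ by the phase factor e^(−i(A/ħ)·t), which rotates much faster when A is large.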

This is a rather simple observation, but one that has profound implications in terms of our interpretation of the wavefunction. Personally, I admire the Great Teacher’s Lectures, but I am really disappointed that he doesn’t pay more attention to this. 😦
