Pre-script (dated 26 June 2020): This post got mutilated by the removal of some material by the dark force. You should be able to follow the main story line, however. If anything, the lack of illustrations might actually help you to think things through for yourself. In any case, we now have different views on these concepts as part of our realist interpretation of quantum mechanics, so we recommend you read our recent papers instead of these old blog posts.

Original post:

As I was posting some remarks on the Exercises that come with Feynman’s Lectures, I was thinking I should do another post on the Principle of Least Action, and how it is used in quantum mechanics. It is an interesting matter, because the Principle of Least Action sort of connects classical and quantum mechanics.

Let us first re-visit the Principle in classical mechanics. The illustrations which Feynman uses in his iconic exposé on it are copied below. You know what they depict: some object that goes up in the air, and then comes back down because of… Well… Gravity. Hence, we have a force field and, therefore, some potential which gives our object some potential energy. The illustration is nice because we can apply it to any (uniform) force field, so let’s analyze it a bit more in depth.

We know the actual trajectory – which Feynman writes with an underline, as x̲(t), so as to distinguish it from some other nearby path x(t) = x̲(t) + η(t) – will minimize the value of the following integral:

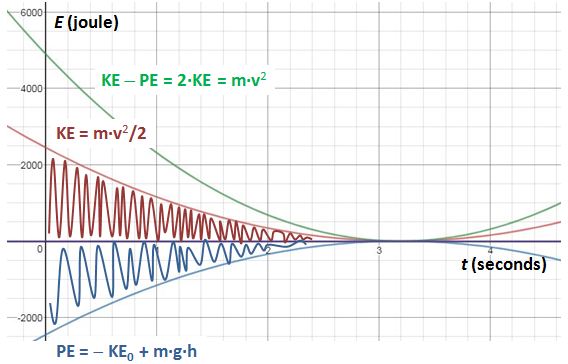

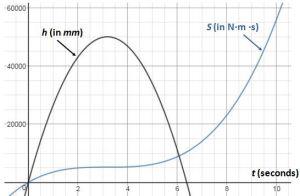

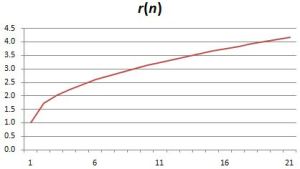

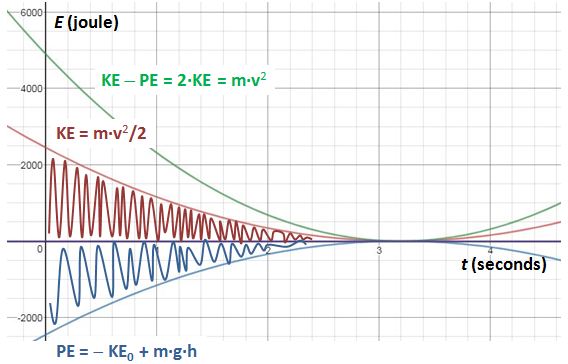

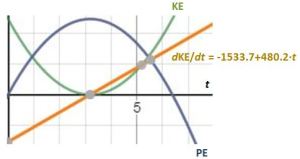

In the mentioned post, I try to explain what the formula actually means by breaking it up into two separate integrals: one with the kinetic energy in the integrand and – you guessed it 🙂 – one with the potential energy. We can choose any reference point for our potential energy, of course, but to better reflect the energy conservation principle, we assume PE = 0 at the highest point. This ensures that the sum of the kinetic and the potential energy is zero. For a mass of 5 kg (think of the ubiquitous cannon ball), and a (maximum) height of 50 m, we got the following graph.

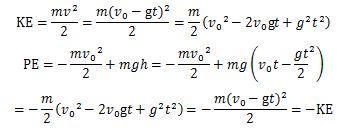

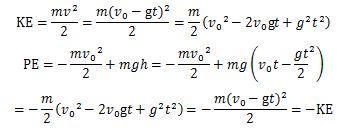

Just to make sure, here is how we calculate KE and PE as a function of time:

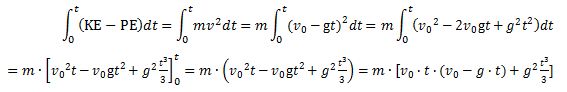

We can, of course, also calculate the action as a function of time:

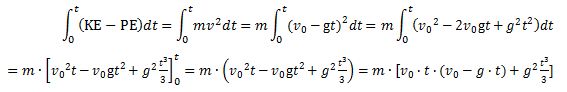

Note the integrand: because KE + PE = 0 with our choice of reference point, PE = −KE and, hence, KE − PE = 2·KE = m·v². Strange, isn’t it? It’s like E = m·c², right? We get a weird cubic function, which I plotted below (blue). I added the function for the height (but in millimeter) because of the different scales.

So what’s going on? The action concept is interesting. As the product of force, distance and time, it makes intuitive sense: it’s force times distance times time. To cover some distance in some force field, energy will be used or spent but, clearly, the time that is needed should matter as well, right? Yes. But the question is: how, exactly? Let’s analyze what happens from t = 0 to t = 3.2 seconds, so that’s the trajectory from h = 0 to the highest point (h = 50 m). The action that is required to bring our 5 kg object there would be equal to F·h·t = m·g·h·t = 5×9.8×50×3.2 = 7828.9 J·s. [I use non-rounded values in my calculations.] However, our action integral tells us it’s only 5219.3 J·s, which is two thirds of that. The difference (2609.6 J·s) is explained by the initial velocity and, hence, the initial kinetic energy, which we got for free, so to speak, and which, over the time interval, is spent as action. So our action integral gives us a net value, so to speak.
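If you want to check these numbers yourself, here is a minimal Python sketch. It assumes the standard value g = 9.80665 m/s² (the text only quotes the rounded 9.8, so that is my guess at the non-rounded value), and it uses the closed form of the integral of m·v² with v = v₀ − g·t. The variable names are just illustrative.

```python
import math

m = 5.0        # mass in kg (from the text)
h = 50.0       # maximum height in m (from the text)
g = 9.80665    # standard gravity in m/s^2 (assumption: the text only quotes 9.8)

v0 = math.sqrt(2 * g * h)   # launch speed that just reaches height h
t_top = v0 / g              # time at which the velocity becomes zero

# "Gross" figure: force times distance times time.
naive_action = m * g * h * t_top

# Action integral of m*v^2 from 0 to t_top, with v = v0 - g*t:
# m*(v0^2*t - v0*g*t^2 + g^2*t^3/3), which reduces to m*v0^3/(3*g) at t_top.
net_action = m * v0**3 / (3 * g)

print(f"v0 = {v0:.1f} m/s, t_top = {t_top:.1f} s")
print(f"F*h*t           = {naive_action:.1f} J*s")
print(f"action integral = {net_action:.1f} J*s")
print(f"difference      = {naive_action - net_action:.1f} J*s")
```

Under these assumptions the action integral is exactly two thirds of F·h·t, whatever the mass or the height.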

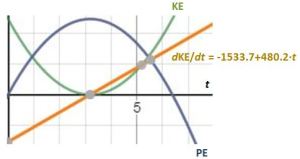

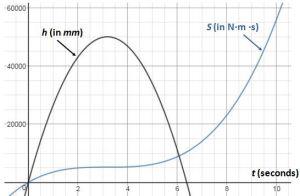

To be precise, we can calculate the time rate of change of the kinetic energy as d(KE)/dt = −1533.7 + 480.2·t, so that’s a linear function of time. The graph below shows how it works. The time rate of change is initially negative, as kinetic energy gets spent and increases the potential energy of our object. At the maximum height, the time rate of change is zero. The object then starts falling, and the time rate of change becomes positive, as the velocity of our object goes from zero to… Well… The velocity is a linear function of time as well: v = v₀ − g·t, remember? Hence, at t = v₀/g = 31.3/9.8 = 3.2 s, the velocity becomes negative so our cannon ball is, effectively, falling down. Of course, as it falls down and gains speed, it covers more and more distance per second and, therefore, the associated action also goes up ever faster (as the cube of time, not exponentially). Just re-define our starting point at t = 3.2 s. The m·v₀·t·(v₀ − g·t) term is zero at that point, and so then it’s only the m·g²·t³/3 term that counts.
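The formulas in this paragraph are easy to verify numerically. The sketch below uses the rounded values from the text (g = 9.8 m/s², v₀ = 31.3 m/s) and compares the closed-form action S(t) = m·v₀·t·(v₀ − g·t) + m·g²·t³/3 with a brute-force midpoint sum. The function names and the choice of t = 2 s as a test point are mine, not part of the original calculation.

```python
m, g, v0 = 5.0, 9.8, 31.3   # rounded values quoted in the text

def velocity(t):
    return v0 - g * t

def kinetic_energy(t):
    return 0.5 * m * velocity(t) ** 2

def dKE_dt(t):
    # d/dt of (1/2)*m*(v0 - g*t)^2 = -m*g*v0 + m*g^2*t
    return -m * g * v0 + m * g**2 * t

def action_closed_form(t):
    # Integral of m*v^2 from 0 to t
    return m * v0 * t * (v0 - g * t) + m * g**2 * t**3 / 3

def action_numeric(t, steps=100_000):
    # Midpoint rule, used only as an independent check
    dt = t / steps
    return sum(m * velocity((i + 0.5) * dt) ** 2 * dt for i in range(steps))

print("intercept:", dKE_dt(0.0), "slope:", dKE_dt(1.0) - dKE_dt(0.0))
print("S(2 s):", action_closed_form(2.0), "vs numeric:", action_numeric(2.0))
```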

So… Yes. That’s clear enough. But it still doesn’t answer the fundamental question: how does that minimization of S (or the maximization of −S) work, exactly? Well… It’s not like Nature knows it wants to go from point a to point b, and then sort of works out some least action algorithm. No. The true path is given by the force law which, at every point in spacetime, will accelerate, or decelerate, our object at a rate a that is equal to the ratio of the force and the mass of our object. In this case, we write: a = F/m = m·g/m = g, so that’s the acceleration of gravity. That’s the only real thing: all of the above is just math, some mental construct, so to speak.

Of course, this acceleration, or deceleration, then gives the velocity and the kinetic energy. Hence, once again, it’s not like we’re choosing some average for our kinetic energy: the force (gravity, in this particular case) just gives us that average. Likewise, the potential energy depends on the position of our object, which we get from… Well… Where it starts and where it goes, so it also depends on the velocity and, hence, the acceleration or deceleration from the force field. So there is no optimization. No teleology. Newton’s force law gives us the true path. If we drop something down, it will go down in a straight line, because any deviation from it would add to the distance. A more complicated illustration is Fermat’s Principle of Least Time, which combines distance and time. But we won’t go into any further detail here. Just note that, in classical mechanics, the true path can, effectively, be associated with a minimum value for that action integral: any other path will be associated with a higher S. So we’re done with classical mechanics here. What about the Principle of Least Action in quantum mechanics?
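To see that last claim at work, here is a small numerical sketch. It discretizes the action for the path Newton’s law gives us and for a few nearby paths x(t) + η(t), with η(t) vanishing at both endpoints. The flight time, the sine-shaped η and the step count are arbitrary choices of mine. I also put PE = 0 at x = 0 rather than at the top: shifting the reference point only adds the same constant to every S, so the comparison is not affected.

```python
import math

m, g = 5.0, 9.8
T = 2.0               # flight time in s (arbitrary choice for this sketch)
N = 2000              # number of time steps
dt = T / N

def true_path(t):
    # Solution of Newton's law with x(0) = x(T) = 0
    return 0.5 * g * t * (T - t)

def action(path):
    # S = sum over steps of (KE - PE)*dt, with PE = m*g*x
    S = 0.0
    for i in range(N):
        t0, t1 = i * dt, (i + 1) * dt
        v = (path(t1) - path(t0)) / dt
        x_mid = 0.5 * (path(t0) + path(t1))
        S += (0.5 * m * v**2 - m * g * x_mid) * dt
    return S

def perturbed(eps):
    # Nearby path: true path plus eta(t), where eta is zero at t = 0 and t = T
    return lambda t: true_path(t) + eps * math.sin(math.pi * t / T)

S_true = action(true_path)
for eps in (-0.5, -0.1, 0.1, 0.5):
    print(f"eps = {eps:+.1f}: S - S_true = {action(perturbed(eps)) - S_true:.4f}")
```

Every perturbed path comes out with a larger S, and the excess grows with the square of the perturbation, which is what "S does not vary in the first approximation" means.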

The Principle of Least Action in quantum mechanics

We have the uncertainty in quantum mechanics: there is no unique path. However, we can, effectively, associate each possible path with a definite amount of action, which we will also write as S. However, instead of talking velocities, we’ll usually want to talk momentum. Photons have no rest mass (m₀ = 0), but they do have momentum because of their energy: for a photon, the E = m·c² equation can be rewritten as E = p·c, and the Einstein-Planck relation for photons tells us the photon energy (E) is related to the frequency (f): E = h·f. Now, for a photon, the frequency and the wavelength are related by f = c/λ. Hence, p = E/c = h·f/c = h/λ = ħ·k.
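A quick numerical check of that chain of equalities, for what it’s worth. The 500 nm wavelength is just an example value I picked, and the constants are the exact SI values.

```python
import math

h = 6.62607015e-34      # Planck constant in J*s (exact SI value)
c = 299_792_458.0       # speed of light in m/s (exact SI value)
hbar = h / (2 * math.pi)

wavelength = 500e-9     # 500 nm, a hypothetical green photon
f = c / wavelength      # frequency
E = h * f               # Einstein-Planck relation
k = 2 * math.pi / wavelength   # wave number

p_from_energy = E / c
p_from_wavelength = h / wavelength
p_from_wavenumber = hbar * k
print(p_from_energy, p_from_wavelength, p_from_wavenumber)
```

The three expressions for the momentum agree up to floating-point rounding.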

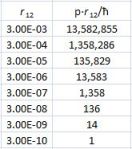

OK. What’s the action integral? What’s the kinetic and potential energy? Let’s just try the energy: E = m·c². It reflects the KE − PE = m·v² formula we used above. Of course, the energy of a photon does not vary, so the value of our integral is just the energy times the travel time, right? What is the travel time? Let’s do things properly by using vector notations here, so we will have two position vectors **r₁** and **r₂** for point a and b respectively. We can then define a vector pointing from **r₁** to **r₂**, which we will write as **r₁₂**. The distance between the two points is then, obviously, equal to |**r₁₂**| = √(**r₁₂**²) = r₁₂. Our photon travels at the speed of light, so the time interval will be equal to t = r₁₂/c. So we get a very simple formula for the action: S = E·t = p·c·t = p·c·r₁₂/c = p·r₁₂. Now, it may or may not make sense to assume that the direction of the momentum of our photon and the direction of **r₁₂** are somewhat different, so we’ll want to re-write this as a vector dot product: S = **p**∙**r₁₂**. [Of course, you know the **p**∙**r₁₂** dot product equals |**p**|∙|**r₁₂**|·cosθ = p∙r₁₂·cosθ, with θ the angle between **p** and **r₁₂**. If the direction is the same, then θ = 0 and cosθ is equal to 1. If the angle is ± π/2, then it’s 0.]

So now we minimize the action so as to determine the actual path? No. We have this weird stopwatch stuff in quantum mechanics. We’ll use this S = p·r12 value to calculate a probability amplitude. So we’ll associate trajectories with amplitudes, and we just use the action values to do so. This is how it works (don’t ask me why – not now, at least):

- We measure action in units of ħ, because… Well… Planck’s constant is a pretty fundamental unit of action, right? 🙂 So we write θ = S/ħ = **p**∙**r₁₂**/ħ.

- θ usually denotes an angle, right? Right. θ = **p**∙**r₁₂**/ħ is the so-called phase of… Well… A proper wavefunction:

$$\psi(\mathbf{p}, \mathbf{r}_{12}) = a\,e^{i\theta} = \frac{1}{r_{12}}\,e^{i\,\mathbf{p}\cdot\mathbf{r}_{12}/\hbar}$$

Wow! I realize you may never have seen this… Well… It’s my derivation of what physicists refer to as the propagator function for a photon. If you google it, you may see it written like this (most probably not, however, as it’s usually couched in more abstract math):

This formulation looks slightly better because it uses Dirac’s bra-ket notation: the initial state of our photon is written as 〈r₁| and its final state is, accordingly, |r₂〉. But it’s the same: it’s the amplitude for our photon to go from point a to point b. In case you wonder, the 1/r₁₂ coefficient is there to take care of the inverse square law. I’ll let you think about that for yourself. It’s just like any other physical quantity (or intensity, if you want): they get diluted as the distance increases. [Note that we get the inverse square (1/r₁₂²) when calculating a probability, which we do by taking the absolute square of our amplitude: |(1/r₁₂)·e^(i·**p**∙**r₁₂**/ħ)|² = |1/r₁₂|²·|e^(i·**p**∙**r₁₂**/ħ)|² = 1/r₁₂².]
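The absolute-square calculation can be checked with a few lines of Python. This is only a sketch of the simplified amplitude above: I work in units where ħ = 1, I take the momentum parallel to the path (so the dot product is just p·r₁₂), and the momentum value is a made-up number.

```python
import cmath

hbar = 1.0   # units where hbar = 1 (assumption, to keep the numbers readable)

def amplitude(p, r12):
    """Simplified photon amplitude (1/r12)*exp(i*p*r12/hbar), with p parallel to r12."""
    theta = p * r12 / hbar        # the phase: action in units of hbar
    return cmath.exp(1j * theta) / r12

p = 3.0   # hypothetical momentum in these units
for r in (1.0, 2.0, 4.0):
    psi = amplitude(p, r)
    print(f"r12 = {r}: |psi|^2 = {abs(psi) ** 2:.4f}, 1/r12^2 = {1 / r**2:.4f}")
```

The phase factor always has unit length, so only the 1/r₁₂ coefficient survives in the probability.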

So… Well… Now we are ready to understand Feynman’s own summary of his path integral formulation of quantum mechanics:

“Here is how it works: Suppose that for all paths, S is very large compared to ħ. One path contributes a certain amplitude. For a nearby path, the phase is quite different, because with an enormous S even a small change in S means a completely different phase—because ħ is so tiny. So nearby paths will normally cancel their effects out in taking the sum—except for one region, and that is when a path and a nearby path all give the same phase in the first approximation (more precisely, the same action within ħ). Only those paths will be the important ones.”
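Feynman’s argument can be illustrated with a toy model. In the sketch below, each path is labeled by a single number y, and I simply assume its action is S(y) = S₀ + κ·y²/2, so that S is stationary at y = 0. That quadratic form, the values of S₀ and κ, and the tiny ħ are all assumptions of mine, chosen only to show the mechanism: we add the arrows for a bundle of paths around y = 0 and for an equally wide bundle far away from it.

```python
import cmath

hbar = 1.0e-3          # "tiny" compared with the actions below (arbitrary units)
S0, kappa = 10.0, 1.0  # hypothetical action S(y) = S0 + kappa*y^2/2 for path label y

def arrow_sum(y_min, y_max, n=20_000):
    """Add the arrows exp(i*S(y)/hbar) for n equally spaced paths in [y_min, y_max]."""
    dy = (y_max - y_min) / n
    total = 0j
    for i in range(n):
        y = y_min + (i + 0.5) * dy
        total += cmath.exp(1j * (S0 + 0.5 * kappa * y * y) / hbar) * dy
    return total

near = abs(arrow_sum(-0.05, 0.05))   # paths around the stationary point y = 0
far = abs(arrow_sum(0.95, 1.05))     # equally wide bundle far away from it
print(f"near the stationary path: {near:.4f}")
print(f"far from it:              {far:.4f}")
```

Near y = 0 the phase barely changes from one path to the next, so the arrows line up. Far away the phase runs through many full turns within the bundle, and the arrows largely cancel.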

You are now, finally, ready to understand that wonderful animation that’s part of the Wikipedia article on Feynman’s path integral formulation of quantum mechanics. Check it out, and let the author (not me, but a guy who identifies himself as Juan David) know I think it’s great! 🙂

Explaining diffraction

All of the above is nice, but how does it work? What’s the geometry? Let me be somewhat more adventurous here. So we have our formula for the amplitude of a photon to go from one point to another:

The formula is far too simple, if only because it assumes photons always travel at the speed of light. As explained in an older post of mine, a photon also has an amplitude to travel slower or faster than c (I know that sounds crazy, but it is what it is) and a more sophisticated propagator function will acknowledge that and, unsurprisingly, ensure the spacetime intervals that are more light-like make greater contributions to the ‘final arrow’, as Feynman (or his student, Ralph Leighton, I should say) put it in his Strange Theory of Light and Matter. However, then we’d need to use four-vector notation and we don’t want to do that here. The simplified formula above serves the purpose. We can re-write it as:

$$\psi(\mathbf{p}, \mathbf{r}_{12}) = a\,e^{i\theta} = \frac{1}{r_{12}}\,e^{iS/\hbar} = \frac{e^{i\,\mathbf{p}\cdot\mathbf{r}_{12}/\hbar}}{r_{12}}$$

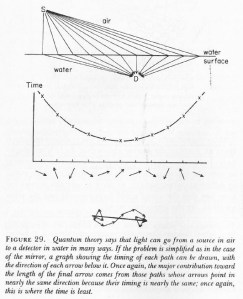

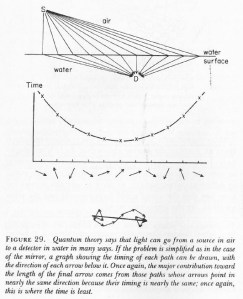

Again, S = **p**∙**r₁₂** is just the amount of action we calculate for the path. Action is energy over some time (1 N·m·s = 1 J·s), or momentum over some distance (1 kg·(m/s)·m = 1 N·(s²/m)·(m/s)·m = 1 N·m·s). For a photon traveling at the speed of light, we have E = p·c, and t = r₁₂/c, so we get a very simple formula for the action: S = E·t = p·r₁₂. Now, we know that, in quantum mechanics, we have to add the amplitudes for the various paths between **r₁** and **r₂** so we get a ‘final arrow’ whose absolute square gives us the probability of… Well… Our photon going from **r₁** to **r₂**. You also know that we don’t really know what actually happens in-between: we know amplitudes interfere, but that’s what we’re modeling when adding the arrows. Let me copy one of Feynman’s famous drawings so we’re sure we know what we’re talking about.

Our simplified approach (the assumption of photons traveling at the speed of light) reduces our least action principle to a least time principle: it is the arrows associated with the path of least time, and with the paths immediately left and right of it, that make the biggest contribution to the final arrow. Why? Think of the stopwatch metaphor: these stopwatches arrive around the same time and, hence, their hands point more or less in the same direction. It doesn’t matter what direction – as long as it’s more or less the same.

Now let me copy the illustrations he uses to explain diffraction. Look at them carefully, and read the explanation below.

When the slit is large, our photon is likely to travel in a straight line. There are many other possible paths – crooked paths – but the amplitudes that are associated with those other paths cancel each other out. In contrast, the straight-line path and, importantly, the nearby paths, are associated with amplitudes that have the same phase, more or less.

However, when the slit is very narrow, there is a problem. As Feynman puts it, “there are not enough arrows to cancel each other out” and, therefore, the crooked paths are also associated with sizable probabilities. Now how does that work, exactly? Not enough arrows? Why? Let’s have a look at it.

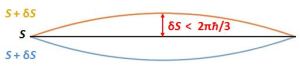

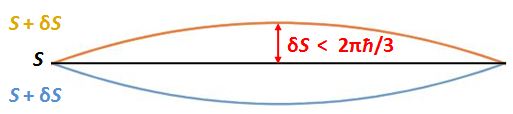

The phase (θ) of our amplitudes a·e^(i·θ) = (1/r₁₂)·e^(i·S/ħ) is the action measured in units of ħ: θ = S/ħ. Hence, we should measure the variation in S in units of ħ. Consider two paths, for example: one for which the action is equal to S, and one for which the action is equal to S + δS = S + π·ħ, so δS = π·ħ. They will cancel each other out:

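$$\frac{e^{iS/\hbar}}{r_{12}} + \frac{e^{i(S+\delta S)/\hbar}}{r_{12}} = \frac{1}{r_{12}}\left(e^{iS/\hbar} + e^{i(S+\pi\hbar)/\hbar}\right)$$

$$= \frac{1}{r_{12}}\left(e^{iS/\hbar} + e^{iS/\hbar}\,e^{i\pi}\right) = \frac{1}{r_{12}}\left(e^{iS/\hbar} - e^{iS/\hbar}\right) = 0$$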

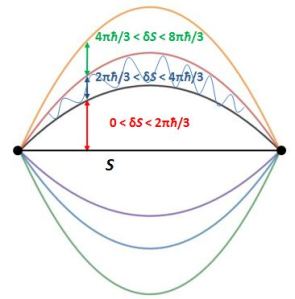

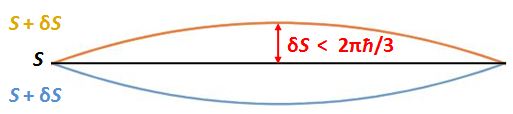

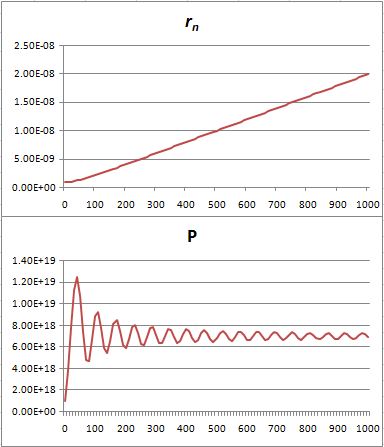

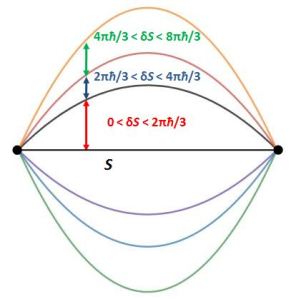

So nearby paths will interfere constructively, so to speak, by making the final arrow larger. In order for that to happen, δS should be smaller than 2πħ/3 ≈ 2ħ, as shown below.

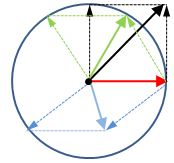

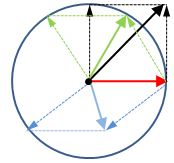

Why? That’s just the way the addition of arrows works. Look at the illustration below: if the red arrow is the amplitude to which we are adding another, any amplitude of the same length whose phase angle differs from it by less than 2π/3 (so δS is smaller than 2πħ/3 ≈ 2ħ) will add something to its length. That’s what the geometry of the situation tells us. [If you have time, you can perhaps find some algebraic proof: let me know the result!]
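For two arrows of equal length, the algebra is actually short. Taking both to be unit arrows, |1 + e^(i·Δθ)|² = (1 + cosΔθ)² + sin²Δθ = 2 + 2·cosΔθ, and that is larger than 1 if, and only if, cosΔθ > −1/2, so |Δθ| < 2π/3. The Python sketch below just checks this numerically. Note the assumption of equal lengths: it ignores the difference in the 1/r₁₂ factor between the two paths.

```python
import cmath
import math

def combined_length(delta_theta):
    """Length of the sum of two unit arrows whose phases differ by delta_theta."""
    return abs(1 + cmath.exp(1j * delta_theta))

threshold = 2 * math.pi / 3   # 120 degrees
for deg in (0, 60, 119, 121, 180):
    d = math.radians(deg)
    closed_form = math.sqrt(2 + 2 * math.cos(d))
    print(f"{deg:3d} deg: |sum| = {combined_length(d):.4f}, closed form = {closed_form:.4f}")
```

Below 120° the combined arrow is longer than either arrow on its own. Above 120° it is shorter, and at 180° the two arrows cancel.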

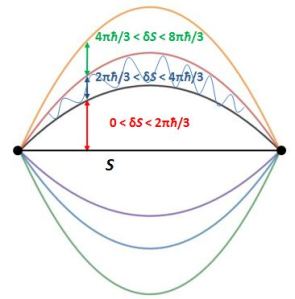

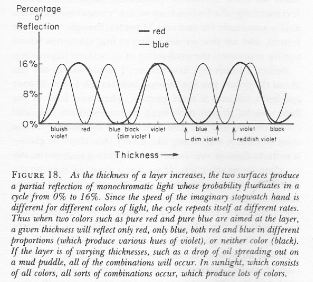

We need to note a few things here. First, unlike what you might think, the amplitudes of the higher and lower path in the drawing do not cancel. On the contrary, the action S is the same, so their magnitudes just add up. Second, if this logic is correct, we will have alternating zones with paths that interfere positively and negatively, as shown below.

Interesting geometry. How relevant are these zones as we move out from the center, steadily increasing δS? I am not quite sure. I’d have to get into the math of it all, which I don’t want to do in a blog like this. What I do want to do is re-examine Feynman’s intuitive explanation of diffraction: when the slit is very narrow, “there are not enough arrows to cancel each other out.”

Huh? What’s that? Can’t we add more paths? It’s a tricky question. We are measuring action in units of ħ, but do we actually think action comes in units of ħ? I am not sure. It would make sense, intuitively, but… Well… There’s uncertainty on the energy (E) and the momentum (p) of our photon, right? And how accurately can we measure the distance? So there’s some randomness everywhere. Having said that, the whole argument does require us to assume action effectively comes in units of ħ: ħ is, effectively, the scaling factor here.

So how can we have more paths? More arrows? I don’t think so. We measure S as energy over some time, or as momentum over some distance, and we express all these quantities in old-fashioned SI units: newton for the force, meter for the distance, and second for the time. If we want smaller arrows, we’ll have to use other units, but then the numerical value for ħ will change too! So… Well… No. I don’t think so. And it’s not because of the normalization rule (all probabilities have to add up to one, so we do have some re-scaling for that). That doesn’t matter, really. What matters is the physics behind the formula, and the formula tells us the physical reality is ħ. So the geometry of the situation is what it is.

Hmm… I guess that, at this point, we should wrap up our rather intuitive discussion here, and resort to the mathematical formalism of Feynman’s path integral formulation, but you can find that elsewhere.

Post scriptum: I said I would show how the Principle of Least Action is relevant to both classical as well as quantum mechanics. Well… Let me quote the Master once more:

“So in the limiting case in which Planck’s constant ħ goes to zero, the correct quantum-mechanical laws can be summarized by simply saying: ‘Forget about all these probability amplitudes. The particle does go on a special path, namely, that one for which S does not vary in the first approximation.’”

So that’s how the Principle of Least Action sort of unifies quantum mechanics as well as classical mechanics. 🙂

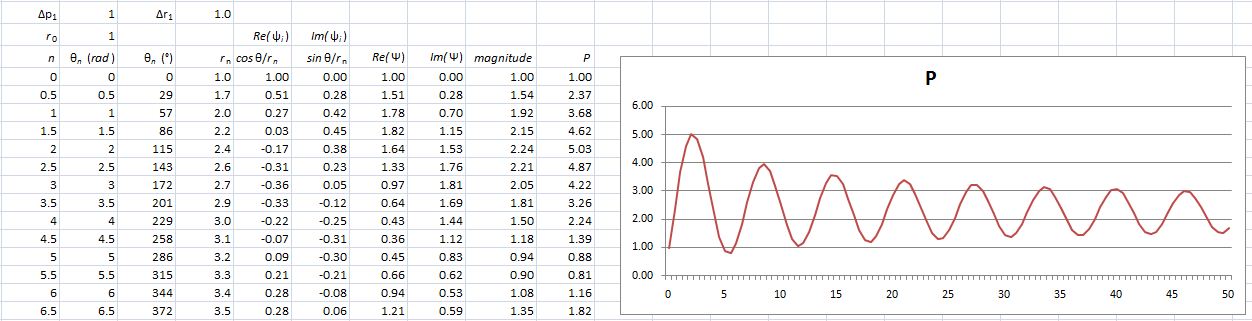

Post scriptum 2: In my next post, I’ll be doing some calculations. They will answer the question as to how relevant those zones of positive and negative interference further away from the straight-line path are. I’ll give a numerical example which shows the 1/r₁₂ factor does its job. 🙂 Just have a look at it. 🙂

Some content on this page was disabled on June 16, 2020 as a result of a DMCA takedown notice from The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/

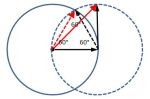

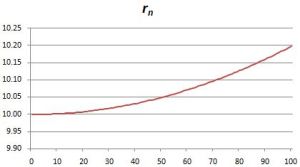

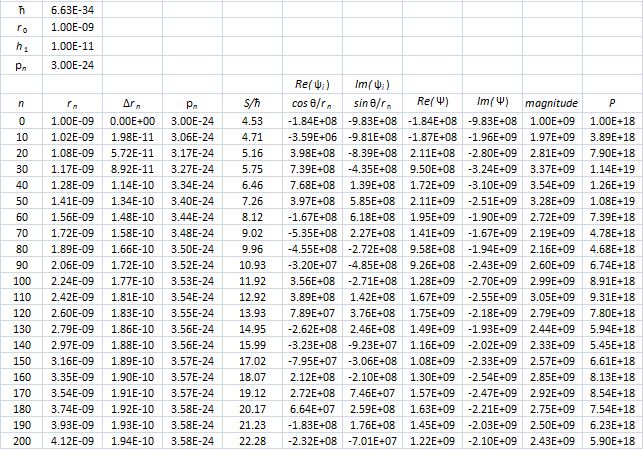

Hence, if S₀ is the action for r₀, then S₁ = S₀ + ħ and S₂ = S₀ + 2·ħ are still good, but S₃ = S₀ + 3·ħ is not good. Why? Because the difference in the phase angles is Δθ = S₁/ħ − S₀/ħ = (S₀ + ħ)/ħ − S₀/ħ = 1 and Δθ = S₂/ħ − S₀/ħ = (S₀ + 2·ħ)/ħ − S₀/ħ = 2 respectively, so that’s 57.3° and 114.6° respectively and that’s, effectively, less than 120°. In contrast, for the next path, we find that Δθ = S₃/ħ − S₀/ħ = (S₀ + 3·ħ)/ħ − S₀/ħ = 3, so that’s 171.9°. So that amplitude gives us a negative contribution.
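These three cases are easily tabulated. The sketch below converts the phase differences of 1, 2 and 3 radians to degrees and adds each arrow to the reference arrow for r₀. It treats all arrows as unit arrows, which is an assumption on my part: it leaves out the 1/r factor.

```python
import cmath
import math

for n in (1, 2, 3):
    delta_theta = float(n)       # (S0 + n*hbar)/hbar - S0/hbar = n radians
    degrees = math.degrees(delta_theta)
    # Reference arrow (phase 0) plus the arrow for path n, both of unit length
    length = abs(1 + cmath.exp(1j * delta_theta))
    verdict = "adds to" if length > 1 else "subtracts from"
    print(f"n = {n}: {degrees:.1f} deg, combined length {length:.3f}, "
          f"{verdict} the reference arrow")
```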

Well… We get a weird result. It reminds me of

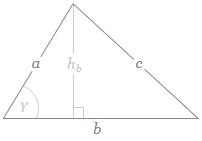

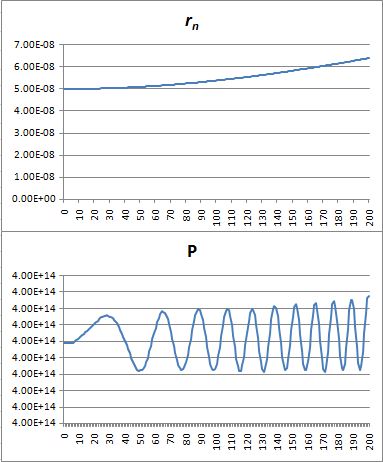

If b is the straight-line path (r₀), then ac could be one of the crooked paths (rₙ). To simplify, we’ll assume isosceles triangles, so a equals c and, hence, rₙ = 2·a = 2·c. We will also assume the successive paths are separated by the same vertical distance (h = h₁) right in the middle, so h_b = hₙ = n·h₁. It is then easy to show the following:

That’s because we increase the weight of the paths that are further removed from the center. So… Well… We shouldn’t be doing that, I guess. 🙂 I’ll let you look for the right formula, OK? Let me know when you found it. 🙂
