Note: I have published a paper that is very coherent and fully explains this so-called God-given number. There is nothing magical about it. It is just a scaling constant. Check it out: The Meaning of the Fine-Structure Constant. No ambiguity. No hocus-pocus.

Jean Louis Van Belle, 23 December 2018

Original post:

I think the post scriptum to my previous post is interesting enough to separate it out as a piece of its own, so let me do that here. You’ll remember that we were trying to find some kind of a model for the electron, picturing it like a tiny little ball of charge, and then we just applied the classical energy formulas to it to see what comes out of it. The key formula is the integral that gives us the energy that goes into assembling a charge. It was the following thing:

U = (1/2)·∫∫ [ρ(1)·ρ(2)/(4πε₀·r₁₂)]·dV₁·dV₂

This is a double integral which we simplified in two stages, so we’re looking at an integral within an integral really, but we can substitute the integral over the ρ(2)·dV₂ product by the formula we got for the potential, so we write that as Φ(1), and so the integral above becomes:

U = (1/2)·∫ ρ(1)·Φ(1)·dV₁

Now, this integral integrates the ρ(1)·Φ(1)·dV₁ product over all of space, so that’s over all points in space, and so we just dropped the index and wrote the whole thing as the integral of ρ·Φ·dV over all of space:

U = (1/2)·∫ ρ·Φ·dV

We then established that this integral was mathematically equivalent to the following equation:

U = (ε₀/2)·∫ E•E·dV

So this integral is actually quite simple: it just integrates E•E = E² over all of space. The illustration below shows E as a function of the distance r for a sphere of radius R filled uniformly with charge.

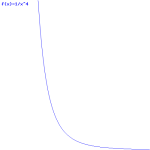

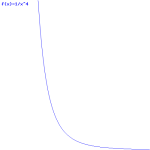

So the field (E) goes as r for r ≤ R and as 1/r² for r ≥ R. So, for r ≥ R, the integral will have (1/r²)² = 1/r⁴ in it. Now, you know that the integral of some function is the area under the graph of that function. Look at the 1/r⁴ function below: it blows up between 1 and 0. That’s where the problem is: there needs to be some kind of cut-off, because that integral will effectively blow up when the radius of our little sphere of charge gets ‘too small’. So that makes it clear why it doesn’t make sense to use this formula to try to calculate the energy of a point charge.

In fact, the need for a ‘cut-off factor’ so as to ensure our energy function doesn’t ‘blow up’ is not because of the exponent in the 1/r⁴ expression: the need is also there for any 1/r relation. All 1/rⁿ functions have the same pivot point, as you can see from the simple illustration below. So, yes, we cannot go all the way to zero from there when integrating: we have to stop somewhere.
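To make the ‘blow-up’ concrete, here is a minimal Python sketch. It evaluates the integral of 1/rⁿ from a cut-off ε up to 1 in closed form, and shows that the result grows without bound as ε shrinks, for n = 1 just as for n = 4. The function name `cutoff_integral` and the choice of 1 as the upper limit are illustrative assumptions, not something taken from the calculation above.

```python
import math


def cutoff_integral(n: int, eps: float) -> float:
    """Closed-form integral of r**(-n) from eps to 1, for integer n >= 1."""
    if n == 1:
        # The antiderivative of 1/r is ln(r).
        return -math.log(eps)
    # The antiderivative of r**(-n) is r**(1 - n) / (1 - n) for n > 1.
    return (eps ** (1 - n) - 1.0) / (n - 1)


if __name__ == "__main__":
    for n in (1, 2, 4):
        # Shrinking the cut-off makes every one of these integrals larger.
        values = [cutoff_integral(n, eps) for eps in (1e-1, 1e-2, 1e-3)]
        print(f"n = {n}: {values}")
```

The n = 1 case only grows logarithmically, but it still has no finite limit, which is the point made above: we have to stop somewhere.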

So what’s the ‘cut-off point’? What’s ‘too small’ a radius? Let’s look at the formula we got for our electron as a shell of charge (so the assumption here is that the charge is uniformly distributed on the surface of a sphere with radius a):

Uelect = (1/2)·e²/a

So we’ve got an even simpler formula here: it’s just a 1/r relation (a is r in this formula), not 1/r⁴. Why is that? Well… It’s just the way the math turns out: we’re integrating over volumes and so that involves an r³ factor and so it all simplifies to 1/r, and so that gives us this simple inversely proportional relationship between U and r, i.e. a, in this case. 🙂 I copied the detail of Feynman’s calculation in my previous post, so you can double-check it. It’s quite wonderful, really. Look at it again: we have a very simple inversely proportional relationship between the radius of our electron and its energy as a sphere of charge. We could write it as:

Uelect = α/a, with α = e²/2

Still… We need the ‘cut-off point’. Also note that, as I pointed out, we don’t necessarily need to assume that the charge in our little ball of charge (i.e. our electron) sits on the surface only: if we’d assume it’s a uniformly charged sphere of charge, we’d just get another constant of proportionality: our 1/2 factor would become a 3/5 factor, so we’d write: Uelect = (3/5)·e²/a. But we’re not interested in finding the right model here. We know that Uelect = (3/5)·e²/a gives us a value for a that is 3/5 of the classical electron radius, so it is off by a 2/5 factor. That’s not so bad and so let’s go along with it. 🙂
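The 1/2 and 3/5 factors can be checked numerically. The sketch below integrates the field energy density over all of space for a shell and for a uniformly charged sphere, working in units where e² = 1, so the energy reduces to (1/2)·∫ f(r)²·r²·dr with f the radial profile of the field. The function name `field_energy`, the midpoint rule and the u = 1/r substitution for the outer region are my own choices for this illustration.

```python
def field_energy(a: float, shell: bool, n: int = 20_000) -> float:
    """Field energy, in units of e², of a charge of radius a.

    shell=True  : all charge on the surface (no field inside).
    shell=False : charge spread uniformly through the sphere.
    """
    inside = 0.0
    if not shell:
        # Midpoint rule on [0, a]; inside a uniform sphere the field grows as r/a³.
        h = a / n
        for i in range(n):
            r = (i + 0.5) * h
            field = r / a**3
            inside += 0.5 * field**2 * r**2 * h

    # Outside the field goes as 1/r². Substituting u = 1/r maps [a, ∞) onto (0, 1/a],
    # with dr = r²·du, so the infinite range becomes a finite one.
    outside = 0.0
    h = (1.0 / a) / n
    for i in range(n):
        u = (i + 0.5) * h
        r = 1.0 / u
        field = 1.0 / r**2
        outside += 0.5 * field**2 * r**2 * (r**2 * h)

    return inside + outside


if __name__ == "__main__":
    for a in (1.0, 0.5, 0.1):
        print(a, field_energy(a, shell=True) * a, field_energy(a, shell=False) * a)
```

Multiplying the result by a gives the constant of proportionality, which should come out close to 1/2 for the shell and 3/5 for the uniform sphere, whatever radius we pick.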

We’re going to look at the simple structure of this relation, and all of its implications. The simple equation above says that the energy of our electron is (a) proportional to the square of its charge and (b) inversely proportional to its radius. Now, that is a very remarkable result. In fact, we’ve seen something like this before, and we were astonished. We saw it when we were discussing the wonderful properties of that magical number, the fine-structure constant, which we also denoted by α. However, because we used α already, I’ll denote the fine-structure constant as αe here, so you don’t get confused. You’ll remember that the fine-structure constant is a God-like number indeed: it links all of the fundamental properties of the electron, i.e. its charge, its radius, its distance to the nucleus (i.e. the Bohr radius), its velocity, its mass (and, hence, its energy), and its de Broglie wavelength. Whatever: all these physical constants are related through the fine-structure constant.

In my various posts on this topic, I’ve repeatedly said that, but I never showed why it’s true, and so it was a very magical number indeed. I am going to take some of the magic out now. Not too much but… Well… You can judge for yourself how much of the magic remains after I am done here. 🙂

So, at this stage of the argument, α can be anything, and αe cannot, of course. It’s just that magical number out there, which relates everything to everything: it’s the God-given number we don’t understand, or didn’t understand, I should say. Past tense. Indeed, we’re going to get some understanding here because we know that one of the many expressions involving αe was the following one:

me = αe/re

This says that the mass of the electron is equal to the ratio of the fine-structure constant and the electron radius. [Note that we express everything in natural units here, so that’s Planck units. For the detail of the conversion, please see the relevant section on that in one of my posts on this and other stuff.] In fact, the U = (3/5)·e²/a and me = αe/re relations look exactly the same, because one of the other equations involving the fine-structure constant was: αe = eP². So we’ve got the square of the charge here as well! Indeed, as I’ll explain in a moment, the difference between the two formulas is just a matter of units.

Now, mass is equivalent to energy, of course: it’s just a matter of units, so we can equate me with Ee (this amounts to expressing the energy of the electron in a kg unit—bit weird, but OK) and so we get:

Ee = αe/re

So there we have: the fine-structure constant αe is Nature’s ‘cut-off’ factor, so to speak. Why? Only God knows. 🙂 But it’s now (fairly) easy to see why all the relations involving αe are what they are. As I mentioned already, we also know that αe is the square of the electron charge expressed in Planck units, so we have:

αe = eP² and, therefore, Ee = eP²/re

Now, you can check for yourself: it’s just a matter of re-expressing everything in standard SI units, and relating eP² to e², and it should all work: you should get an expression of the Eelect ∼ e²/a form, just like the (3/5)·e²/a expression above. So… Well… At least this takes some of the magic out of the fine-structure constant. It’s still a wonderful thing, but so you see that the fundamental relationship between (a) the energy (and, hence, the mass), (b) the radius and (c) the charge of an electron is not something God-given. What’s God-given are Maxwell’s equations, and so the Ee = αe/re = eP²/re is just one of the many wonderful things that you can get out of them.

So we found God’s ‘cut-off factor’ 🙂 It’s equal to αe ≈ 0.0073 = 7.3×10⁻³. So 7.3 thousandths of… What? Well… Nothing. It’s just a pure number relating the energy and the radius of an electron (if both are expressed in Planck units, of course). And so it determines the electron charge (again, expressed in Planck units). Indeed, we write:

eP = √αe

Really? Yes. Just do all these formulas:

eP = √αe ≈ √0.0073 ≈ 0.085 Planck charges, so the electron charge is about 0.085·(1.9×10⁻¹⁸ coulomb) ≈ 1.6×10⁻¹⁹ C

Just re-check it with all the known decimals: you’ll see it’s bang on. Let’s look at the Ee = me = αe/re ratio once again. What’s the meaning of it? Let’s first calculate the value of re and me, i.e. the electron radius and electron mass expressed in Planck units. It’s equal to the classical electron radius divided by the Planck length, and then the same for the mass, so we get the following thing:

re ≈ (2.81794×10⁻¹⁵ m)/(1.6162×10⁻³⁵ m) = 1.7435×10²⁰

me ≈ (9.1×10⁻³¹ kg)/(2.17651×10⁻⁸ kg) = 4.18×10⁻²³

αe = (4.18×10⁻²³)·(1.7435×10²⁰) ≈ 0.0073
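If you want to re-do this arithmetic with more decimals, a few lines of Python will do. The sketch below is just the calculation above written out: it expresses the electron mass and the classical electron radius in Planck units, multiplies them, and then recovers the electron charge from the square root. The constants are rounded CODATA-style values that I typed in by hand, and the function names are made up for this illustration.

```python
import math

# Rounded CODATA-style values, entered by hand for this sketch.
ELECTRON_MASS_KG = 9.1093837e-31
CLASSICAL_ELECTRON_RADIUS_M = 2.8179403e-15
PLANCK_MASS_KG = 2.176434e-8
PLANCK_LENGTH_M = 1.616255e-35
PLANCK_CHARGE_C = 1.875546e-18


def alpha_from_planck_units() -> float:
    """Electron mass times electron radius, both expressed in Planck units."""
    mass_in_planck_units = ELECTRON_MASS_KG / PLANCK_MASS_KG
    radius_in_planck_units = CLASSICAL_ELECTRON_RADIUS_M / PLANCK_LENGTH_M
    return mass_in_planck_units * radius_in_planck_units


def electron_charge_coulomb(alpha: float) -> float:
    """Electron charge as the square root of alpha times the Planck charge."""
    return math.sqrt(alpha) * PLANCK_CHARGE_C


if __name__ == "__main__":
    alpha = alpha_from_planck_units()
    print("alpha           :", alpha)
    print("electron charge :", electron_charge_coulomb(alpha), "C")
```

Because the inputs are rounded, the last few decimals of the output should not be taken too seriously.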

It works like a charm, but what does it mean? Well… It’s just a ratio between two physical quantities, and the scale you use to measure those quantities matters very much. We’ve explained that the Planck mass is a rather large unit at the atomic scale and, therefore, it’s perhaps not quite appropriate to use it here. In fact, out of the many interesting expressions for αe, I should highlight the following one:

αe = e²/(ħ·c) ≈ (1.60217662×10⁻¹⁹ C)²/(4πε₀·[(1.054572×10⁻³⁴ N·m·s)·(2.998×10⁸ m/s)]) ≈ 0.0073 once more 🙂

Note that e² is actually shorthand for qe²/4πε₀ here, with qe the elementary charge in coulomb, which is what I am using in the formula. I know that’s confusing, but it is what it is. As for the units, it’s a bit tedious to write it all out, but you’ll get there. Note that ε₀ ≈ 8.8542×10⁻¹² C²/(N·m²) so… Well… All the units do cancel out, and we get a dimensionless number indeed, which is what αe is.
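The same formula in SI units is easy to evaluate in Python. This sketch first forms e² = qe²/4πε₀, which comes out in J·m, and then divides by ħ·c. The constants are CODATA-style values typed in by hand and the function name is an assumption of mine.

```python
import math

# CODATA-style SI values, entered by hand for this sketch.
ELEMENTARY_CHARGE_C = 1.602176634e-19
EPSILON_0 = 8.8541878128e-12      # C²/(N·m²)
HBAR = 1.054571817e-34            # J·s
SPEED_OF_LIGHT = 299_792_458.0    # m/s


def fine_structure_constant() -> float:
    """alpha = e²/(ħ·c), with e² shorthand for qe²/(4πε₀)."""
    e_squared = ELEMENTARY_CHARGE_C**2 / (4.0 * math.pi * EPSILON_0)  # J·m
    return e_squared / (HBAR * SPEED_OF_LIGHT)


if __name__ == "__main__":
    alpha = fine_structure_constant()
    print("alpha   :", alpha)
    print("1/alpha :", 1.0 / alpha)
```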

The point is: this expression links αe to the de Broglie relation (p = h/λ), with λ the wavelength that’s associated with the electron. Of course, because of the Uncertainty Principle, we know we’re talking some wavelength range really, so we should write the de Broglie relation as Δp = h·Δ(1/λ). Now, that, in turn, allows us to try to work out the Bohr radius, which is the other ‘dimension’ we associate with an electron. Of course, now you’ll say: why would you do that? Why would you bring in the de Broglie relation here?

Well… We’re talking energy, and so we have the Planck-Einstein relation first: the energy of some particle can always be written as the product of h and some frequency f: E = h·f. The only thing that the de Broglie relation adds is the Uncertainty Principle indeed: the frequency f will be some frequency range, associated with some momentum range, and so that’s what the Uncertainty Principle really says. I can’t dwell too much on that here, because otherwise this post would become a book. 🙂 For more detail, you can check out one of my many posts on the Uncertainty Principle. In fact, the one I am referring to here has Feynman’s calculation of the Bohr radius, so I warmly recommend you check it out. The thrust of the argument is as follows:

- If we assume that (a) an electron takes some space – which I’ll denote by r 🙂 – and (b) that it has some momentum p because of its mass m and its velocity v, then the ΔxΔp = ħ relation (i.e. the Uncertainty Principle in its roughest form) suggests that the order of magnitude of r and p should be related in the very same way. Hence, let’s just boldly write r ≈ ħ/p and see what we can do with that.

- We know that the kinetic energy of our electron equals mv²/2, which we can write as p²/2m so we get rid of the velocity factor. Well… Substituting our p ≈ ħ/r conjecture, we get K.E. = ħ²/2mr². So that’s a formula for the kinetic energy. Next is potential.

- The formula for the potential energy is U = q₁q₂/4πε₀r₁₂. Now, we’re actually talking about the size of an atom here, so one charge is the proton (+e) and the other is the electron (–e), so the potential energy is U = P.E. = –e²/4πε₀r, with r the ‘distance’ between the proton and the electron, so that’s the Bohr radius we’re looking for!

- We can now write the total energy (which I’ll denote by E, but don’t confuse it with the electric field vector!) as E = K.E. + P.E. = ħ²/2mr² – e²/4πε₀r. Now, the electron (whatever it is) is, obviously, in some kind of equilibrium state. Why is that obvious? Well… Otherwise our hydrogen atom wouldn’t or couldn’t exist. 🙂 Hence, it’s in some kind of energy ‘well’ indeed, at the bottom. Such an equilibrium point ‘at the bottom’ is characterized by its derivative (with respect to whatever variable) being equal to zero. Now, the only ‘variable’ here is r (all the other symbols are physical constants), so we have to solve for dE/dr = 0. Writing it all out yields: dE/dr = –ħ²/mr³ + e²/4πε₀r² = 0 ⇔ r = 4πε₀ħ²/me²

- We can now put the values in: r = 4πε₀ħ²/me² = [(1/(9×10⁹) C²/N·m²)·(1.055×10⁻³⁴ J·s)²]/[(9.1×10⁻³¹ kg)·(1.6×10⁻¹⁹ C)²] = 53×10⁻¹² m = 53 picometer (pm)
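The steps above can be sketched in Python. The code below writes the total energy as a function of r, evaluates the closed-form radius that makes dE/dr zero, and lets you confirm that the energy is indeed lower there than slightly to either side. The constants are CODATA-style values typed in by hand, and the function names are hypothetical.

```python
import math

# CODATA-style SI values, entered by hand for this sketch.
HBAR = 1.054571817e-34            # J·s
ELECTRON_MASS_KG = 9.1093837015e-31
ELEMENTARY_CHARGE_C = 1.602176634e-19
EPSILON_0 = 8.8541878128e-12      # C²/(N·m²)


def total_energy(r: float) -> float:
    """E(r) = ħ²/(2mr²) - e²/(4πε₀r), in joule, using the p ≈ ħ/r conjecture."""
    kinetic = HBAR**2 / (2.0 * ELECTRON_MASS_KG * r**2)
    potential = -ELEMENTARY_CHARGE_C**2 / (4.0 * math.pi * EPSILON_0 * r)
    return kinetic + potential


def bohr_radius() -> float:
    """Radius at which dE/dr = 0: r = 4πε₀ħ²/(m·e²), in meter."""
    return 4.0 * math.pi * EPSILON_0 * HBAR**2 / (
        ELECTRON_MASS_KG * ELEMENTARY_CHARGE_C**2
    )


if __name__ == "__main__":
    r0 = bohr_radius()
    print("Bohr radius (pm)       :", r0 * 1e12)
    print("Energy at minimum (eV) :", total_energy(r0) / ELEMENTARY_CHARGE_C)
    # The energy is higher a little to the left and to the right of r0.
    print(total_energy(0.99 * r0) > total_energy(r0) < total_energy(1.01 * r0))
```

The energy at the minimum comes out at about –13.6 eV, which is the ionization energy of hydrogen with a minus sign in front, so this rough argument lands on the right order of magnitude for the energy as well as for the radius.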

Done. We’re right on the spot. The Bohr radius is, effectively, about 53 trillionths of a meter indeed!

Phew!

Yes… I know… Relax. We’re almost done. You should now be able to figure out why the classical electron radius and the Bohr radius can also be related to each other through the fine-structure constant. We write:

me = α/re = α/(α²·r) = 1/(α·r)

So we get that α/re = 1/αr and, therefore, we get re/r = α², which explains why α is also equal to the so-called junction number, or the coupling constant, for an electron-photon coupling (see my post on the quantum-mechanical aspects of the photon-electron interaction). It gives a physical meaning to the probability (which, as you know, is the absolute square of the probability amplitude) in terms of the chance of a photon actually ‘hitting’ the electron as it goes through the atom. Indeed, the ratio of the Thomson scattering cross-section and the Bohr size of the atom should be of the same order as re/r, and so that’s α².

[Note: To be fully correct and complete, I should add that the coupling constant itself is not α² but √α = eP. Why do we have this square root? You’re right: the fact that the probability is the absolute square of the amplitude explains one square root (√(α²) = α), but not two. The thing is: the photon-electron interaction consists of two things. First, the electron sort of ‘absorbs’ the photon, and then it emits another one, that has the same or a different frequency depending on whether or not the ‘collision’ was elastic. So if we denote the coupling constant as j, then the whole interaction will have a probability amplitude equal to j². In fact, the value which Feynman uses for j in his wonderful popular presentation of quantum mechanics (QED: The Strange Theory of Light and Matter) is about −0.0854, i.e. −√α, so j² = α ≈ 0.0073. I am not quite sure why the minus sign is there. It must be something with the angles involved (the emitted photon will not be following the trajectory of the incoming photon) or, else, with the special arithmetic involved in boson-fermion interactions (we add amplitudes when bosons are involved, but subtract amplitudes when it’s fermions interacting). I’ll probably find out once I am through Feynman’s third volume of Lectures, which focuses on quantum mechanics only.]

Finally, the last bit of unexplained ‘magic’ in the fine-structure constant is that the fine-structure constant (which I’ve started to write as α again, instead of αe) also gives us the (classical) relative speed of an electron, so that’s its speed as it orbits around the nucleus (according to the classical theory, that is), so we write

α = v/c = β

I should go through the motions here – I’ll probably do so in the coming days – but you can see we must be able to get it out somehow from all that we wrote above. See how powerful our Uelect ∼ e²/a relation really is? It links the electron’s charge, its radius and its energy, and it’s all we need to get all the rest out of it: its mass, its momentum, its speed and – through the Uncertainty Principle – the Bohr radius, which is the size of the atom.
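While the full derivation is left for another day, both relations are easy to check with numbers. The sketch below computes the ratio of the classical electron radius to the Bohr radius and compares it with α², and then uses the p ≈ ħ/r conjecture (so v = ħ/(m·r), with r the Bohr radius) to get the speed as a fraction of c. The constants are CODATA-style values typed in by hand; the function names are made up for the occasion.

```python
# CODATA-style SI values, entered by hand for this sketch.
ALPHA = 0.0072973525693
CLASSICAL_ELECTRON_RADIUS_M = 2.8179403262e-15
BOHR_RADIUS_M = 5.29177210903e-11
HBAR = 1.054571817e-34            # J·s
ELECTRON_MASS_KG = 9.1093837015e-31
SPEED_OF_LIGHT = 299_792_458.0    # m/s


def radius_ratio() -> float:
    """Classical electron radius divided by the Bohr radius (expected: alpha²)."""
    return CLASSICAL_ELECTRON_RADIUS_M / BOHR_RADIUS_M


def orbital_speed_over_c() -> float:
    """v/c from p = m·v ≈ ħ/r, with r the Bohr radius (expected: alpha)."""
    speed = HBAR / (ELECTRON_MASS_KG * BOHR_RADIUS_M)
    return speed / SPEED_OF_LIGHT


if __name__ == "__main__":
    print("re/r    :", radius_ratio(), " alpha² :", ALPHA**2)
    print("v/c     :", orbital_speed_over_c(), " alpha  :", ALPHA)
```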

We’ve come a long way. This is truly a milestone. We’ve taken the magic out of God’s number—to some extent at least. 🙂

You’ll have one last question, of course: if proportionality constants are all about the scale in which we measure the physical quantities on either side of an equation, is there some way the fine-structure constant would come out differently? That’s the same as asking: what if we’d measure energy in units that are equivalent to the energy of an electron, and the radius of our electron just as… Well… What if we’d equate our unit of distance with the radius of the electron, so we’d write re = 1? What would happen to α? Well… I’ll let you figure that one out yourself. I am tired and so I should go to bed now. 🙂

[…] OK. OK. Let me tell you. It’s not that simple here. All those relationships involving α, in one form or the other, are very deep. They relate a lot of stuff to a lot of stuff, and we can appreciate that only when doing a dimensional analysis. A dimensional analysis of the Ee = αe/re = eP²/re relation yields [eP²/re] = C²/m on the right-hand side and [Ee] = J = N·m on the left-hand side. How can we reconcile both? The coulomb is an SI base unit, so we can’t ‘translate’ it into something with N and m. [To be fully correct, for some reason, the ampère (i.e. coulomb per second) was chosen as an SI base unit, but they’re interchangeable in regard to their place in the international system of units: they can’t be reduced.] So we’ve got a problem. Yes. That’s where we sort of ‘smuggled’ the 4πε₀ factor in when doing our calculations above. That ε₀ constant is, obviously, not ‘as fundamental’ as c or α (just think of the c⁻² = ε₀μ₀ relationship to understand what I mean here) but, still, it was necessary to make the dimensions come out alright: we need the reciprocal dimension of ε₀, i.e. (N·m²)/C², to make the dimensional analysis work. We get: (C²/m)·(N·m²)/C² = N·m = J, i.e. joule, so that’s the unit in which we measure energy or – using the E = mc² equivalence – mass, which is the aspect of energy emphasizing its inertia.
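The bookkeeping in this dimensional analysis can be mimicked with a toy Python sketch. It represents a unit as a dictionary of exponents and multiplies units by adding the exponents, which is all a dimensional analysis really does. The representation and the names below are my own, purely for illustration.

```python
from collections import Counter


def multiply_units(*units: dict) -> dict:
    """Multiply units given as {symbol: exponent} maps by adding exponents."""
    total = Counter()
    for unit in units:
        for symbol, power in unit.items():
            total[symbol] += power
    # Drop symbols whose exponents have cancelled out.
    return {symbol: power for symbol, power in total.items() if power != 0}


CHARGE_SQUARED_PER_METER = {"C": 2, "m": -1}     # dimension of e²/r with e in coulomb
INVERSE_EPSILON_0 = {"N": 1, "m": 2, "C": -2}    # dimension of 1/ε₀
JOULE = {"N": 1, "m": 1}

if __name__ == "__main__":
    result = multiply_units(CHARGE_SQUARED_PER_METER, INVERSE_EPSILON_0)
    print(result, result == JOULE)
```

The coulombs cancel and what is left is N·m, i.e. joule, as in the calculation above.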

So the answer is: no. Changing units won’t change alpha. So all that’s left is to play with it now. Let’s try to do that. Let me first plot that Ee = me = αe/re = 0.00729735256/re:

Unsurprisingly, we find the pivot point of this curve is at the intersection of the diagonal and the curve itself, so that’s at the (√αe, √αe) ≈ (0.0854, 0.0854) point, where slopes are ± 1, i.e. plus or minus unity. What does this show? Nothing much. What? I can hear you: I should be excited because… Well… Yes! Think of it. If you would have to choose a cut-off point, you’d choose this one, wouldn’t you? 🙂 Sure, you’re right. How exciting! Let me show you. Look at it! It proves that God thinks in terms of logarithms. He has chosen α such that ln(E) = ln(α/r) = lnα – lnr = 0, so lnα = lnr and, therefore, α = r. 🙂

Huh? Excuse me?

I am sorry. […] Well… I am not, of course… 🙂 I just wanted to illustrate the kind of exercise some people are tempted to do. It’s no use. The fine-structure constant is what it is: it sort of summarizes an awful lot of formulas. It basically shows what Maxwell’s equations imply in terms of the structure of an atom defined as a negative charge orbiting around some positive charge. It shows we can calculate everything as a function of something else, and that’s what the fine-structure constant tells us: it relates everything to everything. However, when everything is said and done, the fine-structure constant shows us two things:

- Maxwell’s equations are complete: we can construct a complete model of the electron and the atom, which includes: the electron’s energy and mass, its velocity, its own radius, and the radius of the atom. [I might have forgotten one of the dimensions here, but you’ll add it. :-)]

- God doesn’t want our equations to blow up. Our equations are all correct but, in reality, there’s a cut-off factor that ensures we don’t go to the limit with them.

So the fine-structure constant anchors our world, so to speak. In other words: of all the worlds that are possible, we live in this one.

[…] It’s pretty good as far as I am concerned. Isn’t it amazing that our mind is able to just grasp things like that? I know my approach here is pretty intuitive, and with ‘intuitive’, I mean ‘not scientific’ here. 🙂 Frankly, I don’t like the talk about physicists “looking into God’s mind.” I don’t think that’s what they’re trying to do. I think they’re just trying to understand the fundamental unity behind it all. And that’s religion enough for me. 🙂

So… What’s the conclusion? Nothing much. We’ve sort of concluded our description of the classical world… Well… Of its ‘electromagnetic sector’ at least. 🙂 That sector can be summarized in Maxwell’s equations, which describe an infinite world of possible worlds. However, God fixed three constants: h, c and α. So we live in a world that’s defined by this Trinity of fundamental physical constants. Why is it not two, or four?

My gut instinct tells me it’s because we live in three dimensions, and so there’s three degrees of freedom really. But what about time? Time is the fourth dimension, isn’t it? Yes. But time is symmetric in the ‘electromagnetic’ sector: we can reverse the arrow of time in our equations and everything still works. The arrow of time involves other theories: statistics (physicists refer to it as ‘statistical mechanics’) and the ‘weak force’ sector, which I discussed when talking about symmetries in physics. So… Well… We’re not done. God gave us plenty of other stuff to try to understand. 🙂