OK. No escape. It’s part of physics. I am not going to go into the nitty-gritty of it all (because this is a blog about physics, not about engineering) but it’s good to review the basics, which are, essentially, Kirchhoff’s rules. Just for the record, Gustav Kirchhoff was a German genius who formulated these circuit laws while he was still a student, when he was like 20 years old or so. He did it as a seminar exercise 170 years ago, and then turned it into his doctoral dissertation. Makes me think of that Dire Straits song: ‘That’s the way you do it… Them guys ain’t dumb.’ 🙂

So this post is, in essence, just an ‘explanation’ of Feynman’s presentation of Kirchhoff’s rules, and I am writing it basically for myself, so as to ensure I am not missing anything. To be frank, Feynman’s use of notation when working with complex numbers is confusing at times and so, yes, I’ll do some ‘re-writing’ here. The nice thing about Feynman’s presentation of electrical circuits is that he sticks to Maxwell’s Laws when describing all ideal circuit elements: he keeps using line integrals of the electric field E around closed paths (that’s what a circuit is, indeed) to describe the so-called passive circuit elements, and he also recapitulates the idea of the electromotive force when discussing the so-called active circuit element, i.e. the generator. That’s nice, because it links it all with what we’ve learned so far, i.e. the fundamentals as expressed in Maxwell’s set of equations. Having said that, I won’t make that link here in this post, because I feel it makes the whole approach rather heavy.

OK. Let’s go for it. Let’s first recall the concept of impedance.

The impedance concept

There are three ideal (passive) circuit elements: the resistor, the capacitor and the inductor. Real circuit elements usually combine characteristics of all of them, even if they are designed to work like ideal circuit elements. Collectively, these ideal (passive) circuit elements are referred to as impedances, because… Well… Because they have some impedance. In fact, you should note that, if we reserve the terms ending in -ance for the property of the circuit elements, and those ending in -or for the objects themselves, then we should call them impedors. However, that term does not seem to have caught on.

You already know what impedance is. I explained it before, notably in my post on the intricacies related to self- and mutual inductance. Impedance basically extends the concept of resistance, as we know it from direct current (DC) circuits, to alternating current (AC) circuits. To put it simply, when AC currents are involved – so when the flow of charge periodically reverses direction – then it’s likely that, because of the properties of the circuit, the current signal will lag (or lead) the voltage signal, and so we’ll have some phase difference telling us by how much. So, resistance is just a simple real number R – it’s the ratio between (1) the voltage that is being applied across the resistor and (2) the current through it, so we write R = V/I – and it’s got a magnitude only, but impedance is a ‘number’ that has both a magnitude and a phase, so it’s a complex number, or a vector.

In engineering, such ‘numbers’ with a magnitude as well as a phase are referred to as phasors. A phasor represents voltages, currents and impedances as a ***pha***se vect***or*** (note the bold italics: they explain how we got the pha-sor term). It’s just a rotating vector really. So a phasor has a varying amplitude (A) and phase (φ), which are determined by (1) some maximum magnitude $A_0$, (2) some angular frequency ω and (3) some initial phase (θ). So we can write the amplitude A as:

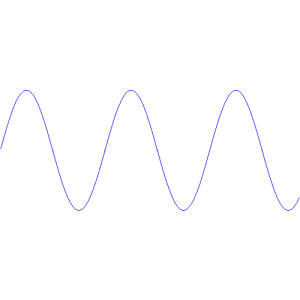

$$A = A(\varphi) = A_0\cos\varphi = A_0\cos(\omega t + \theta)$$

As usual, Wikipedia has a nice animation for it:

In case you wonder why I am using a cosine rather than a sine function, the answer is that it doesn’t matter: the sine and the cosine are the same function except for a π/2 phase difference: just rotate the animation above by 90 degrees, or think about the formula: sinφ = cos(φ−π/2). 🙂

So A = A0·cos(ωt + θ) is the amplitude. It could be the voltage, or the current, or whatever real variable. The phase vector itself is represented by a complex number, i.e. a two-dimensional number, so to speak, which we can write as all of the following:

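$$\mathbf{A} = A_0 e^{i\varphi} = A_0\cos\varphi + i\,A_0\sin\varphi = A_0\cos(\omega t+\theta) + i\,A_0\sin(\omega t+\theta)$$

$$= A_0 e^{i(\omega t+\theta)} = A_0 e^{i\theta}\,e^{i\omega t} = \mathbf{A}_0\,e^{i\omega t}, \quad \text{with } \mathbf{A}_0 = A_0 e^{i\theta}$$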

That’s just Euler’s formula, and I am afraid I have to refer you to my page on the essentials if you don’t get this. I know what you are thinking: why do we need the vector notation? Why can’t we just be happy with the A = A0·cos(ωt+θ) formula? The truthful answer is: it’s just to simplify calculations: it’s easier to work with exponentials than with cosines or sines. For example, writing $e^{i(\omega t+\theta)} = e^{i\theta}\,e^{i\omega t}$ is easier than writing cos(ωt + θ) = … […] Well? […] Hmm… 🙂

See! You’re stuck already. You’d have to use the cos(α+β) = cosα·cosβ − sinα·sinβ formula: you’d get the same results (just do it for the simple calculation of the impedance below) but it takes a lot more time, and it’s easier to make mistakes. Having said why complex number notation is great, I also need to warn you. There are a few things you have to watch out for. One of these things is notation. The other is the kind of mathematical operations we can do: it’s usually alright but we need to watch out with the $i^2 = -1$ thing when multiplying complex numbers. However, I won’t talk about that here because it would only confuse you even more. 🙂
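If you want to convince yourself that both routes lead to the same place, here is a minimal Python check. The values of A₀, ω and θ are arbitrary assumptions, chosen only for illustration: the real part of $A_0 e^{i\theta}e^{i\omega t}$ should match $A_0\cos(\omega t+\theta)$ as worked out with the cos(α+β) formula.

```python
import cmath
import math

# Arbitrary illustrative values (assumptions): amplitude, angular frequency, initial phase
A0, omega, theta = 2.0, 3.0, 0.5

def via_exponentials(t):
    # e^{i(wt + theta)} = e^{i theta} * e^{i wt}: with exponentials the phases simply add
    return A0 * cmath.exp(1j * theta) * cmath.exp(1j * omega * t)

def via_cosines(t):
    # cos(a + b) = cos a cos b - sin a sin b, worked out by hand
    a, b = omega * t, theta
    return A0 * (math.cos(a) * math.cos(b) - math.sin(a) * math.sin(b))

for t in (0.0, 0.7, 1.9):
    # The real part of the phasor is the real signal A0*cos(wt + theta)
    assert math.isclose(via_exponentials(t).real, via_cosines(t), abs_tol=1e-12)
    assert math.isclose(via_cosines(t), A0 * math.cos(omega * t + theta), abs_tol=1e-12)
```

Note that the magnitude of the phasor stays equal to A₀ at all times: only its real projection goes up and down.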

Just for the notation, let me note that Feynman would write $\mathbf{A}_0$ as $A_0$ with the little hat or caret symbol (∧) on top of it, so as to indicate the complex coefficient is not a variable. So he writes $\hat{A}_0 = A_0 e^{i\theta}$. However, I find that confusing and, hence, I prefer using bold type for any complex number, variable or not. The disadvantage is that we need to remember that the coefficient in front of the exponential is not a variable: it’s a complex number alright, but not a variable. Indeed, do look at that $\mathbf{A}_0 = A_0 e^{i\theta}$ equality carefully: $\mathbf{A}_0$ is a specific complex number that captures the initial phase θ. So it’s not the magnitude of the phasor itself, i.e. $|\mathbf{A}| = A_0$. In fact, magnitude, amplitude, phase… We’re using a lot of confusing terminology here, and so that’s why you need to ‘get’ the math.

The impedance is not a variable either. It’s some constant. Having said that, this constant will depend on the angular frequency ω. So… Well… Just think about this as you continue to read. 🙂 So the impedance is some number, just like resistance, but it’s a complex number. We’ll denote it by $\mathbf{Z}$ and, using Euler’s formula once again, we’ll write it as:

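$$\mathbf{Z} = |\mathbf{Z}|e^{i\theta} = \frac{\mathbf{V}}{\mathbf{I}} = \frac{|\mathbf{V}|e^{i(\omega t+\theta_V)}}{|\mathbf{I}|e^{i(\omega t+\theta_I)}} = \frac{|\mathbf{V}|}{|\mathbf{I}|}\,e^{i(\theta_V-\theta_I)}$$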

So, as you can see, it is, literally, some complex ratio, just like R = V/I was some real ratio: it is a complex ratio because it has a magnitude and a direction, obviously. Also please do note that, as I mentioned already, the impedance is, in general, some function of the frequency ω, even if the ωt terms cancel out in the ratio above: $\mathbf{V}$ and $\mathbf{I}$ are variables that vary with time, but you should look at ω as some parameter. I know I should, perhaps, not be so explicit on what’s going on, but I want to make sure you understand.

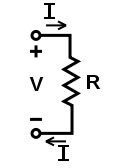

So what’s going on? The illustration below (credit goes to Wikipedia, once again) explains. It’s a pretty generic view of a very simple AC circuit. So we don’t care what the impedance is: it might be an inductor or a capacitor, or a combination of both, but we don’t care: we just call it an impedance, or an impedor if you want. 🙂 The point is: if we apply an alternating current, then the current and the voltage will both go up and down, but the current signal will lag (or lead) the voltage signal, and some phase factor θ tells us by how much, so θ will be the phase difference.

Now, we’re dividing one complex number by another in that Z = V/I formula above, and dividing one complex number by another is not all that straightforward, so let me re-write that formula for Z above as:

$$\mathbf{V} = \mathbf{I}\ast\mathbf{Z} = \mathbf{I}\ast|\mathbf{Z}|e^{i\theta}$$

Now, while that $\mathbf{V} = \mathbf{I}\ast\mathbf{Z}$ formula resembles the V = I·R formula, you should note the bold-face type for $\mathbf{V}$ and $\mathbf{I}$, and the ∗ symbol I am using here for multiplication. The bold-face for $\mathbf{V}$ and $\mathbf{I}$ implies they’re vectors, or complex numbers. As for the ∗ symbol, that’s to make it clear we’re not talking about a vector cross product A×B here, but a product of two complex numbers. [It’s obviously not a vector dot product either, because a vector dot product yields a real number, not some other vector.]

Now we write V and I as you’d expect us to write them:

- $\mathbf{V} = |\mathbf{V}|e^{i(\omega t+\theta_V)} = V_0 e^{i(\omega t+\theta_V)}$

- $\mathbf{I} = |\mathbf{I}|e^{i(\omega t+\theta_I)} = I_0 e^{i(\omega t+\theta_I)}$

$\theta_V$ and $\theta_I$ are, obviously, the so-called initial phases of the voltage and the current respectively. These ‘initial’ phases are not independent: we’re talking a phase difference really, between the voltage and the current signal, and it’s determined by the properties of the circuit. In fact, that’s the whole point here: the impedance is a property of the circuit and determines how the current signal varies as a function of the voltage signal. In fact, we’ll often choose the t = 0 point such that $\theta_V = 0$, and so then we need to find $\theta_I$. […] OK. Let’s get on with it. Writing out all of the factors in the $\mathbf{V} = \mathbf{I}\ast\mathbf{Z} = \mathbf{I}\ast|\mathbf{Z}|e^{i\theta}$ equation yields:

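$$\mathbf{V} = |\mathbf{V}|e^{i(\omega t+\theta_V)} = \mathbf{I}\ast\mathbf{Z} = |\mathbf{I}|e^{i(\omega t+\theta_I)}\ast|\mathbf{Z}|e^{i\theta} = |\mathbf{I}||\mathbf{Z}|\,e^{i(\omega t+\theta_I+\theta)}$$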

Now, this equation must hold for all values of t, so we can equate the magnitudes and phases and, hence, the following equalities must hold:

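- $|\mathbf{V}| = |\mathbf{I}||\mathbf{Z}| \Leftrightarrow |\mathbf{Z}| = |\mathbf{V}|/|\mathbf{I}|$

- $\omega t + \theta_V = \omega t + \theta_I + \theta \Leftrightarrow \theta = \theta_V - \theta_I$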

Done!
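The two equalities above are easy to check numerically. The sketch below assumes made-up values for V₀, I₀, the two initial phases and a 50 Hz frequency, and uses Python's standard `cmath` module for the complex exponentials; it computes Z as the ratio of the two phasors and then confirms that V = I∗Z holds at other instants too.

```python
import cmath
import math

# Hypothetical example values: peak voltage and current, initial phases in radians
V0, theta_V = 10.0, 0.3
I0, theta_I = 2.0, -0.9
omega = 2 * math.pi * 50   # assumed 50 Hz supply

def V(t):
    # voltage phasor |V| e^{i(wt + theta_V)}
    return V0 * cmath.exp(1j * (omega * t + theta_V))

def I(t):
    # current phasor |I| e^{i(wt + theta_I)}
    return I0 * cmath.exp(1j * (omega * t + theta_I))

# The e^{iwt} factors cancel, so the ratio does not depend on the time we pick
Z = V(0.0) / I(0.0)
print(abs(Z), cmath.phase(Z))   # expected: |Z| = V0/I0 and theta = theta_V - theta_I

# V = I*Z must then hold at every instant
for t in (0.001, 0.0137, 0.25):
    assert cmath.isclose(V(t), I(t) * Z, abs_tol=1e-9)
```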

Of course, you’ll complain once again about those complex numbers: voltage and current are something real, aren’t they? So what’s really the point of these complex numbers? Well… I can just say what I said already. You’re right. I’ve used the complex notation only to simplify the calculus, so it’s only the real part of those complex-valued functions that counts.

OK. We’re done with impedance. We can now discuss the impedors, including resistors (for which we won’t have such lag or phase difference, but the concept of impedance applies nevertheless).

Before I start, however, you should think about what I’ve done above: I explained the concept of impedance, but I didn’t do much with it. The real-life problem will usually be that you get the voltage as a function of time, and then you’ll have to calculate the impedance of a circuit and, then, the current as a function of time. So I just showed the fundamental relations but, in real life, you won’t know what θ and $\theta_I$ could possibly be. Well… Let me correct that statement: we’ll give you formulas for θ as we discuss the various circuit elements and their impedance below, and so then you can use these formulas to calculate $\theta_I$. 🙂

Resistors

Let’s start with what seems to be the easiest thing: a resistor. A real resistor is actually not easy to understand, because it requires us to understand the properties of real materials. Indeed, it may or may not surprise you, but the linear relation between the voltage and the current for real materials is only approximate. Also, the way resistors dissipate energy is not easy to understand. Indeed, unlike inductors and capacitors, i.e. the other two passive components of an electrical circuit, a resistor does not store but dissipates energy, as shown below.

It’s a nice animation (credit for it has to go to Wikipedia once more), as it shows how energy is being used in an electric circuit. Note that the little moving pluses are in line with the convention that a current is defined as the movement of positive charges, so we write I = dQ/dt instead of I = −dQ/dt. That also explains the direction of the field line E, which has been added to show that the charges move with the field that is being generated by the power source (which is not shown here). So, what we have here is that, on one side of the circuit, some generator or voltage source will create an emf pushing the charges, and so the animation shows how some load – i.e. the resistor in this case – will consume their energy, so they lose their push (as shown by the change in color from yellow to black). So power, i.e. energy per unit time, is supplied, and is then consumed.

To increase the current in the circuit above, you need to increase the voltage, but increasing both amounts to increasing the power that’s being consumed in the circuit. Electric power is voltage times current, so P = V·I (or v·i, if I use the small letters that are used in the two animations below). Now, Ohm’s Law (I = V/R) says that, if we want to double the current, we need to double the voltage, and so we’re quadrupling the power then: $P_2 = V_2 I_2 = (2V_1)(2I_1) = 4V_1 I_1 = 2^2 P_1$. So we have a square law for the power, which we get by substituting R·I for V or V/R for I, so we can write the power P as $P = V^2/R = I^2 R$. This square law says exactly the same: if you want to double the voltage or the current, you’ll actually have to double both and, hence, you’ll quadruple the power.
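Here is the same square law as a tiny Python sketch. The 50 Ω resistance and the 10 V starting voltage are hypothetical values, picked only to make the arithmetic visible; the helper `power` is likewise just an illustrative name.

```python
import math

R = 50.0  # hypothetical resistance in ohms

def power(V, R):
    I = V / R          # Ohm's Law: I = V/R
    return V * I       # P = V*I

V1 = 10.0              # hypothetical starting voltage
P1 = power(V1, R)
P2 = power(2 * V1, R)  # doubling V doubles I as well

# P = V*I = V**2/R = I**2*R, so the power scales with the square of V (or I)
assert math.isclose(P1, V1**2 / R)
assert math.isclose(P1, (V1 / R)**2 * R)
print(P2 / P1)  # ratio of the two powers: should be 4
```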

But back to the impedance: Ohm’s Law is the $\mathbf{Z} = \mathbf{V}/\mathbf{I}$ law for resistors, but we can simplify it because we know the voltage across the resistor and the current that’s going through it are in phase. Hence, $\theta_V$ and $\theta_I$ are identical and, therefore, the $\theta = \theta_V - \theta_I$ in $\mathbf{Z} = |\mathbf{Z}|e^{i\theta}$ is equal to zero and, hence, $\mathbf{Z} = |\mathbf{Z}|$. Now, $|\mathbf{Z}| = |\mathbf{V}|/|\mathbf{I}| = V_0/I_0$. So the impedance $\mathbf{Z}$ is just some real number $R = V_0/I_0$, which we can also write as:

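$$R = \frac{V_0}{I_0} = \frac{V_0\,e^{i(\omega t+\alpha)}}{I_0\,e^{i(\omega t+\alpha)}} = \frac{\mathbf{V}(t)}{\mathbf{I}(t)}, \quad \text{with } \alpha = \theta_V = \theta_I$$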

The equation above goes from $R = V_0/I_0$ to $R = \mathbf{V}(t)/\mathbf{I}(t) = \mathbf{V}/\mathbf{I}$. It’s not the same thing: the second equation says that, at any point in time, the voltage and the current will be proportional to each other, with R or its reciprocal as the proportionality constant. In any case, we have our formula for $\mathbf{Z}$ here:

$$\mathbf{Z} = R = \mathbf{V}/\mathbf{I} = V_0/I_0$$
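A quick numerical way to see that, for a resistor, the real voltage and current are proportional at every instant: because both share the same phase, their instantaneous ratio is R whenever the current is not passing through zero. The resistance, peak voltage, 60 Hz frequency and common phase α below are all assumptions for illustration.

```python
import math

R = 100.0                               # hypothetical resistance (ohm)
V0 = 5.0                                # hypothetical peak voltage (V)
omega, alpha = 2 * math.pi * 60, 0.4    # assumed 60 Hz and a common initial phase

I0 = V0 / R  # voltage and current are in phase, so only the peaks differ

def v(t):
    return V0 * math.cos(omega * t + alpha)

def i(t):
    return I0 * math.cos(omega * t + alpha)

# Away from the zero crossings, the instantaneous ratio v(t)/i(t) is R at every t
ratios = [v(t) / i(t) for t in (0.001, 0.004, 0.011)]
print(ratios)
```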

So that’s simple. Before we move to the next, let me note that the resistance of a real resistor may depend on its temperature, so in real-life applications one will want to keep its temperature as stable as possible. That’s why real-life resistors have power ratings and recommended operating temperatures. The image below illustrates how so-called heat-sink resistors can be mounted on a heat sink with a simple spring clip so as to ensure the dissipated heat is transported away. These heat-sink resistors are rather small (10 by 15 mm only) but are rated for 35 watt – so that’s quite a lot for such a small thing – if correctly mounted.

As mentioned, the linear relation between the voltage and the current is only approximate, and the observed relation is also there only for frequencies that are not ‘too high’ because, if the frequency becomes very high, the free electrons will start radiating energy away, as they produce electromagnetic radiation. So one always needs to look at the tolerances of real-life resistors, which may be ± 5%, ± 10%, or whatever. In any case… On to the next.

Capacitors (condensers)

We talked at length about capacitors (aka condensers) in our post explaining capacitance or, as it is also called, capacity: the capacity of a capacitor is the observed proportionality between (1) the voltage (V) across and (2) the charge (Q) on the capacitor, so we wrote it as:

C = Q/V

Now, it’s easy to confuse the C here with the C for coulomb, which I’ll also use in a moment, and so… Well… Just don’t! 🙂 The meaning of the symbol is usually obvious from the context.

As for the explanation of this relation, it’s quite simple: a capacitor consists of two separate conductors in space, with positive charge on one, and an equal and opposite (i.e. negative) charge on the other. Now, the logic of the superposition of fields implies that, if we double the charges, we will also double the fields, and so the work one needs to do to carry a unit charge from one conductor to the other is also doubled! So that’s why the potential difference between the conductors is proportional to the charge.

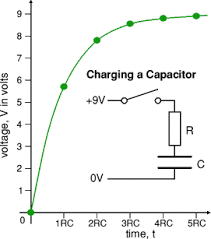

The C = Q/V formula actually measures the ability of the capacitor to store electric charge and, therefore, to store energy, so that’s why the term capacity is really quite appropriate. I’ll let you google a few illustrations like the one below, which shows how a capacitor is actually being charged in a circuit. Usually, some resistance will be there in the circuit, so as to limit the current when it’s connected to the voltage source and, therefore, as you can see, the R times C factor (R·C) determines how fast or how slow the capacitor charges and/or discharges. Also note that the current is equal to the time rate of change of the charge: I = dQ/dt.

In the above-mentioned post, we also give a few formulas for the capacity of specific types of condensers. For example, for a parallel-plate condenser, the formula was $C = \varepsilon_0 A/d$. We also mentioned its unit, which is coulomb/volt, obviously, but – in honor of Michael Faraday, who gave us Faraday’s Law, and many other interesting formulas – it’s referred to as the farad: 1 F = 1 C/V. The C here is coulomb, of course. Sorry we have to use C to denote two different things but, as I mentioned, the meaning of the symbol is usually clear from the context.
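For a sense of scale, here is a small sketch of $C = \varepsilon_0 A/d$ for a hypothetical vacuum-filled condenser with 1 cm² plates 1 mm apart. The numerical value of ε₀ is the CODATA 2018 recommended value; the plate dimensions, and the function name `parallel_plate_capacitance`, are just assumptions for illustration.

```python
EPS0 = 8.8541878128e-12  # vacuum permittivity in F/m (CODATA 2018 value)

def parallel_plate_capacitance(area_m2, gap_m):
    # C = eps0 * A / d, for an ideal parallel-plate condenser with vacuum between the plates
    return EPS0 * area_m2 / gap_m

C = parallel_plate_capacitance(1e-4, 1e-3)  # hypothetical: 1 cm^2 plates, 1 mm apart
print(C)  # about 8.85e-13 F, i.e. under one picofarad
```

So one farad is a very big unit: everyday capacitors are usually rated in micro-, nano- or picofarads.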

We also talked about how dielectrics actually work in that post, but we did not talk about the impedance of a capacitor, so let’s do that now. The calculation is pretty straightforward. Its interpretation somewhat less so. But… Well… Let’s go for it.

It’s the current that’s charging the condenser (sorry I keep using both terms interchangeably), and we know that the current is the time rate of change of the charge (I = dQ/dt). Now, you’ll remember that, in general, we’d write a phasor $\mathbf{A}$ as $\mathbf{A} = \mathbf{A}_0 e^{i\omega t}$ with $\mathbf{A}_0 = A_0 e^{i\theta}$, so $\mathbf{A}_0$ is a complex coefficient incorporating the initial phase, which we wrote as $\theta_V$ and $\theta_I$ for the voltage and for the current respectively. So we’ll represent the voltage and the current now using that notation, so we write: $\mathbf{V} = \mathbf{V}_0 e^{i\omega t}$ and $\mathbf{I} = \mathbf{I}_0 e^{i\omega t}$. So let’s now use that C = Q/V by re-writing it as Q = C·V and, because C is some constant, we can write:

I = dQ/dt = d(C·V)/dt = C·dV/dt

Now, what’s dV/dt? Oh… You’ll say: V is the magnitude of $\mathbf{V}$, so it’s equal to $|\mathbf{V}| = |\mathbf{V}_0 e^{i\omega t}| = |\mathbf{V}_0|\cdot|e^{i\omega t}| = |\mathbf{V}_0| = |V_0 e^{i\theta}| = V_0\cdot|e^{i\theta}| = V_0$. So… Well… What? $V_0$ is some constant here! It’s the maximum amplitude of V, so… Well… Its time derivative is zero: $dV_0/dt = 0$.

Yes. Indeed. We did something very wrong here! You really need to watch out with this complex-number notation, and you need to think about what you’re doing. V is not the magnitude of $\mathbf{V}$ but its (varying) amplitude. So it’s the real voltage V that varies with time: it’s equal to $V_0\cos(\omega t + \theta_V)$, which is the real part of our phasor $\mathbf{V}$. Huh? Yes. Just hang in there for a while. I know it’s difficult and, frankly, Feynman doesn’t help us very much here. Let’s take one step back and so – you will see why I am doing this in a moment – let’s calculate the time derivative of our phasor $\mathbf{V}$, instead of the time derivative of our real voltage V. So we calculate $d\mathbf{V}/dt$, which is equal to:

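$$\frac{d\mathbf{V}}{dt} = \frac{d(\mathbf{V}_0 e^{i\omega t})}{dt} = \mathbf{V}_0\,\frac{d(e^{i\omega t})}{dt} = \mathbf{V}_0\,(i\omega)\,e^{i\omega t} = i\omega\,\mathbf{V}_0 e^{i\omega t} = i\omega\,\mathbf{V}$$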

Remarkable result, isn’t it? We take the time derivative of our phasor, and the result is the phasor itself multiplied by iω. Well… Yes. It’s a general property of exponentials, but still… Remarkable indeed! We’d get the same with $\mathbf{I}$, but we don’t need that for the moment. What we do need to do is go from our $I = C\,dV/dt$ relation, which connects the real parts of $\mathbf{I}$ and $\mathbf{V}$ one to another, to the $\mathbf{I} = C\,d\mathbf{V}/dt$ relation, which relates the (complex) phasors. So we write:
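The d**V**/dt = iω·**V** property can also be checked with a plain finite-difference derivative. In this sketch the complex coefficient **V**₀ = 3e^{0.25i}, ω = 5 rad/s and the helper `numerical_derivative` are all hypothetical choices made for illustration.

```python
import cmath

# Hypothetical values: the complex coefficient V0 = 3 e^{0.25 i} and omega = 5 rad/s
V0 = cmath.rect(3.0, 0.25)
omega = 5.0

def V(t):
    # voltage phasor V(t) = V0 e^{i omega t}
    return V0 * cmath.exp(1j * omega * t)

def numerical_derivative(f, t, h=1e-6):
    # central-difference estimate of df/dt; works for complex-valued f too
    return (f(t + h) - f(t - h)) / (2 * h)

t = 0.8
print(numerical_derivative(V, t))
print(1j * omega * V(t))  # should agree closely with the line above
```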

$$I = C\,\frac{dV}{dt} \;\Leftrightarrow\; \mathbf{I} = C\,\frac{d\mathbf{V}}{dt}$$

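Can we do that? Just like that? We just replace I and V by $\mathbf{I}$ and $\mathbf{V}$? Yes, we can. Why? Well… We know that I is the real part of $\mathbf{I}$ and so we can write $\mathbf{I} = \mathrm{Re}(\mathbf{I}) + i\,\mathrm{Im}(\mathbf{I}) = I + i\,\mathrm{Im}(\mathbf{I})$, and then we can write the right-hand side of the equation as $C\,d\mathbf{V}/dt = \mathrm{Re}(C\,d\mathbf{V}/dt) + i\,\mathrm{Im}(C\,d\mathbf{V}/dt)$. Because C is a real constant, taking the real part commutes with differentiating and multiplying by C, so $\mathrm{Re}(C\,d\mathbf{V}/dt) = C\,dV/dt = I$: the real parts match. And the imaginary parts, $\mathrm{Im}(\mathbf{V}) = V_0\sin(\omega t + \theta_V)$ and $\mathrm{Im}(\mathbf{I})$, are just the same real voltage and current shifted by π/2, so they obey the same real relation too. Now, two complex numbers are equal if, and only if, their real and imaginary parts are the same, so… Well… Write it all out, if you want, using Euler’s formula, and you’ll see it all makes sense indeed.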
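The argument above can be spot-checked numerically: for a real constant C, the real part of C·(iω)·**V** should equal C·dV/dt computed by hand from V = V₀cos(ωt + θ_V), and the imaginary part should equal the same derivative of V₀sin(ωt + θ_V). The component values and function names below are assumptions for illustration.

```python
import cmath
import math

# Hypothetical capacitor and signal parameters (illustration only)
C = 2e-6                               # farad
V0, theta_V, omega = 4.0, 0.6, 1000.0  # volt, radian, radian/second

def phasor_current(t):
    # I = C * dV/dt = C * (i*omega) * V, computed with the complex phasor V
    V = V0 * cmath.exp(1j * (omega * t + theta_V))
    return C * 1j * omega * V

def real_current(t):
    # C * dV/dt for the real voltage V0*cos(wt + theta_V), differentiated by hand
    return -C * omega * V0 * math.sin(omega * t + theta_V)

def imag_current(t):
    # C * dV/dt for Im(V) = V0*sin(wt + theta_V), the same signal shifted by pi/2
    return C * omega * V0 * math.cos(omega * t + theta_V)

for t in (0.0, 0.003, 0.0421):
    I = phasor_current(t)
    # Real and imaginary parts each obey the real relation, so the phasor one holds too
    assert math.isclose(I.real, real_current(t), abs_tol=1e-12)
    assert math.isclose(I.imag, imag_current(t), abs_tol=1e-12)
```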

So what do we get? The $\mathbf{I} = C\,d\mathbf{V}/dt$ relation gives us:

$$\mathbf{I} = C\,\frac{d\mathbf{V}}{dt} = C\,(i\omega)\,\mathbf{V}$$

That implies that $\mathbf{I}/\mathbf{V} = C\cdot(i\omega)$ and, hence, we get – finally! – what we need to get:

$$\mathbf{Z} = \frac{\mathbf{V}}{\mathbf{I}} = \frac{1}{i\omega C}$$

This is a grand result and, while I am sorry I made you suffer for it, I think it was worth it here because, if you’d check Feynman on it, you’ll see that he (or, more probably, his assistants) just skated over this without bothering too much about mathematical rigor. OK. All that’s left now is to interpret this ‘number’ $\mathbf{Z} = 1/(i\omega C)$. It is a purely imaginary number, and it’s a constant indeed, albeit a complex constant. It can be re-written as:

$$\mathbf{Z} = \frac{1}{i\omega C} = \frac{i^{-1}}{\omega C} = \frac{-i}{\omega C} = \frac{1}{\omega C}\,e^{-i\pi/2}$$
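Python's built-in complex numbers make the rewriting above easy to verify. The 1 kHz frequency and 1 μF capacity are hypothetical values; the point is only that 1/(iωC) comes out purely imaginary, with magnitude 1/(ωC) and phase −π/2.

```python
import cmath
import math

omega = 2 * math.pi * 1000   # hypothetical: 1 kHz
C = 1e-6                     # hypothetical: 1 microfarad

Z_C = 1 / (1j * omega * C)

print(Z_C)                        # real part 0, imaginary part -1/(omega*C)
print(abs(Z_C), 1 / (omega * C))  # both about 159.2 ohm
print(cmath.phase(Z_C))           # -pi/2: a rotation of minus 90 degrees
```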

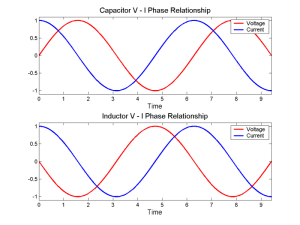

[Sorry. I can’t be more explicit here. It’s just one of the wonders of complex numbers: $i^{-1} = -i$. Just check one of my posts on complex numbers for more detail.] Now, a $-i$ factor corresponds to a rotation of minus 90 degrees, and so that gives you the true meaning of what’s usually said about a circuit with a capacitor: the voltage across the capacitor will lag the current with a phase difference equal to π/2, as shown below. Of course, as it’s the voltage driving the current, we should say it’s the current that is lagging with a phase difference of 3π/2, rather than stating it the other way around! Indeed, $i^{-1} = -i = (-1)\cdot i = i^2\cdot i = i^3$, so that amounts to three quarter-turns of the phase in the counter-clockwise direction, which is the direction in which our ωt angle is ‘turning’.

It is a remarkable result, though. The illustration above assumes the maximum amplitude of the voltage and the current are the same, so $|\mathbf{Z}| = |\mathbf{V}|/|\mathbf{I}| = 1$, but what if they are not the same? What are the real bits then? I can hear you, indeed: “To hell with the bold-face letters: what’s V and I? What’s the real thing?”

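Well… V and I are the real bits of $\mathbf{V} = |\mathbf{V}|e^{i(\omega t+\theta_V)} = V_0 e^{i(\omega t+\theta_V)}$ and of $\mathbf{I} = |\mathbf{I}|e^{i(\omega t+\theta_I)} = I_0 e^{i(\omega t+\theta_V-\theta)} = I_0 e^{i(\omega t-\theta)} = I_0 e^{i(\omega t+\pi/2)}$ respectively so, assuming $\theta_V = 0$ (as mentioned above, that’s just a matter of choosing a convenient t = 0 point), we get: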

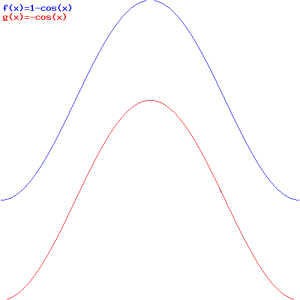

- V = V0·cos(ωt)

- I = I0·cos(ωt + π/2)

So the π/2 phase difference is there (you need to watch out with the signs, of course: θ = −π/2, but so it’s the current that seems to lead here) but the $V_0/I_0$ ratio doesn’t have to be one, so the real voltage and current could look like the graph below, where the maximum amplitude of the current is only half of the maximum amplitude of the voltage.

So let’s analyze this quickly: the $V_0/I_0$ ratio is equal to $|\mathbf{Z}| = |\mathbf{V}|/|\mathbf{I}| = 1/(\omega C) = (1/\omega)(1/C)$ (note that it’s not equal to V/I = V(t)/I(t), which is a ratio that doesn’t make sense because I(t) goes through zero as the current switches direction). So what? Well… It means the ratio is inversely proportional to both the frequency ω and the capacity C, as shown below. Think about this: if ω goes to zero, $V_0/I_0$ goes to ∞, which means that, for a given voltage, the current must go to zero. That makes sense, because we’re talking DC current when ω → 0, and the capacitor charges itself and then that’s it: no more currents. Now, if C goes to zero, so we’re talking capacitors with hardly any capacity, we’ll also get tiny currents. Conversely, for large C, we’ll get huge currents, as the capacitor can take pretty much any charge you throw at it, so that makes for small $V_0/I_0$ ratios. The most interesting thing to consider is ω going to infinity, as the $V_0/I_0$ ratio is also quite small then. What happens? The capacitor doesn’t get the time to charge, and so it’s always in this state where it has large currents flowing in and out of it, as it can’t build the voltage that would counter the electromotive force that’s being supplied by the voltage source.
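Plugging a few frequencies into $V_0/I_0 = 1/(\omega C)$ makes the limiting cases discussed above concrete. The 1 μF capacity and the sample frequencies are hypothetical values, chosen only for illustration.

```python
C = 1e-6  # hypothetical capacity: 1 microfarad

def capacitor_ratio(omega, C=C):
    # V0/I0 = |Z_C| = 1/(omega*C) for an ideal capacitor
    return 1.0 / (omega * C)

for omega in (1e-3, 1.0, 1e3, 1e6):
    # omega -> 0 (towards DC): huge ratio, so almost no current for a given V0
    # omega large: tiny ratio, so large currents flow in and out of the capacitor
    print(f"omega = {omega:g} rad/s -> V0/I0 = {capacitor_ratio(omega):g} ohm")

# Doubling the capacity halves the ratio, i.e. doubles the current for a given V0
print(capacitor_ratio(1e3, 2 * C) / capacitor_ratio(1e3, C))
```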

OK. That’s it. Let’s discuss the last (passive) element.


Inductors

We’ve spoiled the party a bit with that illustration above, as it gives the phase difference for an inductor already:

$$\mathbf{Z} = i\omega L = \omega L\,e^{i\pi/2}, \quad \text{with } L \text{ the inductance of the coil}$$

So, again assuming that θV = 0, we can calculate I as:

$$\mathbf{I} = |\mathbf{I}|e^{i(\omega t+\theta_I)} = I_0 e^{i(\omega t+\theta_V-\theta)} = I_0 e^{i(\omega t-\theta)} = I_0 e^{i(\omega t-\pi/2)}$$

Of course, you’ll want to relate this, once again, to the real voltage and the real current, so let’s write the real parts of our phasors:

- V = V0·cos(ωt)

- I = I0·cos(ωt − π/2)

Just to make sure you’re not falling asleep as you’re reading, I’ve made another graph of how things could look. So now it’s the current signal that’s lagging the voltage signal with a phase difference equal to θ = π/2.

Also, to be fully complete, I should show you how the $V_0/I_0$ ratio now varies with L and ω. Indeed, here also we can write that $|\mathbf{Z}| = |\mathbf{V}|/|\mathbf{I}| = V_0/I_0$, but here we find that $V_0/I_0 = \omega L$, so we have a simple linear proportionality here! For example, for a given voltage $V_0$, we’ll have smaller currents as ω increases, so that’s the opposite of what happens with our ideal capacitors. I’ll let you think about that… 🙂
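The same kind of quick sweep for the inductor shows the linear growth of $V_0/I_0 = \omega L$ and the constant +π/2 phase. The 10 mH inductance and the frequencies are hypothetical values, and `inductor_impedance` is just an illustrative helper name.

```python
import cmath
import math

L = 0.01  # hypothetical inductance: 10 mH

def inductor_impedance(omega):
    # Z_L = i*omega*L, purely imaginary with phase +pi/2
    return 1j * omega * L

for omega in (1.0, 1e3, 1e6):
    Z_L = inductor_impedance(omega)
    # |Z_L| = V0/I0 = omega*L grows linearly with frequency
    print(f"omega = {omega:g}: |Z_L| = {abs(Z_L):g} ohm, phase = {cmath.phase(Z_L):.4f} rad")
```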

Now how do we get that $\mathbf{Z} = i\omega L$ formula? In my post on inductance, I explained what an inductor is: a coil of wire, basically. Its defining characteristic is that a changing current will cause a changing magnetic field in it and, hence, some change in the flux of the magnetic field. Now, Faraday’s Law tells us that that will cause some circulation of the electric field in the coil, which amounts to an induced potential difference which is referred to as the electromotive force (emf). Now, it turns out that the induced emf is proportional to the time rate of change of the current. So we’ve got another constant of proportionality here, so it’s like how we defined resistance, or capacitance. So, in many ways, the inductance is just another proportionality coefficient. If we denote it by L – the symbol is said to honor the Russian physicist Heinrich Lenz, whom you know from Lenz’ Law – then we define it as:

L = −Ɛ/(dI/dt)

The dI/dt factor is, obviously, the time rate of change of the current, and the negative sign indicates that the emf opposes the change in current, so it will tend to cause an opposing current. However, the power of our voltage source will ensure the current does effectively change, so it will counter the ‘back emf’ that’s being generated by the inductor. To be precise, the voltage across the terminals of our inductor, which we denote by V, will be equal and opposite to Ɛ, so we write:

V = −Ɛ = L·(dI/dt)

Now, this very much resembles the I = C·dV/dt relation we had for capacitors, and it’s completely analogous indeed: we just need to switch the I and V, and C and L symbols. So we write:

$$V = L\,\frac{dI}{dt} \;\Leftrightarrow\; \mathbf{V} = L\,\frac{d\mathbf{I}}{dt}$$

Now, $d\mathbf{I}/dt$ is a time derivative similar to $d\mathbf{V}/dt$. We calculate it as:

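$$\frac{d\mathbf{I}}{dt} = \frac{d(\mathbf{I}_0 e^{i\omega t})}{dt} = \mathbf{I}_0\,\frac{d(e^{i\omega t})}{dt} = \mathbf{I}_0\,(i\omega)\,e^{i\omega t} = i\omega\,\mathbf{I}_0 e^{i\omega t} = i\omega\,\mathbf{I}$$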

So we get what we want and have to get:

$$\mathbf{V} = L\,\frac{d\mathbf{I}}{dt} = i\omega L\,\mathbf{I}$$

Now, $\mathbf{Z} = \mathbf{V}/\mathbf{I}$, so $\mathbf{Z} = i\omega L$ indeed!

Summary of conclusions

Let’s summarize what we found:

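- For a resistor, we have $\mathbf{Z}(\text{resistor}) = \mathbf{Z}_R = R = \mathbf{V}/\mathbf{I} = V_0/I_0$

- For a capacitor, we have $\mathbf{Z}(\text{capacitor}) = \mathbf{Z}_C = 1/(i\omega C) = -i/(\omega C)$

- For an inductor, we have $\mathbf{Z}(\text{inductor}) = \mathbf{Z}_L = i\omega L$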

Note that the impedance of capacitors decreases as frequency increases, while for inductors, it’s the other way around. We explained that for capacitors by making you think of the currents: for a given voltage, a capacitor will carry large currents at high frequencies and, hence, have a small $V_0/I_0$ ratio. Can you think of what happens with an inductor? It’s not so easy, so I’ll refer you to the addendum below for some more explanation.
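The three formulas in the summary can be wrapped in one small function, which makes the opposite frequency behavior of capacitors and inductors easy to see side by side. This is a minimal sketch: the function name `impedances`, the component values and the sample frequencies are all hypothetical.

```python
import math

def impedances(omega, R, C, L):
    """Return the ideal impedances (Z_R, Z_C, Z_L) at angular frequency omega."""
    Z_R = complex(R, 0.0)        # resistor: real, no phase shift
    Z_C = 1 / (1j * omega * C)   # capacitor: -i/(omega*C)
    Z_L = 1j * omega * L         # inductor: i*omega*L
    return Z_R, Z_C, Z_L

# Hypothetical component values (illustration only)
R, C, L = 100.0, 1e-6, 0.01

for f in (50.0, 5e3, 500e3):           # frequencies in Hz
    omega = 2 * math.pi * f
    Z_R, Z_C, Z_L = impedances(omega, R, C, L)
    print(f"{f:>8g} Hz: |Z_R| = {abs(Z_R):.3g}, |Z_C| = {abs(Z_C):.3g}, |Z_L| = {abs(Z_L):.3g}")
```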

Let me also note that, as you can see, the impedance of (ideal) inductors and capacitors is a pure imaginary number, so that’s a complex number which has no real part. In engineering, the imaginary part of the impedance is referred to as the reactance, so engineers will say that ideal capacitors and inductors have a purely imaginary reactive impedance.

However, in real life, the impedance will usually have both a real and an imaginary part, so it will be some kind of mix, so to speak. The real part is referred to as the ‘resistance’ R, and the imaginary part is referred to as the ‘reactance’ X, so we write $\mathbf{Z} = R + iX$. In terms of the magnitude and phase of $\mathbf{Z} = |\mathbf{Z}|e^{i\theta}$, we have $R = |\mathbf{Z}|\cos\theta$ and $X = |\mathbf{Z}|\sin\theta$.
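Going back and forth between the R + iX form and the |Z|e^{iθ} form of an impedance is a one-liner with Python's standard `cmath` module. The 30 + 40i Ω value below is just a made-up example of a component that has both resistance and reactance.

```python
import cmath
import math

# Hypothetical impedance of some real component, in ohms (illustration only)
Z = complex(30.0, 40.0)

R = Z.real                         # resistance: real part of Z
X = Z.imag                         # reactance: imaginary part of Z
magnitude, theta = cmath.polar(Z)  # |Z| and phase angle theta (radians)

print(R, X, magnitude, math.degrees(theta))
```

`cmath.rect(magnitude, theta)` takes you back from the polar form to R + iX.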

But here I have to end my post on circuit elements. It’s become quite long, so I’ll discuss Kirchhoff’s rules in my next post.

Addendum: Why is V = − Ɛ?

Inductors are not easy to understand (intuitively, that is). That’s why I spent so much time writing about them in my other post on them, to which I should be referring you here. But let me recapitulate the key points. The key idea is that we’re pumping energy into an inductor when applying a current and, as you know, the time rate of change of energy is power: P = dW/dt, so we’re talking power here too, which is voltage times current: P = dW/dt = V·I. The illustration below shows what happens when an alternating current is applied to the circuit with the inductor. So the assumption is that the current goes in one and then in the other direction, so I > 0, and then I < 0, etcetera. We’re also assuming some nice sinusoidal curve for the current here (i.e. the blue curve), and so we get what we get for U (i.e. the red curve), which is the energy that’s stored in the inductor really, as it tries to resist the changing current: the energy goes up and down between zero and some maximum amplitude that’s determined by the maximum current.

So, yes, building up current requires energy from some external source, which is used to overcome the ‘back emf’ in the inductor, and that energy is stored in the inductor itself. [If you still wonder why it’s stored in the inductor, think about the other question: where else would it be stored?] How is it stored? Look at the graph and think: it’s stored in the magnetic field that the current sets up in the coil. That field is proportional to the current, and the stored energy is $U = \tfrac{1}{2}LI^2$, which explains why the energy is zero when the current is zero, and why the energy maxes out when the current maxes out. So, yes, it all makes sense! 🙂

Let me give another example. The graph below assumes the current builds up to some maximum. As it reaches its maximum, the stored energy will also max out. This example assumes direct current, so it’s a DC circuit: the current builds up, but then stabilizes at some maximum that we can find by applying Ohm’s Law to the resistance of the circuit: I = V/R. Resistance? But weren’t we talking about an ideal inductor? We are. If there’s no other resistance in the circuit, we’ll have a short-circuit, so the assumption is that we do have some resistance in the circuit and, therefore, we should also think of some energy loss to heat from the current in the resistance. If not, well… Your power source will obviously soon reach its limits. 🙂

So what’s going on then? We have some changing current in the coil but, obviously, some kind of inertia also: the coil itself opposes the change in current through the ‘back emf’. Now, it requires energy, or power, to overcome the inertia, so that’s the power that comes from our voltage source: it will offset the ‘back emf’, so we may effectively think of a little circuit with an inductor and a voltage source, as shown below.

But why do we write V = − Ɛ? Our voltage source can have any voltage, can’t it? Yes. Sure. But so the coil will always provide an emf that’s exactly the opposite of this voltage. Think of it: we have some voltage that’s being applied across the terminals of the inductor, and so we’ll have some current. A current that’s changing. And it’s that current that will generate an emf equal to Ɛ = –L·(dI/dt). So don’t think of Ɛ as some constant: it’s the self-inductance coefficient L that’s constant, but I (and, hence, dI/dt) and V are variable.

The point is: we cannot have any potential difference in a perfect conductor, which is what the terminals are: any potential difference, i.e. any electric field really, would cause huge currents. In other words, the voltage V and the emf Ɛ have to cancel each other out, all of the time. If not, we’d have huge currents in the wires re-establishing the V = −Ɛ equality.

Let me use Feynman’s argument here. Perhaps that will work better. 🙂 Our ideal inductor is shown below: it’s shielded by some metal box so as to ensure it does not interact with the rest of the circuit. So we have some current I, which we assume to be an AC current, and we know some voltage is needed to cause that current, so that’s the potential difference V between the terminals.

The total circulation of E – around the whole circuit – can be written as the sum of two parts:

Now, we know circulation of E can only be caused by some changing magnetic field, which is what’s going on in the inductor:

So this change in the magnetic flux is what is causing the ‘back emf’, and so the integral on the left is, effectively, equal to Ɛ, not minus Ɛ but +Ɛ. Now, the second integral is equal to V, because that’s the voltage V between the two terminals a and b. So the whole integral is equal to 0 = Ɛ + V and, therefore, we have that:

V = − Ɛ = L·dI/dt

Some content on this page was disabled on June 16, 2020 as a result of a DMCA takedown notice from The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/
