Tag: magnetic moment

Working with amplitudes

Note (22 May 2020): I am in the process of reviewing many of my posts, including the one below, as a result of my realist interpretation of quantum mechanics, which is based on the ring current model of an electron. It is a delicate exercise because I would like to keep track of the old posts. Perhaps I should copy all of them to some new site. In any case, the point is: I would significantly rephrase some of the below because I now believe spin is very real in quantum mechanics as well.

Original post:

Don’t worry: I am not going to introduce the Hamiltonian matrix—not yet, that is. But this post is going a step further than my previous ones, in the sense that it will be more abstract. At the same time, I do want to stick to real physical examples so as to illustrate what we’re doing when working with those amplitudes. The example that I am going to use involves spin. So let’s talk about that first.

Spin, angular momentum and the magnetic moment

You know spin: it allows experienced pool players to do the most amazing tricks with billiard balls, making a joke of what a so-called elastic collision is actually supposed to look like. So it should not come as a surprise that spin complicates the analysis in quantum mechanics too. We dedicated several posts to that (see, for example, my post on spin and angular momentum in quantum physics) and I won’t repeat these here. Let me just repeat the basics:

1. Classical and quantum-mechanical spin do share similarities: the basic idea driving the quantum-mechanical spin model is that of an electric charge – positive or negative – spinning about its own axis (this is often referred to as intrinsic spin) as well as having some orbital motion (presumably around some other charge, like an electron in orbit with a nucleus at the center). This intrinsic spin, and the orbital motion, give our charge some angular momentum (J) and, because it’s an electric charge in motion, there is a magnetic moment (μ). To put things simply: the classical and quantum-mechanical views of things converge in their analysis of atoms or elementary particles as tiny little magnets. Hence, when placed in an external magnetic field, there is some interaction – a force – and their potential and/or kinetic energy changes. The whole system, in fact, acquires extra energy when placed in an external magnetic field.

Note: The formula for that magnetic energy is quite straightforward, both in classical as well as in quantum physics, so I’ll quickly jot it down: U = −μ•B = −|μ|·|B|·cosθ = −μ·B·cosθ. So it’s just the scalar product of the magnetic moment and the magnetic field vector, with a minus sign in front so as to get the direction right. [θ is the angle between the μ and B vectors and determines whether U as a whole is positive or negative.]
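To make the sign of U concrete, here is a minimal sketch of the U = −μ·B·cosθ formula. The function name and the numbers (a moment of one Bohr magneton in a field of 1 tesla) are my own illustrative assumptions, not values taken from the post.

```python
import math

def magnetic_energy(mu, b, theta):
    """Return U = -mu * B * cos(theta), with theta in radians."""
    return -mu * b * math.cos(theta)

# Assumed illustrative numbers: one Bohr magneton in a 1 T field.
MU = 9.274e-24  # magnetic moment, J/T
B = 1.0         # magnetic field, T

u_parallel = magnetic_energy(MU, B, 0.0)               # moment along the field: lowest energy
u_perpendicular = magnetic_energy(MU, B, math.pi / 2)  # moment at right angles: (numerically) zero
u_antiparallel = magnetic_energy(MU, B, math.pi)       # moment against the field: highest energy

print(u_parallel, u_perpendicular, u_antiparallel)
```

So θ below 90° gives a negative U and θ above 90° gives a positive U, which is all the bracketed remark above is saying.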

2. The classical and quantum-mechanical view also diverge, however. They diverge, first, because of the quantum nature of spin in quantum mechanics. Indeed, while the angular momentum can take on any value in classical mechanics, that’s not the case in quantum mechanics: in whatever direction we measure, we get a discrete set of values only. For example, the angular momentum of a proton or an electron is either −ħ/2 or +ħ/2, in whatever direction we measure it. Therefore, they are referred to as spin-1/2 particles. All elementary fermions, i.e. the particles that constitute matter (as opposed to force-carrying particles, like photons), have spin 1/2.

Note: Spin-1/2 particles include, remarkably enough, neutrons too, which have the same kind of magnetic moment that a rotating negative charge would have. The neutron, in other words, is not exactly ‘neutral’ in the magnetic sense. One can explain this by noting that a neutron is not ‘elementary’, really: it consists of three quarks, just like a proton, and, therefore, it may help you to imagine that the electric charges inside are, somehow, distributed unevenly, although physicists hate such simplifications. I am noting this because the famous Stern-Gerlach experiment, which established the quantum nature of particle spin, used silver atoms, rather than protons or electrons. More in general, we’ll tend to forget about the electric charge of the particles we’re describing, assuming, most of the time, or tacitly, that they’re neutral, which helps us to sort of forget about classical theory when doing quantum-mechanical calculations!

3. The quantum nature of spin is related to another crucial difference between the classical and quantum-mechanical view of the angular momentum and the magnetic moment of a particle. Classically, the angular momentum and the magnetic moment can have any direction.

Note: I should probably briefly remind you that J is a so-called axial vector, i.e. a vector product (as opposed to a scalar product) of the radius vector r and the (linear) momentum vector p = m·v, with v the velocity vector, which points in the direction of motion. So we write: J = r×p = r×m·v = |r|·|p|·sinθ·n. The n vector is the unit vector perpendicular to the plane containing r and p (and, hence, v, of course) given by the right-hand rule. I am saying this to remind you that the direction of the magnetic moment and the direction of motion are not the same: the simple illustration below may help to see what I am talking about.
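The vector product in the note above can be checked with a few lines of code. This is only a sketch: the `cross` helper and the numbers (unit mass, radius 2, speed 3, all in arbitrary units) are assumptions made for the sake of illustration.

```python
def cross(a, b):
    """Cross product of two 3-vectors given as (x, y, z) tuples."""
    ax, ay, az = a
    bx, by, bz = b
    return (ay * bz - az * by, az * bx - ax * bz, ax * by - ay * bx)

# Assumed illustrative values: a unit mass on a circle of radius 2 in the xy-plane,
# currently at (2, 0, 0) and moving counter-clockwise with speed 3.
m = 1.0
r = (2.0, 0.0, 0.0)
v = (0.0, 3.0, 0.0)
p = tuple(m * vi for vi in v)

J = cross(r, p)  # perpendicular to both r and v
print(J)
```

The motion is in the xy-plane, but J points along the z-axis: the direction of the angular momentum is not the direction of motion.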

Back to quantum mechanics: the image above doesn’t work in quantum mechanics. We do not have an unambiguous direction of the angular momentum and, hence, of the magnetic moment. That’s where all of the weirdness of the quantum-mechanical concept of spin comes out, really. I’ll talk about that when discussing Feynman’s ‘filters’ – which I’ll do in a moment – but here I just want to remind you of the mathematical argument that I presented in the above-mentioned post. Just like in classical mechanics, we’ll have a maximum (and, hence, also a minimum) value for J, like +ħ, 0 and −ħ for a Lithium-6 nucleus. [I am just giving this rather special example of a spin-1 particle so you’re reminded we can have particles with an integer spin number too!] So, when we measure its angular momentum in any direction really, it will take on one of these three values: +ħ, 0 or −ħ. So it’s either/or: nothing in-between. Now that leads to a funny mathematical situation: one would usually equate the maximum value of a quantity like this to the magnitude of the vector, which is equal to the (positive) square root of $J^2 = \mathbf{J}\cdot\mathbf{J} = J_x^2 + J_y^2 + J_z^2$, with $J_x$, $J_y$ and $J_z$ the components of J in the x-, y- and z-direction respectively. But we don’t have continuity in quantum mechanics, and so the concept of a component of a vector needs to be carefully interpreted. There’s nothing definite there, like in classical mechanics: all we have is amplitudes, and all we can do is calculate probabilities, or expected values based on those amplitudes.

Huh? Yes. In fact, the concept of the magnitude of a vector itself becomes rather fuzzy: all we can do really is calculate its expected value. Think of it: in the classical world, we have a $J^2 = \mathbf{J}\cdot\mathbf{J}$ product that’s independent of the direction of J. For example, if J is all in the x-direction, then $J_y$ and $J_z$ will be zero, and $J^2 = J_x^2$. If it’s all in the y-direction, then $J_x$ and $J_z$ will be zero and all of the magnitude of J will be in the y-direction only, so we write: $J^2 = J_y^2$. Likewise, if J does not have any z-component, then our J•J product will only include the x- and y-components: $\mathbf{J}\cdot\mathbf{J} = J_x^2 + J_y^2$. You get the idea: the $J^2 = \mathbf{J}\cdot\mathbf{J}$ product is independent of the direction of J exactly because, in classical mechanics, J actually has a precise and unambiguous magnitude and direction and, therefore, actually has a precise and unambiguous component in each direction. So we’d measure $J_x$, $J_y$ and $J_z$ and, regardless of the actual direction of J, we’d find its magnitude $|J| = J = +\sqrt{J^2} = +(J_x^2 + J_y^2 + J_z^2)^{1/2}$.

In quantum mechanics, we just don’t have quantities like that. We say that Jx, Jy and Jz have an amplitude to take on a value that’s equal to +ħ, 0 or −ħ (or whatever other value is allowed by the spin number of the system). Now that we’re talking spin numbers, please note that this characteristic number is usually denoted by j, which is a bit confusing, but so be it. So j can be 0, 1/2, 1, 3/2, etcetera, and the number of ‘permitted values’ is 2j + 1, with each value being separated from the next by an amount equal to ħ. So we have 1, 2, 3, 4, 5 etcetera possible values for Jx, Jy and Jz respectively. But let me get back to the lesson. We just can’t do the same thing in quantum mechanics. For starters, we can’t measure Jx, Jy, and Jz simultaneously: our Stern-Gerlach apparatus has a certain orientation and, hence, measures one component of J only. So what can we do?

Frankly, we can only do some math here. The wave-mechanical approach does allow us to think of the expected value of $J^2 = \mathbf{J}\cdot\mathbf{J} = J_x^2 + J_y^2 + J_z^2$, so we write:

$$E[J^2] = E[\mathbf{J}\cdot\mathbf{J}] = E[J_x^2 + J_y^2 + J_z^2] = \;?$$

[Feynman’s use of the 〈 and 〉 brackets to denote an expected value is hugely confusing, because these brackets are also used to denote an amplitude. So I’d rather use the more commonly used E[X] notation.] Now, it is a rather remarkable property, but the expected value of the sum of two or more random variables is equal to the sum of the expected values of the variables, even if those variables may not be independent. So we can confidently use the linearity property of the expected value operator and write:

$$E[J_x^2 + J_y^2 + J_z^2] = E[J_x^2] + E[J_y^2] + E[J_z^2]$$

Now we need something else. It’s also just part of the quantum-mechanical approach to things and so you’ll just have to accept it. It sounds rather obvious but it’s actually quite deep: if we measure the x-, y- or z-component of the angular momentum of a random particle, then each of the possible values is equally likely to occur. So that means, in our case, that the +ħ, 0 or −ħ values are equally likely, so their likelihood is one in three, i.e. 1/3. Again, that sounds obvious but it’s not. Indeed, please note, once again, that we can’t measure Jx, Jy, and Jz simultaneously, so the ‘or’ in x-, y- or z-component is an exclusive ‘or’. Of course, I must add this equipartition of likelihoods is valid only because we do not have a preferred direction for J: the particles in our beam have random ‘orientations’. Let me give you the lingo for this: we’re looking at an unpolarized beam. You’ll say: so what? Well… Again, think about what we’re doing here: we may or may not assume that the Jx, Jy, and Jz variables are related. In fact, in classical mechanics, they surely are: they’re determined by the magnitude and direction of J. Hence, they are not random at all! But let me continue, so you see what comes out.

Because the +ħ, 0 and −ħ values are equally likely, we can write: $E[J_x^2] = \hbar^2/3 + 0/3 + (-\hbar)^2/3 = [\hbar^2 + 0 + (-\hbar)^2]/3 = 2\hbar^2/3$. In case you wonder, that’s just the definition of the expected value operator: $E[X] = p_1 x_1 + p_2 x_2 + \dots = \sum_i p_i x_i$, with $p_i$ the likelihood of the possible value $x_i$. So we take a weighted average with the respective probabilities as the weights. However, in this case, with an unpolarized beam, the weighted average becomes a simple average.

Now, $E[J_y^2]$ and $E[J_z^2]$ are – rather unsurprisingly – also equal to $2\hbar^2/3$, so we find that $E[J^2] = E[J_x^2] + E[J_y^2] + E[J_z^2] = 3\cdot(2\hbar^2/3) = 2\hbar^2$ and, therefore, we’d say that the magnitude of the angular momentum is equal to $|J| = J = +\sqrt{2}\cdot\hbar \approx 1.414\cdot\hbar$. Now that value is not equal to the maximum value of our x-, y-, z-component of J, or the component of J in whatever direction we’d want to measure it. That maximum value is ħ, without the √2 factor, so the magnitude we’ve just calculated is some 40% larger than that maximum!
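The arithmetic above is easy to reproduce. The sketch below works in units of ħ and uses exact fractions; the helper name `expected_square` is mine, and the only physical input is the assumption made in the text, i.e. that the three permitted values are equally likely in an unpolarized beam.

```python
from fractions import Fraction

def expected_square(values):
    """E[X^2] for equally likely values: the simple average of the squares."""
    return sum(Fraction(v) ** 2 for v in values) / len(values)

# Permitted values of one component for a spin-1 system, in units of hbar.
spin_one_values = [1, 0, -1]

e_jx2 = expected_square(spin_one_values)  # E[Jx^2] in units of hbar^2
e_j2 = 3 * e_jx2                          # E[J^2] = E[Jx^2] + E[Jy^2] + E[Jz^2]
magnitude = float(e_j2) ** 0.5            # |J| in units of hbar

print(e_jx2, e_j2, magnitude)
```

The magnitude comes out as √2 in units of ħ, while the largest value any single component can take is 1.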

Now, you’ve probably fallen asleep by now but, what this actually says, is that the angular momentum, in quantum mechanics, is never completely in any direction. We can state this in another way: it implies that, in quantum mechanics, there’s no such thing really as a ‘definite’ direction of the angular momentum.

[…]

OK. Enough on this. Let’s move on to a more ‘real’ example. Before I continue though, let me generalize the results above:

[I] A particle, or a system, will have a characteristic spin number: j. That number is always an integer or a half-integer, and it determines a discrete set of possible values for the component of the angular momentum J in any direction.

[II] The number of values is equal to 2j + 1, and these values are separated by ħ, which is why they are usually measured in units of ħ, i.e. Planck’s reduced constant: $\hbar \approx 1.05\times10^{-34}$ J·s, so that’s tiny but real. 🙂 [It’s always good to remind oneself that we’re actually trying to describe reality.] For example, the permitted values for a spin-3/2 particle are +3ħ/2, +ħ/2, −ħ/2 and −3ħ/2 or, measured in units of ħ, +3/2, +1/2, −1/2 and −3/2. When discussing spin-1/2 particles, we’ll often refer to the two possible states as the ‘up’ and the ‘down’ state respectively. For example, we may write the amplitude for an electron or a proton to have an angular momentum in the x-direction equal to +ħ/2 or −ħ/2 as 〈+x〉 and 〈−x〉 respectively. [Don’t worry too much about it right now: you’ll get used to the notation quickly.]

[III] The classical concepts of angular momentum, and the related magnetic moment, have their limits in quantum mechanics. The magnitude of a vector quantity like angular momentum is generally not equal to the maximum value of the component of that quantity in any direction. The general rule is:

$$J^2 = j\cdot(j+1)\,\hbar^2 > j^2\cdot\hbar^2$$

So the maximum value of any component of J in whatever direction (i.e. j·ħ) is smaller than the magnitude of J (i.e. √[ j·(j+1)]·ħ). This implies we cannot associate any precise and unambiguous direction with quantities like the angular momentum J or the magnetic moment μ. As Feynman puts it:

“That the energy of an atom [or a particle] in a magnetic field can have only certain discrete energies is really not more surprising than the fact that atoms in general have only certain discrete energy levels—something we mentioned often in Volume I. Why should the same thing not hold for atoms in a magnetic field? It does. But it is the attempt to correlate this with the idea of an oriented magnetic moment that brings out some of the strange implications of quantum mechanics.”
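Rules [I] to [III] can be checked for a few spin numbers with the same averaging argument we used for the spin-1 case. This is a sketch under the stated assumption of an unpolarized beam (all 2j + 1 values equally likely); the function names are mine and everything is expressed in units of ħ.

```python
from fractions import Fraction

def permitted_values(j):
    """The 2j + 1 permitted component values, in units of hbar, from +j down to -j."""
    j = Fraction(j)
    count = int(2 * j) + 1
    return [j - k for k in range(count)]

def expected_j_squared(j):
    """E[J^2] in units of hbar^2 for an unpolarized beam: 3 times the average of m^2."""
    values = permitted_values(j)
    return 3 * sum(m * m for m in values) / len(values)

for j in (Fraction(1, 2), 1, Fraction(3, 2), 2):
    j = Fraction(j)
    assert len(permitted_values(j)) == 2 * j + 1   # rule [II]
    assert expected_j_squared(j) == j * (j + 1)    # rule [III]
    assert expected_j_squared(j) > j * j           # magnitude exceeds the largest component
    print(j, permitted_values(j), expected_j_squared(j))
```

For j = 3/2, for example, the list is +3/2, +1/2, −1/2, −3/2 and the expected value of J² is 15/4, which is larger than (3/2)² = 9/4.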

A real example: the disintegration of a muon in a magnetic field

I talked about muon disintegration before, when writing a much more philosophical piece on symmetries in Nature and time reversal in particular. I used the illustration below. We’ve got an incoming muon that’s being brought to rest in a block of material, and then, as muons do, it disintegrates, emitting an electron and two neutrinos. As you can see, the decay direction is (mostly) in the direction of the axial vector that’s associated with the spin direction, i.e. the direction of the grey dashed line. However, there’s some angular distribution of the decay direction, as illustrated by the blue arrows, which are supposed to visualize the decay products, i.e. the electron and the neutrinos.

This disintegration process is very interesting from a more philosophical side. The axial vector isn’t ‘real’: it’s a mathematical concept, a pseudovector. A pseudo- or axial vector is the product of two so-called true vectors, aka polar vectors. Just look back at what I wrote about the angular momentum: the J in the J = r×p = r×m·v formula is such a vector, and its direction depends on the spin direction, which is clockwise or counter-clockwise, depending on which side you’re looking at it from. Having said that, who’s to judge if the product of two ‘true’ vectors is any less ‘true’ than the vectors themselves? 🙂

The point is: the disintegration process does not respect what is referred to as P-symmetry. That’s because our mathematical conventions (like all of these right-hand rules that we’ve introduced) are unambiguous, and they tell us that the pseudovector in the mirror image of what’s going on, has the opposite direction. It has to, as per our definition of a vector product. Hence, our fictitious muon in the mirror should send its decay products in the opposite direction too! So… Well… The mirror image of our muon decay process is actually something that’s not going to happen: it’s physically impossible. So we’ve got a process in Nature here that doesn’t respect ‘mirror’ symmetry. Physicists prefer to call it ‘P-symmetry’, for parity symmetry, because it involves a flip of sign of all space coordinates, so there’s a parity inversion indeed. So there’s processes in Nature that don’t respect it but, while that’s all very interesting, it’s not what I want to write about. [Just check that post of mine if you’d want to read more.] Let me, therefore, use another illustration—one that’s more to the point in terms of what we do want to talk about here:

So we’ve got the same muon here – well… A different one, of course! 🙂 – entering that block (A) and coming to a grinding halt somewhere in the middle, and then it disintegrates in a few micro-seconds, which is an eternity at the atomic or sub-atomic scale. It disintegrates into an electron and two neutrinos, as mentioned above, with some spread in the decay direction. [In case you wonder where we can find muons… Well… I’ll let you look it up yourself.] So we have:

Now it turns out that the presence of a magnetic field (represented by the B arrows in the illustration above) can drastically change the angular distribution of decay directions. That shouldn’t surprise us, of course, but how does it work, exactly? Well… To simplify the analysis, we’ve got a polarized beam here: the spin direction of all muons before they enter the block and/or the magnetic field, i.e. at time t = 0, is in the +x-direction. So we filtered them just before they entered the block. [I will come back to this ‘filtering’ process.] Now, if the muon’s spin would stay that way, then the decay products – and the electron in particular – would just go straight, because all of the angular momentum is in that direction. However, we’re in the quantum-mechanical world here, and so things don’t stay the same. In fact, as we explained, there’s no such thing as a definite angular momentum: there’s just an amplitude to be in the +x state, and that amplitude changes in time and in space.

How exactly? Well… We don’t know, but we can apply some clever tricks here. The first thing to note is that our magnetic field will add to the energy of our muon. So, as I explained in my previous post, the magnetic field adds to the E in the exponent of our complex-valued wavefunction $a\cdot e^{-(i/\hbar)(E\cdot t - \mathbf{p}\cdot\mathbf{x})}$. In our example, we’ve got a magnetic field in the z-direction only, so that U = −μ•B reduces to $U = -\mu_z\cdot B$, and we can re-write our wavefunction as:

$$a\cdot e^{-(i/\hbar)[(E+U)\cdot t - \mathbf{p}\cdot\mathbf{x}]} = a\cdot e^{-(i/\hbar)(E\cdot t - \mathbf{p}\cdot\mathbf{x})}\cdot e^{(i/\hbar)(\mu_z\cdot B\cdot t)}$$

Of course, the magnetic field only acts from t = 0 to when the muon disintegrates, which we’ll denote by the point t = τ. So what we get is that the probability amplitude of a particle that’s been in a uniform magnetic field changes by a factor $e^{(i/\hbar)(\mu_z\cdot B\cdot\tau)}$. Note that it’s a factor indeed: we use it to multiply. You should also note that this is a complex exponential, so it’s a periodic function, with its real and imaginary part oscillating between −1 and +1. Finally, we know that $\mu_z$ can take on only certain values: for a spin-1/2 particle, they are plus or minus some number, which we’ll simply denote as μ, so that’s without the subscript, so our factor becomes:

$$e^{(i/\hbar)(\pm\mu\cdot B\cdot t)}$$

[The plus or minus sign needs to be explained here, so let’s do that quickly: we have two possible states for a spin-1/2 particle, one ‘up’, and the other ‘down’. But then we also know that the phase of our complex-valued wave function turns clockwise, which is why we have a minus sign in the exponent of our $e^{-i\theta}$ expression. In short, for the ‘up’ state, we should take the positive value, i.e. +μ, but the minus sign in the exponent of our $e^{-i\theta}$ function makes it negative again, so our factor is $e^{-(i/\hbar)(\mu\cdot B\cdot t)}$ for the ‘up’ state, and $e^{+(i/\hbar)(\mu\cdot B\cdot t)}$ for the ‘down’ state.]

OK. We get that, but that doesn’t get us anywhere—yet. We need another trick first. One of the most fundamental rules in quantum-mechanics is that we can always calculate the amplitude to go from one state, say φ (read: ‘phi’), to another, say χ (read: ‘khi’), if we have a complete set of so-called base states, which we’ll denote by the index i or j (which you shouldn’t confuse with the imaginary unit, of course), using the following formula:

〈 χ | φ 〉 = ∑ 〈 χ | i 〉〈 i | φ 〉

I know this is a lot to swallow, so let me start with the notation. You should read 〈 χ | φ 〉 from right to left: it’s the amplitude to go from state φ to state χ. This notation is referred to as the bra-ket notation, or the Dirac notation. [Dirac notation sounds more scientific, doesn’t it?] The left part, i.e. 〈 χ |, is the bra, and the right part, i.e. | φ 〉, is the ket. In our example, we wonder what the amplitude is for our muon staying in the +x state. Because that amplitude is time-dependent, we can write it as $A_+(\tau)$ = 〈 +x at time t = τ | +x at time t = 0 〉 = 〈 +x at t = τ | +x at t = 0 〉 or, using a very misleading shorthand, 〈 +x | +x 〉. [The shorthand is misleading because the +x in the ket obviously means something else than the +x in the bra.]

But let’s apply the rule. We’ve got two states with respect to each coordinate axis only here. For example, with respect to the z-axis, the spin values are +z and −z respectively. [As mentioned above, we actually mean that the angular momentum in this direction is either +ħ/2 or −ħ/2, aka ‘up’ or ‘down’ respectively, but then quantum theorists seem to like all kinds of symbols better, so we’ll use the +z and −z notations for these two base states here.] So now we can use our rule and write:

A+(t) = 〈 +x | +x 〉 = 〈 +x | +z 〉〈 +z | +x 〉 + 〈 +x | −z 〉〈 −z | +x 〉

You’ll say this doesn’t help us any further, but it does, because there is another set of rules, which are referred to as transformation rules, which gives us those 〈 +z | +x 〉 and 〈 −z | +x 〉 amplitudes. They’re real numbers, and it’s the same number for both amplitudes.

〈 +z | +x 〉 = 〈 −z | +x 〉 = 1/√2

This shouldn’t surprise you too much: the square root disappears when squaring, so we get two equal probabilities – 1/2, to be precise – that add up to one, as they have to because of the normalization rule: the sum of all probabilities has to be one, always. [I can feel your impatience, but just hang in here for a while, as I guide you through what is likely to be your very first quantum-mechanical calculation.] Now, the 〈 +z | +x 〉 = 〈 −z | +x 〉 = 1/√2 amplitudes are the amplitudes at time t = 0, so let’s be somewhat less sloppy with our notation and write 〈 +z | +x 〉 as C+(0) and 〈 −z | +x 〉 as C−(0), so we write:

〈 +z | +x 〉 = C+(0) = 1/√2

〈 −z | +x 〉 = C−(0) = 1/√2

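Now we know what happens with those amplitudes over time: that $e^{(i/\hbar)(\pm\mu\cdot B\cdot t)}$ factor kicks in, and so we have:

$$C_+(t) = C_+(0)\cdot e^{-(i/\hbar)(\mu\cdot B\cdot t)} = e^{-(i/\hbar)(\mu\cdot B\cdot t)}/\sqrt{2}$$

$$C_-(t) = C_-(0)\cdot e^{+(i/\hbar)(\mu\cdot B\cdot t)} = e^{+(i/\hbar)(\mu\cdot B\cdot t)}/\sqrt{2}$$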
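It may help to see that these two factors only rotate the phase of the amplitudes: the probabilities of finding the +z or −z state stay at 1/2 each. The sketch below assumes illustrative numbers that are not in the post (a moment of 4.49×10⁻²⁶ J/T, which is of the order of the muon’s magnetic moment, and a field of 0.01 T); the function names are mine.

```python
import cmath
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J*s

def c_plus(t, mu, b):
    """Amplitude to be in the +z state at time t, starting from 1/sqrt(2)."""
    return cmath.exp(-1j * mu * b * t / HBAR) / math.sqrt(2)

def c_minus(t, mu, b):
    """Amplitude to be in the -z state at time t, starting from 1/sqrt(2)."""
    return cmath.exp(+1j * mu * b * t / HBAR) / math.sqrt(2)

# Assumed illustrative values, not taken from the post.
MU, B = 4.49e-26, 0.01  # J/T and T
t = 1.0e-6              # s

p_up = abs(c_plus(t, MU, B)) ** 2
p_down = abs(c_minus(t, MU, B)) ** 2
print(p_up, p_down, p_up + p_down)
```

The interesting physics is therefore not in C+(t) or C−(t) separately, but in how their phases combine when we ask for the amplitude to be in the +x state again.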

As for the plus and minus signs, see my remark on the tricky ± business in regard to μ. To make a long story somewhat shorter :-), our expression for A+(t) = 〈 +x at t | +x 〉 now becomes:

A+(t) = 〈 +x | +z 〉·C+(t) + 〈 +x | −z 〉·C−(t)

Now, you wouldn’t be too surprised if I’d just tell you that the 〈 +x | +z 〉 and 〈 +x | −z 〉 amplitudes are also real-valued and equal to 1/√2, but you can actually use yet another rule we’ll generalize shortly: the amplitude to go from state φ to state χ is the complex conjugate of the amplitude to go from state χ to state φ, so we write 〈 χ | φ 〉 = 〈 φ | χ 〉*, and therefore:

〈 +x | +z 〉 = 〈 +z | +x 〉* = (1/√2)* = (1/√2)

〈 +x | −z 〉 = 〈 −z | +x 〉* = (1/√2)* = (1/√2)

So our expression for A+(t) = 〈 +x at t | +x 〉 now becomes:

$$A_+(t) = e^{-(i/\hbar)(\mu\cdot B\cdot t)}/2 + e^{(i/\hbar)(\mu\cdot B\cdot t)}/2$$

That’s the sum of a complex-valued function and its complex conjugate, and we’ve shown more than once (see my page on the essentials, for example) that such a sum reduces to the sum of the real parts of the complex exponentials. [You should not expect any explanation of Euler’s $e^{i\theta} = \cos\theta + i\cdot\sin\theta$ rule at this level of understanding.] In short, we get the following grand result:

$$A_+(t) = \cos(\mu\cdot B\cdot t/\hbar)$$

The big question, of course: what does this actually mean? 🙂 Well… Just square this thing and you get the probabilities shown below. [Note that the period of a squared cosine function is π, instead of 2π, which you can easily verify using an online graphing tool.]
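The whole calculation fits in a few lines of code. The sketch below builds the amplitude from the two base states exactly as in the derivation above and checks that it reduces to a cosine, and that the probability is a squared cosine with period π in the phase. The function names are mine, and the phase variable stands for μ·B·t/ħ.

```python
import cmath
import math

def amplitude_stay_plus_x(phase):
    """A+(t) as a sum over the +z and -z base states; phase = mu*B*t/hbar."""
    x_given_z = 1 / math.sqrt(2)                     # <+x|+z> = <+x|-z> = 1/sqrt(2)
    c_plus = cmath.exp(-1j * phase) / math.sqrt(2)   # C+(t)
    c_minus = cmath.exp(+1j * phase) / math.sqrt(2)  # C-(t)
    return x_given_z * c_plus + x_given_z * c_minus

def probability_stay_plus_x(phase):
    """Probability to find the muon in the +x state again: |A+(t)|^2."""
    return abs(amplitude_stay_plus_x(phase)) ** 2

for phase in (0.0, 0.3, math.pi / 4, math.pi / 2, 2.0):
    a = amplitude_stay_plus_x(phase)
    assert abs(a - math.cos(phase)) < 1e-12                              # A+ is a cosine
    assert abs(probability_stay_plus_x(phase) - math.cos(phase) ** 2) < 1e-12
    # The squared cosine repeats after a phase of pi, not 2*pi.
    assert abs(probability_stay_plus_x(phase + math.pi) - probability_stay_plus_x(phase)) < 1e-12
```

At a phase of π/2 the probability drops to zero: at that moment the muon is certain not to be found in the +x state.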

Because you’re tired of this business, you probably don’t realize what we’ve just done. It’s spectacular and mundane at the same time. Let me quote Feynman to summarize the results:

“We find that the chance of catching the decay electron in the electron counter varies periodically with the length of time the muon has been sitting in the magnetic field. The frequency depends on the magnetic moment μ. The magnetic moment of the muon has, in fact, been measured in just this way.”

As far as I am concerned, the key result is that we’ve learned how to work with those mysterious amplitudes, and the wavefunction, in a practical way, thereby using all of the theoretical rules of the quantum-mechanical approach to real-life physical situations. I think that’s a great leap forward, and we’ll re-visit those rules in a more theoretical and philosophical démarche in the next post. As for the example itself, Feynman takes it much further, but I’ll just copy the Grand Master here:

Huh? Well… I am afraid I have to leave it at this, as I discussed the precession of ‘atomic’ magnets elsewhere (see my post on precession and diamagnetism), which gives you the same formula: ωp = μ·B/J (just substitute ±ħ/2 for J). However, the derivation above approaches it from an entirely different angle, which is interesting. Of course, all fits. 🙂 However, I’ll let you do your own homework now. I hope to see you tomorrow for the mentioned theoretical discussion. Have a nice evening, or weekend – or whatever! 🙂
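To see that it all fits, note that the probability cos²(μ·B·t/ħ) equals [1 + cos(2μ·B·t/ħ)]/2, so it oscillates with angular frequency 2μ·B/ħ, which is exactly μ·B/J for J = ħ/2. The sketch below checks this numerically. The numbers are assumed for illustration only (a moment of the order of the muon’s and a field of 0.01 T), and the function names are mine.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J*s

def precession_frequency(mu, b, j=HBAR / 2):
    """Angular frequency omega_p = mu * B / J, in rad/s."""
    return mu * b / j

# Assumed illustrative values, not taken from the post.
MU, B = 4.49e-26, 0.01  # J/T and T

omega_p = precession_frequency(MU, B)
period = 2 * math.pi / omega_p  # time for one full precession cycle, in s

def probability(t):
    """Probability to find the muon in the +x state at time t."""
    return math.cos(MU * B * t / HBAR) ** 2

print(omega_p, period, probability(period / 4), probability(period / 2), probability(period))
```

After one precession period the probability is back at one, and halfway through it is zero, so the counting rate in the electron counter goes up and down at the precession frequency.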

Some content on this page was disabled on June 16, 2020 as a result of a DMCA takedown notice from The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/

Spin and angular momentum in quantum mechanics

Note: A few years after writing the post below, I published a paper on the anomalous magnetic moment which makes (some of) what is written below irrelevant. It gives a clean classical explanation for it. Have a look by clicking on the link here !

Original blog post:

Feynman starts his Volume of Lectures on quantum mechanics (so that’s Volume III of the whole series) with the rules we already know, so that’s the ‘special’ math involving probability amplitudes, rather than probabilities. However, these introductory chapters assume theoretical zero-spin particles, which means they don’t have any angular momentum. While that makes it much easier to understand the basics of quantum math, real elementary particles do have angular momentum, which makes the analysis much more complicated. Therefore, Feynman makes it very clear, after his introductory chapters, that he expects all prospective readers of his third volume to first work their way through chapter 34 and 35 of the second volume, which discusses the angular momentum of elementary particles from both a classical as well as a quantum-mechanical perspective. So that’s what we will do here. I have to warn you, though: while the mentioned two chapters are more generous with text than other textbooks on quantum mechanics I’ve looked at, the matter is still quite hard to digest. By way of introduction, Feynman writes the following:

“The behavior of matter on a small scale—as we have remarked many times—is different from anything that you are used to and is very strange indeed. Understanding of these matters comes very slowly, if at all. One never gets a comfortable feeling that these quantum-mechanical rules are ‘natural’. Of course they are, but they are not natural to our own experience at an ordinary level. The attitude that we are going to take with regard to this rule about angular momentum is quite different from many of the other things we have talked about. We are not going to try to ‘explain’ it but tell you what happens.”

I personally feel it’s not all as mysterious as Feynman claims it to be, but I’ll let you judge for yourself. So let’s just go for it and see what comes out. 🙂

Atomic magnets and the g-factor

When discussing electromagnetic radiation, we introduced the concept of atomic oscillators. It was a very useful model to help us understand what’s supposed to be going on. Now we’re going to introduce atomic magnets. The model is based on the classical idea of an electron orbiting around a proton. Of course, we know this classical idea is wrong: we don’t have nice circular electron orbitals, and our discussion on the radius of the electron in our previous post makes it clear that the idea of the electron itself is rather fuzzy. Nevertheless, the classical concepts used to analyze rotation are also used, mutatis mutandis, in quantum mechanics. Mutatis mutandis means: with necessary alterations. So… Well… Let’s go for it. 🙂 The basic idea is the following: an electron in a circular orbit is a circular current and, hence, it causes a magnetic field, i.e. a magnetic flux through the area of the loop, as illustrated below.

As such, we’ll have a magnetic (dipole) moment, and you may want to review my post(s) on that topic so as to ensure you understand what follows. The magnetic moment (μ) is the product of the current (I) and the area of the loop ($\pi\cdot r^2$), and its conventional direction is given by the μ vector in the illustration below, which also shows the other relevant scalar and/or vector quantities, such as the velocity v and the orbital angular momentum J. The orbital angular momentum is to be distinguished from the spin angular momentum, which results from the spin around its own axis. So the spin angular momentum – which is often referred to as the spin tout court – is not depicted below, and will only be discussed in a few minutes.

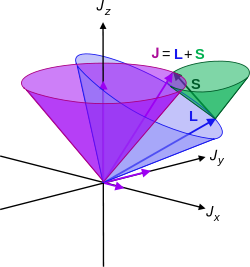

Let me interject something on notation here. Feynman always uses J, for whatever kind of angular momentum. That’s not so usual. Indeed, if you google a bit, you’ll see the convention is to use S and L to distinguish spin and orbital angular momentum respectively. If we use S and L, we can write the total angular momentum as J = S + L, and the illustration below shows how the S and L vectors are to be added. It looks a bit complicated, so you can skip this for now and come back to it later. But just try to visualize things:

- The L vector is moving around, so that assumes the orbital plane is moving around too. That happens when we’d put our atomic system in a magnetic field. We’ll come back to that. In what follows, we’ll assume the orbital plane is not moving.

- The S vector here is also moving, which also assumes the axis of rotation is not steady. What’s going on here is referred to as precession, and we discussed it when presenting the math one needs to understand gyroscopes.

- Adding S and L yields J, the total angular momentum. Unsurprisingly, this vector wiggles around too. Don’t worry about the magnitudes of the vectors here. Also, in case you’d wonder why the axis of symmetry for the movement of the J vector happens to be the Jz axis, the answer is simple: we chose the coordinate system so as to ensure that was the case.

But I am digressing. I just inserted the illustration above to give you an inkling of where we’re going with this. Indeed, what’s shown above will make it easier for you to see how we can generalize the analysis that we’ll do now, which is an analysis of the orbital angular momentum and the related magnetic moment only. Let me copy the illustration we started with once more, so you don’t have to scroll up to see what we’re talking about.

So we have a charge orbiting around some center. It’s a classical analysis, and so it’s really like a planet around the Sun, except that we should remember that likes repel, and opposites attract, so we’ve got a minus sign in the force law here.

Let’s go through the math. The magnetic moment is the current times the area of the loop. As the velocity is constant, the current is just the charge q times the frequency of rotation. The frequency of rotation is, of course, the velocity (i.e. the distance traveled per second) divided by the circumference of the orbit (i.e. 2π·r). Hence, we write: I = (qe·v)/(2π·r) and, therefore: μ = (qe·v·π·r²)/(2π·r) = qe·v·r/2. Note that, as per the convention, current is defined as a flow of positive charges, so the illustration above actually assumes we’re talking about some proton in orbit, so q = qe would be the elementary charge (+1 in units of qe). If we were talking about an electron, then its charge is to be denoted as –qe (minus qe, i.e. −1), and we’d need to reverse the direction of μ, which we’ll do in a moment. However, to simplify the discussion, you should just think of some positive charge orbiting the way it does in the illustration above.

OK. That’s all there’s to say about the magnetic moment—for the time being, that is. Let’s think about the angular momentum now. It’s orbital angular momentum here, and so that’s the type of angular momentum we discussed in our post on gyroscopes. We denoted it as L indeed – i.e. not as J, but that’s just a matter of conventions – and we noted that L could be calculated as the vector cross product of the position vector r and the momentum vector p, as shown in the animation below, which also shows the torque vector τ.

The angular momentum L changes in the animation above. In our J case above, it doesn’t. Also, unlike what’s happening with the angular momentum of that swinging ball above, the magnitude of our J doesn’t change. It remains constant, and it’s equal to |J| = J = |r×p| = |r|·|p|·sinθ = r·p = r·m·v (r and p are at right angles in a circular orbit, so sinθ = 1). One should note this is a non-relativistic formula but, as the relative velocity of an electron (v/c) is equal to the fine-structure constant α ≈ 0.0073 (see my post on the fine-structure constant if you wonder where this formula comes from), it’s OK to not include the Lorentz factor in our formulas for now.
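To get a feel for how small the relativistic correction is, here is a minimal sketch that computes the Lorentz factor for v/c = α. The rounded value of α and the function name `lorentz_factor` are my own choices for illustration, not something taken from the post on the fine-structure constant.

```python
import math

# Fine-structure constant, rounded (the text above uses alpha ≈ 0.0073).
ALPHA = 7.2973525693e-3

def lorentz_factor(beta: float) -> float:
    """Return gamma = 1/sqrt(1 - beta^2) for a speed v = beta*c."""
    return 1.0 / math.sqrt(1.0 - beta * beta)

gamma = lorentz_factor(ALPHA)
# Relative size of the correction we drop when writing p = m*v instead of gamma*m*v.
correction = gamma - 1.0
print(f"gamma = {gamma:.8f}, correction = {correction:.2e}")
```

The correction comes out at a few parts in 100,000 (roughly α²/2), which is why leaving out the Lorentz factor does no harm here.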

Now, as I mentioned already, the illustration we’re using to explain μ and J is somewhat unreal because it assumes a positive charge q, and so μ and J point in the same direction in this case, which is not the case if we’d be talking an actual atomic system with an electron orbiting around a proton. But let’s go along with it as for now and so we’ll put the required minus sign in later. We can combine the J = r·m·v and μ = q·v·r/2 formulas to write:

μ = (q/2m)·J or μ/J = (q/2m) (electron orbit)

In other words, the ratio of the magnetic moment and the angular momentum depends on (1) the charge (q) and (2) the mass of the charge, and on those two variables only. So the ratio does not depend on the velocity v nor on the radius r. It can be noted that the q/2m factor is often referred to as the gyromagnetic factor (not to be confused with the g-factor, which we’ll introduce shortly). It’s good to do a quick dimensional check of this relation: the magnetic moment is expressed in ampère (i.e. coulomb per second) times the loop area, so that’s (C/s)·m². On the right-hand side, we have the dimension of the gyromagnetic factor, which is C/kg, times the dimension of the angular momentum, which is m·kg·m/s, so we have the same units on both sides: C·m²/s, which is often written as joule per tesla (J/T): the joule is the energy unit (1 J = 1 N·m), and the tesla measures the strength of the magnetic field (1 T = 1 (N·s)/(C·m)). OK. So that works out.
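The claim that μ/J does not depend on v or r is easy to check numerically. The sketch below just codes up the two classical formulas (current times area, and r·m·v) and compares their ratio with q/2m. The (v, r) pairs are hypothetical numbers picked only to show the independence, and the constants are values I typed in by hand.

```python
import math

Q_E = 1.602176634e-19   # elementary charge in C
M_E = 9.1093837015e-31  # electron mass in kg

def orbital_moment(q, v, r):
    """Magnetic moment of a charge q on a circular orbit: current times area."""
    current = q * v / (2 * math.pi * r)   # charge passing a given point per second
    area = math.pi * r**2
    return current * area                 # algebraically equal to q*v*r/2

def orbital_angular_momentum(m, v, r):
    """Non-relativistic J = r*m*v for a circular orbit."""
    return r * m * v

# Hypothetical (v, r) pairs, chosen only to show the ratio does not depend on them.
for v, r in [(2.0e6, 5.0e-11), (1.0e6, 2.0e-10), (3.0e5, 1.0e-9)]:
    ratio = orbital_moment(Q_E, v, r) / orbital_angular_momentum(M_E, v, r)
    print(f"v = {v:.1e} m/s, r = {r:.1e} m -> mu/J = {ratio:.6e} C/kg")

print(f"q/2m = {Q_E / (2 * M_E):.6e} C/kg")
```

Every line prints the same ratio, which is just q/2m, as the formula says.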

So far, so good. The story is a little bit different for the spin angular momentum and the spin magnetic moment. The formula is the following:

μ = (q/m)·J (electron spin)

This formula says that the μ/J ratio is twice what it is for the orbital motion of the electron. Why is that? Feynman says “the reasons are pure quantum-mechanical—there is no classical explanation.” So I’d suggest we just leave that question open for the moment and see if we’re any wiser once we’ve worked ourselves through all of his Lectures on quantum physics. 🙂 Let’s just go along with it as for now.

Now, we can write both formulas – i.e. the formula for the spin and the orbital angular momentum – in a more general way using the format below:

μ = –g·(qe/2me)·J

Why the minus sign? Well… I wanted to get the sign right this time. Our model assumed some positive charge in orbit, but we want a formula for an atomic system, and so our circling charge should be an electron. So the formula above is the formula for an electron, and the direction of the magnetic moment and of the angular momentum will be opposite for electrons: it just replaces q by –qe. The format above also applies to any atomic system: as Feynman writes, “For an isolated atom, the direction of the magnetic moment will always be exactly opposite to the direction of the angular momentum.” So the g-factor will be characteristic of the state of the atom. It will be 1 for a pure orbital moment, 2 for a pure spin moment, or some other number in-between for a complicated system like an atom, indeed.

You may have one last question: why qe/2m instead of qe/m in the middle? Well… If we’d take qe/m, then g would be 1/2 for the orbital angular momentum, and the initial idea with g was that it would be some integer (we’ll quickly see that’s an idea only). So… Well… It’s just one more convention. Of course, conventions are not always respected so sometimes you’ll see the expression above written without the minus sign, so you may see it as μ = g·(qe/2me)·J. In that case, the g-factor for our example involving the spin angular momentum and the spin magnetic moment, will obviously have to be written as minus 2.

Of course, it’s easy to see that the formula for the spin of a proton will look the same, except that we should take the mass of the proton in the formula, so that’s mp instead of me. Having said that, the elementary charge remains what it is, but so we write it without the minus sign here. To make a long story short, the formula for the proton is:

μ = g·(qe/2mp)·J

OK. That’s clear enough. For electrons, the g-factor is referred to as the Landé g-factor, while the g-factor for protons or, more generally, for any spinning nucleus, is referred to as the nuclear g-factor. Now, you may or may not be surprised, but there’s a g-factor for neutrons too, despite the fact that they do not carry a net charge: the explanation for it must have something to do with the quarks that make up the neutron but that’s a complicated matter which we will not get into here. Finally, there is a g-factor for a whole atom, or a whole atomic system, and that’s referred to as… Well… Just the g-factor. 🙂 It’s, obviously, a number that’s characteristic of the state of the atom.

So… This was a big step forward. We’ve got all of the basics on that ‘magical’ spin number here, and so I hope it’s somewhat less ‘magical’ now. 🙂 Let me just copy the values of the g-factor for some elementary particles. It also shows how hard physicists have been trying to narrow down the uncertainty in the measurement. Quite impressive! The table comes from the Wikipedia article on it. I hope the explanations above will now enable you to read and understand that. 🙂

Let’s now move on to the next topic.

Spin numbers and states

Of course, we’re talking quantum mechanics and, therefore, J can only take on a finite number of values. While that’s weird – as weird as other quantum-mechanical things, such as the boson-fermion math, for example – it should not surprise us. As we will see in a moment, the values of J will determine the magnetic energy our system will acquire when we put it in some magnetic field and, as Feynman writes: “That the energy of an atom in the magnetic field can have only certain discrete energies is really not more surprising than the fact that atoms in general have only certain discrete energy levels. Why should the same thing not hold for atoms in a magnetic field? It does. It is just correlation of this with the idea of an oriented magnetic moment that brings out some of the strange implications of quantum mechanics.” Yep. That’s true. We’ll talk about that later.

Of course, you’ll probably want some ‘easier’ explanation. I am afraid I can’t give you that. All I can say is that, perhaps, you should think of our discussion on the fine-structure constant, which made it clear that the various radii of the electron, its velocity and its mass and/or energy are all related to one another and, hence, that they can only take on certain values. Indeed, of all the relations we discussed, there are two you should always remember. The first relationship is U = (e²/r) = α/r. So that links the energy (which we can express in equivalent mass units), the electron charge and its radius. The second thing you should remember is that the Bohr radius (r) and the classical electron radius (re) are also related through α: re/r = α². So you may want to think of the different values for J as being associated with different ‘orbitals’, so to speak. But that’s a very crude way of thinking about it, so I’d say: just accept the fact and see where it leads us. You’ll see, in a few moments from now, that the whole thing is not unlike the quantum-mechanical explanation of the blackbody radiation problem, which assumes that the permitted energy levels (or states) are equally spaced and h·f apart, with f the frequency of the light that’s being absorbed and/or emitted. So the atom takes up energies only h·f at a time. Here we’ve got something similar: the energy levels that we’ll associate with the discrete values of J – or J’s components, I should say – will also be equally spaced. Let me show you how it works, as that will make things somewhat more clear.

If we have an object with a given total angular momentum J in classical mechanics, then any of its components x, y or z, could take on any value from +J to −J. That’s not the case here. The rule is that the ‘system’ – the atom, the nucleus, or anything really – will have a characteristic number, which is referred to as the ‘spin’ of the system and, somewhat confusingly, it’s denoted by j (as you can see, it’s extremely important, indeed, to distinguish capital letters (like J) from small letters (like j) if you want to keep track of what we’re explaining here). Now, if we have that characteristic spin number j, then any component of J (think of the z-direction, for example) can take on only (one of) the following values:

Jz = j·ħ, (j−1)·ħ, (j−2)·ħ, …, −(j−2)·ħ, −(j−1)·ħ, −j·ħ

Note that we will always have 2j + 1 values. For example, if j = 3/2, we’ll have 2·(3/2) + 1 = 4 permitted values, and in the extreme case where j is zero, we’ll still have 2·0 + 1 = 1 permitted value: zero itself. So that basically says we have no angular momentum. […] OK. That should be clear enough, but let’s pause here for a moment and analyze this, just to make sure we ‘get’ this indeed. What’s being written here? What are those numbers? Let’s do a quick dimensional analysis first. Because j, j − 1, j − 2, etcetera are pure numbers, it’s only the dimension of ħ that we need to look at. We know ħ: it’s the Planck constant h, which is expressed in joule·second, i.e. J·s = N·m·s, divided by 2π. That makes sense, because we get the same dimension for the angular momentum. Indeed, the L or J = r·m·v formula also gives us the dimension of physical action, i.e. N·m·s. Just check it: [r]·[m]·[v] = m·kg·m/s = m·(N·s²/m)·m/s = N·m·s. Done!
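The counting rule (start at +j and step down by one until you reach −j, giving 2j + 1 values) can be sketched in a few lines. I am using exact fractions so the half-integers stay exact; the function name `permitted_values` is just mine, and the values are in units of ħ.

```python
from fractions import Fraction

def permitted_values(j):
    """Return the permitted values of Jz in units of hbar: j, j-1, ..., -j."""
    j = Fraction(j)
    two_j = 2 * j
    if j < 0 or two_j.denominator != 1:
        raise ValueError("j must be a non-negative integer or half-integer")
    # Step down from +j in unit steps; there are 2j + 1 values in total.
    return [j - k for k in range(int(two_j) + 1)]

for j in (Fraction(0), Fraction(1, 2), Fraction(1), Fraction(3, 2)):
    values = permitted_values(j)
    print(f"j = {j}: {len(values)} values -> {[str(v) for v in values]}")
```

The check on `2*j` being a whole number is the same constraint we are about to discuss: the difference between +j and −j has to be an integer number of steps.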

So we’ve got some kind of unit of action once more here, even if it’s not h but ħ = h/2π. That makes it a quantum of action expressed per radian rather than per full cycle. Just so you know, ħ = h/2π is about 1×10⁻³⁴ J·s ≈ 6.6×10⁻¹⁶ eV·s, and we could choose to express the components of J in terms of h rather than ħ, which introduces a factor 2π. That would boil down to saying that our unit length is not unity but the circumference of the unit circle, which is 2π times unity. Huh? Just think about it: h is a fundamental unit linked to one full cycle of something, so it all makes sense. Before we move on, you may want to compare the value of h or ħ with the energy of a photon, which is 1.6 to 3.2 eV in the visible light spectrum, but you should note that energy does not have the time dimension, and a second is an eternity in quantum physics, so the comparison is a bit tricky. So… […] Well… Let’s just move on. What about those coefficients? What constraints are there?

Well… The constraint is that the difference between +j and −j must be some integer, so +j−(−j) = 2j must be an integer. That implies that the spin number j is always an integer or a half-integer, depending on whether 2j is even or odd. Let’s do a few examples:

- A lithium (Li-7) nucleus has spin j = 3/2 and, therefore, the permitted values for the angular momentum around any axis (the z-axis, for example) are: 3/2, 3/2−1=1/2, 3/2−2=−1/2, and −3/2—all times ħ of course! Note that the difference between +j and –j is 3, and each ‘step’ between those two levels is ħ, as we’d like it to be.

- The nucleus of the much rarer Lithium-6 isotope is one of the few stable nuclei that has spin j = 1, so the permitted values are 1, 0 and −1. Again, all of these need to be multiplied with ħ to get the actual value for the J-component that we’re looking at. So each step is ‘one’ again, and the total difference (between +j and –j) is 2.

- An electron is a spin-1/2 particle, and so there are only two permitted values: +ħ/2 and −ħ/2. So there is just one ‘step’ and it’s equal to the whole difference between +j and –j. In fact, this is the most common situation, because we’ll be talking elementary fermions most of the time.

- Photons are an example of spin-1 ‘particles’, and ‘particles’ with integer spin are referred to as bosons. In this regard, you may have heard of superfluid Helium-4: its superfluidity is associated with Bose-Einstein condensation near the zero temperature point, and demonstrates the integer spin number of Helium-4 (its spin is zero), so it resembles Lithium-6 in this regard: both are bosons.

The four ‘typical’ examples make it clear that the actual situations that we’ll be analyzing will usually be quite simple: we’ll have 2, 3 or 4 permitted values only. As mentioned, there is this fundamental dichotomy between fermions and bosons. Fermions have half-integer spin, and the fermions we usually deal with, such as protons, neutrons, electrons, neutrinos and quarks, are spin-1/2 particles. [Note that a proton and a neutron are, strictly speaking, not elementary, as their constituent parts are quarks.] Bosons have integer spin, and the elementary bosons we know of are spin-one particles (except for the newly discovered Higgs boson, which is an actual spin-zero particle). The photon is an example. The helium nucleus (He-4) is a boson too, but its spin is zero, not one, and helium-4 – as mentioned above – becomes superfluid when it’s cooled near the absolute zero point.

In any case, to make a long story short, in practice, we’ll be dealing almost exclusively with spin-1, spin-1/2 particles and, occasionally, with spin-3/2 particles. In addition, to analyze simple stuff, we’ll often pretend particles do not have any spin, so our ‘theoretical’ particles will often be spin zero. That’s just to simplify stuff.

We now need to learn how to do a bit of math with all of this. Before we do so, let me make some additional remarks on these permitted values. Regardless of whether or not J is ‘wobbling’ or moving, J’s magnitude will always be some constant, which we denoted by |J| = J. [Let me be clear: J is not moving in the analysis above, but we’ll discuss the phenomenon of precession in the next post, and that will involve a J circling around the Jz axis, so I am just preparing the terrain here.]

Now there’s something really interesting here, which again distinguishes classical mechanics from quantum mechanics. As mentioned, in classical mechanics, any of J’s components Jx, Jy or Jz, could take on any value from +J to −J and, therefore, the maximum value of any component of J – say Jz – would be equal to J. To be precise, J would be the value of the component of J in the direction of J itself. So, in classical mechanics, we’d write: |J| = +√(J·J) = +√J² = J, and it would be the maximum value of any component of J. But we said that, if the spin number of J is j, then the maximum value of any component of J is equal to j·ħ. So, naturally, one would think that J = |J| = +√(J·J) = +√J² = j·ħ.

However, that’s not the case in quantum mechanics: the maximum value of any component of J is j·ħ, but the magnitude of J is not j·ħ: it is the square root of j·(j+1), times ħ.

Huh? Yes. Let me spell it out: |J| = +√(J·J) = +√J² ≠ jħ. Indeed, quantum math has many particularities, and this is one of them. The magnitude of J is not equal to the largest possible value of any component of J:

J’s magnitude is not jħ but √(j(j+1))·ħ.

As for the proof of this, let me simplify my life and just copy Feynman here:

The formula can be easily generalized for j ≠ 3/2. Also note that we used a fact that we didn’t mention as yet: all possible values of the z-component (or of whatever component) of J are equally likely.
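For what it’s worth, here is how I read the argument, as a sketch rather than a reproduction of Feynman’s text: J·J = Jx² + Jy² + Jz², no direction is special so the three averages are equal, and the average of Jz² is taken over the 2j + 1 equally likely permitted values. The function names below are my own, and everything is in units of ħ².

```python
from fractions import Fraction

def mean_square_component(j):
    """Average of m^2 over the 2j+1 equally likely values m = j, j-1, ..., -j."""
    j = Fraction(j)
    values = [j - k for k in range(int(2 * j) + 1)]
    return sum(m * m for m in values) / len(values)

def magnitude_squared(j):
    """J.J in units of hbar^2: three components with the same average square."""
    return 3 * mean_square_component(j)

for j in (Fraction(1, 2), Fraction(1), Fraction(3, 2), Fraction(2)):
    # The averaging argument should reproduce j(j+1) exactly.
    assert magnitude_squared(j) == j * (j + 1)
    print(f"j = {j}: <Jz^2> = {mean_square_component(j)}, J.J = {magnitude_squared(j)}")
```

For j = 3/2, the average of Jz² is (9/4 + 1/4 + 1/4 + 9/4)/4 = 5/4, and three times that is 15/4 = (3/2)·(5/2), so the rule works out for that case, and the loop shows it works for the other spin numbers too.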

Now, the result is fascinating, but the implications are even better. Let me paraphrase Feynman as he relates them:

- From what we have so far, we can get another interesting and somewhat surprising conclusion. In certain classical calculations the quantity that appears in the final result is the square of the magnitude of the angular momentum J, in other words, J⋅J = J². It turns out that it is often possible to guess at the correct quantum-mechanical formula by using the classical calculation and the following simple rule: Replace J² = J⋅J by j(j+1)ħ². This rule is commonly used, and usually gives the correct result.

- The second implication is the one we announced already: although we would think classically that the largest possible value of any component of J is just the magnitude of J, quantum-mechanically the maximum of any component of J is always less than that, because jħ is always less than √(j(j+1))·ħ. For example, for j = 3/2 = 1.5, we have j(j+1) = (3/2)·(5/2) = 15/4 = 3.75. Now, the square root of this value is √3.75 ≈ 1.9365, so the magnitude of J is about 29% larger than the maximum value of any of J’s components. That’s a pretty spectacular difference, obviously!

The second point is quite deep: it implies that the angular momentum is ‘never completely along any direction’. Why? Well… Think of it: “any of J’s components” also includes the component in the direction of J itself! But if the maximum value of that component is more than 20% less than the magnitude of J (for j = 3/2, that is: 1.5 versus 1.9365), what does that mean really? All we can say is that it implies that the concept of the direction of the angular momentum itself is actually quite fuzzy in quantum mechanics! Of course, that’s got to do with the Uncertainty Principle, and so we’ll come back to this later.
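To put numbers on ‘never completely along any direction’, the sketch below compares √(j(j+1)) with j for a few spin numbers. The ‘minimum angle’ it prints assumes the semi-classical picture of J as an ordinary vector whose z-component is j·ħ; that picture is my assumption for illustration, and it should be taken with a grain of salt in light of what follows.

```python
import math

def magnitude(j):
    """|J| in units of hbar, using the quantum rule J.J = j(j+1)."""
    return math.sqrt(j * (j + 1))

def min_angle_deg(j):
    """Smallest angle between J and the axis, reached when Jz = j (vector picture)."""
    return math.degrees(math.acos(j / magnitude(j)))

for j in (0.5, 1.0, 1.5):
    ratio = magnitude(j) / j
    print(f"j = {j}: |J|/Jz_max = {ratio:.4f}, minimum angle = {min_angle_deg(j):.1f} deg")
```

The ratio is largest for j = 1/2 and shrinks towards 1 as j grows, so the classical picture is recovered for large angular momenta.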

In fact, if you look at the math, you may think: what’s that business with those average or expected values? A magnitude is a magnitude, isn’t it? It’s supposed to be calculated from the actual values of Jx, Jy and Jz, not from some average that’s based on the (equal) likelihoods of the permitted values. You’re right. Feynman’s derivation here is quantum-mechanical from the start and, therefore, we get a quantum-mechanical result indeed: the magnitude of J is calculated as the magnitude of a quantum-mechanical variable in the derivation above, not as the magnitude of a classical variable.

[…] OK. On to the next.

The magnetic energy of atoms

Before we start talking about this topic, we should, perhaps, relate the angular momentum to the magnetic moment once again. We can do that using the μ = (q/2m)·J and/or μ = (q/m)·J formula (so that’s the simple formulas for the orbital and spin angular momentum respectively) or, else, by using the more general μ = – g·(q/2m)·J formula.

Let’s use the simpler μ = (qe/2m)·J formula, which is the one for the orbital angular momentum. What’s qe/2m? It should be equal to 1.6×10⁻¹⁹ C divided by 2·9.1×10⁻³¹ kg, so that’s about 0.0879×10¹² C/kg, or 0.0879×10¹² (C·m)/(N·s²). Now we multiply by ħ/2 ≈ 0.527×10⁻³⁴ J·s. We get something like 0.0463×10⁻²² m²·C/s or J/T. These numbers are ridiculously small, so they’re usually measured in terms of a so-called natural unit: the Bohr magneton, which I’ll explain in a moment; here we’re interested in its value only, which is μB = 9.274×10⁻²⁴ J/T. Hence, μ/μB = 0.5 = 1/2. What a nice number!

Hmm… This cannot be a coincidence… […] You’re right. It isn’t. To get the full picture, we need to include the spin angular momentum, so we also need to see what the μ = (q/m)·J will yield. That’s easy, of course, as it’s twice the value of (q/2m)·J, so μ/μB = 1, and so the total is equal to 3/2. So the magnetic moment of an electron has the same value (when expressed in terms of the Bohr magneton) as the spin (when expressed in terms of ħ). Now that’s just sweet!

Yes, it is. All our definitions and formulas were formulated so as to make it sweet. Having said that, we do have a tiny little problem. If we use the general μ = −g·(q/2m)·J to write the result we found for the spin of the electron only (so we’re not looking at the orbital momentum here), then we’d write: μ = 2·(q/2m)·J = (q/m)·J and, hence, the g-factor here is −2. Yes. We know that. You told me so already. What’s the issue? Well… The problem is: experiments reveal the actual value of g is not exactly −2: it’s −2.00231930436182(52) instead, with the last two digits (in brackets) the uncertainty in the current measurements. Just check it for yourself on the NIST website. 🙂 [Please do check it: it brings some realness to this discussion.]

Hmm…. The accuracy of the measurement suggests we should take it seriously, even if we’re talking a difference of 0.1% only. We should. It can be explained, of course: it’s something quantum-mechanical. However, we’ll talk about this later. As for now, just try to understand the basics here. It’s complicated enough already, and so we’ll stay away from the nitty-gritty as long as we can.
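The arithmetic of the last few paragraphs (q/2m times ħ/2, compared with the Bohr magneton, and the size of the deviation of g from 2) can be redone in a few lines. This is only a sketch: the constants are values I typed in by hand, and the g-factor is the number quoted above with its last digits dropped.

```python
Q_E = 1.602176634e-19    # elementary charge in C
M_E = 9.1093837015e-31   # electron mass in kg
HBAR = 1.054571817e-34   # reduced Planck constant in J*s

bohr_magneton = Q_E * HBAR / (2 * M_E)       # (qe*hbar)/(2*me), in J/T
mu_orbital = (Q_E / (2 * M_E)) * (HBAR / 2)  # mu = (q/2m)*J with J = hbar/2
mu_spin = (Q_E / M_E) * (HBAR / 2)           # mu = (q/m)*J with J = hbar/2

G_MEASURED = 2.00231930436                   # magnitude of the measured g-factor, truncated
deviation = (G_MEASURED - 2) / 2             # relative deviation from exactly 2

print(f"Bohr magneton     = {bohr_magneton:.4e} J/T")
print(f"mu_orbital / mu_B = {mu_orbital / bohr_magneton:.3f}")
print(f"mu_spin / mu_B    = {mu_spin / bohr_magneton:.3f}")
print(f"g deviation       = {100 * deviation:.3f} %")
```

The two ratios come out as 1/2 and 1 by construction, because the Bohr magneton is defined as (qe·ħ)/(2me). The deviation of g from 2 is the only number here that comes from measurement rather than from our definitions.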

Let’s now get back to the magnetic energy of our atoms. From our discussion on the torque on a magnetic dipole in an external magnetic field, we know that our magnetic atoms will have some extra magnetic energy when placed in an external field. So now we have an external magnetic field B, and the formula we derived for the energy is:

Umag = −μ·B·cosθ = −μ∙B (with μ∙B the dot product of the vectors μ and B)

I won’t explain the whole thing once again, but it might help to visualize the situation, which we do below. The loop here is not circular but square, and it’s a current-carrying wire instead of an electron in orbit, but I hope you get the point.

We need to choose some coordinate system to calculate stuff and so we’ll just choose our z-axis along the direction of the external magnetic field B so as to simplify those calculations. If we do that, we can just take the z-component of μ and then combine the interim result with our general μ = – g·(q/2m)·J formula, so we write:

Umag = −μz·B = g·(q/2m)·Jz·B

Now, we know that the maximum value of Jz is equal to j·ħ, and so the maximum value of Umag will be equal to g·(q/2m)·j·ħ·B. Let’s now simplify this expression by choosing some natural unit, and that’s the unit we introduced already above: the Bohr magneton. It’s equal to (qeħ)/(2me) and its value is μB ≈ 9.274×10⁻²⁴ J/T. So we get the result we wanted, and that is:

Umag = g·μB·B·(Jz/ħ)

Let me make a few remarks here. First on that magneton: you should note there’s also something which is known as the nuclear magneton which, you guessed it, is calculated using the proton charge and the proton mass: μN = (qpħ)/(2mp) ≈ 5.05×10⁻²⁷ J/T. My second remark is a question: what does that formula mean, really? Well… Let me quote Feynman on that. The formula basically says the following:

“The energy of an atomic system is changed when it is put in a magnetic field by an amount that is proportional to the field, and proportional to Jz. We say that the energy of an atomic system is ‘split’ into 2j + 1 ‘levels’ by a magnetic field. For instance, an atom whose energy is U0 outside a magnetic field and whose j is 3/2, will have four possible energies when placed in a field. We can show these energies by an energy-level diagram like that drawn below. Any particular atom can have only one of the four possible energies in any given field B. That is what quantum mechanics says about the behavior of an atomic system in a magnetic field.”

Of course, the simplest ‘atomic’ system is a single electron, which has spin 1/2 only (like most fermions really: the example in the diagram above, with spin 3/2, would be that Li-7 system or something similar). If the spin is 1/2, then there are only two energy levels, with Jz = ±ħ/2 and, as we mentioned already, the g-factor for an electron is −2 (again, the use of minus signs (or not) is quite confusing: I am sorry for that), and so our formula above becomes very simple:

Umag = ± μB·B

The graph above becomes the graph below, and we can now speak more loosely and say that the electron either has its spin ‘up’ (so that’s along the field), or ‘down’ (so that’s opposite the field).
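The splitting into 2j + 1 equally spaced levels can be tabulated directly from Umag = g·(q/2m)·Jz·B, written in terms of the Bohr magneton. In the sketch below the field strength of 1 tesla is a hypothetical value, and the g = 2 used for the j = 3/2 case is a placeholder rather than a measured g-factor for any real atom or nucleus.

```python
from fractions import Fraction

MU_B = 9.274e-24  # Bohr magneton in J/T (the value quoted in the text)

def magnetic_energies(g, j, b_field):
    """Return the 2j+1 energy shifts g*mu_B*B*m in joules, for m = j, j-1, ..., -j."""
    j = Fraction(j)
    ms = [j - k for k in range(int(2 * j) + 1)]
    return [g * MU_B * b_field * float(m) for m in ms]

B = 1.0  # tesla; a hypothetical field strength

electron = magnetic_energies(2, Fraction(1, 2), B)  # two levels: +mu_B*B and -mu_B*B
quartet = magnetic_energies(2, Fraction(3, 2), B)   # g = 2 is a placeholder here

print("spin 1/2:", [f"{u:+.3e} J" for u in electron])
print("spin 3/2:", [f"{u:+.3e} J" for u in quartet])
```

Neighbouring levels are always g·μB·B apart, whatever j is, which is the ‘equally spaced’ property we announced earlier.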

By now, you’re probably tired of the math and you’ll wonder: how can we prove all of this permitted value business? Well… That question leads me to the last topic of my post: the Stern-Gerlach experiment.

The Stern-Gerlach experiment

Here again, I can just copy straight out of Feynman, and so I hope you’ll forgive me if I just do that, as I don’t think there’s any advantage to me trying to summarize what he writes on it:

“The fact that the angular momentum is quantized is such a surprising thing that we will talk a little bit about it historically. It was a shock from the moment it was discovered (although it was expected theoretically). It was first observed in an experiment done in 1922 by Stern and Gerlach. If you wish, you can consider the experiment of Stern-Gerlach as a direct justification for a belief in the quantization of angular momentum. Stern and Gerlach devised an experiment for measuring the magnetic moment of individual silver atoms. They produced a beam of silver atoms by evaporating silver in a hot oven and letting some of them come out through a series of small holes. This beam was directed between the pole tips of a special magnet, as shown in the illustration below. Their idea was the following. If the silver atom has a magnetic moment μ, then in a magnetic field B it has an energy −μzB, where z is the direction of the magnetic field. In the classical theory, μz would be equal to the magnetic moment times the cosine of the angle between the moment and the magnetic field, so the extra energy in the field would be

ΔU = −μ·B·cosθ

Of course, as the atoms come out of the oven, their magnetic moments would point in every possible direction, so there would be all values of θ. Now if the magnetic field varies very rapidly with z—if there is a strong field gradient—then the magnetic energy will also vary with position, and there will be a force on the magnetic moments whose direction will depend on whether cosine θ is positive or negative. The atoms will be pulled up or down by a force proportional to the derivative of the magnetic energy; from the principle of virtual work,

Fz = −∂U/∂z = μ·cosθ·∂B/∂z

Stern and Gerlach made their magnet with a very sharp edge on one of the pole tips in order to produce a very rapid variation of the magnetic field. The beam of silver atoms was directed right along this sharp edge, so that the atoms would feel a vertical force in the inhomogeneous field. A silver atom with its magnetic moment directed horizontally would have no force on it and would go straight past the magnet. An atom whose magnetic moment was exactly vertical would have a force pulling it up toward the sharp edge of the magnet. An atom whose magnetic moment was pointed downward would feel a downward push. Thus, as they left the magnet, the atoms would be spread out according to their vertical components of magnetic moment. In the classical theory all angles are possible, so that when the silver atoms are collected by deposition on a glass plate, one should expect a smear of silver along a vertical line. The height of the line would be proportional to the magnitude of the magnetic moment. The abject failure of classical ideas was completely revealed when Stern and Gerlach saw what actually happened. They found on the glass plate two distinct spots. The silver atoms had formed two beams.

That a beam of atoms whose spins would apparently be randomly oriented gets split up into two separate beams is most miraculous. How does the magnetic moment know that it is only allowed to take on certain components in the direction of the magnetic field? Well, that was really the beginning of the discovery of the quantization of angular momentum, and instead of trying to give you a theoretical explanation, we will just say that you are stuck with the result of this experiment just as the physicists of that day had to accept the result when the experiment was done. It is an experimental fact that the energy of an atom in a magnetic field takes on a series of individual values. For each of these values the energy is proportional to the field strength. So in a region where the field varies, the principle of virtual work tells us that the possible magnetic force on the atoms will have a set of separate values; the force is different for each state, so the beam of atoms is split into a small number of separate beams. From a measurement of the deflection of the beams, one can find the strength of the magnetic moment.”

I should note one point which Feynman hardly addresses in the analysis above: why do we need a non-homogeneous field? Well… Think of it. The individual silver atoms are not like electrons in some electric field. They are tiny little magnets, and magnets do not behave like electrons. Remember we said there’s no such thing as a magnetic charge? So that applies here. If the silver atoms are tiny magnets, with a magnetic dipole moment, then the only thing they will do is turn, so as to minimize their energy U = −μBcosθ.

That energy is minimized when μ and B are aligned with each other, so as to make the cosθ factor equal to one, which happens when θ = 0. Hence, in a homogeneous magnetic field, we will have a torque on the loop of current – think of our electron(s) in orbit here – but no net force pulling it in this or that direction as a whole. So the atoms would just rotate but not move in our classical analysis here.

To make the atoms themselves move towards or away from one of the poles (with or without a sharp tip), the magnetic field must be non-homogeneous, so as to ensure that the force that’s pulling on one side of the loop of current is slightly different from the force that’s pulling (in the opposite direction) on the other side of the loop of current. So that’s why the field has to be non-homogeneous (or inhomogeneous as Feynman calls it), and so that’s why one pole needs to have a sharply pointed tip.

As for the force formula, it’s crucial to remember that energy (or work) is force times distance. To be precise, it’s the ∫F∙ds integral. This integral will have a minus sign in front when we’re doing work against the force, so that’s when we’re increasing the potential energy of an object. Conversely, we’ll just take the positive value when we’re converting potential energy into kinetic energy. So that explains the F = −∂U/∂z formula above. In fact, in the analysis above, Feynman assumes the magnetic moment doesn’t turn at all. That’s pretty obvious from the Fz = −∂U/∂z = μ∙cosθ∙∂B/∂z formula (the minus sign in U = −μBcosθ cancels the one in front of the derivative), in which μ is clearly being treated as a constant. So the Fz in this formula is a net force in the z-direction, and it’s crucially dependent on the variation of the magnetic field in the z-direction. If the field were not varying, ∂B/∂z would be zero and, therefore, we would not have any net force in the z-direction. As mentioned above, we would only have a torque.
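To make the point about the non-homogeneous field a bit more tangible, here is a minimal Python sketch. It takes U = −μBcosθ for a moment held at a fixed angle and estimates the net force as −∂U/∂z with a finite difference, once for a uniform field and once for a field with a gradient. The field profiles, the angle and the gradient of 100 T/m are hypothetical illustration values, not numbers from the actual experiment.

```python
import math

def potential_energy(mu, theta, field, z):
    """U = -mu * B(z) * cos(theta) for a moment held at a fixed angle theta."""
    return -mu * field(z) * math.cos(theta)

def force_z(mu, theta, field, z, dz=1e-6):
    """Net force F_z = -dU/dz, estimated with a central difference."""
    u_plus = potential_energy(mu, theta, field, z + dz)
    u_minus = potential_energy(mu, theta, field, z - dz)
    return -(u_plus - u_minus) / (2 * dz)

# Hypothetical illustration values (assumptions, not experimental data).
MU = 9.27e-24          # magnetic moment in J/T (about one Bohr magneton)
THETA = math.pi / 3    # fixed angle between the moment and the field

def uniform_field(z):
    return 1.0                 # 1 T everywhere: no gradient

def graded_field(z):
    return 1.0 + 100.0 * z     # 1 T at z = 0, with a gradient of 100 T/m

f_uniform = force_z(MU, THETA, uniform_field, 0.0)
f_graded = force_z(MU, THETA, graded_field, 0.0)
print("uniform field:", f_uniform, "N")
print("graded field: ", f_graded, "N")
```

The uniform field gives no net force at all, while the graded field gives a force that scales with the gradient, which is the whole reason for the pointed pole tip.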

Well… This sort of covers all of what we wanted to cover today. 🙂 I hope you enjoyed it.

Some content on this page was disabled on June 16, 2020 as a result of a DMCA takedown notice from The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/

Magnetic dipoles and their torque and energy

We studied the magnetic dipole in great detail in one of my previous posts but, while we talked about an awful lot of stuff there, we actually managed to not talk about the torque on it, when it’s placed in the magnetic field of other currents, or some other magnetic field tout court. Now, that’s what drives electric motors and generators, of course, and so we should talk about it, which is what I’ll do here. Let me first remind you of the concept of torque, and then we’ll apply it to a loop of current. 🙂

The concept of torque

The concept of torque is easy to grasp intuitively, but the math involved is not so easy. Let me sum up the basics (for the detail, I’ll refer you to my posts on spin and angular momentum). In essence, for rotations in space (i.e. rotational motion), the torque is what the force is for linear motion:

- It’s the torque (τ) that makes an object spin faster or slower around some axis, just like the force would accelerate or decelerate that very same object when it would be moving along some curve.

- There’s also a similar ‘law of Newton’ for torque: you’ll remember that the force equals the time rate of change of a vector quantity referred to as (linear) momentum: F = dp/dt = d(mv)/dt = ma (the mass times the acceleration). Likewise, we have a vector quantity that is referred to as angular momentum (L), and we can write: τ (i.e. the Greek tau) = dL/dt.

- Finally, instead of linear velocity, we’ll have an angular velocity ω (omega), which is the time rate of change of the angle θ that defines how far the object has gone around (as opposed to the distance in linear dynamics, describing how far the object has gone along). So we have ω = dθ/dt. This is actually easy to visualize because we know that θ, expressed in radians, is actually the length of the corresponding arc on the unit circle. Hence, the equivalence with the linear distance traveled is easily ascertained.

There are many more similarities, like an angular acceleration: α = dω/dt = d²θ/dt², and we should also note that, just like the force, the torque is doing work – in its conventional definition as used in physics – as it turns an object instead of just moving it, so we can write:

ΔW = τ·Δθ

So it’s all the same-same but different once more 🙂 and so now we also need to point out some differences. The animation below does that very well, as it relates the ‘new’ concepts – i.e. torque and angular momentum – to the ‘old’ concepts – i.e. force and linear momentum. It does so using the vector cross product, which is really all you need to understand the math involved. Just look carefully at all of the vectors involved, which you can identify by their colors, i.e. red-brown (r), light-blue (τ), dark-blue (F), light-green (L), and dark-green (p).

So what do we have here? We have vector quantities once again, denoted by symbols in bold-face. Having said that, I should note that τ, L and ω are ‘special’ vectors: they are referred to as axial vectors, as opposed to the polar vectors F, p and v. To put it simply: polar vectors represent something physical, and axial vectors are more like mathematical vectors, but that’s a very imprecise and, hence, essentially incorrect definition. 🙂 Axial vectors are directed along the axis of spin – so that is, strangely enough, at right angles to the direction of spin, or perpendicular to the ‘plane of the twist’ as Feynman calls it – and the direction of the axial vector is determined by a convention which is referred to as the ‘right-hand screw rule’. 🙂

Now, I know it’s not so easy to visualize vector cross products, so it may help to first think of torque (also known, for some obscure reason, as the moment of the force) as a twist on an object or a plane. Indeed, the torque’s magnitude can be defined in another way: it’s equal to the tangential component of the force, i.e. F·sin(Δθ), times the distance between the object and the axis of rotation (we’ll denote this distance by r). This quantity is also equal to the product of the magnitude of the force itself and the length of the so-called lever arm, i.e. the perpendicular distance from the axis to the line of action of the force (this lever arm length is denoted by r0). So, we can define τ without the use of the vector cross-product, and in no fewer than three different ways actually. Indeed, the torque is equal to:

- The product of the tangential component of the force times the distance r: τ = r·Ft = r·F·sin(Δθ);

- The product of the length of the lever arm times the force: τ = r0·F;

- The work done per unit of angle turned: τ = ΔW/Δθ or τ = dW/dθ in the limit.
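If you want to convince yourself that these three definitions really give the same number, a quick numerical check may help. The sketch below is only an illustration under assumed values: a force (fx, fy) acting at a point (x, y) in the xy-plane, with the axis of rotation along the z-axis through the origin. It also compares the result with the xFy − yFx component formula quoted later in this post.

```python
import math

# Hypothetical example values (assumptions chosen for easy arithmetic).
x, y = 3.0, 4.0        # point where the force is applied, so r = 5
fx, fy = -2.0, 6.0     # components of the force

r = math.hypot(x, y)
f = math.hypot(fx, fy)
angle = math.atan2(fy, fx) - math.atan2(y, x)   # angle from r to F

# 1. Tangential component of the force times the distance r.
tau_tangential = r * (f * math.sin(angle))

# 2. Lever arm r0 (perpendicular distance to the line of action) times the force.
r0 = r * math.sin(angle)
tau_lever = r0 * f

# 3. Work done per unit of angle: turn the point through a tiny angle about
#    the z-axis and divide the work F.ds by that angle.
d_theta = 1e-6
dx = x * math.cos(d_theta) - y * math.sin(d_theta) - x
dy = x * math.sin(d_theta) + y * math.cos(d_theta) - y
tau_work = (fx * dx + fy * dy) / d_theta

# Component formula for the torque about the z-axis.
tau_z = x * fy - y * fx

print(tau_tangential, tau_lever, tau_work, tau_z)
```

The first two are the same product written in a different order, of course, but the third one comes from a genuinely different calculation, and it agrees up to the small error of the finite rotation step.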

Phew! Yeah. I know. It’s not so easy… However, I regret to have to inform you that you’ll need to go even further in your understanding of torque. More specifically, you really need to understand why and how we define the torque as a vector cross product, and so please do check out that post of mine on the fundamentals of ‘torque math’. If you don’t want to do that, then just try to remember the definition of torque as an axial vector, which is:

τ = (τyz, τzx, τxy) = (τx, τy, τz) with

τx = τyz = yFz – zFy (i.e. the torque about the x-axis, i.e. in the yz-plane),

τy = τzx = zFx – xFz (i.e. the torque about the y-axis, i.e. in the zx-plane), and

τz = τxy = xFy – yFx (i.e. the torque about the z-axis, i.e. in the xy-plane).

The angular momentum L is defined in the same way:

L = (Lyz, Lzx, Lxy) = (Lx, Ly, Lz) with

Lx = Lyz = ypz – zpy (i.e. the angular momentum about the x-axis),

Ly = Lzx = zpx – xpz (i.e. the angular momentum about the y-axis), and

Lz = Lxy = xpy – ypx (i.e. the angular momentum about the z-axis).
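The component formulas above are nothing but the vector cross product written out in full, so they translate directly into a few lines of Python. This is just a sketch to show the pattern: the function name `cross` and the values for r, p and F are my own hypothetical choices.

```python
def cross(a, b):
    """Cross product a×b, written out with the component formulas above."""
    ax, ay, az = a
    bx, by, bz = b
    return (ay * bz - az * by,   # x-component, i.e. in the yz-plane
            az * bx - ax * bz,   # y-component, i.e. in the zx-plane
            ax * by - ay * bx)   # z-component, i.e. in the xy-plane

# Hypothetical values: a particle at position r with momentum p,
# acted upon by a force F.
r = (1.0, 2.0, 0.0)
p = (0.0, 3.0, 0.0)
F = (0.0, 0.0, 5.0)

L = cross(r, p)      # angular momentum L = r×p
tau = cross(r, F)    # torque τ = r×F

print("L =", L)
print("tau =", tau)
```

Note that both r and p lie in the xy-plane here, so the angular momentum has a z-component only, while the force along z produces a torque about the x- and y-axes.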

Let’s now apply the concepts to a loop of current.

The forces on a current loop

The geometry of the situation is depicted below. I know it looks messy but let me help you identify the moving parts, so to speak. 🙂 We’ve got a loop with current and so we’ve got a magnetic dipole with some moment μ. From my post on the magnetic dipole, you know that μ’s magnitude is equal to |μ| = μ = (current)·(area of the loop) = I·a·b.

Now look at the B vectors, i.e. the magnetic field. Please note that these vectors represent some external magnetic field! So it’s not like what we did in our post on the dipole: we’re not looking at the magnetic field caused by our loop, but at how it behaves in some external magnetic field. Now, because it’s kinda convenient to analyze, we assume that the direction of our external field B is the direction of the z-axis, so that’s what you see in this illustration: the B vectors all point north. Now look at the force vectors, remembering that the magnetic force is equal to:

Fmagnetic = qv×B

So that gives the F1, F2, F3, and F4 vectors (so that’s the force on the first, second, third and fourth leg of the loop respectively) their magnitude and direction. Now, it’s easy to see that the opposite forces, i.e. the F1–F2 and F3–F4 pair respectively, create a torque. The torque because of F1 and F2 is a torque which will tend to rotate the loop about the y-axis, so that’s a torque in the xz-plane, while the torque because of F3 and F4 will be some torque about the x-axis and/or the z-axis. As you can see, the torque is such that it will try to line up the moment vector μ with the magnetic field B. In fact, the geometry of the situation above is such that F3 and F4 have already done their job, so to speak: the moment vector μ is already lined up with the xz-plane, so there’s no net torque in that plane. However, that’s just because of the specifics of the situation here: the more general situation is that we’d have some torque about all three axes, and so we need to find that vector τ.

If we were talking about some electric dipole, the analysis would be very straightforward, because the electric force is just Felectric = qE, which we can also write as E = Felectric/q, so the field is just the force on one unit of electric charge, and so it’s (relatively) easy to see that we’d get the following formula for the torque vector:

τ = p×E

Of course, the p is the electric dipole moment here, not some linear momentum. [And, yes, please do try to check this formula. Sorry I can’t elaborate on it, but the objective of this blog is not to substitute for a textbook!]

Now, all of the analogies between the electric and magnetic dipole field, which we explored in the above-mentioned post of mine, would tend to make us think that we can write τ here as:

τ = μ×B

Well… Yes. It works. Now you may want to know why it works 🙂 and so let me give you the following hint. Each charge in a wire feels that Fmagnetic = qv×B force, so the total magnetic force on some volume ΔV, which I’ll denote by ΔF for a while, is the sum of the forces on all of the individual charges. So let’s assume we’ve got N charges per unit volume, then we’ve got N·ΔV charges in our little volume ΔV, so we write: ΔF = N·ΔV·q·v×B. You’re probably confused now: what’s the v here? It’s the (drift) velocity of the (free) electrons that make up our current I. Indeed, the protons don’t move. 🙂 So N·q·v is just the current density j, so we get: ΔF = j×BΔV, which implies that the force per unit volume is equal to j×B. But we need to relate it to the current in our wire, not the current density. Relax. We’re almost there. The ΔV in a wire is just its cross-sectional area A times some length, which I’ll denote by ΔL, so ΔF = j×BΔV becomes ΔF = j×BAΔL. Now, jA is the vector current I, so we get the simple result we need here: ΔF = I×BΔL, i.e. the magnetic force per unit length on a wire is equal to ΔF/ΔL = I×B.
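The bookkeeping in this little derivation is easy to check with numbers. The sketch below adds up the qvB force on all of the charges in a piece of wire and compares it with the I·B·ΔL result. All values are assumptions for illustration: a charge density that is roughly that of the free electrons in copper, a made-up drift speed, and a field at right angles to the wire so that we only need magnitudes.

```python
import math

# Illustrative assumptions (not from the post): a copper-like wire in a
# field that is perpendicular to it, so |v×B| = v·B.
N = 8.5e28       # free charges per cubic metre (roughly copper)
q = 1.602e-19    # magnitude of the electron charge, in coulomb
v = 1.0e-4       # drift speed in m/s (assumed)
A = 1.0e-6       # cross-sectional area of the wire in m^2 (1 mm^2)
dL = 0.1         # length of the piece of wire in m
B = 0.5          # magnetic field strength in tesla

# Route 1: number of charges in the volume A·dL, times the force per charge.
n_charges = N * A * dL
force_from_charges = n_charges * q * v * B

# Route 2: current density j = N·q·v, current I = j·A, force = I·B·dL.
j = N * q * v
current = j * A
force_from_current = current * B * dL

print("current:", current, "A")
print("force (sum over charges):", force_from_charges, "N")
print("force (I·B·ΔL):", force_from_current, "N")
```

The two routes have to agree, of course, because they are the same product of factors grouped differently, and that’s really all there is to the ΔF/ΔL = I×B formula.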

Let’s now get back to our magnetic dipole and calculate F1 and F2. The length of ‘wire’ is the length of the leg of the loop, i.e. b, so we can write:

F1 = −F2 = b·I×B

So the magnitude of these forces is equal to F1 = F2 = I·B·b. Now, the length of the moment or lever arm is, obviously, equal to a·sinθ, so the magnitude of the torque is equal to the force times the lever arm (cf. the τ = r0·F formula above) and so we can write:

τ = I·B·b·a·sinθ

But I·a·b is the magnitude of the magnetic moment μ, so we get:

τ = μ·B·sinθ

Now that’s consistent with the definition of the vector cross product:

τ = μ×B = |μ|·|B|·sinθ·n = μ·B·sinθ·n
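For those who like to see the numbers work out, the sketch below rebuilds the torque the hard way: it puts the I·(leg)×B force on each of the four legs of a rectangular loop, takes r×F about the center of the loop, adds it all up, and compares the result with μ×B. The geometry is my own assumed parametrization (loop centered at the origin, legs of length b along the y-axis, field along the z-axis, normal tilted by θ in the xz-plane), and the numerical values are hypothetical.

```python
import math

def cross(a, b):
    """Cross product a×b in components."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def add(a, b):
    return tuple(ai + bi for ai, bi in zip(a, b))

def scale(s, a):
    return tuple(s * ai for ai in a)

def loop_torque(current, a, b, theta, b_field):
    """Sum of r×F over the four legs of a rectangular loop centered at the origin."""
    u = (math.cos(theta), 0.0, -math.sin(theta))  # in-plane direction of the sides of length a
    w = (0.0, 1.0, 0.0)                           # in-plane direction of the sides of length b
    corners = [add(scale(-a / 2, u), scale(-b / 2, w)),
               add(scale(+a / 2, u), scale(-b / 2, w)),
               add(scale(+a / 2, u), scale(+b / 2, w)),
               add(scale(-a / 2, u), scale(+b / 2, w))]
    total = (0.0, 0.0, 0.0)
    for i in range(4):
        start, end = corners[i], corners[(i + 1) % 4]
        leg = add(end, scale(-1.0, start))            # vector along the leg, in the direction of the current
        force = scale(current, cross(leg, b_field))   # force on a straight leg: I·(leg)×B
        midpoint = scale(0.5, add(start, end))        # uniform force acts at the middle of the leg
        total = add(total, cross(midpoint, force))
    return total

# Hypothetical values for the current, the sides of the loop, the tilt and the field.
I, a, b, theta = 2.0, 0.3, 0.2, math.radians(40)
B = (0.0, 0.0, 1.5)

normal = (math.sin(theta), 0.0, math.cos(theta))  # right-hand normal of the loop
mu = scale(I * a * b, normal)                     # magnetic moment vector

tau_legs = loop_torque(I, a, b, theta, B)
tau_formula = cross(mu, B)
print("sum over legs:", tau_legs)
print("mu × B:       ", tau_formula)
```

In this setup only the two legs of length b contribute, and the torque is about the y-axis, with magnitude I·a·b·B·sinθ, just like in the analysis above.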

Done! Now, electric motors and generators are all about work and, therefore, we also need to briefly talk about energy here.

The energy of a magnetic dipole

Let me remind you that we could also write the torque as the work done per unit of distance traveled, i.e. as τ = ΔW/Δθ or τ = dW/dθ in the limit. Now, the torque tries to line up the moment with the field, and so the energy will be lowest when μ and B are parallel, so we need to throw in a minus sign when writing:

τ = −dU/dθ ⇔ dU = −τ·dθ

We should now integrate over the [0, θ] interval to find U, also using our τ = μ·B·sinθ formula. That’s easy, because we know that d(cosθ)/dθ = −sinθ, so that integral yields:

U = 1 − μ·B·cosθ + a constant

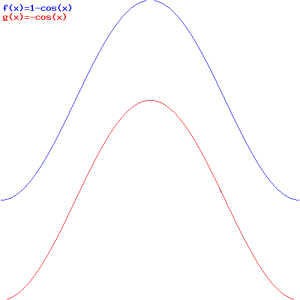

If we choose the constant to be zero, and if we equate μ·B with 1, we get the blue graph below:

The μ·B in the U = 1 − μ·B·cosθ formula is just a scaling factor, obviously, so it determines the minimum and maximum energy. Now, you may want to limit the relevant range of θ to [0, π], but that’s not necessary: the energy of our loop of current does go up and down as shown in the graph. Just think about it: it all makes perfect sense!

Now, there is, of course, more energy in the loop than this U energy because energy is needed to maintain the current in the loop, and so we didn’t talk about that here. Therefore, we’ll qualify this ‘energy’ and call it the mechanical energy, which we’ll abbreviate by Umech. In addition, we could, and will, choose some other constant of integration, so that amounts to choosing some other reference point for the lowest energy level. Why? Because it then allows us to write Umech as a vector dot product, so we get:

Umech = −μ·B·cosθ = −μ·B

The graph is pretty much the same, but it now goes from −μ·B to +μ·B, as shown by the red graph in the illustration above.
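If you’d rather check the shape of these energy curves numerically, here is a small sketch. It integrates the torque μ·B·sinθ from 0 to θ, which is the work an external agent has to do against the torque to turn the moment away from the field, and compares the result with Umech = −μ·B·cosθ. The value of the product μ·B and the function names are hypothetical, and the integral is a plain midpoint-rule sum.

```python
import math

def torque(mu_b, theta):
    """Magnitude of the torque for a given product mu_b = μ·B."""
    return mu_b * math.sin(theta)

def work_against_torque(mu_b, theta, steps=10000):
    """Midpoint-rule integral of the torque from 0 to theta."""
    d = theta / steps
    return sum(torque(mu_b, (k + 0.5) * d) for k in range(steps)) * d

def u_mech(mu_b, theta):
    """Mechanical energy with the reference point chosen so that U = −μ·B·cosθ."""
    return -mu_b * math.cos(theta)

MU_B = 2.5   # hypothetical value for the product μ·B, in joule
angles = [0.0, math.pi / 4, math.pi / 2, math.pi]

# Difference between the integrated work and Umech at each angle.
offsets = [work_against_torque(MU_B, t) - u_mech(MU_B, t) for t in angles]
print(offsets)
print(u_mech(MU_B, 0.0), u_mech(MU_B, math.pi))
```

The difference is the same at every angle, so the two curves differ only by the choice of the constant of integration, while Umech itself runs from −μ·B at θ = 0 to +μ·B at θ = π.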

Finally, you should note that the Umech = −μ·B formula is similar to what you’ll usually see written for the energy of an electric dipole: U = −p·E. So that’s all nice and good! However, you should remember that the electrostatic energy of an electric dipole (i.e. two opposite charges separated by some distance d) is all of the energy, as we don’t need to maintain some current to create the dipole moment!

Now, Feynman does all kinds of things with these formulas in his Lectures on electromagnetism but I really think this is all you need to know about it—for the moment, at least. 🙂