Note: I have published a paper that is very coherent and fully explains what the fine-structure constant actually is. There is nothing magical about it. It’s not some God-given number. It’s a scaling constant – and then some more. But not God-given. Check it out: The Meaning of the Fine-Structure Constant. No ambiguity. No hocus-pocus.

Jean Louis Van Belle, 23 December 2018

Original post:

A brother of mine sent me a link to an article he liked. Now, because we share some interest in physics and math and other stuff, I looked at it and…

Well… I was disappointed. Despite the impressive credentials of its author – a retired physics professor – it was very poorly written. It made me realize how much badly written stuff is around, and I am glad I am no longer wasting my time on it. However, I do owe my brother some explanation of (a) why I think it was bad, and of (b) what, in my humble opinion, he should be wasting his time on. 🙂 So what is it all about?

The article talks about physicists deriving the speed of light from “the electromagnetic properties of the quantum vacuum.” Now, it’s the term ‘quantum’, in ‘quantum vacuum’, that made me read the article.

Indeed, deriving the theoretical speed of light in empty space from the properties of the classical vacuum – aka empty space – is a piece of cake: it was done by Maxwell himself as he was figuring out his equations back in the 1860s (see my post on Maxwell’s equations and the speed of light). And then he compared it to the measured value, and he saw it was right on the mark. Therefore, saying that the speed of light is a property of the vacuum, or of empty space, is like a tautology: we may just as well put it the other way around, and say that it’s the speed of light that defines the (properties of the) vacuum!

Indeed, as I’ll explain in a moment: the speed of light determines both the magnetic and the electric constants, μ0 and ε0, which are the (magnetic) permeability and the (electric) permittivity of the vacuum respectively. Both constants depend on the units we are working with (i.e. the units for electric charge, for distance, for time and for force – or for inertia, if you want, because force is defined in terms of overcoming inertia), but they are just proportionality coefficients in Maxwell’s equations. So once we decide what units to use in Maxwell’s equations, μ0 and ε0 are just proportionality coefficients which we get from c. So they are not separate constants really – I mean, they are not separate from c – and all of the ‘properties’ of the vacuum, including these constants, are in Maxwell’s equations.

In fact, when Maxwell compared the theoretical value of c with its presumed actual value, he didn’t compare c’s theoretical value with the speed of light as measured by astronomers (like that 17th century Ole Roemer, to whom our professor refers: he had a first go at it by suggesting some specific value for it based on his observations of the timing of the eclipses of one of Jupiter’s moons), but with c’s value as calculated from the experimental values of μ0 and ε0! So he knew very well what he was looking at. In fact, to drive home the point, it may also be useful to note that the Michelson-Morley experiment – which showed that the measured speed of light does not depend on the direction of the Earth’s motion – was done only in 1887, more than twenty years later. So Maxwell had already left this world by then – very much in peace, because he had solved the mystery all 19th century physicists wanted to solve through his great unification: his set of equations covers it all, indeed: electricity, magnetism, light, and even relativity!

I think the article my brother liked so much does a very lousy job in pointing all of that out, but that’s not why I wouldn’t recommend it. It got my attention because I wondered why one would try to derive the speed of light from the properties of the quantum vacuum. In fact, to be precise, I hoped the article would tell me what the quantum vacuum actually is. Indeed, as far as I know, there’s only one vacuum – one ‘empty space’: empty is empty, isn’t it? 🙂 So I wondered: do we have a ‘quantum’ vacuum? And, if so, what is it, really?

Now, that is where the article is really disappointing, I think. The professor drops a few names (like the Max Planck Institute, the University of Paris-Sud, etcetera), and then, promisingly, mentions ‘fleeting excitations of the quantum vacuum’ and ‘virtual pairs of particles’, but then he basically stops talking about quantum physics. Instead, he wanders off to share some philosophical thoughts on the fundamental physical constants. What makes it all worse is that even those thoughts on the ‘essential’ constants are quite off the mark.

So… This post is just a ‘quick and dirty’ thing for my brother which, I hope, will be somewhat more thought-provoking than that article. More importantly, I hope that my thoughts will encourage him to try to grind through better stuff.

On Maxwell’s equations and the properties of empty space

Let me first say something about the speed of light indeed. Maxwell’s four equations may look fairly simple, but that’s only until one starts unpacking all those differential vector equations, and it’s only when going through all of their consequences that one starts appreciating their deep mathematical structure. Let me quickly copy how another blogger jotted them down: 🙂

As I showed in my above-mentioned post, the speed of light (i.e. the speed with which an electromagnetic pulse or wave travels through space) is just one of the many consequences of the mathematical structure of Maxwell’s set of equations. As such, the speed of light is a direct consequence of the ‘condition’, or the properties, of the vacuum indeed, as Maxwell suggested when he wrote that “we can scarcely avoid the inference that light consists in the transverse undulations of the same medium which is the cause of electric and magnetic phenomena”.

Of course, while Maxwell still suggests light needs some ‘medium’ here – so that’s a reference to the infamous aether theory – we now know that’s because he was a 19th century scientist, and so we’ve done away with the aether concept (because it’s a redundant hypothesis), and so now we also know there’s absolutely no reason whatsoever to try to “avoid the inference.” 🙂 It’s all OK, indeed: light is some kind of “transverse undulation” of… Well… Of what?

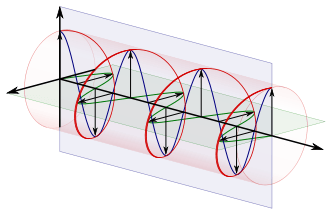

We analyze light as traveling fields, represented by two vectors, E and B, whose direction and magnitude vary both in space as well as in time. E and B are field vectors, and represent the electric and magnetic field respectively. An equivalent formulation – more or less, that is (see my post on the Liénard-Wiechert potentials) – for Maxwell’s equations when only one (moving) charge is involved is:

This re-formulation, which is Feynman’s preferred formula for electromagnetic radiation, is interesting in a number of ways. It clearly shows that, while we analyze the electric and magnetic field as separate mathematical entities, they’re one and the same phenomenon really, as evidenced by the B = –er′×E/c equation, which tells us the magnetic field from a single moving charge is always normal (i.e. perpendicular) to the electric field vector, and also that B’s magnitude is 1/c times the magnitude of E, so |B| = B = |E|/c = E/c. In short, B is fully determined by E, or vice versa: if we have one of the two fields, we have the other, so they’re ‘one and the same thing’ really – not in a mathematical sense, but in a real sense.

Also note that the magnitudes of E and B are just the same if we’re using natural units, so if we equate c with 1. Finally, as I pointed out in my post on the relativity of electromagnetic fields, if we switch from one reference frame to another, we’ll have a different mix of E and B, but that different mix obviously describes the same physical reality. More in particular, if we’d be moving with the charges, the magnetic field sort of disappears to re-appear as an electric field. So the Lorentz force F = Felectric + Fmagnetic = qE + qv×B is one force really, and its ‘electric’ and ‘magnetic’ components appear the way they appear in our reference frame only. In some other reference frame, we’d have the same force, but its components would look different, even if they, obviously, would and should add up to the same. [Well… Yes and no… You know there are relativistic corrections to be made to the forces too, but that’s a minor point, really. The force surely doesn’t disappear!]

All of this reinforces what you know already: electricity and magnetism are part and parcel of one and the same phenomenon, the electromagnetic force field, and Maxwell’s equations are the most elegant way of ‘cutting it up’. Why elegant? Well… Click the Occam tab. 🙂

Now, after having praised Maxwell once more, I must say that Feynman’s equations above have another advantage. In Maxwell’s equations, we see two constants, the magnetic and the electric constant (denoted by μ0 and ε0 respectively), and Maxwell’s equations imply that the product of the two is the reciprocal of c²: μ0·ε0 = 1/c². In Feynman’s equations, we see ε0 and c only, so no μ0, and that makes it even more obvious that the magnetic and electric constant are related to one another through c.
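To make the μ0·ε0 = 1/c² relation tangible, here is a minimal sketch that recovers c from the two constants. The numerical values are the CODATA 2018 SI values, which I am assuming here (they are not quoted anywhere in this post), and the function name is just an illustrative choice.

```python
import math

# CODATA 2018 values in SI units (assumed inputs, not taken from the post).
MU_0 = 1.25663706212e-6        # magnetic constant (permeability), N/A^2
EPSILON_0 = 8.8541878128e-12   # electric constant (permittivity), F/m


def speed_of_light(mu_0: float, epsilon_0: float) -> float:
    """Return c in m/s from the relation mu_0 * epsilon_0 = 1 / c**2."""
    return 1.0 / math.sqrt(mu_0 * epsilon_0)


c = speed_of_light(MU_0, EPSILON_0)
print(f"c from mu_0 and epsilon_0: {c:.6e} m/s")
```

The result should agree with the defined value of 299,792,458 m/s up to the small experimental uncertainty in the two constants.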

[…] Let me digress briefly: why do we have c² in μ0·ε0 = 1/c², instead of just c? That’s related to the relativistic nature of the magnetic force: think about that B = E/c relation. Or, better still, think about the Lorentz equation F = Felectric + Fmagnetic = qE + qv×B = q[E + (v/c)×(E×er′)]: the 1/c factor is there because the magnetic force involves some velocity, and any velocity is always relative – and here I don’t mean relative to the frame of reference but relative to the (absolute) speed of light! Indeed, it’s the v/c ratio (usually denoted by β = v/c) that enters all relativistic formulas. So the right-hand side of the μ0·ε0 = 1/c² equation is best written as (1/c)·(1/c), with one of the two 1/c factors accounting for the fact that the ‘magnetic’ force is a relativistic effect of the ‘electric’ force, really, and the other 1/c factor giving us the proper relationship between the magnetic and the electric constant. To drive home the point, I invite you to think about the following:

- μ0 is expressed in (V·s)/(A·m), while ε0 is expressed in (A·s)/(V·m), so the dimension in which the μ0·ε0 product is expressed is [(V·s)/(A·m)]·[(A·s)/(V·m)] = s²/m², so that’s the dimension of 1/c².

- Now, this dimensional analysis makes it clear that we can sort of distribute 1/c² over the two constants. All it takes is re-defining the fundamental units we use to calculate stuff, i.e. the units for electric charge, for distance, for time and for force – or for inertia, as explained above. But so we could, if we wanted, equate both μ0 as well as ε0 with 1/c.

- Now, if we would then equate c with 1, we’d have μ0 = ε0 = c = 1. We’d have to define our units for electric charge, for distance, for time and for force accordingly, but it could be done, and then we could re-write Maxwell’s set of equations using these ‘natural’ units.

In any case, the nitty-gritty here is less important: the point is that μ0 and ε0 are also related through the speed of light and, hence, they are ‘properties’ of the vacuum as well. [I may add that this is quite obvious if you look at their definition, but we’re approaching the matter from another angle here.]

In any case, we’re done with this. On to the next!

On quantum oscillations, Planck’s constant, and Planck units

The second thought I want to develop is about the mentioned quantum oscillation. What is it? Or what could it be? An electromagnetic wave is caused by a moving electric charge. What kind of movement? Whatever: the charge could move up or down, or it could just spin around some axis – whatever, really. For example, if it spins around some axis, it will have a magnetic moment and, hence, the field is essentially magnetic, but then, again, E and B are related and so it doesn’t really matter if the first cause is magnetic or electric: that’s just our way of looking at the world: in another reference frame, one that’s moving with the charges, the field would essentially be electric. So the motion can be anything: linear, rotational, or non-linear in some irregular way. It doesn’t matter: any motion can always be analyzed as the sum of a number of ‘ideal’ motions. So let’s assume we have some elementary charge in space, and it moves and so it emits some electromagnetic radiation.

So now we need to think about that oscillation. The key question is: how small can it be? Indeed, in one of my previous posts, I tried to explain some of the thinking behind the idea of the ‘Great Desert’, as physicists call it. The whole idea is based on our thinking about the limit: what is the smallest wavelength that still makes sense? So let’s pick up that conversation once again.

The Great Desert lies between the 10³² and 10⁴³ Hz scale. 10³² Hz corresponds to a photon energy of Eγ = h·f = (4×10⁻¹⁵ eV·s)·(10³² Hz) = 4×10¹⁷ eV = 400,000 tera-electronvolt (1 TeV = 10¹² eV). I use the γ (gamma) subscript in my Eγ symbol for two reasons: (1) to make it clear that I am not talking about the electric field E here but about energy, and (2) to make it clear we are talking ultra-high-energy gamma-rays here.
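As a quick check of that arithmetic, the sketch below applies Eγ = h·f with the unrounded value of Planck’s constant in eV·s that I quote further down in this post (4.135667662×10⁻¹⁵ eV·s). The function names are mine, chosen for illustration only.

```python
H_EV_S = 4.135667662e-15   # Planck's constant in eV·s (value quoted in this post)
EV_PER_TEV = 1e12          # 1 TeV = 10^12 eV


def photon_energy_ev(frequency_hz: float) -> float:
    """Planck-Einstein relation: photon energy in eV for a frequency in Hz."""
    return H_EV_S * frequency_hz


def ev_to_tev(energy_ev: float) -> float:
    """Convert an energy from eV to TeV."""
    return energy_ev / EV_PER_TEV


# The two edges of the 'Great Desert' mentioned in the text.
for frequency in (1e32, 1e43):
    energy = photon_energy_ev(frequency)
    print(f"f = {frequency:.0e} Hz -> E = {energy:.3e} eV = {ev_to_tev(energy):.3e} TeV")
```

With the unrounded constant, the lower edge comes out slightly above the rounded 400,000 TeV used in the text, which is just the effect of rounding h to 4×10⁻¹⁵ eV·s.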

In fact, γ-rays of this frequency and energy are theoretical only. Ultra-high-energy gamma-rays are defined as rays with photon energies higher than 100 TeV, which is the upper limit for very-high-energy gamma-rays, which have been observed as part of the radiation emitted by so-called gamma-ray bursts (GRBs): flashes associated with extremely energetic explosions in distant galaxies. Wikipedia refers to them as the ‘brightest’ electromagnetic events known to occur in the Universe. These rays are not to be confused with cosmic rays, which consist of high-energy protons and atomic nuclei stripped of their electron shells. Cosmic rays aren’t rays really and, because they consist of particles with a considerable rest mass, their energy is even higher. The so-called Oh-My-God particle, for example, which is the most energetic particle ever detected, had an energy of 3×10²⁰ eV, i.e. 300 million TeV. But it’s not a photon: its energy is largely kinetic energy, with the rest mass m0 counting for a lot in the m in the E = m·c² formula. To be precise: the mentioned particle was thought to be an iron nucleus, and it packed the equivalent energy of a baseball traveling at 100 km/h!

But let me refer you to another source for a good discussion on these high-energy particles, so I can get back to the energy of electromagnetic radiation. When I talked about the Great Desert in that post, I did so using the Planck-Einstein relation (E = h·f), which embodies the idea of the photon being valid always and everywhere and, importantly, at every scale. I also discussed the Great Desert using real-life light being emitted by real-life atomic oscillators. Hence, I may have given the (wrong) impression that the idea of a photon as a ‘wave train’ is inextricably linked with these real-life atomic oscillators, i.e. to electrons going from one energy level to the next in some atom. Let’s explore these assumptions somewhat more.

Let’s start with the second point. Electromagnetic radiation is emitted by any accelerating electric charge, so the atomic oscillator model is an assumption that should not be essential. And it isn’t. For example, whatever is left of the nucleus after alpha or beta decay (i.e. a nuclear decay process resulting in the emission of an α- or β-particle) is likely to be in an excited state, and likely to emit a gamma-ray for about 10⁻¹² seconds, so that’s a burst that’s about 10,000 times shorter than the 10⁻⁸ seconds it takes for the energy of a radiating atom to die out. [As for the calculation of that 10⁻⁸ sec decay time – so that’s like 10 nanoseconds – I’ve talked about this before but it’s probably better to refer you to the source, i.e. one of Feynman’s Lectures.]

However, what we’re interested in is not the energy of the photon, but the energy of one cycle. In other words, we’re not thinking of the photon as some wave train here, but what we’re thinking about is the energy that’s packed into a space corresponding to one wavelength. What can we say about that?

As you know, that energy will depend both on the amplitude of the electromagnetic wave as well as its frequency. To be precise, the energy is (1) proportional to the square of the amplitude, and (2) proportional to the frequency. Let’s look at the first proportionality relation. It can be written in a number of ways, but one way of doing it is stating the following: if we know the electric field, then the amount of energy that passes per square meter per second through a surface that is normal to the direction in which the radiation is going (which we’ll denote by S – the s from surface – in the formula below), must be proportional to the average of the square of the field. So we write S ∝ 〈E²〉, and so we should think about the constant of proportionality now. Now, let’s not get into the nitty-gritty, and so I’ll just refer to Feynman for the derivation of the formula below:

S = ε0c·〈E²〉

So the constant of proportionality is ε0c. [Note that, in light of what we wrote above, we can also write this as S = (1/(μ0·c))·〈(c·B)²〉 = (c/μ0)·〈B²〉, so that underlines once again that we’re talking one electromagnetic phenomenon only really.] So that’s a nice and rather intuitive result in light of all of the other formulas we’ve been jotting down. However, it is a ‘wave’ perspective. The ‘photon’ perspective assumes that, somehow, the amplitude is given and, therefore, the Planck-Einstein relation only captures the frequency variable: Eγ = h·f.
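The equivalence of the two ways of writing S can be checked numerically. The sketch below does that for a hypothetical sinusoidal wave with a peak electric field of 100 V/m (an arbitrary illustrative number, not something from the article); the constants are the CODATA 2018 SI values, which I am assuming.

```python
# CODATA 2018 values in SI units (assumed inputs, not taken from the post).
C = 299_792_458.0              # speed of light, m/s
EPSILON_0 = 8.8541878128e-12   # electric constant, F/m
MU_0 = 1.25663706212e-6        # magnetic constant, N/A^2


def intensity_from_e(mean_e_squared: float) -> float:
    """S = epsilon_0 * c * <E^2>, in W/m^2."""
    return EPSILON_0 * C * mean_e_squared


def intensity_from_b(mean_b_squared: float) -> float:
    """S = (c / mu_0) * <B^2>, in W/m^2."""
    return (C / MU_0) * mean_b_squared


# Hypothetical sinusoidal wave: the time average of sin^2 is 1/2.
e_peak = 100.0                     # V/m, arbitrary illustrative amplitude
mean_e_squared = e_peak**2 / 2.0
mean_b_squared = mean_e_squared / C**2   # because B = E/c

print(f"S from E: {intensity_from_e(mean_e_squared):.6f} W/m^2")
print(f"S from B: {intensity_from_b(mean_b_squared):.6f} W/m^2")
```

Both numbers should coincide up to the tiny uncertainty in the constants, because ε0·c and 1/(μ0·c) are the same quantity once μ0·ε0 = 1/c² is used.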

Indeed, ‘more energy’ in the ‘wave’ perspective basically means ‘more photons’, but photons are photons: they have a definite frequency and a definite energy, and both are given by that Planck-Einstein relation. So let’s look at that relation by doing a bit of dimensional analysis:

- Energy is measured in electronvolt or, using SI units, joule: 1 eV ≈ 1.6×10⁻¹⁹ J. Energy is force times distance: 1 joule = 1 newton·meter, which means that a larger force over a shorter distance yields the same energy as a smaller force over a longer distance. The oscillations we’re talking about here involve very tiny distances obviously. But the principle is the same: we’re talking some moving charge q, and the power – which is the time rate of change of the energy – that goes in or out at any point of time is equal to dW/dt = F·v, with W the work that’s being done by the charge as it emits radiation.

- I would also like to add that, as you know, forces are related to the inertia of things. Newton’s Law basically defines a force as that which causes a mass to accelerate: F = m·a = m·(dv/dt) = d(m·v)/dt = dp/dt, with p the momentum of the object that’s involved. When charges are involved, we’ve got the same thing: a potential difference will cause some current to change, and one of the equivalents of Newton’s Law F = m·a = m·(dv/dt) in electromagnetism is V = L·(dI/dt). [I am just saying this so you get a better ‘feel’ for what’s going on.]

- Planck’s constant is measured in electronvolt·seconds (eV·s) or, using SI units, in joule·seconds (J·s), so its dimension is that of (physical) action, which is energy times time: [energy]·[time]. Again, a lot of energy during a short time yields the same action as less energy over a longer time. [Again, I am just saying this so you get a better ‘feel’ for these dimensions.]

- The frequency f is the number of cycles per time unit, so that’s expressed per second, i.e. in hertz (Hz) = 1/second = s⁻¹.

So… Well… It all makes sense: [x joule] = [6.626×10⁻³⁴ joule]·[1 second]×[f cycles]/[1 second]. But let’s try to deepen our understanding even more: what’s the Planck-Einstein relation really about?

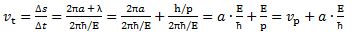

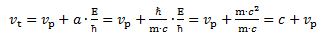

To answer that question, let’s think some more about the wave function. As you know, it’s customary to express the frequency as an angular frequency ω, as used in the wave function A(x, t) = A0·sin(kx − ωt). The angular frequency is the frequency expressed in radians per second. That’s because we need an angle in our wave function, and so we need to relate x and t to some angle. The way to think about this is as follows: one cycle takes a time T (i.e. the period of the wave) which is equal to T = 1/f. Yes: one second divided by the number of cycles per second gives you the time that’s needed for one cycle. One cycle is also equivalent to our argument ωt going around the full circle (i.e. 2π), so we write: ω·T = 2π and, therefore:

ω = 2π/T = 2π·f

Now we’re ready to play with the Planck-Einstein relation. We know it gives us the energy of one photon really, but what if we re-write our equation Eγ = h·f as Eγ/f = h? The dimensions in this equation are:

[x joule]·[1 second]/[f cycles] = [6.626×10⁻³⁴ joule]·[1 second]

⇔ x = 6.626×10⁻³⁴ joule per cycle

So that means that the energy per cycle is equal to 6.626×10⁻³⁴ joule, i.e. the value of Planck’s constant.

Let me rephrase this truly amazing result, so you appreciate it – perhaps: regardless of the frequency of the light (or our electromagnetic wave, in general) involved, the energy per cycle, i.e. per wavelength or per period, is always equal to 6.626×10⁻³⁴ joule or, using the electronvolt as the unit, 4.135667662×10⁻¹⁵ eV. So, in case you wondered, that is the true meaning of Planck’s constant!
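The following sketch simply spells out the arithmetic behind that reading: whatever frequency we pick, dividing the photon energy h·f by f gives back h. The constants are the exact SI (2019) values of h and of the elementary charge, which is my assumption here, so the eV figure differs from the one quoted above in the last few digits. Reading the J·s unit as ‘joule per cycle’ is my interpretation in this post, not something the code proves.

```python
H_J_S = 6.62607015e-34         # Planck's constant, J·s (exact SI value, assumed)
JOULE_PER_EV = 1.602176634e-19  # 1 eV in joule (exact SI value, assumed)


def energy_per_cycle(frequency_hz: float) -> float:
    """Photon energy h*f divided by the frequency f (cycles per second)."""
    photon_energy_j = H_J_S * frequency_hz
    return photon_energy_j / frequency_hz


# Radio waves, visible light, gamma rays, and the edge of the Great Desert.
for frequency in (1e6, 5e14, 1e20, 1e32):
    print(f"f = {frequency:.0e} Hz -> {energy_per_cycle(frequency):.6e} J per cycle")

h_in_ev = H_J_S / JOULE_PER_EV
print(f"h expressed in eV·s: {h_in_ev:.9e}")
```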

Now, if we have the frequency f, we also have the wavelength λ, because the velocity of the wave is the frequency times the wavelength: c = λ·f and, therefore, λ = c/f. So if we increase the frequency, the wavelength becomes smaller and smaller, and so we’re packing the same amount of energy – admittedly, 4.135667662×10⁻¹⁵ eV is a very tiny amount of energy – into a space that becomes smaller and smaller. Well… What’s tiny, and what’s small? All is relative, of course. 🙂 So that’s where the Planck scale comes in. If we pack that amount of energy into some tiny little space of the Planck dimension, i.e. a ‘length’ of 1.6162×10⁻³⁵ m, then it becomes a tiny black hole, and it’s hard to think about how that would work.

[…] Let me make a small digression here. I said it’s hard to think about black holes but, of course, it’s not because it’s ‘hard’ that we shouldn’t try it. So let me just mention a few basic facts. For starters, black holes do emit radiation! So they swallow stuff, but they also spit stuff out. More in particular, there is the so-called Hawking radiation, as Stephen Hawking showed theoretically in 1974.

Let me quickly make a few remarks on that: Hawking radiation is basically a form of blackbody radiation, so all frequencies are there, as shown below: the distribution of the various frequencies depends on the temperature of the black body, i.e. the black hole in this case. [The black curve is the curve that Lord Rayleigh and Sir James Jeans derived in the late 19th century, using classical theory only, so that’s the one that does not correspond to experimental fact, and which led Max Planck to become the ‘reluctant’ father of quantum mechanics. In any case, that’s history and so I shouldn’t dwell on this.]

The interesting thing about blackbody radiation, including Hawking radiation, is that it reduces energy and, hence, the equivalent mass of our blackbody. So Hawking radiation reduces the mass and energy of black holes and is therefore also known as black hole evaporation. So black holes that lose more mass than they gain through other means are expected to shrink and ultimately vanish. Therefore, there are all kinds of theories that say why micro black holes, like that Planck scale black hole we’re thinking of right now, should be much larger net emitters of radiation than large black holes and, hence, why they should shrink and dissipate faster.

Hmm… Interesting… What do we do with all of this information? Well… Let’s think about it as we continue our trek on this long journey to reality over the next year or, more probably, years (plural). 🙂

The key lesson here is that space and time are intimately related because of the idea of movement, i.e. the idea of something having some velocity, and that it’s not so easy to separate the dimensions of time and distance in any hard and fast way. As energy scales become larger and, therefore, our natural time and distance units become smaller and smaller, it’s the energy concept that comes to the fore. It sort of ‘swallows’ all other dimensions, and it does lead to limiting situations which are hard to imagine. Of course, that just underscores the underlying unity of Nature, and the mysteries involved.

So… To relate all of this back to the story that our professor is trying to tell, it’s a simple story really. He’s talking about two fundamental constants basically, c and h, pointing out that c is a property of empty space, and h is related to something doing something. Well… OK. That’s really nothing new, and surely not ground-breaking research. 🙂

Now, let me finish my thoughts on all of the above by making one more remark. If you’ve read a thing or two about this – which you surely have – you’ll probably say: this is not how people usually explain it. That’s true, they don’t. Anything I’ve seen about this just associates the 10⁴³ Hz scale with the 10²⁸ eV energy scale, using the same Planck-Einstein relation. For example, the Wikipedia article on micro black holes writes that “the minimum energy of a microscopic black hole is 10¹⁹ GeV [i.e. 10²⁸ eV], which would have to be condensed into a region on the order of the Planck length.” So that’s wrong. I want to emphasize this point because I’ve been led astray by it for years. It’s not the total photon energy, but the energy per cycle that counts. Having said that, it is correct, however, and easy to verify, that the 10⁴³ Hz scale corresponds to a wavelength of the Planck scale: λ = c/f = (3×10⁸ m/s)/(10⁴³ s⁻¹) = 3×10⁻³⁵ m. The confusion between the photon energy and the energy per wavelength arises because of the idea of a photon: it travels at the speed of light and, hence, because of the relativistic length contraction effect, it is said to be point-like, to have no dimension whatsoever. So that’s why we think of packing all of its energy in some infinitesimally small place. But you shouldn’t think like that. The photon is dimensionless in our reference frame: in its own ‘world’, it is spread out, so it is a wave train. And it’s in its ‘own world’ that the contradictions start… 🙂
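The numbers in that paragraph are easy to reproduce. The sketch below computes the wavelength at 10⁴³ Hz, compares it with the Planck length quoted earlier (1.6162×10⁻³⁵ m), and also computes the total photon energy h·f that the usual explanation associates with that scale. The helper names are mine.

```python
C = 299_792_458.0            # speed of light, m/s
H_EV_S = 4.135667662e-15     # Planck's constant in eV·s (value quoted in this post)
PLANCK_LENGTH_M = 1.6162e-35  # Planck length in m (value quoted in this post)


def wavelength_m(frequency_hz: float) -> float:
    """Wavelength in metres from lambda = c / f."""
    return C / frequency_hz


def photon_energy_gev(frequency_hz: float) -> float:
    """Total photon energy h*f, converted from eV to GeV."""
    return H_EV_S * frequency_hz / 1e9


frequency = 1e43  # Hz, the upper edge of the Great Desert
lam = wavelength_m(frequency)
print(f"wavelength: {lam:.3e} m")
print(f"wavelength / Planck length: {lam / PLANCK_LENGTH_M:.2f}")
print(f"photon energy: {photon_energy_gev(frequency):.2e} GeV")
```

So the wavelength is indeed of the order of the Planck length (a bit less than twice that length), and h·f comes out at the 10¹⁹ GeV order of magnitude that the Wikipedia quote refers to.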

OK. Done!

My third and final point is about what our professor writes on the fundamental physical constants, and more in particular on what he writes on the fine-structure constant. In fact, I could just refer you to my own post on it, but that’s probably a bit too easy for me and a bit difficult for you 🙂 so let me summarize that post and tell you what you need to know about it.

The fine-structure constant

The fine-structure constant α is a dimensionless constant which also illustrates the underlying unity of Nature, but in a way that’s much more fascinating than the two or three things the professor mentions. Indeed, it’s quite incredible how this number (α = 0.00729735…, but you’ll usually see it written as its reciprocal, which is a number that’s close to 137.036…) links charge with the relative speeds, radii, and the mass of fundamental particles and, therefore, how this number also links these concepts with each other. And, yes, the fact that it is, effectively, dimensionless, unlike h or c, makes it even more special. Let me quickly sum up all that the very same number α stands for:

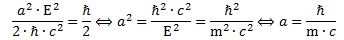

(1) α is the square of the electron charge expressed in Planck units: α = eP².

(2) α is the square root of the ratio of (a) the classical electron radius and (b) the Bohr radius: α = √(re/r). You’ll see this more often written as re = α²r. Also note that this is an equation that does not depend on the units, in contrast to equation 1 (above), and 4 and 5 (below), which require you to switch to Planck units. It’s the square root of a ratio of two lengths and, hence, the units don’t matter. They fall away.

(3) α is the (relative) speed of an electron in its lowest Bohr orbit: α = v/c. [The relative speed is the speed as measured against the speed of light. Note that the ‘natural’ unit of speed in the Planck system of units is equal to c. Indeed, if you divide one Planck length by one Planck time unit, you get (1.616×10⁻³⁵ m)/(5.391×10⁻⁴⁴ s) ≈ 2.998×10⁸ m/s, i.e. c. However, this is another equation, just like (2), that does not depend on the units: we can express v and c in whatever unit we want, as long as we’re consistent and express both in the same units.]

(4) α is also equal to the product of (a) the electron mass (which I’ll simply write as me here) and (b) the classical electron radius re (if both are expressed in Planck units): α = me·re. Now I think that’s, perhaps, the most amazing of all of the expressions for α. [If you don’t think that’s amazing, I’d really suggest you stop trying to study physics. :-)]

Also note that, from (2) and (4), we find that:

(5) The electron mass (in Planck units) is equal to me = α/re = α/(α²r) = 1/(αr). So that gives us an expression, using α once again, for the electron mass as a function of the Bohr radius r expressed in Planck units.

Finally, we can also substitute (1) in (5) to get:

(6) The electron mass (in Planck units) is equal to me = α/re = eP²/re. Using the Bohr radius, we get me = 1/(αr) = 1/(eP²r).

So… As you can see, this fine-structure constant really links all of the fundamental properties of the electron: its charge, its radius, its distance to the nucleus (i.e. the Bohr radius), its velocity, its mass (and, hence, its energy),…
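These relations can be verified numerically. The sketch below is only that, a sketch: it takes the CODATA 2018 SI values as assumed inputs (none of them are quoted in this post), uses the standard textbook formulas for the Bohr radius, the classical electron radius and the speed in the lowest Bohr orbit, and uses the Planck units that follow from setting c = ħ = ke = G = 1. The dictionary keys and the function name are mine.

```python
import math

# CODATA 2018 values in SI units (assumed inputs, not taken from the post).
C = 299_792_458.0              # speed of light, m/s
HBAR = 1.054571817e-34         # reduced Planck constant, J·s
E_CHARGE = 1.602176634e-19     # elementary charge, C
EPSILON_0 = 8.8541878128e-12   # electric constant, F/m
M_E = 9.1093837015e-31         # electron mass, kg
G = 6.67430e-11                # gravitational constant, m^3/(kg·s^2)

K_E = 1.0 / (4.0 * math.pi * EPSILON_0)  # Coulomb's constant


def alpha_relations() -> dict:
    """Evaluate alpha from its definition and from relations (1) to (5)."""
    alpha = K_E * E_CHARGE**2 / (HBAR * C)

    # Textbook expressions for the lengths and the speed involved.
    bohr_radius = HBAR / (M_E * C * alpha)
    classical_radius = K_E * E_CHARGE**2 / (M_E * C**2)
    bohr_speed = K_E * E_CHARGE**2 / HBAR

    # Planck units obtained from c = hbar = k_e = G = 1.
    planck_charge = math.sqrt(HBAR * C / K_E)
    planck_mass = math.sqrt(HBAR * C / G)
    planck_length = math.sqrt(HBAR * G / C**3)

    m_e_planck = M_E / planck_mass
    r_e_planck = classical_radius / planck_length
    r_planck = bohr_radius / planck_length

    return {
        "definition": alpha,
        "(1) charge squared": (E_CHARGE / planck_charge) ** 2,
        "(2) sqrt(r_e / r)": math.sqrt(classical_radius / bohr_radius),
        "(3) v / c": bohr_speed / C,
        "(4) m_e * r_e": m_e_planck * r_e_planck,
        "(5) 1 / (m_e * r)": 1.0 / (m_e_planck * r_planck),
    }


for label, value in alpha_relations().items():
    print(f"{label:>20}: {value:.10f}   (1/value = {1.0 / value:.4f})")
```

Note that the gravitational constant drops out of (4) and (5): the product of the Planck mass and the Planck length is just ħ/c, so the rather poorly known value of G does not affect the result.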

So… Why is it what it is?

Well… We all marvel at this, but what can we say about it, really? I struggle with how to interpret this, just as much – or probably much more 🙂 – as the professor who wrote the article I don’t like (because it’s so imprecise, and that’s what made me write all that I am writing here).

Having said that, it’s obvious that it points to a unity beyond these numbers and constants that I am only beginning to appreciate for what it is: deep, mysterious, and very beautiful. But so I don’t think that professor does a good job at showing how deep, mysterious and beautiful it all is. But then that’s up to you, my brother and you, my imaginary reader, to judge, of course. 🙂

[…] I forgot to mention what I mean with ‘Planck units’. Well… Once again, I should refer you to one of my other posts. But, yes, that’s too easy for me and a bit difficult for you. 🙂 So let me just note we get those Planck units by equating no less than five fundamental physical constants to 1, notably (1) the speed of light, (2) Planck’s (reduced) constant, (3) Boltzmann’s constant, (4) Coulomb’s constant and (5) Newton’s constant (i.e. the gravitational constant). Hence, we have a set of five equations here (c = ħ = kB = ke = G = 1), and so we can solve that to get the five Planck base units, i.e. the Planck length unit, the Planck time unit, the Planck mass unit, the Planck charge unit and, finally (oft forgotten), the Planck temperature unit. The Planck energy unit is not a sixth independent unit: it follows from the Planck mass unit. Indeed, you should note that all mass and energy units are directly related because of the mass-energy equivalence relation E = mc², which simplifies to E = m if c is equated to 1. [I could also say something about the relation between temperature and (kinetic) energy, but I won’t, as it would only further confuse you.]
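Solving those five equations gives the standard closed-form expressions for the Planck units, and the sketch below just evaluates them. The CODATA 2018 SI values are assumed inputs (they are not quoted in this post), and the function and key names are illustrative.

```python
import math

# CODATA 2018 values in SI units (assumed inputs, not taken from the post).
C = 299_792_458.0              # speed of light, m/s
HBAR = 1.054571817e-34         # reduced Planck constant, J·s
G = 6.67430e-11                # gravitational constant, m^3/(kg·s^2)
K_B = 1.380649e-23             # Boltzmann constant, J/K
EPSILON_0 = 8.8541878128e-12   # electric constant, F/m
K_E = 1.0 / (4.0 * math.pi * EPSILON_0)  # Coulomb's constant


def planck_units() -> dict:
    """Planck units in SI, from c = hbar = k_B = k_e = G = 1."""
    length = math.sqrt(HBAR * G / C**3)
    time = math.sqrt(HBAR * G / C**5)
    mass = math.sqrt(HBAR * C / G)
    charge = math.sqrt(HBAR * C / K_E)
    energy = mass * C**2            # derived from the mass unit via E = m c^2
    temperature = energy / K_B
    return {
        "length (m)": length,
        "time (s)": time,
        "mass (kg)": mass,
        "charge (C)": charge,
        "temperature (K)": temperature,
        "energy (J)": energy,
    }


units = planck_units()
for name, value in units.items():
    print(f"Planck {name}: {value:.6e}")

# One Planck length per Planck time is the natural unit of speed, i.e. c.
print(f"length / time = {units['length (m)'] / units['time (s)']:.6e} m/s")
```

The last line is the check mentioned under (3) above: dividing the Planck length by the Planck time gives back the speed of light.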

OK. Done! 🙂

Addendum: How to think about space and time?

If you read the argument on the Planck scale and constant carefully, then you’ll note that it does not depend on the idea of an indivisible photon. However, it does depend on that Planck-Einstein relation being valid always and everywhere. Now, the Planck-Einstein relation is, in its essence, a fairly basic result from classical electromagnetic theory: it incorporates quantum theory – remember: it’s the equation that allowed Planck to solve the black-body radiation problem, and so it’s why they call Planck the (reluctant) ‘Father of Quantum Theory’ – but it’s not quantum theory.

So the obvious question is: can we make this reflection somewhat more general, so we can think of the electromagnetic force as an example only? In other words: can we apply the thoughts above to any force and any movement really?

The truth is: I haven’t advanced enough in my little study to give the equations for the other forces. Of course, we could think of gravity, and I developed some thoughts on what gravitational waves might look like, but nothing specific really. And then we have the shorter-range nuclear forces, of course: the strong force, and the weak force. The laws involved are very different. The strong force involves color charges, and the way distances work is entirely different. So it would surely be some different analysis. However, the results should be the same. Let me offer some thoughts though:

- We know that the relative strength of the nuclear force is much larger, because it pulls like charges (protons) together, despite the strong electromagnetic force that wants to push them apart! So the mentioned problem of trying to ‘pack’ some oscillation in some tiny little space should be worse with the strong force. And the strong force is there, obviously, at tiny little distances!

- Even gravity should become important, because if we’ve got a lot of energy packed into some tiny space, its equivalent mass will ensure the gravitational forces also become important. In fact, that’s what the whole argument was all about!

- There’s also all this talk about the fundamental forces becoming one at the Planck scale. I must, again, admit my knowledge is not advanced enough to explain how that would be possible, but I must assume that, if physicists are making such statements, the argument must be fairly robust.

So… Whatever charge or whatever force we are talking about, we’ll be thinking of waves or oscillations—or simply movement, but it’s always a movement in a force field, and so there’s power and energy involved (energy is force times distance, and power is the time rate of change of energy). So, yes, we should expect the same issues in regard to scale. And so that’s what’s captured by h.

As we’re talking the smallest things possible, I should also mention that there are also other inconsistencies in the electromagnetic theory, which should (also) have their parallel for other forces. For example, the idea of a point charge is mathematically inconsistent, as I show in my post on fields and charges. Charge, any charge really, must occupy some space. It cannot all be squeezed into one dimensionless point. So the reasoning behind the Planck time and distance scale is surely valid.

In short, the whole argument about the Planck scale and those limits is very valid. However, does it imply our thinking about the Planck scale is actually relevant? I mean: it’s not because we can imagine what things might look like – they may look like those tiny little black holes, for example – that these things actually exist. GUT or string theorists obviously think they are thinking about something real. But, frankly, Feynman had a point when he said what he said about string theory, shortly before his untimely death in 1988: “I don’t like that they’re not calculating anything. I don’t like that they don’t check their ideas. I don’t like that for anything that disagrees with an experiment, they cook up an explanation – a fix-up to say, ‘Well, it still might be true.'”

It’s true that the so-called Standard Model does not look very nice. It’s not like Maxwell’s equations. It’s complicated. It’s got various ‘sectors’: the electroweak sector, the QCD sector, the Higgs sector,… So ‘it looks like it’s got too much going on’, as a friend of mine said when he looked at a new design for mountain bike suspension. 🙂 But, unlike mountain bike designs, there’s no real alternative to the Standard Model. So perhaps we should just accept it is what it is and, hence, in a way, accept Nature as we can see it. So perhaps we should just continue to focus on what’s here, before we reach the Great Desert, rather than wasting time on trying to figure out what things might look like on the other side, especially because we’ll never be able to test our theories about ‘the other side.’

On the other hand, we can see where the Great Desert sort of starts (somewhere near the 10^32 Hz scale), and so it’s only natural to think it should also stop somewhere. In fact, we know where it stops: it stops at the 10^43 Hz scale, because everything beyond that doesn’t make sense. The question is: is there actually something there? Like fundamental strings or whatever you want to call it. Perhaps we should just stop where the Great Desert begins. And what’s the Great Desert anyway? Perhaps it’s a desert indeed, and so then there is absolutely nothing there. 🙂
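As a quick sanity check on that upper limit, here is a small sketch that takes the inverse of the Planck time as the relevant frequency scale. That identification is my assumption for the illustration, and the constants are hard-coded CODATA 2018 values; the variable names are made up.

```python
import math

# CODATA 2018 values in SI units, hard-coded so the sketch is self-contained.
c = 299792458.0          # speed of light, m/s
hbar = 1.054571817e-34   # reduced Planck constant, J*s
G = 6.67430e-11          # gravitational constant, m^3/(kg*s^2)

# Planck time from dimensional analysis: sqrt(hbar*G/c^5).
t_planck = math.sqrt(hbar * G / c**5)

# Assumption: the frequency scale at which things stop making sense is 1/t_planck.
f_planck = 1.0 / t_planck

# Order of magnitude, i.e. the exponent n in f ~ 10^n.
order = math.floor(math.log10(f_planck))

print(f"Planck time: {t_planck:.4e} s")
print(f"Inverse Planck time: {f_planck:.4e} per second (order 10^{order})")
```

So the end of the desert sits some eleven orders of magnitude above where it is said to begin.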

Hmm… There’s not all that much one can say about it. However, when looking at the history of physics, there’s one thing that’s really striking. Most of what physicists could think of, in the sense that it made physical sense, turned out to exist. Think of anti-matter, for instance. Paul Dirac thought it might exist, that it made sense for it to exist, and so everyone started looking for it, and Carl Anderson found it a few years later (in 1932). In fact, it had been observed before, but people just didn’t pay attention, so they didn’t want to see it, in a way. […] OK. I am exaggerating a bit, but you know what I mean. The 1930s are full of examples like that. There was a burst of scientific creativity, as the formalism of quantum physics was being developed, and the experimental confirmations of the theory just followed suit.

In the field of astronomy, or astrophysics I should say, it was the same with black holes. No one could really imagine the existence of black holes until the 1960s or so: they were thought of as a mathematical curiosity only, a logical possibility. However, the circumstantial evidence now is quite large and so… Well… It seems a lot of what we can think of actually has some existence somewhere. 🙂

So… Who knows? […] I surely don’t. And so I need to get back to the grind and work my way through the rest of Feynman’s Lectures and the related math. However, this was a nice digression, and so I am grateful to my brother for initiating it. 🙂

Some content on this page was disabled on June 17, 2020 as a result of a DMCA takedown notice from Michael A. Gottlieb, Rudolf Pfeiffer, and The California Institute of Technology. You can learn more about the DMCA here:

https://wordpress.com/support/copyright-and-the-dmca/

When analyzing this animation – look at the movement of the green, red and blue dots respectively – one cannot miss the equivalence between this oscillation and the movement of a mass on a spring – as depicted below.

The e^(−i·(E/ħ)·t) function just gives us two springs for the price of one. 🙂 Now, you may want to imagine some kind of elastic medium –